May 2021

Jeeves Grows Up: How an AI Chatbot Became Part of Unravel Data

Jeeves is the stereotypical English butler – and an AI chatbot that answers pertinent and important questions about Spark jobs in production. Shivnath Babu, CTO and co-founder of Unravel Data, spoke yesterday at Data + AI Summit, formerly known as Spark Summit, about the evolution of Jeeves, and how the technology has become a key supporting pillar within Unravel Data’s software.

DataStore vs FeatureStore

I think it’s safe to say that one of the worst things in Machine Learning is the terminology. The maths and statistics are definitely part of the learning curve, but more than that, it feels like you are learning a new language. In some ways, you are. DataStore and FeatureStore are two of the current buzzwords that people are trying to understand. To be fair, DataStore and FeatureStore feel like family rather than strangers.

The Clear SHOW - S02E07 - Manual Orchestration (Pit Stop!)

9 Ways to Break Through a Website Traffic Plateau

What is Data as a Service (DaaS)?

What is File Transfer Protocol?

The Complete Guide to GDPR Compliance

Say Goodbye to Data Quality with ELT

The Ethics of AI Comes Down to Conscious Decisions

This blog post was written by Pedro Pereira as a guest author for Cloudera. Right now, someone somewhere is writing the next fake news story or editing a deepfake video. An authoritarian regime is manipulating an artificial intelligence (AI) system to spy on technology users. No matter how good the intentions behind the development of a technology, someone is bound to corrupt and manipulate it. Big data and AI amplify the problem. “If you have good intentions, you can make it very good.

Innovation + Flexibility + Acceleration = Competitive Business Advantage

The accelerated exploitation of new technologies and data drives successful digital transformation and is key to gaining competitive business advantage. Hence, executives place a premium on innovation, flexibility and acceleration.

Demo: How to Replicate Salesforce Formula Fields in Your Data Warehouse

How to Compare ETL Tools

Use these criteria to choose the best ETL tool for your data integration needs.

Google Analytics vs. Universal Analytics: 4 Main Differences

Real-time Change Data Capture for data replication into BigQuery

Businesses hoping to make timely, data-driven decisions know that the value of their data may degrade over time and can be perishable. This has created a growing demand to analyze and build insights from data the moment it becomes available, in real-time.

PII Masking Can Protect Your Business

ETLT with Snowflake, dbt, and Xplenty

AutoZone: Exceeding customer expectations with speed of service

“Talend is amazing because it’s open, flexible, and visual. The robustness and reliability of Talend have made it an integral part of our solution set. It’s easy to learn and fast to ramp up.” – Jason Vogel, IT Manager, AutoZone AutoZone is America’s #1 vehicle solutions provider. It was founded in 1979 and has since expanded to more than 6,400 stores across three countries, with over 96,000 employees.

Auditing to external systems in CDP Private Cloud Base

Cloudera is trusted by regulated industries and Government organisations around the world to store and analyze petabytes of highly sensitive or confidential information about people, healthcare data, financial data or just proprietary information sensitive to the customer itself.

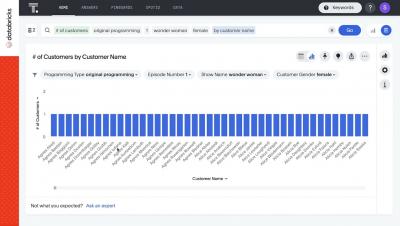

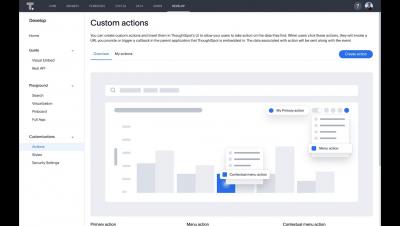

How to define your first business use case with ThoughtSpot

Companies today are faced with an analytics conundrum. On one hand, there’s a higher demand than ever for actionable business insights, but on the other there are limited resources to deliver BI content to non-technical business end-users. To fill this gap, the industry is increasingly turning to the next generation of self-service analytics tools. These tools reduce time to insight, speed up insight to action, and also allow BI teams to focus on more strategic analytics work.

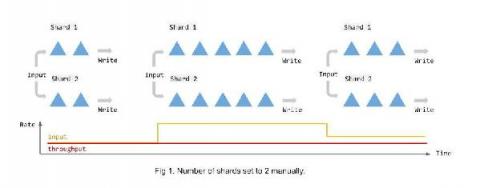

3x Dataflow Throughput with Auto Sharding for BigQuery

Many of you rely on Dataflow to build and operate mission-critical streaming analytics pipelines. A key goal for us, the Dataflow team, is to make the technology work for users rather than the other way around. Autotuning, as a fundamental value proposition Dataflow offers, is a key part of making that goal a reality - it helps you focus on your use cases by eliminating the cost and burden of having to constantly tune and re-tune your applications as circumstances change.

6 Steps to Pseudonymize PII

How to Launch Your New Data Engineering Strategy

Democratizing Data Through Search and Natural Language Processing in Cloudera Data Visualization

Since the release of Cloudera Data Visualization (DV) back in Oct 2020, our primary mission has been to expand access to data analytics and predictive insights across enterprise businesses.

Session-based Recommender Systems

Recommendation systems have become a cornerstone of modern life, spanning sectors that include online retail, music and video streaming, and even content publishing. These systems help us navigate the sheer volume of content on the internet, allowing us to discover what’s interesting or important to us. The classic modeling approaches to recommendation systems can be broadly categorized as content-based, as collaborative filtering-based, or as hybrid approaches that combine aspects of the two.

Shorten time to critical insights with Streaming SQL

Data and analytics have become second nature to most businesses, but merely having access to the vast volumes of data from these devices will no longer suffice. Leading enterprises realize that the speed of data presents a new frontier for competitive differentiation. It is imperative for organizations to reduce time-to-insights to gain a competitive advantage by responding decisively to competitors, fine-tuning operations, and serving fickle customers.

From Data Lake To Enterprise Data Platform: The Business Case Has Never Been More Compelling

Companies have had only mixed results in their decades-long quest to make better decisions by harnessing enterprise data. But as a new generation of technologies makes it easier than ever to unlock the value of business information, change is coming. We’ve already reaped gains at Hitachi Vantara, where I run a global IT team that supports 11,000 employees and helps more than 10,000 customers rapidly scale digital businesses.

The Future Belongs to the Data-Driven

I’m starting to hear questions like: “What comes next?” “Do things go back to the way they were?” “Are some of the changes wrought by the pandemic here to stay?” I think we all know part of the answer: there is no going (all the way) back. In the analog world, sure, we need some things to revert to bounce back. We need to revitalize retail, tourism and hospitality to get our economies moving again.

Remote work isn't going anywhere - have you addressed these cloud security risks?

It’s been over a year since enterprises around the world had to pivot and transition to work-from-home setups. While some employees are slowly trickling back into the office, the majority of organizations have people working both onsite and offsite. This modern workforce has brought out an increasing reliance on cloud infrastructure, an essential tool for collaboration and business continuity. Technology like this isn’t without its risks though.

SaaS in 60 - Edit Data Alerts & Regional Data Settings

The Clear SHOW - S02E06 - DataOps pt. II Whaaa, so easy?!

Why You Need to Make Your Company "Other-Oriented"

Customer success is much more than a function.

Have a cool summer with BigQuery user-friendly SQL

With summer just around the corner, things are really heating up. But you’re in luck because this month BigQuery is supplying a cooler full of ice cold refreshments with this release of user-friendly SQL capabilities. We are pleased to announce three categories of BigQuery user-friendly SQL launches: Powerful Analytics Features, Flexible Schema Handling, and New Geospatial Tools.

10 Best Data Analysis Tools for Data Management

Pushing Past Pilot Paralysis to Launch and Scale IIOT Use Cases

With billions of industrial IoT (IIOT) devices in place, generating massive volumes of data from “the edge,” the potential for proof of concept success for use cases in the factory can be paralyzing. While the value of this digital revolution, aka Industry 4.0, is clear, realizing the full promise has been slow. Research and real-life experience from Accenture show that many manufacturers get stuck early on or can’t get beyond proof-of-concept pilots to scale.

The Four Upgrade and Migration Paths to CDP from Legacy Distributions

The move into any new technology requires planning and coordinated effort to ensure a successful transition. This blog will describe the four paths to move from a legacy platform such as Cloudera CDH or HDP into CDP Public Cloud or CDP Private Cloud. The four paths are In-place Upgrade, Side-car Migration, Rolling Side-car Migration, and Migrate to Public Cloud.

How to be a data-driven organization: Key learnings from Chief Product Officer Summit

What is Data Catalog?

What is Data Preparation?

What is Data Replication?

Data Warehouse Automation

Loading Data into Snowflake Data Cloud

What is ETL?

How do data integration architectures work, and how are they continuing to evolve?

The Ultimate PII Checklist

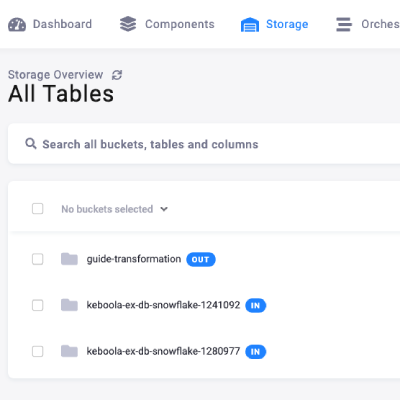

Product announcement: Say hello to the new and improved Storage UI

Network Performance and Data Integration

Data integration can easily become non-performant. Learn about some common bottlenecks.

Celebrating 9 Years of ThoughtSpot

Today, May 21st, marks ThoughtSpot’s nine-year anniversary as a company. We’ve come a long way from exchanging ideas at Starbucks and working from an office set-up inside LightSpeed Ventures for the initial few weeks. Today, we offer customers the most innovative cloud analytics platform in the world and help thousands of users ask and answer questions with data.

How to Establish an Effective BI Security Strategy

Mapping Your Automation Journey in Financial Services

Automation will fail to achieve most of its potential if treated as a specialty, single-function tool applied only to accelerate narrow parts of business processes. In most industries, financial services included, automation done properly is a journey that delivers a steady stream of benefits resulting from building a broader and increasingly powerful multilayered stack of automated processes and analytics.

Humans and Data? Relationship Status: Complicated

Many people may know me as someone who aims to find a mathematical angle in almost everything. And they wouldn’t be wrong – on my journey to bring my passion for math to the masses, I’ve even shown the mathematical angle for finding love! Yes, really – feel free to read my book on it. So, if there’s just one thing that I want people to take away from my chat with Joe DosSantos on Data Brilliant, it’s that math and data really do touch every part of our lives.

Future of Data Meetup: Collect, Curate, Predict & Visualise your Streaming Data

Effective Cost and Performance Management Amazon EMR Webinar Recording

ThoughtSpot Success Series #4 - Data Modeling Best Practices

ThoughtSpot and Databricks Partner to Deliver the Modern Analytics Cloud for the Data Lakehouse

What Is Fully Managed ELT?

Fully managed ELT allows data integration to be outsourced and automated, saving precious engineering time.

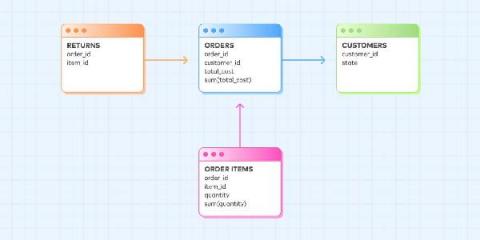

How to Use Measures with One-to-Many Joins

Make sure you don’t duplicate aggregated values when you join tables together.
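
To make the duplication problem concrete, here is a minimal sketch using Python's built-in `sqlite3` module. The `orders` and `order_items` tables and their values are invented for illustration and do not come from the original post. It shows how a measure stored on the "one" side gets repeated once per matching row on the "many" side, and one common way to avoid that by collapsing the "many" side first.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# Hypothetical tables: one order has many order items.
conn.executescript("""
    CREATE TABLE orders (order_id INTEGER PRIMARY KEY, order_total REAL);
    CREATE TABLE order_items (item_id INTEGER PRIMARY KEY, order_id INTEGER, sku TEXT);
    INSERT INTO orders VALUES (1, 100.0), (2, 50.0);
    INSERT INTO order_items VALUES (10, 1, 'A'), (11, 1, 'B'), (12, 1, 'C'), (13, 2, 'A');
""")

# Naive: the join repeats each order_total once per matching item row,
# so order 1 is counted three times.
naive_total = conn.execute("""
    SELECT SUM(o.order_total)
    FROM orders o
    JOIN order_items i ON i.order_id = o.order_id
""").fetchone()[0]

# Safer: collapse the "many" side to one row per order before joining.
safe_total = conn.execute("""
    SELECT SUM(o.order_total)
    FROM orders o
    JOIN (SELECT order_id, COUNT(*) AS item_count
          FROM order_items
          GROUP BY order_id) i ON i.order_id = o.order_id
""").fetchone()[0]

print(naive_total)  # 350.0 (inflated)
print(safe_total)   # 150.0 (correct)
```

Pre-aggregating is only one option; depending on the tool, computing the measure before the join or keeping it in its own query may fit better.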

Automated Anomaly Detection: The next step for CSPs

Today’s telecom engineers are expected to handle, manage, optimize, monitor and troubleshoot multi-technology and multi-vendor networks, in a competitive and unforgiving market with minimal time to resolution and high costs for errors. With the ongoing growth in operational complexities, effectively managing radio networks, current and legacy core networks, services, and transport and IT operations is becoming a radical challenge.

What FERPA Means for Your Data

NVIDIA RAPIDS in Cloudera Machine Learning

In the previous blog post in this series, we walked through the steps for leveraging Deep Learning in your Cloudera Machine Learning (CML) projects. This year, we expanded our partnership with NVIDIA, enabling your data teams to dramatically speed up compute processes for data engineering and data science workloads with no code changes using RAPIDS AI.

The Big Banks Are in Danger of Becoming Utilities. Can Data Make a Difference?

When digital disruption first hit the finance and investment markets, it seemed to signal the death knell for the traditional banks and financial institutions. For a time, it looked as though the best-known brands were doomed to become utilities: only good for basic financial transactions, weighed down by legacy processes and technologies, and viewed as little more than digital dinosaurs.

How 84.51°/Kroger Cut Costs and Improved Efficiency with Unravel Data

84.51° is a wholly owned subsidiary of Kroger, the US retailing giant – the largest supermarket chain in America, and the fifth-largest retailer in the world. As an organization, 84.51° is a descendant of dunnhumby, analytics geniuses who revolutionized customer loyalty programs at Tesco in the UK decades ago.

How to get started with data lineage

Building a Democratized Data Asset with Snowflake and DBT | Snowflake

Dashboards are dead

Building interactive data apps with ThoughtSpot

From "Fluff Metrics" to a 360-Degree Customer View

We use Fivetran ourselves for rapid access to rich, query-ready marketing data — the key to transformative insights.

Three Must-Haves for Your Modern Retail Data Stack

When retailers invest in a culture of data, they gain the ability to quickly navigate shifting customer priorities.

From Months to Days: How Autodesk Reinvented Its Data Ingestion Strategy

Buy or build? Autodesk's VP of Data Platform and Insights Jesse Pedersen cut Autodesk’s data ingestion process from six months to six days: Here’s how.

How to Use Product Analytics for SaaS Sales Pitches

Imagine you are preparing to approach a prospective client. You have done all the market research needed to understand the edge your product or service has over your competitors. You have identified your niche for higher profitability and you have profiled the key decision-makers that will be targeted by your outreach campaign. Would you like your pitch to fall flat just because you did not dazzle the prospect?

5 Reasons to Use Heroku and ETL

ETL tools and Heroku Connect both offer bidirectional data connections to Salesforce. So it would be natural to assume that you only need one or the other for your Salesforce integration. But, in fact, each tool has its own particular strengths that make the two systems complementary. Heroku is a software development platform and cloud service provider that empowers developers who build, deploy and scale web applications.

Security and Business Intelligence: Why it Matters

Companies deal with high volumes of data every day. In fact, 51% of businesses realize a positive difference in their bottom line by using their business intelligence (BI) to predict customer trends. According to one source, the BI market may reach close to $30 billion before the end of 2022. With so much money going into data management and so much resulting from it, the need for effective cybersecurity measures continues to grow by the day.

Streaming Market Data with Flink SQL Part II: Intraday Value-at-Risk

This article is the second in a multipart series to showcase the power and expressibility of FlinkSQL applied to market data. In case you missed it, part I starts with a simple case of calculating streaming VWAP. Code and data for this series are available on GitHub. Speed matters in financial markets. Whether the goal is to maximize alpha or minimize exposure, financial technologists invest heavily in having the most up-to-date insights on the state of the market and where it is going.

The value of CDP Public Cloud over legacy Hadoop-on-IaaS implementations

Prior to the introduction of CDP Public Cloud, many organizations that wanted to leverage CDH, HDP or any other on-prem Hadoop runtime in the public cloud had to deploy the platform in a lift-and-shift fashion, commonly known as “Hadoop-on-IaaS” or simply the IaaS model.

What's New in CDP Public Cloud? Data Flow and Operational Database

Building a Global GCP Platform With Snowflake | Rise of The Data Cloud

ThoughtSpot for Databricks

Accelerating Data Science to Production with MLOps Best Practices

The Clear SHOW - S02E05 - DataOps in ClearML Pt. I

History Mode for Databases Has Arrived

Our new feature helps you implement Type 2 slowly changing dimensions for your historical database analytics with no coding needed.
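
For readers unfamiliar with the term, a Type 2 slowly changing dimension keeps every past version of a record instead of overwriting it. The sketch below is a hand-written illustration in plain Python, not the vendor's implementation. The `DimRow` fields (`valid_from`, `valid_to`, `is_current`) and the `apply_change` helper are hypothetical names chosen for this example.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class DimRow:
    customer_id: int
    city: str
    valid_from: date
    valid_to: Optional[date]  # None means "still current"
    is_current: bool


def apply_change(history: List[DimRow], customer_id: int, city: str, as_of: date) -> None:
    """Record a new attribute value without overwriting the old one."""
    current = next(
        (r for r in history if r.customer_id == customer_id and r.is_current), None
    )
    if current is not None:
        if current.city == city:
            return  # nothing changed, keep the existing version
        # Close out the old version instead of updating it in place.
        current.valid_to = as_of
        current.is_current = False
    history.append(DimRow(customer_id, city, as_of, None, True))


history: List[DimRow] = []
apply_change(history, 1, "Lisbon", date(2021, 1, 1))
apply_change(history, 1, "Porto", date(2021, 5, 1))
apply_change(history, 1, "Porto", date(2021, 5, 15))  # no-op: value unchanged

for row in history:
    print(row)
```

After these calls the history holds two rows for customer 1: a closed-out "Lisbon" version and a current "Porto" version, which is what lets a query ask what the value was on a given date.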

6 Tips for Configuring an ETL Solution in Salesforce

Salesforce is the world's #1 CRM (customer relationship management) platform. The service provides access to valuable data by logging and collecting customer interactions, regardless of the channel in which they take place. Whether it gets the information from phone calls, website transactions, or social media posts, Salesforce delivers customer data in real-time so business owners can gain essential insights.

What is HIPAA, and Why is It Important?

Healthcare information is perhaps the most important data in our lives. Your health records can contain your medical history, results of tests and scans, and details of current health insurance. This data is a special class of personally identifiable information, and HIPAA is the law that protects it.

Data health, from prognosis to treatment

When we talk about a healthy lifestyle, we know it takes more than diet and exercise. A lifelong practice of health requires discipline, logistics, and equipment. It is the same for data health: if you don’t have the infrastructure that supports all your health programs, those programs become moot.

What's new in CDP Private Cloud 1.2?

CDP (Cloudera Data Platform) Private Cloud 1.2 was recently released and builds on the success of CDP Private Cloud Base (see the 7.1.6 release blog). While Private Cloud Base is the ideal modernization of both CDH and HDP deployments for traditional workloads, Private Cloud adds cloud-native capabilities.

Key considerations when making a decision on a Cloud Data Warehouse

Making a decision on a cloud data warehouse is a big deal. Beyond there being a number of choices each with very different strengths, the parameters for your decision have also changed. Modernizing your data warehousing experience with the cloud means moving from dedicated, on-premises hardware focused on traditional relational analytics on structured data to a modern platform.

The search for actionable insights: 4 must-have analytics features

How to use ThoughtSpot and Databricks SQL

Today, the rate of innovation around data processing has accelerated beyond what any of us previously thought possible. Databricks recently announced their Databricks SQL offering, which is the next step in this evolution and builds on the foundation of Delta Lake to deliver interactive analytics at scale. This offering pairs with ThoughtSpot’s Modern Analytics Cloud to empower everyone in an organization to find answers to their questions with simple access to the data lake.

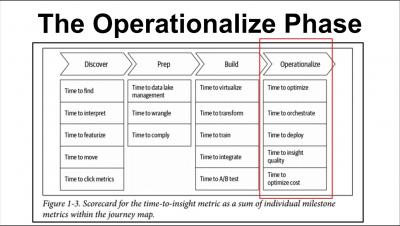

Operationalize Your Insights - The Self-Service Data Roadmap, Session 4 of 4

Why Business Intelligence is a Security Risk

Business intelligence (BI) software, such as Microsoft Power BI, allows organizations to leverage big data and make better business decisions. Select Hub reports that 48 percent of companies place high or critical importance on these solutions. However, BI tools introduce a level of security risk that businesses must address.

Accelerate Moving to CDP with Workload Manager

Since my last blog, What you need to know to begin your journey to CDP, we received many requests for a tool from Cloudera to analyze the workloads and help upgrade or migrate to Cloudera Data Platform (CDP). The good news is Cloudera has a tried and tested tool, Workload Manager (WM), that meets your needs. WM saves time and reduces risks during upgrades or migrations.

5 Factors to Consider When Choosing a Stream Processing Engine

Are you using the right stream processing engine for the job at hand? You might think you are—and you very well might be!—but have you really examined the stream processing engines out there in a side-by-side comparison to make sure? Our Choose the Right Stream Processing Engine for Your Data Needs whitepaper makes those comparisons for you, so you can quickly and confidently determine which engine best meets your key business requirements.

Kafka Summit Europe in Review - Live with Cloudera

Data insights made simple, flexible, and proactive? Cheers to that.

Stonegate is Britain’s largest pub company, with 1,200 managed pubs and bars across the UK, 3,200 tenanted sites, and multiple brands including Slug & Lettuce, Yates, Be At One, Walkabout, and Popworld. The hospitality business was difficult enough before COVID-19 arrived, but the pandemic forced Stonegate to fundamentally rethink its core business and operational models.

What is a KPI dashboard? 6 key benefits & best practice examples

How to use CDC for database replication

Active Intelligence: Seize Every Business Moment

Expert Panel: AI for Connected Vehicles

Five Things I've Learned From Building Analytics Stacks at J.P. Morgan and Fivetran

A short guide to data management best practices for both large enterprises and fast-growing startups.

Cycle Time: The Most Important Metric that Helps You Nip Problems in the Bud

The art of management is to act at the right moment. Good leaders allow team members to be autonomous when things are going in the right direction. At the same time, they are swift to fix issues while they are still minor, thus averting a crisis later on. Knowing the right time to act is not easy. This ability used to come from years of experience through trial and error. With modern source control tools (e.g.

The Five Types of Data Integration

Data integration is crucial in today’s business world. Business data comes via many sources, from internal databases to clicks on a website. Being able to access all your data in one place helps your business make better, faster decisions. But how do you integrate all your data, and what’s the best way to do it? Here we discuss five data integration methods, how they work and why businesses continue to choose them.

Solving the Right Data Problem. Finally.

In late 2020, a CEO at an American bank revealed the thinking that’s becoming common in many businesses these days. “We’re a 103-year-old bank,” their CEO told me. “We’re doing everything on spreadsheets. But we are trying to become a highly profitable, digital-first bank that anticipates financial needs and empowers our clients with frictionless experiences. We need to become a data company.”

The Clear SHOW - S02E04 - DataOps is All You Need (?)

New Connector: Coupa

Enhance your financial analytics with our new Coupa integration.

The 7 Best Reporting Tools for 2021

Reporting tools solve a key problem for businesses by enabling them to communicate data in a way that is accessible, easy-to-understand, and useful for both frontline staff and management teams. These tools take raw data and turn it into tables, charts, and graphs ready for consumption, turning complicated data into visuals that better enable users to spot patterns and trends.

Security and ELT - A Tragedy

Extract, Load, Transform, or ELT, is a process that extracts data from the source, loads it directly into a data warehouse or data lake, and then transforms it to make it available for business intelligence tools. It supports all data types, from raw to structured. ELT is a popular way to ingest large volumes of raw data quickly, but it brings many security concerns with it.
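
The three ELT stages described above can be sketched with Python's built-in `sqlite3` module standing in for the warehouse. This is a toy illustration under stated assumptions: the `source_rows` data and the `raw_orders` and `orders` table names are made up for the example.

```python
import sqlite3

# Extract: rows as they arrive from a hypothetical source, untyped and messy.
source_rows = [("1", " alice ", "19.99"), ("2", "BOB", "5"), ("3", "carol", "")]

warehouse = sqlite3.connect(":memory:")

# Load: land the data unchanged in a raw table.
warehouse.execute("CREATE TABLE raw_orders (id TEXT, customer TEXT, amount TEXT)")
warehouse.executemany("INSERT INTO raw_orders VALUES (?, ?, ?)", source_rows)

# Transform: clean and type the data inside the warehouse, using SQL.
warehouse.execute("""
    CREATE TABLE orders AS
    SELECT CAST(id AS INTEGER)   AS id,
           LOWER(TRIM(customer)) AS customer,
           CAST(amount AS REAL)  AS amount
    FROM raw_orders
    WHERE amount <> ''
""")

rows = warehouse.execute(
    "SELECT id, customer, amount FROM orders ORDER BY id"
).fetchall()
raw_count = warehouse.execute("SELECT COUNT(*) FROM raw_orders").fetchone()[0]

print(rows)       # cleaned rows only
print(raw_count)  # 3: the raw table still holds every unfiltered row
```

Note that the raw table keeps every source value, cleaned or not, after the transform has run. That retained raw copy is one place where the security concerns mentioned above can arise if sensitive fields are loaded before any masking happens.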

Automating CDP Private Cloud Installations with Ansible

The introduction of CDP Public Cloud has dramatically reduced the time in which you can be up and running with Cloudera’s latest technologies, be it with containerised Data Warehouse, Machine Learning, Operational Database or Data Engineering experiences or the multi-purpose VM-based Data Hub style of deployment.

cdpcurl: Low-Level CDP API Access

Cloudera Data Platform (CDP) provides an API that enables you to access CDP functionality from a script, or to integrate CDP features with an application. In practice you can use the CDP API to script repetitive tasks, manage CDP resources, or even create custom applications. You can learn more about the API in its official documentation. There are multiple ways to access the API, including through a dedicated CLI, through a Java SDK, and through a low-level tool called cdpcurl.

ThoughtSpot Analytics Cloud

5 Ways to Improve Data Quality with Teradata

In 1979, Teradata began life as a collaboration between Caltech and Citibank. Today, this enterprise software group is all about redefining business intelligence tools and data management. The Teradata Database is now the Teradata Vantage Advanced SQL Engine. The name not only highlights the evolution of the company but also recognizes that tech consumers now expect more from their tools.

Dorel Home Gains Unique Insights Into the Health of Their Business With Qlik

Three Divisions, With Over 8,000 SKUs, Are Leveraging Qlik Sense Enterprise SaaS to Get a Centralized View of Sales, Operations and e-Commerce Performance and Opportunities.

MongoDB vs. MySQL: Detailed Comparison of Performance and Speed

MongoDB and MySQL are similar in some ways, but they also have some obvious differences. Perhaps the most obvious one is that MongoDB is a NoSQL database, while MySQL only responds to commands written in SQL. Potential users may want to examine MongoDB vs. MySQL in the areas of performance and speed. The following article will help you understand the differences, as well as the pros and cons of each database.

Quantifying the value of multi-cloud deployment strategies with CDP Public Cloud

In this article, I will be focusing on the contribution that a multi-cloud strategy has towards these value drivers, and address a question that I regularly get from clients: Is there a quantifiable benefit to a multi-cloud deployment? That question is typically being asked when I explain the ability to leverage container technology that offers a consistent deployment environment across multiple clouds and form factors (public, private, or hybrid cloud).

Mastercard Reduces MTTR and Improves Query Processing with Unravel Data

Mastercard is one of the world’s top payment processing platforms, with more than 700 million cards in use worldwide. In the US, nearly 40% of adults hold a Mastercard-branded card. And the company is going from strength to strength; despite a dip in valuation of more than a third when the pandemic hit, the company has doubled in value three times in the last nine years, recently reaching a market capitalization of more than $350B.

Unravel Data Featured in CRN's 2021 Big Data 100 List

In a press release delivered today, Unravel Data announced its appearance on CRN’s Big Data 100 list for 2021. Unravel’s entry appears in the Data Management and Integration category. Also featured in this category are other rising stars such as Confluent, Fivetran, Immuta, and Okera, all of whom spoke at new industry conference DataOps Unleashed, held in March.

"Reverse ETL" with Keboola

Future of Data Meetup: Continuous SQL With SQL Stream Builder

The Data Chief Live - People Change Management in Driving Self-Service Analytics

The Data Chief Live - People Change Management for Driving Self-Service Analytics

ThoughtSpot Success Series #3 - Use Case Prioritization

AWS & Iguazio Bring Data Science to Life: Develop on SageMaker | Deploy on Iguazio

Learn more about the joint AWS & Iguazio solution: https://www.iguazio.com/partners/aws/

Start working with MLRun, the open-source MLOps orchestration framework: https://github.com/mlrun/mlrun

End-to-End MLOps Demo of the Iguazio Feature Store

7 More Databox Integrations Now Support Custom Date Ranges

Virtualized environments need a new kind of monitoring

5G is in the process of transforming communications technology, enabling never-before-seen data transfer speeds and high-performance remote computing capabilities. As a cloud-native application, 5G provides advantages in terms of speed, agility, efficiency and robustness.

The Complete Guide to ETL with Teradata

Transferring data is a hassle if you don't have the right tools, particularly when you're transferring from multiple sources or in various formats. Fortunately, you can use tools to make the entire ETL process simpler and less prone to error. Platforms like Teradata make it easy to get your data where it needs to go.

5 Tips on Avoiding FTP Security Issues

Flat files are files that contain a representation of a database (aptly named flat file databases), usually in plain text with no markup. CSV files, which separate data fields using comma delimiters, are one common and well-known type of flat file; other types include XML and JSON. Thanks to their simple architecture and lightweight footprint, flat files are a popular choice for representing and storing information.

Spark on Kubernetes - Gang Scheduling with YuniKorn

Apache YuniKorn (Incubating) has just released 0.10.0 (release announcement). As part of this release, a new feature called Gang Scheduling has become available. By leveraging the Gang Scheduling feature, Spark job scheduling on Kubernetes becomes more efficient.

Q2 Features Update: Collaborating With Data, Wherever It, or You, Are

The world loves to talk about data, and how valuable it is. Yet, what that really refers to is insights. Data by itself is meaningless, and raw information only has value when you can extract insights from it that you can act on.

How Data Affects Healthcare | Rise of The Data Cloud | Snowflake

Automated Model Management for CPG Trade Effectiveness

Automating and Governing AI over Production Data on Azure - MLOps Live #14 w/Microsoft

Industrializing Enterprise AI with the Right Platform - MLOps Live #9 - With NVIDIA

Simplifying Deployment of ML in Federated Cloud and Edge Environments - MLOps Live #12 - with AWS

How Feature Stores Accelerate & Simplify Deployment of AI to Production MLOps Live #13

The breakdown:

00:00 - Intro

02:15 - MLOps Overview

05:03 - Feature Engineering

07:44 - MLOps Workflow

10:44 - Solution: Feature Store

14:25 - Feature Store Competitive Landscape

17:03 - Features of a Feature Store

21:01 - CTO: Feature Store Sneak Peek

25:55 - Python Code example

27:57 - ML Pipeline example

30:07 - Covid-19 Patient Deterioration

33:26 - LIVE DEMO

52:45 - QA

Fivetran Achieves ISO 27001 Compliance Certification

ISO 27001 certification provides a standardized confirmation for customers regarding security best practices and capabilities.

Fivetran Launches Support for New Databricks + GCP Offering

Businesses can now use Fivetran with the Lakehouse Platform on Google Cloud. We are excited to launch Fivetran support for a newly available solution: Databricks on Google Cloud. We’ve partnered with both Databricks and Google Cloud for many years now, and understand the unique value they each deliver to Fivetran customers, so it was a priority for us to support their joint effort.

The ultimate Google Algorithm update checklist for your website

As we are all well aware, this month Google will be updating its algorithm with the aim of improving the user experience. With these changes, however, it’s reported that many of the top-ranking websites will be affected, meaning they need to take action now to ensure all of the hard SEO work they’ve done is not lost.

What is Vertica and How Can You Use It?

Trying to find the perfect database for your data? With so many choices available, it may be difficult to figure out which one to use. However, Vertica has plenty to offer, particularly if you’re dealing with big data and have massive datasets ready to go.

Streaming Market Data with Flink SQL Part I: Streaming VWAP

Speed matters in financial markets. Whether the goal is to maximize alpha or minimize exposure, financial technologists invest heavily in having the most up-to-date insights on the state of the market and where it is going. Event-driven and streaming architectures enable complex processing on market events as they happen, making them a natural fit for financial market applications.

New: Data scientists, run transformations and model data in R!

Database replication techniques

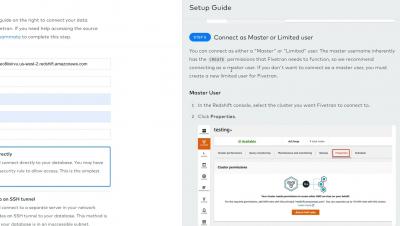

Demo: Fivetran Data Pipelines for AWS Redshift

ThoughtSpot Everywhere - Build Interactive Data Apps

The Clear SHOW - S02E00 - Celebrating ClearML v1.0 !!

Enterprise Data Architecture: Time to Upgrade?

Talend vs. Xplenty: Comparison and Review

The Talend Product Suite tries to be a one-stop-shop for everything data. Whether you need an ESB, iPaaS, API Gateway, or ETL platform, Talend has a tool for it. Xplenty, on the other hand, is a targeted solution that focuses on ETL only. It's an enterprise-grade ETL solution that empowers non-tech-savvy users to build sophisticated data integrations. Both Talend and Xplenty are powerful ETL solutions with excellent reputations and high functionality.

Powering Salesforce with Heroku and Xplenty

Did you know that you can use Heroku and Xplenty with Salesforce? While the Salesforce platform is plenty powerful on its own, you can supercharge it if you know what you’re doing.

Driving Agility and Scalability through Smart Data

Last year presented business and organizational challenges that hadn’t been seen in a century, and the troubling fact is that those challenges distributed pains and gains unequally across industry segments. While brick-and-mortar retail was crushed a year ago by mandated store closures, digital commerce retailers realized ten years of digital sales penetration in only three months.

ClearML hits 1.0

May 3rd 2021 – With over 11 man-years of working and tinkering long into the night, I am pleased to announce we have hit version 1.0. Following quickly after the release of ClearML 0.17.5, we added the last remaining features we felt 1.0 needed, namely multi-model support and improved batch operations. With these in place, the choice was clear: the next version released should be the baseline moving forward.

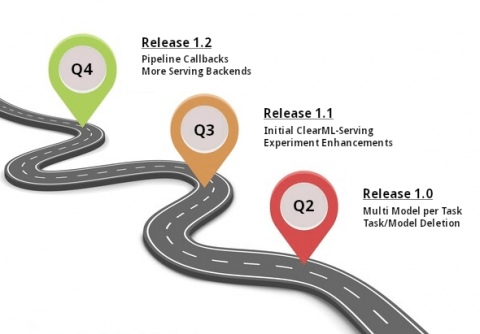

ClearML Community Roadmap

Few things in life are certain, least of all roadmaps. There is a saying I love, apart from the one above, which says “if you want to hear God laugh, tell him/her your plans”. Nowhere is that more true than in software development in a startup. Grand ideas are put forward and people often vie with one another; in short, life happens.

Build interactive analytics in your React App with ThoughtSpot Everywhere

ThoughtSpot has revolutionized access to analytics for business users through search and AI. In addition to being a general purpose analytics tool that allows unprecedented access to business users, product builders can now use ThoughtSpot to deliver search-based analytics to customers. Today, we are launching a brand new SDK that allows you to embed ThoughtSpot into your own web app in literally minutes.

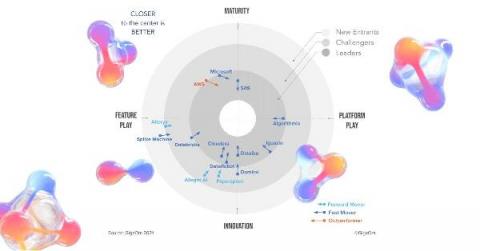

Iguazio Named A Fast Moving Leader by GigaOm in the 'Radar for MLOps' Report

At Iguazio, we’ve spoken and written at length about the challenges of bringing data science to production. The complexity of operationalizing ML can generate huge costs in terms of work hours and compute resources, especially as successful projects get scaled up and expanded. We’re proud to share that the Iguazio Data Science Platform has been named a fast moving leader in the GigaOm Radar for MLOps report.