July 2021

How to Run More Effective One-on-One Meetings with Your Employees: 11 Tips

The Dark Truth Behind Session Recording

A company is entitled to use session recording or session replay as long as its marketing and analytics needs require it. However, as enticing as it may be to record everything the user does at all times, even within existing regulations there is a high chance that doing so will quickly push the data into non-compliant territory. And even where regulations are not explicit on the matter, the industry is increasingly leaning towards discouraging these practices.

Free Cycle Time Readout for GitLab Users

To celebrate GitLab’s latest release and GitLab Commit 2021, we are offering a free cycle time readout of all your personal and company projects hosted on GitLab.com for a limited time. Use this link and your GitLab credentials to sign in to Logilica Insights, and we will let you know about your software team’s cycle time, delivery velocity and much more.

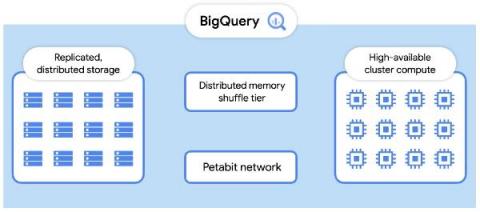

BigQuery Admin reference guide: Query processing

BigQuery is capable of some truly impressive feats, be it scanning billions of rows based on a regular expression, joining large tables, or completing complex ETL tasks with just a SQL query. One advantage of BigQuery (and SQL in general) is its declarative nature. Your SQL indicates your requirements, but the system is responsible for figuring out how to satisfy that request. However, this approach also has its drawbacks: namely, the problem of understanding intent.

How to Build a REST API: Types and Requirements

Reflections on Leadership: A Fireside Chat with mentor(SHE:) and Yellowfin

A Window of Opportunity to the Data Cloud

When Apple launched its App Store in 2008, it opened a window of opportunity for thousands of software developers who rushed to invent the mobile-first world in which we all live today. Vendors that embraced the new paradigm, such as game developer Imangi, saw breakthrough success.

Why you need metadata management and how to approach it

ThoughtSpot Success Series #9 - Group Design, Privileges & Sharing

Fivetran Partners With Hightouch to Help Activate Your Data

Hightouch and Fivetran turn your data warehouse into your customer data platform.

Data Onboarding: What You Need to Know

Four Questions To Accelerate Edge-to-Cloud AI Strategy Development

“More than 15 billion IoT devices will connect to the enterprise infrastructure by 2029.” Finding data is clearly not going to be a challenge, but taking advantage of it all to drive business outcomes will be. Combining AI and machine learning (ML) with the data collection and processing capabilities of the edge and the cloud may hold the answer.

Unleash Advanced Geospatial Analytics in Snowflake

Both businesses and governments have been forced to respond to the global pandemic by developing interactive user experiences and spatial applications using location-based data sets to visualize COVID cases, communicate confinement measures, and track vaccine rollout progress.

ClearML and NVIDIA Inception Premier Member announcement

We’re excited to announce ClearML’s elevated recognition by NVIDIA as an Inception Premier Member.

Massive Data Transformation With Pepsi | Rise of The Data Cloud Podcast

Design considerations for SAP data modeling in BigQuery

Over the past few years, many organizations have experienced the benefits of migrating their SAP solutions to Google Cloud. But this migration can do more than reduce IT maintenance costs and make data more secure. By leveraging BigQuery, SAP customers can complement their SAP investments and gain fresh insights by consolidating enterprise data and easily extending it with powerful datasets and machine learning from Google.

What is Change Data Capture in SQL Server?

MLOps World Conference: From 12 Months to 30 Days to AI Deployment: An MLOps Journey

Using AI/ML to Increase Gaming Monetization

Gamers are not shy about reaching into their wallets for premium content and features. They also won’t hesitate to tap the uninstall button at the first sign of trouble. It’s not uncommon for a gamer to boot up a hotly anticipated new game or revisit an old favorite only to put it down days or weeks later. The culprit is often gaming monetization issues that get in the way of what would otherwise be a long-term rewarding gaming experience.

Choosing an ERP: 5 Reasons Your Company Needs NetSuite

The 6 Soft Skills Data Engineers Need to Succeed

Five Strategies to Accelerate Data Product Development

In this first article of a two-part series on data product strategies, I present some of the emerging themes in data product development and how they inform the prerequisites and foundational capabilities of an enterprise data platform that can serve as the backbone for successful data product strategies.

[MLOps] The Clear SHOW - S02E13 - mlops_this: Copilot Shenanigans

Analysis: Fivetran is the Fastest-Growing Cloud ELT Provider

Gartner recognizes Fivetran as the “vendor of choice” for enterprises looking to modernize their data infrastructure.

How Achieve uses Databox to Boost Efficiency by 40%

Analyzing Return User Behavior

How to ensure data integrity with analytics testing

Data collection and analysis are critical to ongoing business operations, but maintaining data integrity is an often overlooked problem. Data integrity is not only a matter of consumer trust; it is often mandated by legal regulations as well. Without accurate data, business leaders could make decisions that are slightly (or majorly) misguided.
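To make "analytics testing" concrete, here is a minimal sketch of the kind of integrity checks such testing typically runs before data reaches a report: uniqueness, completeness, and validity. The table shape and the rules (`event_id`, `user_id`, `revenue`) are hypothetical assumptions chosen for illustration, and `check_integrity` is not the API of any particular testing tool.

```python
from collections import Counter
from typing import Any


# Hypothetical rules for an "events" table: every row needs a unique
# event_id, a non-empty user_id, and a non-negative numeric revenue.
def check_integrity(rows: list[dict[str, Any]]) -> list[str]:
    """Return human-readable integrity violations (empty list if clean)."""
    issues: list[str] = []

    # Uniqueness: the same event_id appearing twice usually means a
    # double-fired tracking call or a duplicated load.
    counts = Counter(row.get("event_id") for row in rows)
    for event_id, n in counts.items():
        if n > 1:
            issues.append(f"duplicate event_id {event_id!r} ({n} rows)")

    for i, row in enumerate(rows):
        # Completeness: required fields must be present and non-empty.
        if not row.get("user_id"):
            issues.append(f"row {i}: missing user_id")
        # Validity: revenue must be a number greater than or equal to zero.
        revenue = row.get("revenue")
        if not isinstance(revenue, (int, float)) or revenue < 0:
            issues.append(f"row {i}: invalid revenue {revenue!r}")

    return issues


rows = [
    {"event_id": "e1", "user_id": "u1", "revenue": 9.99},
    {"event_id": "e2", "user_id": "", "revenue": 5},
    {"event_id": "e2", "user_id": "u3", "revenue": -1},
]
for issue in check_integrity(rows):
    print(issue)
```

In practice, checks like these would run automatically on each load so that a failing batch is caught before anyone builds a decision on it.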

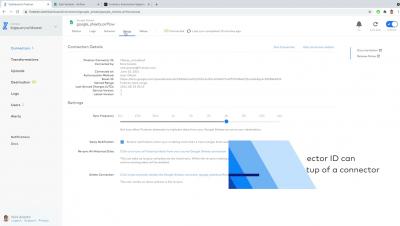

Demystifying a Heroku Salesforce Connector

How Military Data Innovation Drives Higher and Lower Risk Tolerance

“Data acumen” is a powerful new term. I heard it used recently in relation to a historically hard problem for the Department of Defense (DoD): the speed of data change. David Spirk, DoD’s Chief Data Officer, gave a historic keynote at GovCon Wire’s Data Innovation Forum, which was held in June 2021. He was joined by three key leaders in DoD data: Thomas Sasala (Navy), Eileen Vidrine (Air Force) and David Markowitz (Army).

7 Steps to Operationalize Your Data Warehouse

Beginner's Guide to Cloudera Operational Database

My name is Shanmukha Kota, and I am a recent graduate of the University at Buffalo. I interned with Cloudera last summer and joined as a software engineer a couple of weeks ago, and this is my first experience with CDP and CDP Operational Database. As a new college graduate with only academic experience with HBase, I found CDP Operational Database very simple to set up and work with.

Data doesn't speak for itself: Why data storytelling is so important

How to Set Up a Knox LDAP Password Alias in CDP

How does BigQuery store data?

Richard Clark - Slack Hack Your Salesforce - Integrating Slack with Salesforce (Demo)

Extending the power of Chronicle with BigQuery and Looker

Chronicle, Google Cloud’s security analytics platform, is built on Google’s infrastructure to help security teams run security operations at unprecedented speed and scale. Today, we’re excited to announce that we’re bringing more industry-leading Google technology to security teams by integrating Chronicle with Looker and BigQuery.

5 Real-time Streaming Platforms for Big Data

Understanding Data-Driven CPQ

How to do data transformation in your ETL process?

Data Warehouse vs. Database

Data warehouses are a particular kind of database. Learn how they are uniquely suited to analytics.

Crux chose BigQuery for rock-solid, cost-effective data delivery

At Crux Informatics, our mission is to get data flowing by removing obstacles in the delivery and ingestion of data at scale. We want to remove any friction across the data supply chain that stops companies from getting the most value out of data, so they can make smarter business decisions. But as you may know, if you’re in the business of data, this industry never stands still. It’s constantly evolving and changing.

What is the Difference Between FTP and SFTP?

Embedding AI Into Every Aspect of Your Business

Whether they are in retail, manufacturing, specialty chemicals or telecommunications, most businesses would consider a 10% market capitalization increase from 2020 to 2021 outstanding. But what would you tell your shareholders when they found out your competitors’ market capitalization grew 35%?

[MLOps] The Clear SHOW - S02E12 - Goodbye Fig .1 [Sculley15]

The Importance of ETL Security

ETL security is essential for safeguarding your customers’ data and complying with regulations. Learn about the Fivetran approach.

Use HubSpot Data to Build a Holistic View of Your Customers

HubSpot data becomes vastly more valuable when you can use it to build a comprehensive view of customer behavior.

Transforming supply chain and logistics analytics at Avnet with ThoughtSpot and Azure Synapse

Supply chain and logistics operations can be a company's biggest source of financial risk or competitive advantage. The key is reconciling external supplier data like tariff and shipping information with internal data to deliver insights across teams and geographies.

20 Ways to Increase Your Revenue with NetSuite

Data Streaming (CDC) with Qlik

How Bee Inbound Uses Databox to Unify Client Data and Streamline Reporting

SFTP to Salesforce - Guide to a Secure Integration

Accelerate Offloading to Cloudera Data Warehouse (CDW) with Procedural SQL Support

Did you know that Cloudera customers such as SMG and Geisinger offloaded their legacy data warehouse environments to Cloudera Data Warehouse (CDW) to take advantage of CDW’s modern architecture and best-in-class performance? In addition to substantial cost savings from moving to CDW, Geisinger can now search through hundreds of millions of patient note records in seconds, helping it provide better treatment to its patients.

Widen Your Focus to Drive Better Business Decisions

Data and technology are often hailed as a magic ingredient that can help solve so many problems. But, as I discussed with Joe DosSantos on Data Brilliant – it’s just one piece of the puzzle. In order to truly navigate uncertainty, the key is widening your focus and opening up your information sources. It comes down to balancing breadth of perspective with depth of expertise.

Build a Data Pipeline with Heroku ETL & Hadoop

A Reference Architecture for the Cloudera Private Cloud Base Data Platform

The release of Cloudera Data Platform (CDP) Private Cloud Base edition provides customers with a next-generation hybrid cloud architecture. This blog post provides an overview of best practices for the design and deployment of clusters, covering hardware and operating system configuration, along with guidance for networking and security as well as integration with existing enterprise infrastructure.

Optimizing Risk and Exposure Management - Roundtable Highlights

We recently hosted a roundtable focused on optimizing risk and exposure management with data insights. For financial institutions and insurers, risk and exposure management has always been a fundamental tenet of the business. Now, risk management has become exponentially more complicated in multiple dimensions. In this session, we explored what firms are doing to approach the uncertainty with more predictability.

CNC: The journey from Excel spreadsheets to automated data pipelines and fast, reliable insights

What's new in CDP Public Cloud? Administrators Edition

Future of Data Meetup: Hello, Kafka! (An Introduction to Apache Kafka)

Understanding jobs & the reservation model in BigQuery

ThoughtSpot Success Series #8 - Row Level Security Design Patterns

Why Does My Business Need to Transform Data?

Fivetran pipelines reliably load your data to your chosen destination, but then what? Without joining, filtering, and aggregating your data, your business can’t produce the data models that inform critical business decisions. This is why data transformations are essential for every business looking to maximize the value of the data it collects from disparate sources.
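The three operations named above (joining, filtering, aggregating) can be sketched in a few lines. This is a minimal in-memory illustration with hypothetical `customers` and `orders` tables; in a warehouse the same logic would usually be written as a SQL `JOIN ... WHERE ... GROUP BY`, and nothing here reflects how Fivetran itself runs transformations.

```python
from collections import defaultdict

# Hypothetical raw tables, shaped like rows loaded from two separate sources.
customers = [
    {"customer_id": 1, "region": "EMEA"},
    {"customer_id": 2, "region": "AMER"},
    {"customer_id": 3, "region": "EMEA"},
]
orders = [
    {"order_id": 10, "customer_id": 1, "amount": 120.0, "status": "paid"},
    {"order_id": 11, "customer_id": 2, "amount": 80.0, "status": "paid"},
    {"order_id": 12, "customer_id": 3, "amount": 50.0, "status": "refunded"},
    {"order_id": 13, "customer_id": 3, "amount": 30.0, "status": "paid"},
]


def revenue_by_region(customers, orders):
    """Join orders to customers, keep paid orders, and sum amounts per region."""
    # Join: index customers by key so each order can look up its region.
    region_of = {c["customer_id"]: c["region"] for c in customers}
    totals = defaultdict(float)
    for order in orders:
        # Filter: only paid orders count toward revenue.
        if order["status"] != "paid":
            continue
        # Inner-join semantics: skip orders whose customer is unknown.
        region = region_of.get(order["customer_id"])
        if region is None:
            continue
        # Aggregate: running sum of amounts per region.
        totals[region] += order["amount"]
    return dict(totals)


print(revenue_by_region(customers, orders))
```

The output is a small, decision-ready model (revenue per region) that neither raw table could answer on its own.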

Using Analytics to Disrupt the Home Insurance Industry

A French startup is leveraging its modern data stack to overturn a longstanding business model.

Automatically Sync Formula Field Values in Salesforce

You no longer need to coordinate with your administrator and manually translate formulas from Salesforce SQL to Standard SQL.

New Integration: Track and Visualize Survey Responses with SurveyMonkey + Databox

11 Google Ranking Factors You Shouldn't Ignore

The Future of the Modern Data Stack

The Modern Data Stack is quickly picking up steam in tech circles as the go-to cloud data architecture, and although its popularity has risen fast, it can be ambiguously defined at times. In this blog post, we’ll discuss what it is, how it came to be, and where we see it going in the future. Whether you’re new to the modern data stack or have been an early adopter, there should be something of interest for everyone.

When to Use Change Data Capture

Why CFOs Should Champion the Consumption Business Model

Traditionally, CFOs focus on cost and cost control when managing the financial actions of a company. “Value” is assessed by putting a quantifiable number behind everything. It’s time to shift that mindset and take into account a different kind of value—the kind you can derive from an investment. Rather than worrying solely about the bottom line, ask the question: If you spend more today, what will you end up creating tomorrow?

Demo Jam Live: Perform Flink stream processing and analytics using SQL

The Wonderful World of Data Governance with Disney Streaming's Anita Lynch | Rise of The Data Cloud

How Can We Improve Website Time on Page and Dwell Time?

Improving Accuracy in Business Forecasting

8 Best CRMs for Ecommerce in 2021

EDI Integration & Why It's Important to Your Business

5 Reasons Why Row-Level Security is Wrong for Your Data Warehouse

Integrating Data to Build Emotional Health: How SU Queensland Uses Talend to Enrich Service Delivery

The mission statement is so direct and uncomplicated. SU Queensland, a non-profit organization based in Australia, is all about “bringing hope to a young generation.” The realities of delivering on this charter, of course, are multi-dimensional and complex. SU Queensland provides a wide range of services to schools, churches, and community groups across Australia, including youth camps, school chaplains, community engagement programs, and training and support for youth workers.

Operationalizing Machine Learning for the Automotive Future

It’s no secret that global mobility ecosystems are changing rapidly. Like so many other industries, automakers are experiencing massive technology-driven shifts. The automobile itself drove radical societal changes in the 20th century, and current technological shifts are again quickly restructuring the way we think about transportation. The rapid progress in AI/ML has propelled the emergence of new mobility application scenarios that were unthinkable just a few years ago.

Delivering Modern Enterprise Data Engineering with Cloudera Data Engineering on Azure

After the launch of CDP Data Engineering (CDE) on AWS a few months ago, we are thrilled to announce that CDE, the only cloud-native service purpose-built for enterprise data engineers, is now available on Microsoft Azure. CDP Data Engineering offers an all-inclusive toolset that enables data pipeline orchestration, automation, advanced monitoring, visual profiling, and comprehensive management for streamlining ETL processes and making complex data actionable across your analytics teams.

A CDO's Field Guide to Finding Value in Data

A proverb from the Democratic Republic of the Congo says, “A good chief is like a forest: Everyone can go there and get something.” A Chief Data Officer is no exception. According to Forrester research published in January 2021, data leaders today face a broad mandate, as the role has expanded over the years. In the early years, CDOs mostly reported to technology leaders.

[MLOps] The Clear SHOW - S02E11 - DIY Strikes Back! Building the Model Store!

dbt Explained

Learn how dbt adds data modeling and transformation to the modern data stack.

Why rebuilding data trust is key to driving business value with analytics

As a modern data leader, you know that real-time access to data-driven insights is key to driving higher levels of business growth and innovation, and better customer experiences. You also know that when frontline employees have easier access to data they’re able to make better decisions that ultimately boost your bottom line. But what happens when employees don’t trust the data in front of them?

5 data-driven solutions for global supply chain disruptions

In 2020, the pandemic tested supply chains in a manner few have seen in our lifetimes, with businesses like Apple struggling to predict demand and keep factory lines moving. The weaknesses exposed by this crisis are not brand new, but they should be a wake-up call that current strategies are not sustainable. The limitations of modern supply chains were becoming apparent last year when companies struggled to react to new tariffs and restrictions caused by Brexit and the U.S.-China trade war.

Choose the Right ERP Software for Business

[MLOPS] From #GTC21: Best Practices in Handling Machine Learning Pipelines on DGX Clusters

[MLOPS] From #GTC21: How to Supercharge Your Team's Productivity with MLOps

[MLOPS] From #GTC21: Workshop - Demonstrating an End-to-End Pipeline for ML/DL Leveraging GPUs

Introducing the Fivetran Protocol

How SaaS providers can build bulletproof data replication APIs.

5 Reasons You Should Mask PII

What You Need to Know About NetSuite

Cloudera Operational Database Replication in a Nutshell

In a previous blog post, we provided a high-level overview of the Cloudera Replication Plugin, explaining how it brings cross-platform replication with little configuration. In this post, we will cover how the plugin can be applied in CDP clusters and explain how it enables strong authentication between systems that do not share mutual authentication trust.

4 Considerations When Building Your Government Data Strategy

If you’ve followed Cloudera for a while, you know we’ve long been singing the praises (or harping on the importance, depending on perspective) of a solid, standalone enterprise data strategy. While a data strategy is certainly not a new concept, government missions are wholly dependent on real-time access to and analysis of data, wherever it may reside (legacy data centers or public cloud), to render insights that support operational decisions.

How to Launch Fivetran Through the Amazon Redshift Console

Amazon Redshift supports native integration with Fivetran. Here’s how to set it up.

How to Build a Modern Data Stack From the Ground Up

A data architecture expert gives a crash course on moving analytics to the cloud — including a step-by-step plan to ensure success.

How to Move Kubernetes Logs to S3 with Logstash

How Enterprise Data Lakes Help Expose Data's True Value

For all of the buzz surrounding both artificial intelligence and data-driven management, many companies have seen mixed results in their quest to harness the value of enterprise data. To avoid those pitfalls, we mixed best-of-breed and proprietary solutions to develop our enterprise data platform (EDP), focusing much of our attention on a combination of smart changes in technology, culture and process for data lakes.

Why Yellowfin built our own CRM analytics solution

Fivetran's Airflow Provider Overview

The Data Chief Live: How to Organize Data & Analytics Teams

ThoughtSpot Success Series #7 - Intro to Advanced Searches

How to Prepare Data for Microsoft Power BI

Two Ways to Migrate Hortonworks DataFlow to Cloudera Flow Management

Hortonworks DataFlow (HDF) 3.5.2 was released at the end of 2020. New releases will not continue under HDF, as Cloudera brings the best and latest of Apache NiFi to the new Cloudera Flow Management (CFM) product. Getting the latest improvements and new features of NiFi is one of many reasons to move your legacy NiFi deployments to this new platform. To that end, we have released a few blog posts to help you migrate from HDF to CFM.

What to Measure?

In my previous blog posts, I’ve talked about how you can aggregate data depending on the data type, as well as how you can re-express your data to get more value from it. For this post, let’s look at some of the different ways of measuring your data.

Get the most out of Shopify Analytics

Four ways static dashboards are costing your business

Ask any analyst how they spend the majority of their work day and they’ll tell you: Performing remedial tasks that provide no analytics value. 92% of data workers report that their time is being siphoned away performing operational tasks outside of their roles. Data teams waste an inordinate amount of time maintaining the delicate data-to-dashboards pipelines they’ve created, leaving only 50% of their time to actually analyze data.

Achieving Data Agility Fuels Growth for Financial Services

Data paves the way for every strategic move made by banks and insurance companies. Whether a firm is creating a new service, complying with regulations, or overhauling and re-engineering legacy operations, a massive data project is always central to the effort. For financial services businesses, the pace at which they can reshape and repurpose data has become a key determinant of their ability to predict market trends and meet client expectations.

SaaS In 60 QlikView Apps with IE Plugin and New Dark Base Map Background Layer

Custom Date Ranges Now Enabled for Custom Tokens and Third-Party Integrations

The Complete Guide to Using Heroku Postgres

How to Identify Your Customers' Purchase Habits Using Google Analytics

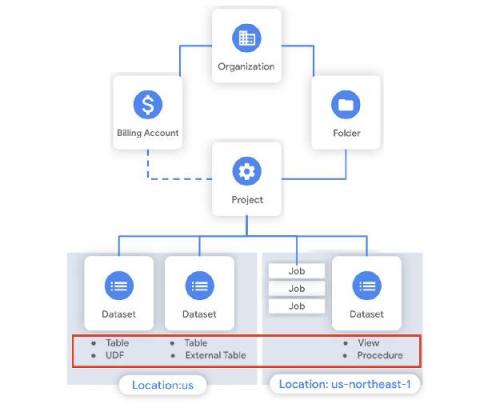

BigQuery admin reference guide: Tables & routines

Last week in our BigQuery Reference Guide series, we spoke about the BigQuery resource hierarchy - specifically digging into project and dataset structures. This week, we’re going one level deeper and talking through some of the resources within datasets. In this post, we’ll talk through the different types of tables available inside of BigQuery, and how to leverage routines for data transformation.

PII Pseudonymization: Explained in Plain English

What's new with BigQuery ML: Unsupervised anomaly detection for time series and non-time series data

When it comes to anomaly detection, one of the key challenges that many organizations face is that it can be difficult to know how to define what an anomaly is. How do you define and anticipate unusual network intrusions, manufacturing defects, or insurance fraud? If you have labeled data with known anomalies, then you can choose from a variety of supervised machine learning model types that are already supported in BigQuery ML.
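When there are no labeled anomalies, one of the simplest unsupervised baselines is a z-score test: flag any point that lies unusually far from the mean, measured in standard deviations. The sketch below is that generic baseline, swapped in for illustration only; it is not the method BigQuery ML uses, and the function name, readings and threshold are hypothetical.

```python
from statistics import mean, stdev


def zscore_anomalies(values: list[float], threshold: float = 3.0) -> list[int]:
    """Return indices of values more than `threshold` sample std devs from the mean."""
    if len(values) < 2:
        return []  # the sample standard deviation needs at least two points
    mu = mean(values)
    sigma = stdev(values)
    if sigma == 0:
        return []  # all values identical: nothing stands out
    return [i for i, v in enumerate(values) if abs(v - mu) / sigma > threshold]


# Hypothetical sensor readings with one obvious spike at the end.
readings = [10, 11, 9, 10, 12, 10, 11, 95]
print(zscore_anomalies(readings, threshold=2.0))
```

A caveat worth knowing: with only eight points, the spike inflates the standard deviation so much that its z-score is about 2.47, so the common default threshold of 3 would miss it entirely. That sensitivity is one reason production systems rely on more robust, model-based approaches.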

Privacy & Security Rules for Healthcare Marketers

Turning Your Data Lake Into a Data Swamp

2021: The Year Banks Rethink Their API Strategy

Co-authored by Samta Bansal

More than two million British people use Open Banking-based products today, a number that, despite the disruption of COVID-19, is double that seen at the start of 2020. No wonder International Banker dubbed 2021 “The Year of Open Banking.” As open banking spreads worldwide, its growth comes with the pressing need to drive standardization that bridges geographic boundaries and regulatory frameworks.

Yellowfin 9.6 release highlights

Tapping into the pulse of your data

During the product keynote at our recent QlikWorld online event, we unpacked the power of the analytics data pipeline to transform raw data into informed action. Imagine a data pipeline where information flows continuously into everyday processes, allowing your organization to seize every business moment, as it happens...