
October 2021

Google Analytics Keyword Report: A Step By Step Guide on How to Track Keywords in Google Analytics

Building a startup: Three questions every startup must answer to beat their behemoths

When speaking about the historical trajectory of significant movements, Gandhi once said, “First they ignore you, then they laugh at you, then they fight you, then you win.” For startup founders, these words aptly illustrate the road ahead and the obstacles along it. Startups that succeed are destined, at some point, to face off against the most powerful incumbents in the world. Still, there has never been a better time to be a startup than right now.

Quickly, easily and affordably back up your data with BigQuery table snapshots

Mistakes are part of human nature. Who hasn’t left their car unlocked or accidentally hit “reply all” on an email intended to be private? But making mistakes in your enterprise data warehouse, such as accidentally deleting or modifying data, can have a major impact on your business.

What Is Data Integrity and Why Is It Important?

Migrating from Postgres to Amazon Redshift

These Are the Top 5 Snowflake Database Features for Salesforce Users Everywhere

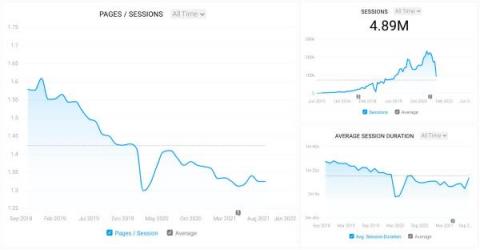

Special Broadcasting Service (SBS): Knowing and serving audiences better through data integration

SBS is Australia’s most diverse broadcaster, offering a multi-platform portfolio of TV, radio, and digital services. The company’s data is also diverse and multi-platform, and SBS understands its data is a key element in contributing to improving viewership, customer satisfaction, and loyalty. But what’s the key to using data more effectively? How do you bring together dozens of data sources into a single, central data platform?

High Availability (Multi-AZ) for CDP Operational Database

CDP Operational Database (COD) is an autonomous transactional database powered by Apache HBase and Apache Phoenix. It is one of the main Data Services that runs on Cloudera Data Platform (CDP) Public Cloud. You can access COD right from your CDP console. With COD, application developers can now leverage the power of HBase and Phoenix without the overheads that are often related to deployment and management.

How to defend against Ransomware, the threat that holds your data and business hostage

With contributions by Anwar Haq and George Alifragis. Ransomware has grown to become a significant threat to organizations today, regardless of size or industry. Cybercriminals are exploiting vulnerabilities in small businesses and enterprises alike, creating short-term and long-term damage that can impact everything from your employees’ productivity to your relationship with customers.

Entrepreneurship Is Power

Live with Cloudera: Running NiFi flows in a Hybrid Data Cloud

Fueling financial freedom with data: A Q&A with Darren Pedroza, VP Enterprise Data and Analytics at First Command Financial Services

For members of the military, financial planning is a difficult and ever-changing process. Few businesses understand this better than First Command Financial Services, which focuses on serving the nation’s military families with flexible solutions to home mortgages, car loans, and wealth management.

11 Experts Share Their SaaS Growth Hacking Secrets

The Modern Data Stack Ecosystem - Fall 2021 Edition

In our previous article, The Future of the Modern Data Stack, we examined the motivations behind the modern data stack and its current state, and looked optimistically into the future to see where it is headed. If you’re new to the modern data stack, we highly recommend giving that article a read. A question we often get from new adopters of the modern data stack is “What tech should we be looking into?”

New Report Reveals Best Practices for Hybrid & Multi-Cloud Data Management

It Worked Fine in Jupyter. Now What?

You got through all the hurdles getting the data you need; you worked hard training that model, and you are confident it will work. You just need to run it with a more extensive data set, more memory and maybe GPUs. And then...well. Running your code at scale and in an environment other than yours can be a nightmare. You have probably experienced this or read about it in the ML community. How frustrating is that? All your hard work and nothing to show for it.

Commercial Lines Insurance - the End of the Line for All Data

I’ve had the pleasure of participating in a few Commercial Lines insurance industry events recently, and as a former Commercial Lines insurer myself, I am thrilled with the progress the industry is making using data and analytics. However, I do not think Commercial Lines insurance gets the credit it deserves for the industry-leading role it has played in analytics. Commercial Lines truly is an “uber industry” with respect to data.

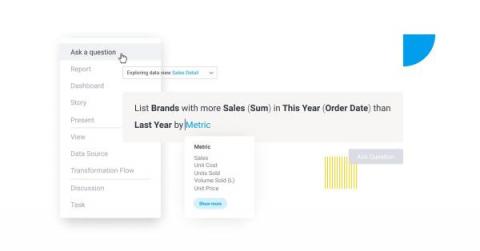

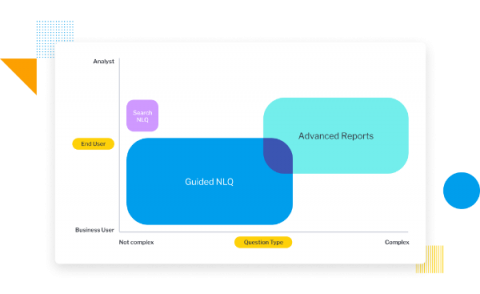

3 business considerations before you adopt NLQ

Twelve Best Cloud & DataOps Articles

Interested in learning about different technologies and methodologies, such as Databricks, Amazon EMR, cloud computing and DataOps? A good place to start is reading articles that give tips, tricks, and best practices for working with these technologies. Here are some of our favorite articles from experts on cloud migration, cloud management, Spark, Databricks, Amazon EMR, and DataOps!

Practical Advice for SAP Divestitures

Erin Byrne is a Senior Customer Solutions Engineer at Qlik and shares her practical advice to successfully divest your SAP systems. Erin has worked with SAP systems for over 20 years, has built more than 100 SAP systems and managed in excess of 30 divestiture projects.

C40 Cities Continues To Advance Climate Action By Harnessing Data With Qlik

The latest United Nations IPCC report paints a sobering picture. Climate change looks likely to accelerate in all regions as we approach the critical global warming threshold of 1.5°C. Such an uptick in temperatures will increase sea level rise and intensify the frequency and magnitude of extreme weather events. For cities, these changes will make governance more difficult in nearly every respect.

Live with Cloudera: Flink Forward in Review

Online Forex Broker Axi Moves to KX Insights

SaaS Reporting: How Performance Reports Helped SaaS Businesses in Improving Key Processes

5 Reasons Why Consolidating Your Analytics Data Is A Good Investment

Data is the lifeblood of your organization. It powers automated workflows, gives customer service reps the full story every time the phone rings, drives every planned product upgrade, informs leaders on what to focus on next, and much more. Wouldn’t it be amazing to have all your data in one place? Yes. Can you? Well… it’s complicated.

Data Transformation: Explained

5 Ways to Integrate Salesforce With Other Platforms

Is Your Business Ready for Digital Disruption?

It’s one of the great paradoxes of doing business in the 21st century: Customers expect more personalized products and services even as in-person customer contact decreases. This is just one of several disruptive trends facing every industry. On the first day of Hitachi Financial Services Summit 2021, I addressed these challenges in a keynote session with Marek Chlebicki of Raiffeisen Bank International.

Snowflake Announces Support for Google Cloud Private Service Connect

Snowflake was architected with cross-cloud security built into its core, providing multiple layers of robust protection from network access, to authentication and access control, to data protection using encryption (for more details on Snowflake security, check out the on-demand session from Snowflake Summit). For the most-regulated customers around the world, enabling private connectivity is a critical first line of defense.

The Customer Knows - Qlik's Leadership Highlighted in BARC BI & Analytics Survey 22

It’s great when analysts, media members and industry thought leaders tout your company’s leadership; it’s a point of pride for all of us behind the scenes working to continually improve Qlik Sense’s position as a leading and world-class analytics platform. However, the biggest praise (for me) comes from customers and users, the people Qlik Sense helps, whose recognition assures me we’ve really delivered.

Most Profitable Business Models for Agencies: According to 20 Agencies

The 11 Best Low-Code Development Platforms

The Ultimate Map to finding Halloween candy surplus

As Halloween night quickly approaches, there is only one question on every kid’s mind: how can I maximize my candy haul this year with the best possible candy? This kind of question lends itself perfectly to data science approaches that enable quick and intuitive analysis of data across multiple sources.

Do you want to build an ETL pipeline?

Introducing Qlik Forts - Brief Overview and Demo

Hybrid Cloud Analytics: What are Qlik Forts?

Hybrid Cloud Analytics: Multiple Geos, Multiple Clouds

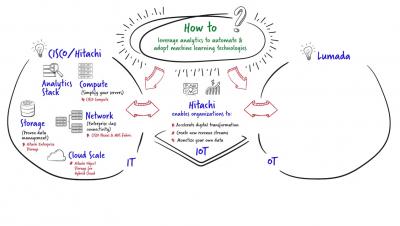

How to leverage analytics to automate & adopt machine learning technologies

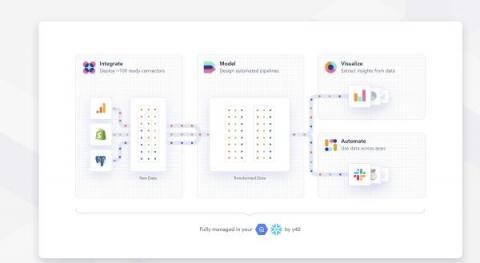

Y42 raises $31M to build the first scalable data platform that anyone can run

Google Analytics Automated Reports: Everything You Need to Know

Why Your Company Needs API Management

How to Bring Breakthrough Performance and Productivity To AI/ML Projects

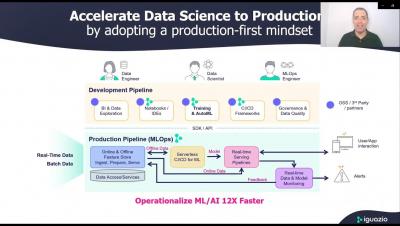

By Jean-Baptiste Thomas, Pure Storage, and Yaron Haviv, Co-Founder & CTO of Iguazio. You trained and built models using interactive tools over data samples, and are now working on building an application around them to bring tangible value to the business. However, a year later, you find that you have spent an endless amount of time and resources, but your application is still not fully operational, or isn’t performing as well as it did in the lab. Don’t worry, you are not alone.

Cloudera Machine Learning Workspace Provisioning Pre-Flight Checks

There are many good uses of data. With data, we can monitor our business, the overall business, or specific business units. We can segment based on the customer verticals or whether they run in the public or private cloud. We can understand customers better, see usage patterns and main consumption drivers. We can find customer pain points, see where they get stuck, and understand how different bugs affect them.

New Features in Cloudera Streams Messaging Public Cloud 7.2.12

With the launch of the Cloudera Public Cloud 7.2.12, the Streams Messaging for Data Hub deployments have gotten some interesting new features! From this release, Streams Messaging templates will support scaling with automatic rebalancing allowing you to grow or shrink your Apache Kafka cluster based on demand.

The Business Case for Sustainable Supply Chains Is in the Data

Businesses and consumers are getting better at recognizing the direct carbon cost of the products they use. As such, we’re seeing an increased use of sustainable materials in consumer goods and global products. That is a big positive trend, but there’s a bigger picture to explore. Value chains make up 90% of an organization’s environmental impact, according to the Carbon Trust.

What Are APIs and How Do They Work?

Top 9 Data Aggregation Tools

How to Automate Apache NiFi Data Flow Deployments in the Public Cloud

With the latest release of Cloudera DataFlow for the Public Cloud (CDF-PC) we added new CLI capabilities that allow you to automate data flow deployments, making it easier than ever before to incorporate Apache NiFi flow deployments into your CI/CD pipelines. This blog post walks you through the data flow development lifecycle and how you can use APIs in CDP Public Cloud to fully automate your flow deployments.

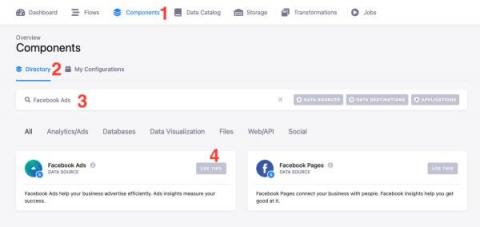

How to Transform Data using Xplenty

How to Setup a MySQL Connection within Xplenty

How to Setup a Database Connection in Amazon RDS with Xplenty

Qlik Sense - Product Tour

KX launches its on-demand training portal that widens access to game-changing Real-Time Analytics

Unlocking 360-Degree Customer Data for Retailer johnnie-O

Building an enterprise-class retail customer data warehouse

Quality Engineering Discussions: 5 Questions with James Espie

In this series, real (and really good) QA practitioners use their experience to support (or debunk) what you might know about software quality. James Espie is a test specialist, a quality engineering proponent, and a continuous learner from Auckland, New Zealand. He shares his insights and sporadic bursts of inspiration in a hilarious newsletter called Pie-mail. If you haven’t seen it, you should check it out.

Using Search Query Report in Google Ads: 7 Best Practices for Refining Your PPC Campaigns

The Rise of the Cloud Data Platform and Index-Driven Data Lake

How API Management Works

How to Use Heroku Postgres to Migrate Data

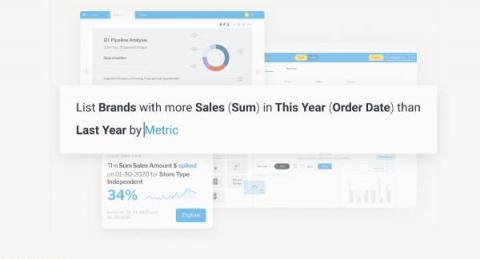

Natural language query: 5 benefits of Guided NLQ

M1 Democratizes Data Analytics with Snowflake as Part of Its Digital Transformation

Vibrant and dynamic digital-first telco M1, a subsidiary of Keppel Corporation, is focused on transforming telecommunications in Singapore. M1 provides a suite of services to more than 2 million customers and is Singapore’s first digital network operator. Data analytics have played an essential role in M1’s growth since the company launched commercial services in 1997.

Why Explainability Is the Foundation of Trust in Data

When you were a child, how many times did you use the argument with your parents “but everyone else is doing it” to rip holes in the knees of your jeans or dye your hair blonde or steal beer out of the fridge to take to a party? Only to be countered with “well, would you jump off a cliff just because all your friends did?” Wow, the frustration I felt at such a logical but basic argument.

ClearML-Data Lemonade: getting local datasets quickly and easily

Congratulations on creating a clean(ish) dataset to use for training! Now, while the dataset is stored where everyone can access it, distributing it is a hassle! Local workstations, local GPU machines, and cloud machines (which may be spun up and down without disk persistence) all need a copy of the data, and to say it is annoying is an understatement!

Data And The Food Industry | Deliveroo

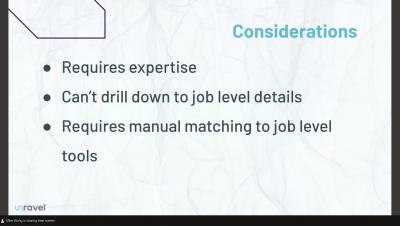

Managing Cost & Resources Usage for Spark

Xplenty's Rest API Component Tutorial

How to Setup a Snowflake Connection within Xplenty

OKR Reporting: Best Practices Shared by 29 Marketers

Operationalizing AI: Lessons from the Field

A casual stroll through recent tech headlines in the past few years makes two things abundantly clear: investment in AI is at an all-time high, and companies really struggle to get value out of AI technology. At first glance, these ideas seem to be at odds with each other: why consider investing in a field that hasn’t lived up to the hype? If you dig into the details, you’ll notice that a gap exists between the development and production use of AI in many companies.

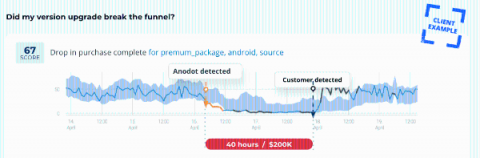

Real-Time Anomaly Detection: Solving Problems and Finding Opportunities

Success in today’s high-velocity business environments means having the correct information to make the right decisions at the right time. As marketplaces grow more competitive and customer expectations continually rise, the “right time” is often real-time. Every transaction generates a plethora of data. Anomalies within your company’s data set can represent opportunities and threats to the business.

5 Secrets to Integrating Snowflake

Integrating Snowflake with MS SQL Server using DreamFactory

How to Gain Greater Confidence in your Climate Risk Models

We are just over one week away from the UN Climate Change Conference of the Parties (COP26), which convenes in Glasgow. As governments gather to push forward climate and renewable energy initiatives aligned with the Paris Agreement and the UN Framework Convention on Climate Change, financial institutions and asset managers will monitor the event with keen interest.

Leveraging Automation Technologies for Data Governance

Modern Data Foundation for AI-Driven Results. The following is Part II of a three-part series. In Part I of this series, I noted the following: “With just a few clicks on my smart device, I can review data on every place I’ve been, how much I spent, each step I took, what the weather was like and who I was with. Businesses collect the same abundance of data. However, are we getting the benefit and insights from what’s collected?”

Dataiku Honours Data Science Heroes

8 Key Steps in a SaaS Sales Process to Win More Deals

5 Tips for Pushing Data from Your Warehouse to Salesforce

Introducing Self-Service, No-Code Airflow Authoring UI in Cloudera Data Engineering

Airflow has been adopted by many Cloudera Data Platform (CDP) customers in the public cloud as the next-generation orchestration service to set up and operationalize complex data pipelines. Today, customers have deployed hundreds of Airflow DAGs in production, performing various data transformation and preparation tasks with differing levels of complexity.

Bringing More to the Table: Azure and UDTF Support with Snowpark

In June, we announced that Java functions and the Snowpark API were available for preview in AWS. Today, we’re announcing a few additions to that preview: We’re expanding both where Snowpark is available as well as what you can do with it.

The Snowflake Media Data Cloud Enables Disney Advertising Sales' Innovative Clean Room Data Solution

Snowflake’s newly announced Media Data Cloud unites Snowflake’s powerful data sharing technology, the highest standards of privacy and governance, Snowflake- and partner-delivered solutions, and industry-specific data sets to help marketers, publishers, and advertising technology businesses succeed in the rapidly changing media and entertainment industry.

Qlik Sense SaaS in 60 - Master Visualization Charts in Tooltips

Developing a Basic Web Application using an Operational DB on CDP

How to Maximize Profit and Manage Revenue Streams: 8 Strategies for Agencies

New: Connect Your Dashboards Together in Databox Using Looped Databoards

Top 5 Informatica Alternatives

Fueling Sustainable Power Starts With Data

When power company executives were asked to list the most important issues facing their organizations, 45% cited “renewables, sustainability or the environment” as their top concern. At the same time, global energy consumption continues to rise faster than the population. These two realities are reshaping the international energy sector. The push to produce more energy, in a greener manner, is propelling the industry to re-imagine the power grid of the future.

Don't settle for multi-cloud. Aspire to cross-cloud.

Organizations are more often running data and applications on multiple clouds, and that’s great. However, multi-cloud isn’t enough. To let loose the true power of data on your business, you must be cross-cloud. Cross-cloud means data moves easily between multiple public clouds without any additional work. It means never worrying about where your data and applications live or where your business and technical people are located.

New Snowflake Features Released in September 2021

Support for unstructured data is now in public preview! That’s one of many exciting announcements made in September, in addition to a new serverless tasks feature, expanded public cloud regions, enhanced business continuity capabilities, and several new providers on Snowflake Data Marketplace.

Management Reporting: 8 Best Practices to Create Effective Reports

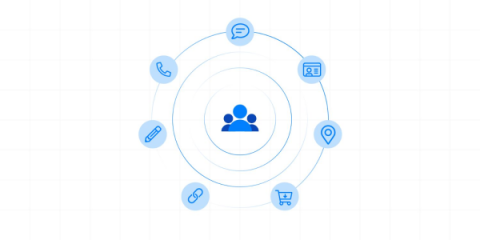

User Profiles Are Indispensable to Great CX (and They Must Be Privacy-Compliant)

Until recently, many organizations defined business strategies based on isolated figures used by isolated teams. Unfortunately for them, that approach just won’t cut it anymore. Instead, we are rapidly moving to a world where data will be the critical asset for businesses, and handling it accurately will be essential to survival.

The Complete Guide to Data Modeling Techniques

Apache Ozone - A High Performance Object Store for CDP Private Cloud

As organizations wrangle with the explosive growth in data volume they are presented with today, efficiency and scalability of storage become pivotal to operating a successful data platform for driving business insight and value. Apache Ozone is a distributed, scalable, and high performance object store, available with Cloudera Data Platform Private Cloud.

How Do You Choose the Right Data Career Path?

I am a firm believer that data is shaping the world around us, and so I constantly strive to drive awareness about the value of data and how it can bring great benefits to businesses and people. And, as I explained to Joe DosSantos in the latest episode of Data Brilliant, that means helping people identify the right data career path for them, and helping organizations understand the roles and skills they need within their business to help them succeed.

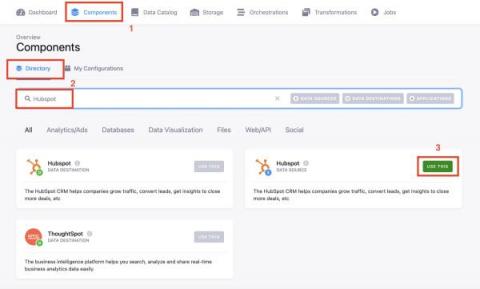

Do you want to get the most out of your HubSpot data?

The Role of Data in Financial Service Mergers

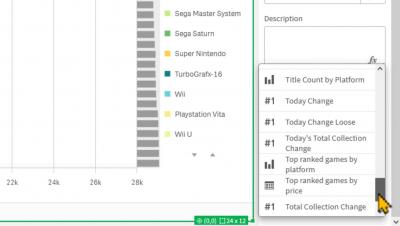

What's the Best Chart Type for Your Dashboard Metrics?

The Ultimate Guide to Building a Data Pipeline

11 Tools for the Citizen Integrator

Announcing CDP Public Cloud Regional Control Plane in Australia and Europe

We’re excited to announce CDP Public Cloud Regional Control Plane in Australia and Europe. This addition will extend CDP Hybrid capabilities to customers in industries with strict data protection requirements by allowing them to govern their data entirely in-region.

An introduction to Yellowfin Guided NLQ: What is it?

Harness the Power of AND

How to Create Scheduled Emails in Google Analytics: A Step-By-Step Guide

Disrupting the credit landscape with data: A Q&A with Credit Karma CTO, Ryan Graciano

Financing in America can be a confusing and complex process. The myriad offerings, rates, and forms are daunting for even the savviest consumer. Credit Karma simplifies the lending process by anonymizing individual borrower data and procuring multiple financing offers depending on what the consumer is looking to finance. Whether it be a sofa, a car, or a house, customers no longer need to fill out multiple forms; Credit Karma is their one-stop credit application.

Fivetran in 2022 and Beyond

Our VP of Product previews the next stage in the evolution of Fivetran.

AWS and CDC: A Dream Team for CDC

7 Tips for Building an API Management Strategy

Your Parents Still Don't Know What a Hashtag Is. Let's Teach Them the Basics of Machine Learning and Streaming Data

Quite often, the digital natives of the family — you — have to explain to the analog fans of the family what PDFs are, how to use a hashtag, a phone camera, or a remote. Imagine if you had to explain what machine learning is and how to use it. There’s no need to panic. Cloudera produced a series of ebooks — Production Machine Learning For Dummies, Apache NiFi For Dummies, and Apache Flink For Dummies (coming soon) — to help simplify even the most complex tech topics.

How to Turn your Data Center into a True Private Cloud

According to Domo, on average, every human created at least 1.7 MB of data per second in 2020. That’s a lot of data. For enterprises the net result is an intricate data management challenge that’s not about to get any less complex anytime soon. Enterprises need to find a way of getting insights from this vast treasure trove of data into the hands of the people that need it. For relatively low amounts of data, public cloud is a possible path for some organizations.

Fivetran Integrations Highlighted at Google Cloud Next '21

Fivetran integrates seamlessly with multiple Google Cloud services, and the addition of HVR technology will improve the experience.

What is new in Cloudera Streaming Analytics 1.5?

At the end of May, we released the second version of Cloudera SQL Stream Builder (SSB) as part of Cloudera Streaming Analytics (CSA). Among other features, the 1.4 version of CSA surfaced the expressivity of Flink SQL in SQL Stream Builder via adding DDL and Catalog support, and it greatly improved the integration with other Cloudera Data Platform components, for example via enabling stream enrichment from Hive and Kudu.

The Road Ahead: Digital Infrastructure For the Data-Driven

When conversations turn to digital transformation, it is usually smartphone apps, dizzying feats of AI, and domestic robots that capture all the attention. But the unsung heroes of I.T. know the truth: digital infrastructure is the foundation on which data-driven transformations are built. And increasingly we’re talking about two qualities digital infrastructure must possess if it is to be an effective backbone for digital transformation.

Qlik Sense SaaS in 60 - Last Evaluation, New Analytics Connectors and Chart Label Improvement

5 Tips for Pushing Data from Your Warehouse to Marketo

Accelerate Your Data Mesh in the Cloud with Cloudera Data Engineering and Modak Nabu

Modak, a leading provider of modern data engineering solutions, is now a certified solution partner with Cloudera. Customers can seamlessly automate migration to Cloudera’s cloud-based enterprise platform CDP from on-prem deployments and dynamically auto-scale cloud services with Cloudera Data Engineering (CDE)’s integration with Modak Nabu™.

Why natural language query (NLQ) didn't take off

The Rubber-Band Effect: How Organizations Are Catching Up To Themselves

In 2020, in response to the pandemic, we saw an urgent shift to SaaS and various emerging technologies. It was covered at length in “Introducing Trends 2021 – 'The Great Digital Switch'.” Largely driven by necessity, organizations needed to make drastic moves “to keep the lights on” and cater to operations in a more virtual and remote style. This big leap forward drastically changed the IT landscape and infrastructure in a lot of organizations.

Do you want to create and automate a digital marketing report?

Model Serving and Monitoring with the Iguazio MLOps Platform

Sales Report Templates For Daily, Weekly & Monthly, Quarterly and Yearly Statements (Sourced from 40+ Sales Pros)

How to Transfer Data from Postgres to Salesforce

Admission Control Architecture for Cloudera Data Platform

Apache Impala is a massively parallel in-memory SQL engine, supported by Cloudera, designed for analytics and ad hoc queries against data stored in Apache Hive, Apache HBase and Apache Kudu tables. Because it supports powerful queries and high levels of concurrency, Impala can use significant amounts of cluster resources. In multi-tenant environments, this can inadvertently impact adjacent services such as YARN, HBase, and even HDFS.

How to Create an Xplenty Workflow

Watch now: 3 secrets CROs need to know before going to market

How to Use the Talend Stitch Integration in the Amazon Redshift Console

Goals Based Reporting: Everything You Need to Know

New Report Shares Best Practices for Modern Enterprise Data Management in Multi-Cloud World

13 Great Reasons To Add APIs To Your Business

Talend iPaaS momentum grows. Talend recognized in the 2021 Gartner Magic Quadrant for Enterprise iPaaS

As organizations continue to embrace cloud-based computing as the cornerstone of their digital transformation, the integration platform as a service (iPaaS) has become a critical component of their integration environments. An iPaaS solution simplifies the integration of data, applications, and systems, whether in the cloud or on-premises, through unified support for API, application, data, and B2B integration styles.

How Cloudera DataFlow Enables Successful Data Mesh Architectures

In this blog, I will demonstrate the value of Cloudera DataFlow (CDF), the edge-to-cloud streaming data platform available on the Cloudera Data Platform (CDP), as a data integration and democratization fabric. Within the context of a data mesh architecture, I will present industry settings and use cases where this architecture is relevant and highlight the value it delivers across business and technology areas.

The Great Data Revolution Is Here, and Qlik Customers Are at the Heart of It

Data – the amount we create, how we create it, how it is accessed (think both people and Artificial Intelligence/machines), and how we use it to inform, propel and influence everyone and everything is one of the biggest challenges and opportunities we face in our lifetime. And it’s driving enormous change.

The Data Chief Live: Beyond the Buzz in Data Mesh, Lakehouse, Data Warehouse

Processing DICOM Files With Spark on CDP Hybrid Cloud

Why I joined Continual

Today, I’m excited to share that I’ve joined Continual as Head of Marketing. Continual is radically simplifying the path to operational AI with the first continual AI platform built for the modern data stack. More in a bit on what that means, but the “so what?” is about opening the door for more organizations to embed AI across their business at scale.

How Did You Detect and Handle Change Data Capture from The Source?

Snowflake BUILD 2021: Opening Keynote

Healthcare And Big Data | Rise Of The Data Cloud

Data lakes vs. data warehouses

Successful analytics depends on choosing the right approach to storing your enterprise data.

Benchmark Reporting: How to Prepare, Analyze and Present a Good Benchmark Report?

New: Create Custom Metrics from Google Search Console in Databox

Imagining the Future of Analytics and the Modern Data Stack

Three tech leaders discuss the future of analytics and data architecture — and how to get the most value from them.

5 Common API Management Tools and their Usage

Struggling to Manage your Multi-Tenant Environments? Use Chargeback!

If your organization is using multi-tenant big data clusters (and everyone should be), do you know the usage and cost efficiency of resources in the cluster by tenant? A chargeback or showback model allows IT to attribute costs and resource usage to the actual analytic users in the multi-tenant cluster, instead of attributing them to the platform (“overhead”) or the IT department. This lets you know the individual cost per tenant and set limits to control overall costs.
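As a rough illustration of the showback idea, the sketch below splits a shared cluster bill across tenants in proportion to their measured usage and flags tenants that exceed a budget. It assumes usage is recorded in a single common unit (hypothetically vCPU-hours); the tenant names, figures, and the `showback` and `over_budget` helpers are all hypothetical and do not come from any specific product's chargeback model.

```python
from typing import Dict, List


def showback(total_cost: float, usage_by_tenant: Dict[str, float]) -> Dict[str, float]:
    """Split a shared cluster bill across tenants in proportion to usage.

    Assumes all usage figures share one unit (e.g. vCPU-hours); this is an
    illustrative proportional allocation rule, not a product's built-in model.
    """
    total_usage = sum(usage_by_tenant.values())
    if total_usage <= 0:
        raise ValueError("total usage must be positive")
    return {
        tenant: total_cost * usage / total_usage
        for tenant, usage in usage_by_tenant.items()
    }


def over_budget(costs: Dict[str, float], budgets: Dict[str, float]) -> List[str]:
    """Return tenants whose allocated cost exceeds their budget.

    Tenants without a budget entry are treated as unlimited.
    """
    return [t for t, c in costs.items() if c > budgets.get(t, float("inf"))]


if __name__ == "__main__":
    # Hypothetical monthly bill and per-tenant vCPU-hours.
    costs = showback(10_000.0, {"marketing": 1_200.0, "finance": 600.0, "data_science": 2_200.0})
    for tenant, cost in sorted(costs.items()):
        print(f"{tenant}: ${cost:,.2f}")
    print("Over budget:", over_budget(costs, {"data_science": 5_000.0}))
```

Proportional allocation is the simplest rule to explain to tenants; a real model might weight CPU, memory, and storage separately or reserve a share for genuine platform overhead.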

An Introduction to Ranger RMS

Cloudera Data Platform (CDP) has supported access controls on tables and columns, as well as on files and directories, via Apache Ranger since its first release. It is common to have different workloads using the same data: some require authorization at the table level (Apache Hive queries) and others at the level of the underlying files (Apache Spark jobs). Unfortunately, in such instances you would have to create and maintain separate, corresponding Ranger policies for both Hive and HDFS.

Troubleshooting Amazon EMR

7 Helpful Insights You Can Learn From a Data Attribution Report

Meet The Analyst - and Data Scientist - of the Future: Investec's Quaanitah Manique and Keotshepile Mosito

This blog is part of our ongoing "meet the analyst of the future" series, which profiles analysts who are transforming their organizations and supercharging their careers by embracing the future of analytics today.

Lenses.io joins forces with Celonis to bring streaming data to business execution

Today, I’m thrilled to announce that Lenses.io is joining Celonis, the leader in execution management. Together we will raise the bar in how businesses are run by driving them with real-time data, making the power of streaming open, operable and actionable for organizations across the world. When Lenses.io began, we could never have imagined we’d reach this moment.

Introducing the Kafka to Celonis Sink Connector

Apache Kafka has grown from an obscure open-source project to a mass-adopted streaming technology, supporting all kinds of organizations and use cases. Many began their Apache Kafka journey by feeding a data warehouse for analytics, then moved on to building event-driven applications and breaking down entire monoliths. Now, we move to the next chapter. Joining Celonis means we’re pleased to open up the possibility of real-time process mining and business execution with Kafka.

5 Tips for Pushing Data From Your Warehouse to Zendesk

What Is a Citizen Integrator?

Xplenty Acquires FlyData, Adding Data Replication To Our Product Suite

Our reflections on the 2021 Gartner Magic Quadrant for Data Quality Solutions

Success for any business starts with data that is easily discoverable, understandable, and of value to the people who need it. We call this type of data “healthy data.” You should look at a wide set of measures and metrics to determine whether data is healthy or not, but at the core of all healthy data is a high level of quality.

Four Pillars of an Agile Data Infrastructure

Forbes Insights defines the modernized data center as being built to change just as much as it is built to last. One of the key pillars for a modernized data center is an agile data infrastructure. The Forbes Insights briefing explains, “This means it’s not wedded to any specific deployment method or solution set.”

What is natural language query (NLQ)?

Business Forecasting with Cosmo, Chief Destiny Officer

For background on Cosmo, Chief Destiny Officer, and his alternative methods, read the earlier posts in this series about The role of a CDO and Customer Segmentation. Predicting the future always sounds a little magical, but we were intrigued to meet a CDO who says he actually makes business forecasts using magic instead of modeling. While at Talend we work exclusively with healthy data, we’ve always wondered what goes on at organizations that don’t rely on data for business decisions.

Quarterly Business Review: How to Write One and How to Present It Successfully

It's Official: Fivetran and HVR Are Now One

Fivetran has closed its acquisition of HVR. CEOs George Fraser and Anthony Brooks-Williams explain how it happened and what it means for customers.

Everything You Need to Know About Netsuite and Salesforce Integration

Solving the Cloud Cost Paradox

“You’re crazy if you don’t start in the cloud; you’re crazy if you stay on it,” quips a recent blog post from Andreessen Horowitz. Many companies are surprised by cloud bills that have shot past their expectations. Indeed, IDC predicts increased investment in public cloud cost management through 2022 as enterprises seek to cut cloud waste by 50%. This is the Cloud Cost Paradox.

Closing the Gap Between Data and Action - All in One Cloud

A favorite moment of mine is when I get to share Qlik’s vision for Active Intelligence with a customer for the first time. It usually goes like this: genuine excitement about the possibility – taking informed action in the moment from real-time data…invariably followed by many questions – where do I begin? What do I need? What about the tech stack I have already acquired?