February 2022

The Complete Guide to Profits Interest

Startups and limited liability companies are known for their limited funds, and they prefer to cut down on employee payments to preserve money and recycle whatever they generate into their growth. As a result, they often offer equity compensation to their employees; more specifically, profits interests.

Top 3 Benefits of MRI Automation for Real Estate Professionals

Financial professionals in real estate contend with a wide array of responsibilities—managing financial statements, office space floors, signage, storage space, land, and more. Regulations and interest rates are in a state of constant flux, and they must be assessed as changes arise to build accurate reports.

89% of Finance Pros Are Unhappy: Here's Why

Eighty-nine percent of financial professionals across multiple geographies and industries are dissatisfied with their operational reporting tools. Why is that number so high? What are the challenges they’re facing? More importantly, what would it take to turn that dissatisfaction into satisfaction?

How to embed Live Analytics to your React-based web app with ThoughtSpot Everywhere

ThoughtSpot Everywhere provides SDKs to make embedding ThoughtSpot into your application easy. In addition to the more general JavaScript components for embedding ThoughtSpot, developers writing React applications can use React-specific components. With the React components, you can quickly embed analytics in just a few lines of code.

How To Extract Data From AWS Redshift Through SQL With Ease

Start delivering trusted insights - here's how

Data may be everywhere, but it isn’t free. It takes a lot of work and infrastructure to turn raw data into useful insights. Research suggests that the cost of handling data is only going to increase, by as much as 50% over five years. The same source suggests that part of that cost comes from confusion — users may spend up to 40% of their time searching for data and up to 30% of their time on data cleansing. The issue here is data trust.

Playing Offense Against Ransomware with a Modern Data Infrastructure

Has your company faced a ransomware attack yet? If not, count yourself lucky, for now. A June 2021 article in Cybersecurity Ventures predicts that ransomware will cost its victims approximately $265 billion annually by 2031. And, according to CRN, “Victims of the 10 biggest cyber and ransomware attacks of 2021 were hit with ransom demands totaling nearly $320 million.”

Systems development lifecycle (SDLC) with the Qlik Active Intelligence Platform - Part 1

Agent Remote Execution and Automation

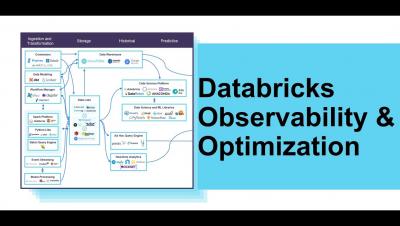

Databricks Monitoring, Observability, Optimization and Tuning

10 Key Operational Metrics to Track for Better Business Results

How Weidert Group Made a +$26k Pivot for One Client with the Help of Databox

What Is the Difference Between AWS Redshift and RDS?

Trends for 2022: How is data management evolving?

We all know the world is changing in profound ways. In the last few years, we’ve seen businesses, teams, and people all adapting, showing incredible resilience to keep moving forward despite the headwinds. To shed some light on what to expect in 2022 and beyond, let’s look at five major trends with regard to data. We’ve been watching these particular data trends since before the pandemic and have seen them gain steam across sectors in the post-pandemic world.

Loading Hacker News Into Snowflake

Hello World - Spaces API

Transformative Budgeting and Planning: A CFO's Guide

Operational Reporting Trends Report

How to Write a Great Financial Report? Tips and Best Practices

Will Data Mesh, Data Fabric, or the Data Lakehouse Dethrone the Data Warehouse?

It feels like a holy war is brewing in data management. At the heart of these rumblings is something that may seem sacrilege to many data architects: the days of the traditional data warehouse are numbered. For good reason. As data volumes continue to grow exponentially, the industry is united in recognizing we need a faster, more agile way to leverage data to unearth insights and drive actions. But that’s about all the industry agrees on.

Talend drives data solutions for global automotive supplier

When you take your car in for a repair, it’s almost inevitable that the mechanic will identify additional problems you didn’t realize you had. But there’s a positive flip side to that coin: sometimes when you solve one problem, you end up unexpectedly creating solutions for other challenges. That was the case for this global automotive supplier. In 2019, this automotive supplier set a goal and created a roadmap to integrate its master data.

How to build a data championship team to solve business problems

Sometimes it can feel like you’re stranded on a data island, scratching “SOS” in the sand in hopes of catching the eye of anyone who can rescue you. Companies everywhere are facing an explosion of data — with more data sources, more shadow IT, more people demanding access, and a growing number of business problems that can only be solved with data. As your company’s data leader, every one of those problems lands on you.

The Power and Possibility of Intentionality

In the latest installment of the EMEA Influential Women in Data webinar series, we welcomed Shirley Collie, Chief Health Analytics Actuary at Discovery Health, to discuss everything from how the pandemic has impacted working, to the opportunities within data, and the importance of intentionality.

Install MongoDB on Ubuntu: 5 Easy Steps

Do you want to install MongoDB on Ubuntu? Are you struggling to find an in-depth guide to help you set up your MongoDB database on your Ubuntu installation? If yes, then you’ve landed at the right place! Follow our easy step-by-step guide to seamlessly install and set up your MongoDB database on any Ubuntu and Linux-powered system! This blog aims at making the installation process as smooth as possible!

#40 ServiceNow's Vijay Kotu on the Power of Micro Decisions and Aligning Data to Business

Acquisition Reports In Google Analytics: Everything You Need to Know

How to build data platforms using the modern data stack

Learn how data consultancy Untitled Firm effortlessly connects to its customer data and creates powerful analytic applications – using Powered by Fivetran.

Global Trends Report from insightsoftware and Hanover Research Reveals Nearly 90% of Finance Professionals Struggle With Operational Reporting

Nearly one in three financial reports are manually produced. Many decision-makers spend hours on recurring reports, which creates inefficiencies and costs companies tens of thousands per team member. RALEIGH, N.C. – February 23, 2022 – insightsoftware, a global provider of financial reporting and performance management solutions for the Office of the CFO, today announced The Operational Reporting Global Trends Report.

Cap Table Management 101 + Downloadable Excel Template

A capitalization table, more commonly referred to as a “cap table,” provides a detailed record of the ownership stakes held by various investors, employees, and others who own shares in your company. The cap table documents who owns what, when it was acquired, what conditions may apply to ownership of specific shares, and more.
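As a rough illustration of the record-keeping described above, a cap table can be modeled as a list of holdings, each noting who owns the stake, how many shares, when it was acquired, and any conditions. The sketch below is a minimal example under stated assumptions, not a description of any particular cap table product: the `Holding` class, the `ownership_percentages` helper, and all holder names and share counts are hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Holding:
    """One row of a simplified, hypothetical cap table."""
    holder: str           # who owns the stake
    shares: int           # how many shares they hold
    acquired: date        # when the stake was acquired
    conditions: str = ""  # free-text conditions, e.g. vesting terms


def ownership_percentages(holdings):
    """Return each holder's percentage of all shares in the table."""
    total = sum(h.shares for h in holdings)
    if total == 0:
        return {}
    per_holder = {}
    for h in holdings:
        # A holder may appear in several rows; add their shares together.
        per_holder[h.holder] = per_holder.get(h.holder, 0) + h.shares
    return {name: 100 * shares / total for name, shares in per_holder.items()}


# Hypothetical example data, for illustration only.
cap_table = [
    Holding("Founder A", 600_000, date(2020, 1, 15)),
    Holding("Investor B", 300_000, date(2021, 6, 1), "preferred"),
    Holding("Employee C", 100_000, date(2021, 9, 1), "4-year vesting"),
]

percentages = ownership_percentages(cap_table)
print(percentages)
```

A real cap table would also track share classes, option pools, and dilution across funding rounds; this sketch only covers the basic "who owns what" view.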

Cloudera: Enabling the Cloud-Native, Data-Driven Techco

The telecommunications industry has been doing well since the pandemic started (not that many would notice). Revenues have remained relatively stable, while consumption has gone up, as virtual engagement has become the primary mode of operations for many businesses (and families!). In the meantime, digital transformation has been accelerating both as a means to respond to the pandemic, and as a mechanism to drive costs down further, allowing for margin growth.

How Hyperautomation Can Remove the Obstacles to Your Digital Transformation

For almost a decade now, global business leaders have heralded the beginning of the Fourth Industrial Revolution, which refers to how technologies like AI, robotics, IoT, autonomous vehicles and computer vision are blurring the lines between the physical, digital, and biological spheres. Industry 4.0 has paved the way for transformative changes in business, unleashing advances in business process automation in the front and back office, driving unprecedented productivity and growth.

Cloud Management for the Modern Workload

The road to the data-driven enterprise is not for the faint of heart. The continuous waves of data pounding into ever-complex hybrid multicloud environments only compound the ongoing challenges of management, governance, security, skills, and rising costs, to name a few. But Hitachi Vantara has developed a path forward that combines cloud-ready infrastructure, cloud consulting and managed services to optimize applications for resiliency and performance, and automated dataops innovations.

Enterprise Hybrid-Cloud Stack: Consistent Kubernetes Experience Across Multicloud

Organizations today face challenges from rapidly changing markets, new technologies, and the need to build new modern apps running in a multicloud environment. For this reason, business leaders are demanding faster delivery of new applications, services, and insight, requiring greater agility and efficiency from IT. Enterprises, rightly so, are investing in modernizing their on-premises infrastructure with increased use of the cloud.

Building a Flexible Hybrid Cloud for Today and Tomorrow

Although many enterprises are at varying stages in their cloud journeys, most are adopting distributed mixes of on-premises and public cloud environments in order to maintain certain data and applications close by, while making others more accessible and available online. With such distributed cloud networks, core tenets of the enterprise, such as management, scalability and security, become increasingly challenging. There is a path forward, however.

What is Integrate.io?

Setting up the Modern Data Stack from Scratch

How Can Small Business Owners Leverage Location-Based Marketing? 10 Tips and Examples

DORA metrics with the Humanitec IDP

We are happy to announce our latest addition of out-of-the-box analytics support for software lifecycle DevOps tools: Welcome to the Humanitec Insights connector! Humanitec is the Internal Developer Platform (IDP) that does the heavy lifting of Role-based access control (RBAC), Infrastructure Orchestration, Configuration Management and more. Humanitec’s API platform enables everyone to self-serve infrastructure and operate apps independently.

Automating MLOps for Deep Learning: How to Operationalize DL With Minimal Effort

Operationalizing AI pipelines is notoriously complex. For deep learning applications, the challenge is even greater, due to the complexities of the types of data involved. Without a holistic view of the pipeline, operationalization can take months, and will require many data science and engineering resources. In this blog post, I'll show you how to move deep learning pipelines from the research environment to production, with minimal effort and without a single line of code.

Introducing Apache Iceberg in Cloudera Data Platform

Over the past decade, the successful deployment of large scale data platforms at our customers has acted as a big data flywheel driving demand to bring in even more data, apply more sophisticated analytics, and on-board many new data practitioners from business analysts to data scientists. This unprecedented level of big data workloads hasn’t come without its fair share of challenges.

Data Classification Now Available in Public Preview

Organizations trust Snowflake with their sensitive data, such as their customers’ personal information. Ensuring that this information is governed properly is critical. First, organizations must know what data they have, where it is, and who has access to it. Data classification helps organizations solve this challenge.

Unlocking New Revenue Models in the Data Cloud

Today’s applications run on data. Customers value applications not only for the functionality they provide, but also for the data itself. It may sound obvious, but without data, apps would provide little to no value for customers. And the data contained in these applications can often provide value beyond what the app itself delivers. This begs the question: Could your customers be getting more value out of your application data?

How to use the BigQuery command-line tool

Lenovo: Improving customer segmentation with real-time analytics

10 Digital Marketing Metrics Your Business Pays Too Much Attention To (And Which Ones Actually Matter)

How to Write a Monthly Business Report? 5 Report Examples and Templates

Streaming data into BigQuery using Storage Write API

BigQuery is a serverless, highly scalable, and cost-effective data warehouse that customers love. Similarly, Dataflow is a serverless, horizontally and vertically scaling platform for large scale data processing. Many users use both these products in conjunction to get timely analytics from the immense volume of data a modern enterprise generates.

The 5 Types of Data Processing

Why is AWS Redshift Used? Integrate.io Has the Answer

Snowpark Explained In Under 2 Minutes

Qlik Lineage Connectors Brief Overview and Use Case

8 Ways to Choose the Right Facebook Ad Objectives for Your Agency or SME

Top 3 Reasons Spreadsheets Miss the Mark for Cap Table Management

Cap tables are a valuable tool for a close look at the equity capitalization within your organization. But relying on static spreadsheets makes it difficult to gain a comprehensive, real-time view of your capitalization structure. Sifting through spreadsheets manually and reconciling disconnected systems are both time-consuming and cumbersome.

Make Your AWS Data Lake Deliver with ChaosSearch (Webinar Highlights)

Loading Data to Redshift: Five Options and One Solution

Upgrade Hortonworks Data Platform (HDP) to Cloudera Data Platform (CDP) Private Cloud Base

CDP Private Cloud Base is an on-premises version of Cloudera Data Platform (CDP). This new product combines the best of Cloudera Enterprise Data Hub and Hortonworks Data Platform Enterprise along with new features and enhancements across the stack. This unified distribution is a scalable and customizable platform where you can securely run many types of workloads. CDP is an easy, fast, and secure enterprise analytics and management platform with the following capabilities.

Connected Apps or Managed Apps: Which Model to Implement?

We recently wrote about the interest we’re seeing in connected applications that are built on Snowflake. Connected applications separate code and data such that the app provider creates and maintains the application code, while their customers manage their own data and provide their data platform for processing the application’s data. Some of our partners choose the connected application model because it has benefits for both customers and application providers.

Operationalizing Data Pipelines With Snowpark Stored Procedures, Now in Preview

Following the recent GA of Snowpark for our customers on AWS, we’re happy to announce that Snowpark Scala stored procedures are now available in preview to all customers on all clouds. Snowpark provides a language-integrated way to build and run data pipelines using powerful abstractions like DataFrames. With Snowpark, you write a client-side program to describe the pipeline you want to run, and all of the heavy lifting is pushed right into Snowflake’s elastic compute engine.

Looking Back: To All the Reports I've Generated

This post is going to be a bit of a step back into the past. As Mork from Ork would say: “nanu nanu.”

Looking Back: Expanding the Data Analytics Universe

Here’s one of the most memorable quotes I have heard from a customer here in Asia: “Every time they tell me it’s ‘not in the universe’, I feel like mine is collapsing.”

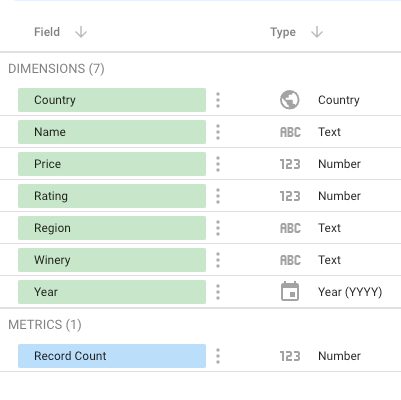

How to get data from Keboola to Google Data Studio?

How Monte Carlo Built A Data Reliability Platform On Snowflake

West Midlands Police Force | Faster Data, Safer Streets

Trends that will dominate analytics & BI in 2022

Fully managed ELT, DataOps and more trends that will change the way we use data this year.

Ecommerce Analytics 101: How to Drive More Online Sales With Data

Lenses 5.0: The developer experience for mass Kafka adoption

Kafka is a ubiquitous component of a modern data platform. It has acted as the buffer, landing zone, and pipeline to integrate your data to drive analytics, or maybe surface after a few hops to a business service. More recently, though, it has become the backbone for new digital services with consumer-facing applications that process live off the stream. As such, Kafka is being adopted by dozens (if not hundreds) of software and data engineering teams in your organization.

A visual guide to transformations: The Salesforce data model

Analysis-ready data models are built using sequences of transformations. Here's an example using Fivetran’s data model for Salesforce.

6 SAP companies driving business results with BigQuery

Digital technology promises transformative results. Yet, it’s not uncommon to encounter potholes and speed bumps along the way. One area that frequently trips up businesses is putting data into action. It can be extraordinarily difficult to take advantage of the right data at exactly the right time — in real time — to drive decision-making. For SAP customers wanting to maximize the value of their data, Google Cloud offers a number of capabilities.

Executing Data Integration on Amazon Redshift

6 steps towards healthier data

The value of healthy data is obvious. But how do you build that practice in your own business? The difference between people who live a healthy lifestyle and those who don’t isn’t whether they know how to be healthier; it’s whether or not they prioritize diet, sleep, and exercise in their daily life. The same is true for your data: if you don’t have the infrastructure that supports your customer 360 initiatives, those initiatives become moot.

Of Muffins and Machine Learning Models

While it is a little dated, one amusing example that has been the source of countless internet memes is the famous “is this a chihuahua or a muffin?” classification problem.

Figure 01: Is this a chihuahua or a muffin?

In this example, the Machine Learning (ML) model struggles to differentiate between a chihuahua and a muffin.

Snowpark For Python In 2 Minutes

The Modern Data Stack Outlook for 2022

4 Things You Can Learn About Your Brand's Strength by Analyzing Direct Traffic in Google Analytics

Can SQL be a library language?

The time has come for the open-source software revolution to reach SQL.

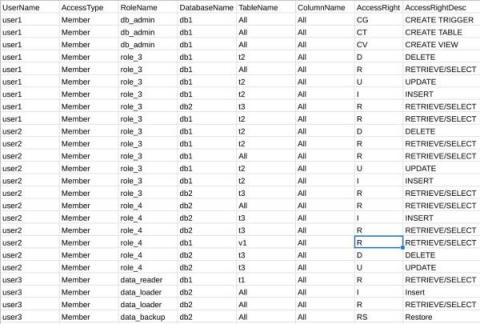

Speed up your Teradata migration with the BigQuery Permission Mapper tool

During a Teradata migration to BigQuery, one complex and time-consuming process is migrating Teradata users and their permissions to the respective ones in GCP. This mapping process requires admin and security teams to manually analyze, compare, and match hundreds to thousands of Teradata user permissions to BigQuery IAM permissions. We already described this manual process for some common data access patterns in our earlier blog post.

Transformative Budgeting and Planning: Five Factors to Consider

Budgeting is one of those essential processes in which every business must engage. It’s critical to have a meaningful financial plan in place, to have realistic targets to achieve. Unfortunately, traditional models for financial planning and budgeting are increasingly strained as businesses strive to cope with change. Many are seeking leaner, more agile budgeting and planning options.

8 Benefits of Setting Up a Data Warehouse in AWS Redshift

New from Talend Data Inventory: Create data APIs in minutes

It’s official — Self-Service Data APIs are available as a new feature of Data Inventory! Highly skilled and less-technical users can now make their datasets available to applications and partners via APIs in just a few clicks. Self-Service Data APIs empower every permissioned employee to leverage data across the business to generate valuable insights or even extend data availability beyond the organization to trusted partners.

Piano Brings Advanced Analytics to Customer-Centric Organizations with Snowflake

Piano is a leading provider of software and services that help organizations better understand their audiences across digital channels. With these insights in hand, Piano customers can intelligently serve audiences with personalized experiences that keep them engaged and drive revenue, all through a single unified platform.

When Do You Need Data Observability?

Almost every organization is a data organization. Yet, as data proliferates, data ecosystems have become increasingly complex, making it harder for organizations to control and prevent data quality issues from occurring. Data Observability gives organizations the ability to monitor data usage throughout the data ecosystem.

SaaS in 60 - KPI background Color (other KPI improvements)

Thinking beyond the Data Warehouse, Data Lake and Data Lakehouse

Top three requirements for self-service analytics according to Harvard Business Review

Being data-driven is no longer optional. With increased digital dexterity among customers and fast-changing market conditions and disruptions, organizations have entered the defining decade of data. This new era is characterized in part by the need to put live data directly into the hands of frontline decision-makers with self-service analytics.

How Healthy is Your Sales Pipeline? 8 Strategies to Make It Stronger

Connect to your customers' data using a Connect Card link

Learn the 6 steps to easily and securely onboard data from your customers, without ever touching their login credentials. It’s as easy as sending an email.

To user-friendly SQL with love from BigQuery

Thirty-five years ago, SQL-86, the first SQL standard, came into our world, published as an ANSI standard in 1986 and adopted by the International Organization for Standardization (ISO) in 1987. On this Valentine’s Day, we, in BigQuery, reaffirm our love and commitment to user-friendly SQL through a whole slew of new SQL features that we’re pleased to share with you, our beloved BigQuery users.

Examining the Skills Most in Demand by Tax Teams: The Merger of Tech and Finance

Finance teams have played a leading role in the adoption of technology to transform previously inefficient manual or spreadsheet-based processes. While investment in tax and transfer software has tended to lag that in core finance systems, adoption is maturing and pressure from the office of the CFO to implement digital tools is beginning to grow.

A Beginner's Guide to Amazon Redshift

Integrate.io Now Has a New NetSuite Connector!

Make the leap to Hybrid with Cloudera Data Engineering

Note: This is part 2 of the Make the Leap New Year’s Resolution series. For part 1 please go here. When we introduced Cloudera Data Engineering (CDE) in the Public Cloud in 2020, it was the culmination of many years of working alongside companies as they deployed Apache Spark-based ETL workloads at scale.

Founder's Guide to Setting Up a Data Analytics Foundation

How to Present Qualitative Data in a Business Report? A Step-By-Step Guide

10 Ways to Maximize Your Amazon Redshift Experience

Top 9 Machine Learning Events for 2022

Getting Started with Machine Learning

In recent years, Ethical AI has become an area of increased importance to organisations. Advances in the development and application of Machine Learning (ML) and Deep Learning (DL) algorithms require greater care to ensure that the ethics embedded in previous rule-based systems are not lost. This has led to Ethical AI being an increasingly popular search term and the subject of many industry analyst reports and papers.

The Year of the Forever Skills

During the information age, and throughout the 4th industrial revolution, technology, data and information were in abundance. But, soon came the realization that technology and access to all of the data and information, by itself, was not the solution.

Episode 3: How telematics leader Geotab powers innovation with BigQuery

Experiment Management Best Practices

Hevo Enables Lovebox to Gain Deeper Customer Insights

High Shopping Cart Abandonment Rate: Causes and Potential Solutions

It's Not Just About Customer Retention, it's About Customer Loyalty

It’s no secret that it’s much cheaper to retain a customer than to get a new one: 5 times cheaper, according to Forbes. The paradigm, however, seems to be changing: retaining is not enough when there are a lot of easily reachable competitors out there. You need to go one step further and make customers loyal.

Top 7 AWS Redshift ETL Tools

Top 7 Virtual Salesforce Conferences And Events For 2022

How to break down silos and free your data

As a modern, data-driven organization, you are likely pulling data from a multitude of diverse sources. There’s consumer data from marketing programs, CRM, and point of sale systems, plus financial data from accounting software and banking services. Finally, there is product data from user logs and web applications. With so much data pouring in every day, it feels like you should have everything you need to answer any question that could arise. And yet, so many times you don’t.

Announcing the GA of Cloudera DataFlow for the Public Cloud on Microsoft Azure

After the launch of Cloudera DataFlow for the Public Cloud (CDF-PC) on AWS a few months ago, we are thrilled to announce that CDF-PC is now generally available on Microsoft Azure, allowing NiFi users on Azure to run their data flows in a cloud-native runtime. With CDF-PC, NiFi users can import their existing data flows into a central catalog from where they can be deployed to a Kubernetes-based runtime through a simple flow deployment wizard or with a single CLI command.

How Data & AI Can Help Make Utility Line Inspections Safer

Electricity is fundamental to our society. As climate change becomes more severe and demand for clean energy increases, the future is the electrification of everything and along with it, the need for reliable energy. The U.S. infrastructure spans over a vast 200,000 miles and inspecting all of it is a time-consuming and high-risk process that often calls for hanging from helicopters or climbing tall towers. It is inefficient, costly, and dangerous.

The 7 Ts of Product-Led Transformation

The Data Chief LIVE: Better for everyone: How to battle bias in AI

What is a data mart?

Data marts are miniature, specialized data warehouses.

Analytics For Small Business: Here's Why SMEs Must Adopt It

Unified data and ML: 5 ways to use BigQuery and Vertex AI together

Are you storing your data in BigQuery and interested in using that data to train and deploy models? Or maybe you’re already building ML workflows in Vertex AI, but looking to do more complex analysis of your model’s predictions? In this post, we’ll show you five integrations between Vertex AI and BigQuery, so you can store and ingest your data; build, train and deploy your ML models; and manage models at scale with built-in MLOps, all within one platform. Let’s get started!

How Wayfair says yes with BigQuery - without breaking the bank

At Wayfair, we have several unique challenges relating to the scale of our product catalog, our global delivery network, and our position in a multi-sided marketplace that supports both our customers and suppliers. To give you a sense of our scale, we have a team of more than 3,000 engineers with tens of millions of customers. We supply more than 20 million items using more than 16,000 supplier partners.

Logi Analytics, an insightsoftware Company, Wins Dresner Advisory Services Award for Embedded Business Intelligence

2021 Technology Innovation Awards recognize top performers in Wisdom of Crowds® Thematic Market Studies. Raleigh, N.C., February 9, 2022 – Logi Analytics, an insightsoftware company, today announced it has been named a winner for Embedded Business Intelligence (BI) in the 2021 Technology Innovation Awards by Dresner Advisory Services.

Amazon Redshift to Snowflake Data Integration

8 Great Data Predictions for 2022

Heading into a new year filled with myriad crosscurrents, this much is certain: more organizations will find smarter ways to use data as they realize the benefits of digitally transforming their operations. We’re seeing this trend toward data-driven decision making already play out in different industries around the world as companies modernize their infrastructures.

Introducing Self-service data APIs - Fall '21 release

What's New in ThoughtSpot Analytics Cloud 8.0.0

Fivetran now available in Microsoft Azure Marketplace

Microsoft customers can discover, transact and deploy Fivetran’s fully managed and automated data pipelines in Azure and more.

How to Write a Great Business Consulting Report: Best Practices and Report Examples

Preventing Customer Churn with Continual, Snowflake, and dbt

In this article, we’ll take a deep dive into the customer churn/retention use case. This should contain everything needed to get started on the use case, and enterprising readers can also try this out for themselves in a free trial of Continual, following the customer churn example in the linked GitHub repository.

How to Set Up Amazon Redshift on AWS

Gartner Recognizes Cloudera in Critical Capabilities for Cloud Database Management Systems for Operational Use Cases

Cloudera has been recognized as a Visionary in the 2021 Gartner® Magic Quadrant™ for Cloud Database Management Systems (DBMS), and for the first time, CDP Operational Database (COD) was evaluated against the 12 critical capabilities for Operational Databases.

Supply Chains: Staying Ahead of the Unpredictable With an Analytics Data Pipeline

2021 demonstrated the precariousness of our global supply chains and the potential cost to business. The Suez Canal blockage held up around $9.6bn of trade each day, while the true impact of the pandemic won’t be known for years. Many of us have also felt it during our weekly shopping, in the disappointment when one of our favorite items is replaced by a cardboard cutout instead of the foodstuff itself.

The rise of the data analytics engineer

8 Google BigQuery Data Types: A Comprehensive Guide

Having a firm understanding of Google BigQuery Data types is necessary if you are to take full advantage of the warehousing tool’s on-demand offerings and capabilities. We at Hevo Data (Hevo is a unified data integration platform that helps customers bring data from 100s of sources to Google BigQuery in real-time without writing any code) often come across customers who are in the process of setting up their BigQuery Warehouse for analytics.

Unleashing the power of Java UDFs with Snowflake

8 Insights You Can Gain from Analyzing Your Exit Pages

11 Redshift Tips for Startups

Control Issues: Overcoming Departmental Territorialism to Increase Data Sharing

Here’s a scenario that might feel painfully familiar. Your marketing department captures customer leads, and passes them to the sales department. Marketing’s success is measured in part on the number and size of deals that result. But a squabble breaks out over how the sales department handles, nurtures, and attributes those conversions. Result: Neither department really wants to share their data.

Steps to Install Kafka on Ubuntu 20.04: 8 Easy Steps

Apache Kafka is a distributed message broker designed to handle large volumes of real-time data efficiently. Unlike traditional brokers like ActiveMQ and RabbitMQ, Kafka runs as a cluster of one or more servers, which makes it highly scalable. Due to this distributed nature, it has inbuilt fault tolerance while delivering higher throughput than its counterparts. This article will walk you through installing Kafka on Ubuntu 20.04 in 8 simple steps.

SaaS Pricing Model Considerations when Powered by Snowflake

Google Analytics User-ID Reports: Everything You Need to Know

What is a data lake?

Data lakes serve as central destinations for business data and offer users a platform to guide business decisions.

Ultimate Guide to Equity Compensation Management and Software

For small entrepreneurial businesses, equity compensation can be a very effective way to attract and retain highly talented employees. In a nutshell, equity compensation is defined as non-cash remuneration that takes the form of stock options, restricted shares, employee stock purchase plans, and other vehicles that provide employees with an equity stake in the company. Equity compensation may also apply to non-employee services provided by independent contractors, board members, or advisors.

How Change Data Capture Works

HBase to CDP Operational Database Migration Overview

This blog post provides an overview of the HBase to CDP Operational Database (COD) migration process. CDP Operational Database enables developers to quickly build future-proof applications that are architected to handle data evolution. It helps developers automate and simplify database management with capabilities like auto-scale and is fully integrated with Cloudera Data Platform (CDP).

Data Programmability with Snowpark API and Java UDFs

How to upgrade from HDP to CDP

Incorporating Privacy Enhancing Techniques in your Data Privacy Strategy

Competitive Benchmarking: What It is and How to Do It

Get started with Salesforce and Heroku

Gooooaaaallll: Winning at the data game

Sometimes the lifestyles of the rich and famous aren’t as glamorous as they seem at first glance. We all know that professional athletes can make incredible amounts of money. But by the age of 35, most pro athletes are already at the end of their prime earning years. Historically, a lot of them haven’t managed their money well — and they may even go bankrupt in retirement.

Failover Across Clouds

Healthy data, healthy business with Talend

Google Analytics Enhanced Ecommerce Reporting: A Step-by-Step Guide

What is data transformation?

Learn why data transformation is essential to data modeling and bringing your organization to the forefront of data literacy.

Choosing the Right Evaluation Metric

Is your model ready for production? It depends on how it’s measured. And measuring it with the right metric can unlock even better performance. Evaluating model performance is a vital step in building effective machine learning models. As you get started on Continual and start building models, understanding evaluation metrics helps you productionize the best-performing model for your use case.

Budget Forecasting: What It Is and How To Do It

We often hear different terms used to describe forward-looking versions of a company’s financial statements. People frequently use these terms interchangeably, with some having a deeper understanding of the nuances in terminology than others. Forward-looking financial documents may include budgets, projections, forecasts, and pro forma financials.

Top 5 ETL Tools For Heroku

The Complete Guide to Using the Iguazio Feature Store with Azure ML - Part 4

Last time in this blog series, we provided an overview of how to leverage the Iguazio Feature Store with Azure ML in part 1. We built out a training workflow that leveraged Iguazio and Azure, trained several models via Azure's AutoML using the data from Iguazio's feature store in part 2. Finally, we downloaded the best models back to Iguazio and logged them using the experiment tracking hooks in part 3. In this final blog, we will.

Snowflake Unlocks Data Value While Safeguarding Privacy for Both Roku and ViacomCBS

In the past, data leaders had to manage a balancing act between data access and governance. Granting too much access meant opening up the business—and the privacy of consumers—to risk. But if you hold back data, you can’t deliver great experiences and value to customers. The Snowflake Media Data Cloud empowers companies to let go of the balancing act. They now have a single platform to store, govern, and share data while maintaining strict data governance.

Top Cloud Data Migration Challenges in 2022 and How to Fix Them

We recently sat down with Sandeep Uttamchandani, Chief Product Officer at Unravel, to discuss the top cloud data migration challenges in 2022. No question, the pace of data pipelines moving to the cloud is accelerating. But as we see more enterprises moving to the cloud, we also hear more stories about how migrations went off the rails. One report says that 90% of CIOs experience failure or disruption of data migration projects due to the complexity of moving from on-prem to the cloud. Here are Dr.

Experiment Manager Hands-on

New Fivetran Pricing Delivers Greater Accessibility to Customers

Unlimited resyncs, moving from credits to dollars, new pricing plan, and more.

Your Online Store Has High Traffic, but No Sales? 16 Potential Causes and Solutions

4 Key Flow Metrics for Agile Delivery Teams

Flow Engineering is the science of creating, visualizing, and optimizing the flow of value from your company to the customers. In the end, that is the million-dollar (likely more) challenge of most product companies: How do we create value in the form of products and services and ship those to our customers as quickly, sustainably, and frictionlessly as possible?

7 Ways To Reverse ETL

The Most Unique Snowflake

Okay, I admit, the title is a little click-baity, but it does hold some truth! I spent the holidays up in the mountains, and if you live in the northern hemisphere like me, you know that means that I spent the holidays either celebrating or cursing the snow. When I was a kid, during this time of year we would always do an art project making snowflakes. We would bust out the scissors, glue, paper, string, and glitter, and go to work.

Hitachi Receives Double Recognition for IoT Leadership

More than a decade into the Internet of Things (IoT) era, the immense potential of IoT is becoming real. We’re moving from proofs of concept and pilots to projects at scale. What’s become increasingly clear is the vast complexity of deploying IoT solutions at scale and the necessity of doing so to become a data-driven business.

Your Digital Transformation Program is Wasting Your Money

How to use descriptive analytics to drive company growth?

O'Reilly Report | What is Data Observability?

Disclaimer: This post is a preview of the O'Reilly Report. If you wish to directly download it, click here -