April 2022

Interview With Director of Data Science, Michael Chang

For our latest expert interview on our blog, we’ve welcomed the Director of Data Science and Machine Learning at Included, Michael Chang. Michael helps measure and optimize workforce diversity and inclusion efforts through data. Prior to Included, Michael worked in various data capacities at Facebook, Teach for America, Interactive Corp, and eBay. Michael also enjoys teaching and is an adjunct instructor for data science at UCLA Extension and Harvard FAS.

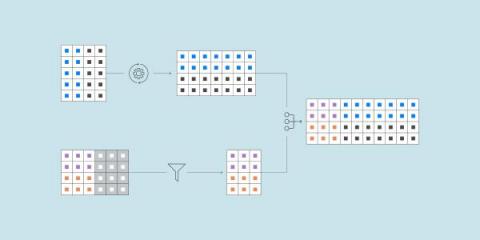

Data Blending: 4 Use Case Examples to Improve Your Marketing Reporting Process

A Shopify Tech Stack for Building a High-Traffic E-Commerce Store

Unraveling AI Entrepreneurs #7

Unraveling AI Entrepreneurs #6

Unraveling AI Entrepreneurs #8

Unraveling AI Entrepreneurs #9

Data Legends Podcast: Differentiate or Drown: Managing Modern-Day Data

The Value of Relational Analytics + Search in the Same Platform, According to Transeo

The Secrets Behind Personalization: The Customer 360 view

Three Ways Active Intelligence Can Support the CFO

Finance has been at the forefront of enterprise analytics for decades. Over the years, these analytics have evolved from reactive, descriptive analytics related to financial performance, treasury holdings, and inventory management to predictive and prescriptive analytics for risk, credit, and financial business modeling.

Store & Access Information at Scale: How Drawbacks Lead to Innovation

The Ultimate Guide to Sales Analysis Reports

Snowflake Live: Unstructured Data Support in Snowpark

How To Scale Threat Detection & Response With Snowflake & Securonix

Data Legends Podcast: Musings on Data Lakes, Computer Science, AI & More

Data Legends Podcast Episode 2, Amr Awadallah

Data Legends Podcast: Making Sense of Data Quality Amongst Current Seasonality & Uncertainty

How companies can turn data into their most valuable asset?

SEMrush vs. Ahrefs: Which SEO Tool is Better?

4 Years Ago, the GDPR Changed Everything. Now What?

The EU’s General Data Protection Regulation is approaching the fourth anniversary of its implementation in May 2018. Since its inception, it has been hailed as a groundbreaking framework for making users’ rights on the Internet a human right. Its impact in many other markets has been undeniable, and it has truly affected how the Internet works, even outside EU borders.

The Ultimate Guide to Report Types in Salesforce: 4 Reports Every Sales Pro Should Use

Fivetran gives analysts a new way to transform data

With integrated scheduling and data lineage, Fivetran now enables data orchestration from a single platform with minimal configuration and code.

A Window Into the Future of Data in Motion and What It Means for Businesses

Modern businesses have vast amounts of data at their fingertips and are acutely aware of how enterprise data strategies positively impact business outcomes. Despite this, only a handful of organisations interact with all stages of the data life cycle process to truly distill information that distinguishes future-ready businesses from the rest.

Power to the people - creating trust in data with collaborative governance

Today’s enterprise IT organizations are experiencing a massive upheaval due to pressure from employee forces. It’s a familiar story. Just think of the turmoil caused by the dawning of the bring-your-own-device (BYOD) era, with employees demanding to use their beloved personal mobile phones for work.

Pentaho 9.3 Suite Drives Modern Business Intelligence Across the Hybrid Cloud

Hitachi Vantara’s latest improvements to Pentaho make it significantly easier for organizations to move data workloads from on premises to the cloud and back again. The new Pentaho 9.3 Long-Term Support (LTS), part of Hitachi’s Lumada portfolio, offers a cloud deployment option that we anticipate will be a critical accelerant of data-driven transformation.

The Ultimate Guide to E-commerce Tech Stack

Why SLAs Are Critical to Ensuring Data Reliability

As far back as the 1920s, Service Level Agreements (SLAs) were used to guarantee a certain level of service between two parties. Back then, it was the on-time delivery of printed AR reports. Today, SLAs define service standards such as uptime and support responsiveness to ensure reliability. The benefit of having an SLA in place is that it establishes trust at the start of new customer relationships and sets expectations.

insightsoftware Expands Jet Reports Integration with Microsoft 365

Project Management Report: 6 Best Practices for Writing One

How Your Organization Can Win in Today's Data Economy

The skyrocketing value of data has created a global supply and demand for data, data applications, and data services. This new data economy is powered by technologies that enable data access and sharing, including cloud platforms, exchanges, and marketplaces.

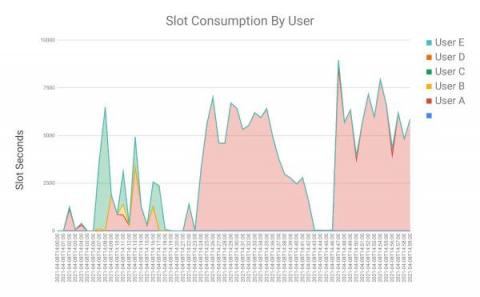

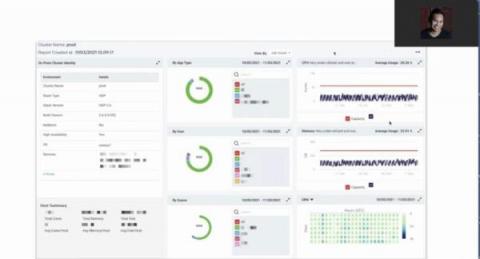

Monitor & analyze BigQuery performance using Information Schema

In the exponentially growing data warehousing space, it is very important to capture, process, and analyze the metadata and metrics of jobs and queries for auditing, tracking, performance tuning, capacity planning, and similar purposes. Historically, on-premises (on-prem) legacy data warehouse solutions have had mature methods of collecting and reporting performance insights via query log reports, workload repositories, and the like. However, all of this comes with an overhead cost in storage and CPU.

Overcome These 4 Common D365 F&SCM Challenges with Jet Reports

So, you’re working for a medium to large enterprise that uses Microsoft Dynamics 365 Finance & Supply Chain (D365 F&SCM) as its ERP system. You have multiple options for reporting and analysis available to you from Microsoft. But if your business is growing, you are probably looking to push beyond the out-of-the-box capabilities to develop your own custom analysis and meaningful data insights.

Winning with Analytics: The Transformation of Clinical Trials for Scientists

The end goal of clinical technology organizations in the US and abroad is to use modern technology to bring life-saving new treatments to fruition. Leaders in this sphere help generate the evidence and insights to help biotech, pharmaceutical, medical device, and diagnostic companies accelerate value, minimize risk, and optimize outcomes. Life sciences clients recognize that technology is the answer to inefficiencies and delays in delivering new treatments to the public.

Beyond Observability for the Modern Data Stack

The term “observability” means many things to many people. A lot of energy has been spent—particularly among vendors offering an observability solution—in trying to define what the term means in one context or another. But instead of getting bogged down in the “what” of observability, I think it’s more valuable to address the “why.” What are we trying to accomplish with observability? What is the end goal?

Great Financial Storytelling Begins with Great Tech

Finance professionals know that data matters, but stories convey truth in ways that mere numbers simply cannot. Those who work in finance may describe themselves as “numbers people.” They have a natural affinity for quantitative information, as well as a knack for drawing meaningful conclusions when presented with a collection of numerical figures. Even so, finance team members probably understand and retain information more readily when it’s presented in narrative form.

Data Observability Driven Development | The perfect analogy for beginners

When explaining what Data Observability Driven Development (DODD) is and why it should be a best practice in any data ecosystem, using food traceability as an analogy can be helpful. The purpose of food traceability is to be able to know exactly where food products or ingredients came from and what their state is at each moment in the supply chain. It is a standard practice in many countries, and it applies to almost every type of food product.

Automated ELT: A force multiplier for one-person data teams

To be effective, solo data analysts or engineers need to focus on refining algorithms and analyzing data, not building data pipelines.

What Are Embedded Data Visualization Tools?

Support Multiple Data Modeling Approaches with Snowflake

Since I joined Snowflake, I have been asked multiple times what data warehouse modeling approach Snowflake best supports. Well, the cool thing is that Snowflake supports multiple data modeling approaches equally. Turns out we have a few customers who have existing data warehouses built using a particular approach known as the Data Vault modeling approach, and they have decided to move into Snowflake. So the conversation often goes like this.

How E-Commerce Influences B2B Analytics in Your Data-Driven Enterprise

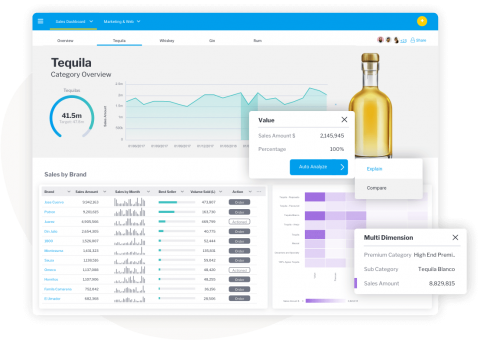

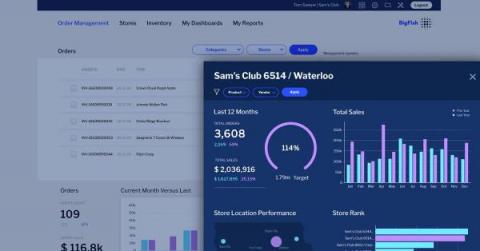

What's new in ThoughtSpot Analytics Cloud 8.2.0

Wondering how to add custom HTML styling to your chart headers and descriptions, or add conditional formatting to your KPI charts? See how in ThoughtSpot's 8.2.0.cl release!

To learn more, please visit https://www.thoughtspot.com/new-features/8.2.0-cloud

Americanas Gets Closer to Trusted Data with Soda

3 Use Cases for Embedded BI in 2022 to Enhance Your SaaS Product

Telco 5G Returns Will Come from Enterprise Data Solutions

Communications service providers (CSPs) are rethinking their approach to enterprise services in the era of advanced wireless connectivity and 5G networks, as well as with the continuing maturity of fibre and Software-Defined Wide Area Network (SD-WAN) portfolios.

How to Create and Use Reporting Snapshots in Salesforce: A Step By Step Guide

What's an E-Commerce Dashboard? & Why Does it Matter?

insightsoftware Acquires Legerity, Adding Cloud-Based Accounting Rules Offering for Complex Financial Transformation

Sales Pipeline Report: How to Build One, What to Include In It, Benefits, and Best Practices

What is ETL?

A brief explanation of how data integration continues to evolve.

Automatically extract TML definitions from tml/export

ThoughtSpot elements such as search, Liveboards, and data connections are all defined in a JSON-based metadata definition called ThoughtSpot Modeling Language, or TML. Recently, I blogged about how you can use Postman to access platform APIs to import/export TML as part of your devops processes; for example, to check in TML definitions and push to another environment via a continuous integration process. The TML export is pretty straightforward.

Webinar Recap: Functional strategies for migrating from Hadoop to AWS

In a recent webinar, Functional (& Funny) Strategies for Modern Data Architecture, we combined comedy and practical strategies for migrating from Hadoop to AWS. Unravel Co-Founder and CTO Shivnath Babu moderated a discussion with AWS Principal Architect, Global Specialty Practice, Dipankar Ghosal and WANdisco CTO Paul Scott-Murphy. Here are some of the key takeaways from the event.

Building vs. Buying Your Modern Data Stack: A Panel Discussion

One of the highlights of the DataOps Unleashed 2022 virtual conference was a roundtable panel discussion on building versus buying when it comes to your data stack. Build versus buy is a question for all layers of the enterprise infrastructure stack. But in the last five years — even in just the last year alone — it’s hard to think of a part of IT that has seen more dramatic change than that of the modern data stack.

Elevate Gives Retailers a Powerful New Tool for Managing Supply Chains

Why Google Cloud BigQuery for SAP Enterprises?

Automate the Creation of Data Streams

AstraZeneca: Building a collaborative culture between data leaders and business teams

The Sprint towards Digital Healthcare

The pandemic changed our healthcare behaviors. Planned hospital and doctor visits were reduced while telemedicine, for physical and mental health, increased. As healthcare providers and insurers/payers worked through massive amounts of new data, our health insurance practice was there to help.

Top 3 Automation Best Practices for Viewpoint Users

Construction is a vast and complex industry. As such, the simpler and more streamlined your processes are, the better. Complicated, manual tasks hamper construction professionals’ ability to conduct business by reducing efficiency and productivity. Despite the obvious benefits of modern automated workflows, a survey conducted by McKinsey Global Institute found construction is the second slowest adopter of digitization.

The Ultimate Shopify E-Commerce Tech Stack Guide

From the Ground Up: The Truth About Data Innovation

Data holds incredible untapped potential for Australian organisations across industries, regardless of individual business goals. All organisations are at different points in their data transformation journey, with some achieving success faster than others. To be successful, the use of data insights must become a central lifeforce throughout an organisation and not just reside within the confines of the IT team. More importantly, effective data strategies don’t stand still.

Making Sense of Data Quality Amongst Current Seasonality & Uncertainty

The New Breed: How to Think About Robots

You’ve heard the saying “if you do what you love, you’ll never work a day in your life,” right? Well, I hate to say it, but that’s me. I never dreamed that I would wind up in a field that combined all of my interests, but somehow that happened. Through my research at the MIT Media Lab I get to apply my legal and social sciences background to human-robot interaction. Which yes, does mean that I mostly get to play with robots all day.

Unraveling AI Entrepreneurs #5

Data Mesh, Data Stack, and the Holy Grail

Heureka Group: Empowering over 5,000 e-commerce shops with data insights and generating 450k EUR per year by enriching data

12 Best Metrics for Agencies to Include in Their Semrush Reports

MeDirect Bank: Thinking ahead for a seamless transition to the cloud

MeDirect Bank is a bank and financial services company based in Malta that provides services ranging from deposit accounts to mutual funds to wealth management. The company has evolved from its regional roots to become the third largest bank in Malta, with customers all over the world. And it’s done so by evolving with its customers’ needs; providing accessible, transparent services wherever customers are — physically or digitally.

Webinar Recap: Optimizing and Migrating Hadoop to Azure Databricks

The benefits of moving your on-prem Spark Hadoop environment to Databricks are undeniable. A recent Forrester Total Economic Impact (TEI) study reveals that deploying Databricks can pay for itself in less than six months, with a 417% ROI from cost savings and increased revenue & productivity over three years. But without the right methodology and tools, such modernization/migration can be a daunting task.

The Best Tools for Visualizing E-Commerce Data

Data Lake vs Data Warehouse: 7 Critical Differences

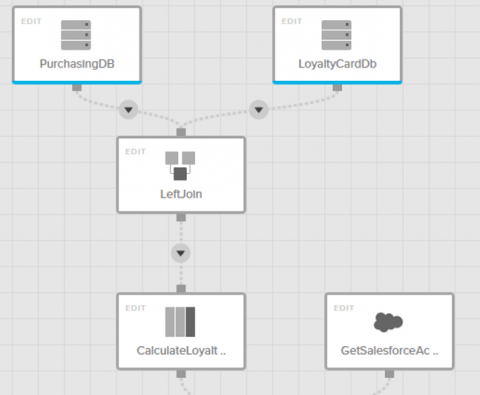

How To Simplify The ETL Code Process with Low-Code Tools

Top 3 Data and Analytics Trends to Prepare for in 2022

New Snowflake Features Released in March 2022

In March, Snowflake continued to enhance its capabilities around data programmability and data pipeline development, with the Snowpark API and stored procedures for Java now in public preview, schema detection now generally available, and the Snowflake SQL API generally available. In addition, Snowflake’s user interface, Snowsight, is generally available. Not to mention an expanded selection of new partners to choose from in Snowflake Data Marketplace.

Get Your Retail Plan in Shape: A 7-Step Regimen for Year-Round Selling

Once upon a time, the retail calendar centered itself on the Christmas season. Now, the retail surge is year-round. Not just the wave of traditional seasonal holidays from Valentine’s Day to the 4th of July, but also newer sales holidays, such as Cyber Monday, or even holidays created by some gigantic companies themselves, like Amazon’s Prime Day. Now, instead of a steady pace leading up to a frenzied December, retailers are in sprint mode all the time.

Automate your Reports on Google Sheets with Hevo Activate

Business users usually ask you to share business reports in spreadsheets. They are highly familiar with Sheets and prefer to receive their reports there. They assume that delivering reports in XLS format is quick and easy, but we understand the time and effort required to export reports to spreadsheets. Each time, you have to run queries on your centralized data in the warehouse and then export the results in XLS format. You may also need to edit and update the sheet regularly.

Estée Lauder: Transforming the retail experience during a pandemic

How to Improve Your Reporting Process: The Do's and Don'ts

5 Secrets to Understanding Value Shoppers

Data In Motion: NASA and Aurica

Some 300 million years ago, Earth had one continent called Pangea. Over millions of years, that vast single land mass broke up and drifted in different directions, creating the seven continents that exist today. Since the planet changed so dramatically over millennia, it raises an obvious question: How will it change in the future? The same forces, plate tectonics and continental drift, that broke up Pangea hundreds of millions of years ago still exert themselves.

AI Winter is coming. Get ready with Data Observability.

“Without clean data, or clean enough data, your data science is worthless.” (Michael Stonebraker, adjunct professor, MIT)

AI is one of the fastest-growing and most popular data-driven technologies in use. Nine in ten Fortune 1000 companies currently have ongoing investments in AI. So you may be wondering: how could there possibly be another AI winter?

Commerzbank | Unleashing Hidden Data Treasures for Customers

Modernizing the Analytics Data Pipeline

Build or Buy Embedded Analytics: What's the difference?

Cash Flow Forecasting for SaaS: 6 Best Practices

Automate Your Yardi Real Estate Data Collection and Management

From financial statements and signage to storage space, office floors, and land, real estate financial professionals manage many of their businesses’ most critical moving parts. And real estate is growing: the market is expected to reach $5388.87 billion by 2026 at a compound annual growth rate (CAGR) of 9.6%. When the time comes for month-end reporting, ERPs like Yardi manage and compartmentalize data with out-of-the-box reports.
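As a rough illustration of what a CAGR figure like the one above implies, here is a minimal sketch of the standard compound-growth formula. The 2026 target and the 9.6% rate come from the teaser; the four-year horizon is an assumption made purely for illustration, since the teaser does not state a base year.

```python
def future_value(base, rate, years):
    """Compound growth: base * (1 + rate) ** years."""
    return base * (1 + rate) ** years


def implied_base(target, rate, years):
    """Invert the compound-growth formula to recover the starting value."""
    return target / (1 + rate) ** years


TARGET_BILLION = 5388.87  # 2026 market size quoted in the teaser, in $ billions
CAGR = 0.096              # 9.6% growth rate quoted in the teaser
YEARS = 4                 # ASSUMPTION: horizon length is not given in the teaser

base = implied_base(TARGET_BILLION, CAGR, YEARS)
print(f"Implied starting market size: ${base:,.2f} billion")
print(f"Grown forward again: ${future_value(base, CAGR, YEARS):,.2f} billion")
```

Changing `YEARS` shows how sensitive the implied starting value is to the assumed horizon.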

Cloud vendor's MLOps or Open source?

If someone had told my 15-years-ago self that I’d become a DevOps engineer, I’d have scratched my head and asked them to repeat that. Back then, of course, applications were either maintained on a dedicated server or (sigh!) installed on end-user machines with little control or flexibility. Today, these paradigms are essentially obsolete; cloud computing is ubiquitous and successful.

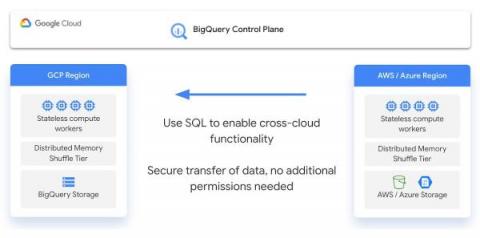

BigQuery Omni innovations enhance customer experience to combine data with cross cloud analytics

IT leaders pick different clouds for many reasons, but the rest of the company shouldn’t be left to navigate the complexity of those decisions. For data analysts, that complexity is most immediately felt when navigating between data silos. Google Cloud has invested deeply in helping customers break down these barriers inherent in a disparate data stack. Back in October 2021, we launched BigQuery Omni to help data analysts access and query data across the barriers of multi-cloud environments.

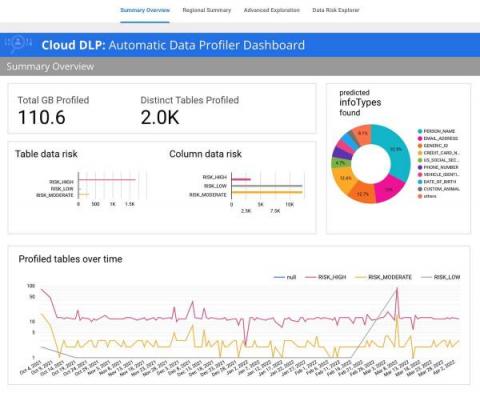

Automatic data risk management for BigQuery using DLP

Protecting sensitive data and preventing unintended data exposure is critical for businesses. However, many organizations lack the tools to stay on top of where sensitive data resides across their enterprise. It’s particularly concerning when sensitive data shows up in unexpected places – for example, in logs that services generate, when customers inadvertently send it in a customer support chat, or when managing unstructured analytical workloads.

What is data replication and why is it important?

Your company needs data replication for analytics, better performance and disaster recovery.

Business Intelligence on the Cloud Data Platform: Approaches to Schemas

Why You Need a Fully Automated Data Pipeline

Unstructured Data Now Generally Available in Snowflake, Processing with Snowpark in Public Preview

We’re excited to announce the general availability of the unstructured data management functionality in Snowflake. We launched public preview of this functionality in September 2021, and since then we have seen adoption by customers across industries for a variety of use cases. These use cases include storing and securing call center recordings, securely sharing PDF documents in Snowflake Data Marketplace, storing medical images and extracting data from them, and many more.

MongoDB vs. PostgreSQL: Detailed Comparison of Database Structures

Analyzing Unstructured Data With Snowflake Explained In 90 Seconds

Helping I.T. take flight - Mark Settle

Building and Managing the Modern Datastore: The Data Lakehouse

GDPR Prevails: Google Analytics Running into Trouble in the EU?

Almost 6 years ago, the European Union’s General Data Protection Regulation (better known by its acronym, GDPR) changed the world of personal data protection forever. The groundbreaking regulation has since been replicated, albeit with changes, in over a dozen other markets.

24 Facebook Ads Statistics Marketers Need to Know in 2022

Streamline Reporting During (and After) Your Deltek Vision to Vantagepoint Transition

Deltek Vantagepoint is the newly branded, freshly reimagined next version of Deltek Vision built specifically for professional services organizations. Deltek has set a rough deadline for Vision customers to upgrade to Vantagepoint. While this date may be delayed based on rate of adoption, many Deltek users have either already upgraded or are starting to consider the impact of the change, and how to best approach it.

The Repurchase Rate: How to Calculate It and Why it Matters for E-commerce

Becoming an AI-first Organization

The term “AI-first” has received its share of attention lately, especially in the boardroom where strategies to gain a competitive advantage are always welcome. But before a company embarks on an AI-first strategy, it pays to understand what it is and how it will transform the organization.

Integrate.io Achieves Google Cloud Ready - BigQuery Designation!

Build Robust and Efficient Analytics Engine with Hevo's Data Transformation

In today’s digital age, robust, fast data analytics is essential for your organization’s growth and success. The faster you deliver analytics-ready data to your analysts, the faster they can analyze it and derive insights. Even if you have adopted the ELT process with EL data pipelines to load data quickly into the warehouse, your team may still face inefficient and delayed analysis.

The Modern Data Stack Ecosystem: Spring 2022 Edition

Welcome to the Spring 2022 Edition of the Modern Data Stack Ecosystem. In this article, we’ll provide an in-depth look at the Modern Data Stack (MDS) ecosystem, updated from our Fall 2021 edition. We also highly recommend our article, The Future of the Modern Data Stack, to anyone who is new to the MDS and wants to learn about its history.

What is Semrush? A Complete Guide to SEMrush Features, Metrics, and Reporting Dashboards

Switching to an annual plan can really pay off

Don’t take our word for it – hear what our customers have to say.

New Pathways to New Insights

To this point, AI has been applied to augment analytics in a somewhat bifurcated fashion. On one hand, we have seen natural language support the business consumer that requires simple answers to known questions, helping them quickly take action. And, on the other, AI helps content authors and BI developers auto-suggest charts and automate data preparation, improving efficiency and reducing manual workloads. But, there’s a gap, and the value is huge.

How to Conduct a Thorough Landing Page Analysis | Data Snack #14 | Hubspot Marketing & Databox

SaaS in 60 - Insight Advisor Analysis Types & Spanish Support for Insight Advisor Chat

Unraveling AI Entrepreneurs #4

Unraveling AI Entrepreneurs #3

Getting data to the front lines of your business - Scott Holden, CMO of ThoughtSpot

insightsoftware Launches Angles Operational Reporting for NetSuite and Deltek

What Does Embedded BI Really Mean? OEM Reporting Tools Defined

How to Write a Great Business Report Conclusion: Everything You Need to Know

What is data governance?

How policies, processes, roles and technology come together to ensure data integrity, data quality and access control.

How to Identify and Analyze Your Most Successful Landing Pages

Do You Have What it Takes to Manage the Flood of Data?

In 2010, Eric Schmidt, then CEO of Google, made the startling claim that every two days we humans generate as much information as we did from the dawn of civilization up until 2003, or about five exabytes of data. At the time, we had TB disk drives and could only imagine an exabyte, which is one million terabytes. The next increments from the TB are the petabyte, then the exabyte, and then the zettabyte, which is 1,000 exabytes. By the end of 2010, the world had crossed the zettabyte threshold.
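The unit arithmetic in this teaser is easy to check with a few lines of Python. This is a minimal sketch that assumes decimal (SI) units, where each step up the ladder is a factor of 1,000; the `convert` helper is hypothetical and written only for this illustration.

```python
# Decimal (SI) storage units in ascending order; each is 1,000x the previous.
UNITS = ["TB", "PB", "EB", "ZB"]


def convert(value, from_unit, to_unit):
    """Convert a quantity between the units listed in UNITS."""
    steps = UNITS.index(from_unit) - UNITS.index(to_unit)
    factor = 1000 ** abs(steps)
    # Moving to a smaller unit multiplies; moving to a larger unit divides.
    return value * factor if steps >= 0 else value / factor


print(convert(1, "EB", "TB"))  # 1000000 -> one exabyte is one million terabytes
print(convert(1, "ZB", "EB"))  # 1000    -> one zettabyte is 1,000 exabytes
print(convert(5, "EB", "ZB"))  # 0.005   -> five exabytes as a fraction of a zettabyte
```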

Net Revenue Formula: What Startups Should Know

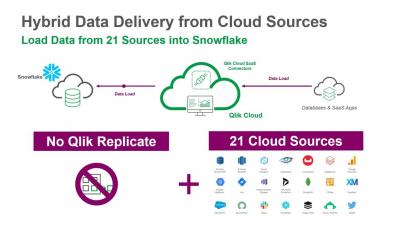

Hybrid Data Delivery (Data Services) - "Cloud Data Sources" - Complete Walk-through

Assessing the Validity and Relevance of Data To Discover True, Actionable Information and Insights

In a previous article, we talked about the lost art of questioning and its importance when working with data and information to find actionable insights. In this article, we will expand on this topic and explain how questioning differs depending on which stage you are at in the process of transforming data and information into insights.

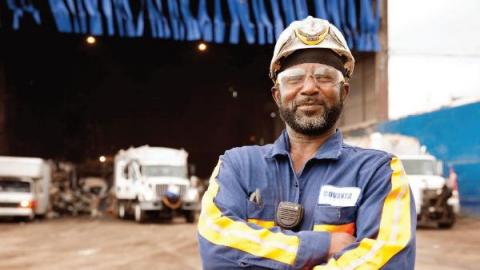

At Covanta, data health improves the business and the planet

At Talend, we tend to describe poorly organized, unhealthy data as “digital landfills.” But we don’t often talk about actual landfills. That’s right, the ones filled with trash. As anyone watching real estate prices will know, land is a finite resource. It’s crazy to think that we’re still dedicating land to storing our garbage, where it will sit releasing pollutants and greenhouse gases for decades to come.

How Mercado Libre Builds Upon a Continuous Intelligence Ecosystem with BigQuery and Looker

At Mercado Libre, we are obsessed with unlocking the power and potential of data. One of our key cultural principles is to have a Beta Mindset. This means that we operate in a “state of beta”, constantly asking new questions of our data, experimenting with technologies and iterating our business operations in service of creating the best experiences for our customers.

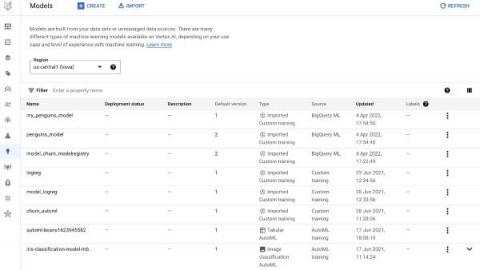

MLOps in BigQuery ML with Vertex AI Model Registry

Without a central place to manage models, those responsible for operationalizing ML models have no way of knowing the overall status of trained models and data. This lack of manageability can impact the review and release process of models into production, which often requires offline reviews with many stakeholders.

What's New in Amazon EMR Unveiled at DataOps Unleashed 2022

At the DataOps Unleashed 2022 virtual conference, AWS Principal Solutions Architect Angelo Carvalho presented How AWS & Unravel help customers modernize their Big Data workloads with Amazon EMR. The full session recording is available on demand, but here are some of the highlights.

Customer Profitability Analysis in E-Commerce

Real-Time Streaming for Data Science

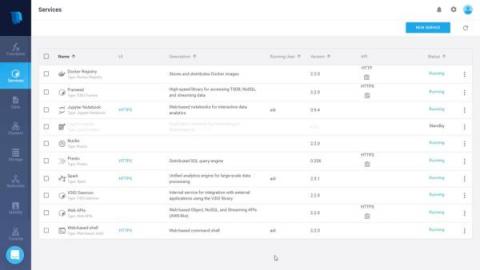

First, we collect data from an existing Kafka stream into an Iguazio time series table. Next, we visualize the stream with a Grafana dashboard; and finally, we access the data in a Jupyter notebook using Python code. We use a Nuclio serverless function to “listen” to a Kafka stream and then ingest its events into our time series table. Iguazio gets you started with a template for Kafka to time series.
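The "listen and ingest" step described above can be sketched roughly as follows. This is not the Iguazio template the teaser mentions. It only follows the `handler(context, event)` entry-point convention that Nuclio uses for Python functions, and it assumes a JSON message layout and an in-memory list standing in for the time series table, both of which are hypothetical.

```python
import json
from types import SimpleNamespace

# ASSUMPTION: an in-memory list stands in for the time series table.
# A real function would write to the platform's time series store instead.
TIME_SERIES = []


def handler(context, event):
    """Nuclio-style entry point: parse one stream event and store it."""
    body = event.body
    if isinstance(body, (bytes, bytearray)):
        body = body.decode("utf-8")
    record = json.loads(body) if isinstance(body, str) else body
    # ASSUMPTION: messages look like {"timestamp": ..., "sensor": ..., "value": ...}
    TIME_SERIES.append(
        (record["timestamp"], record["sensor"], float(record["value"]))
    )
    return "ok"


# Local dry run with a fake event object (no Kafka or Nuclio needed).
fake_event = SimpleNamespace(
    body=b'{"timestamp": "2022-04-01T00:00:00Z", "sensor": "s1", "value": "21.5"}'
)
print(handler(None, fake_event), TIME_SERIES[-1])
```

Keeping the parsing logic in a plain function like this makes it possible to test the ingestion step locally before wiring it to a real stream trigger.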

5 benefits of modernizing your application's analytics with embedded analytics

As an ISV company selling a SaaS application, you have built analytics into your software because you know customers highly value insights into the data that's held within your application. Giving your customers business intelligence (BI) and analytics within your application offers them a window of insight into the data to help them optimize their business. You deliver more value which boosts end user adoption and means your client buys for longer.

GigaOm Names Iguazio a Leader and Outperformer for 2022

We’re proud to share that the Iguazio MLOps Platform has been named a leader and outperformer in the GigaOm Radar for Data Science Platforms: Pure-Play Specialist and Startup Vendors report. The GigaOm Radar reports take a forward-looking view of the market and are geared towards IT leaders tasked with evaluating solutions with an eye to the future. GigaOm analysts emphasize the value of innovation and differentiation over incumbent market position.

How Olfin Car increased its sales by 760%

Little Fluffy Hybrid Clouds

In this series of demystifying the tech trends, my colleagues and I will be looking at busting the buzzwords to help you keep on track. Concerned about puzzling parlance, analytics argot, techie terminology – or plain old jargon? This series breaks down words and concepts to give you the deepest insight and understanding into how to talk the talk in the world of tech, so you can engage in conversations with the confidence of being data literate.

What defines the modern data stack and why you should care

When I was working at Google back in the mid-2000s, we dealt with tens of billions of ad impressions a day, trained several machine learning models on years’ worth of historic data, and used frequently updated models in ranking ads. The whole system was an amazing feat of engineering, and there was no system out there that was even close to handling this much data. It took us years and hundreds of engineers to make this happen. Today, the same scale can be achieved in any enterprise.

The value of integrating varied HR systems

Better HR analytics bring benefits to every business, but data integration must come first.

Revolt BI: Implementing Keboola results in a 20x faster data simulation model, 3-5% revenue increase, or even identifying 815,000 EUR of savings

FinTech Companies Thrive and Innovate with ChaosSearch

Turn Your Excel Spreadsheets into Beautiful, Custom Dashboards in Databox

Talend's acquisition of Gamma Soft offers exciting new capabilities for our customers

I am so pleased to announce that Talend has acquired Gamma Soft, a change data capture market innovator. This is a significant enhancement in the capabilities Talend can provide its customers and partners. Talend’s company vision is to take the work out of working with data, and we're thrilled to add Gamma Soft’s technology to our offerings to do this. Change data capture (CDC) technology is highly sought after by many companies.

Now in preview, BigQuery search features provide a simple way to pinpoint unique elements in data of any size

Today, we are excited to announce the public preview of search indexes and related SQL SEARCH functions in BigQuery. This is a new capability in BigQuery that allows you to use standard BigQuery SQL to easily find unique data elements buried in unstructured text and semi-structured JSON, without having to know the table schemas in advance. By making row lookups in BigQuery efficient, you now have a powerful columnar store and text search in a single data platform.

Anodot Fintech Demo at Finovate 2022 Conference

Lacework Brings New Tools To Cloud Security

How to replicate SAP data in BigQuery

Unraveling AI Entrepreneurs #1

Unraveling AI Entrepreneurs #2

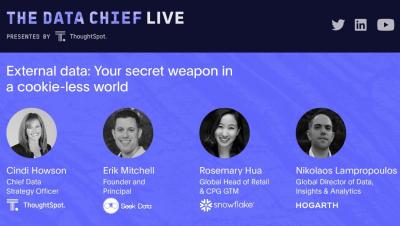

Data Chief Live: External data: Your secret weapon in a cookie-less world

Talend acquires Gamma Soft

Google Cloud names ThoughtSpot a Google Cloud Ready - BigQuery company to help customers dominate the decade of data

We’re entering the defining decade of data. While every aspect of our lives has been changed by data in recent years, the next ten will see data rebuild the world around us. Every business, in every industry, needs a plan to adapt to this new world if they want to thrive. But how? That’s a question in the minds of data leaders, CEOs, and board members. The right approach is critical if companies want to dominate this new era. The wrong decision can spell disaster.

Space-Based AI Shows the Promise of Big Data

At a distance of a million miles from Earth, the James Webb Space Telescope is pushing the edge of data transfer capabilities. The observatory launched Dec. 25, 2021, on a mission to look at the early universe, at exoplanets, and at other objects of celestial interest. But first it must pass a rigorous, months-long commissioning period to make sure that the data will get back to Earth properly. Mission managers provided an update Feb.

Cost-Per-Order Formula for E-Commerce Explained

SQL Puzzle Optimization: The UDTF Approach For A Decay Function

Data Warehouse Automation: What, Why, and How?

Top 5 Challenges of Analyzing Marketing Performance (How Agencies Solved Them)

Solve a Problem, Change the World w/ Amr Awadallah

Cortex leverages ThoughtSpot Everywhere to innovate in B2B marketing intelligence

Gaining an accurate view of revenue intelligence for B2B marketers is challenging. With disconnected and dirty data residing in many systems, customers need a solution that collects, normalizes, and aggregates information into reports that answer the questions B2B marketers should have a handle on. And let’s face it, no matter how great a set of standard reports might be, every customer wants to see their data a little differently.

Data-Centric AI with Continual and Snowflake

Data infrastructure is rapidly growing and evolving along with infrastructure for AI/ML, with the latter growing largely independent from the former. An emerging generation of AI/ML tooling emphasizes data-centric versus model-centric approaches to the ML development lifecycle. These tools recognize that data is the foundation for AI and seek to open opportunities for all data professionals to participate by eliminating the unnecessary complexity of traditional model-centric solutions.

Track and Visualize Data from Google Sheets Easily with New Setup Wizard

Hybrid Data Delivery "Cloud Sources" Walkthrough

Hitachi Vantara Is For The Data-Driven

AstraZeneca: Building a finance data hub

Building Product Analytics At Petabyte Scale

Creating a step change in an era of incremental innovation

Iguazio named in Forrester's Now Tech: AI/ML Platforms, Q1 2022

We are delighted to share that Iguazio has been named along with Microsoft, Databricks, Cloudera, Alteryx and others in Now Tech: AI/ML Platforms, Q1 2022, Forrester’s Overview of the Leading AI/ML Platform Providers, by Mike Gualtieri. This report by Forrester Research looks at AI/ML Platform providers, to help technology executives evaluate and select one based on functionality aligned with their needs.

Top 8 Machine Learning Resources for Data Scientists, Data Engineers and Everyone

Machine learning is a practice that is evolving and developing every day. Newfound technologies, inventions and methodologies are being introduced to the community on a daily basis. As ML professionals, we can enrich our knowledge and become better at what we do by constantly learning from each other. But with so many resources out there, it might be overwhelming to choose which ones to stay up-to-date on. So where is the best place to start?

How to Write an Executive Summary for a Report: Step By Step Guide with Examples

A Real-Time Data Integration Fabric for Active Intelligence

Greek philosopher Heraclitus wasn’t talking about the challenge of today’s enterprise IT landscape, but the quote certainly fits. From the advent of the first digital computer in the 1940s to the emergence of the first public cloud in 2004, the rate of change has only accelerated. In fact, over 60% of corporate data resides in the cloud in 2022, up from 50% last year.

Why Can't we Advance Healthcare and Life Sciences this Fast all the time?

Vaccine development became the top priority for the life sciences industry, which delivered new vaccines at unprecedented speed and maneuvered large-scale production processes. Numerous factors helped accelerate the vaccine roll-out, including prior research, genome sequencing, jumping the FDA approval queue, and a plethora of testing volunteers. So now that we’ve experienced these advancements, how can the industry keep momentum to speed up innovative solutions across healthcare?

Turning data into a life-saving asset

A global leader in pharmaceuticals found itself faced with a unique spin on a common challenge: its biopharmaceutical division, responsible for producing vaccines and generating over $1 billion in annual sales, was struggling to turn raw data into trusted insights. Data underlies everything the global pharmaceutical company does. Without data it can trust, however, the company would be at risk of taking longer to get vaccines to market and incurring higher expenses along the way.

Stitch vs. Fivetran vs. Integrate.io: A Comprehensive Comparison

Getting Started with Continual and Snowflake

This guide will show you how to easily add Continual as the AI layer to your modern data stack with Snowflake at the core. The intention is to provide an introduction to using Continual on Snowflake. After completing this tutorial, users are invited to try more advanced examples. We are going to demonstrate connecting Continual to Snowflake, building feature sets and models from data stored in Snowflake, and analyzing and maintaining the predictive model continuously over time.

The E-Commerce Purchase Funnel Explained

Best 15 ETL Tools in 2023

ETL stands for Extract, Transform, and Load. It is defined as a Data Integration service and allows companies to combine data from various sources into a single, consistent data store that is loaded into a Data Warehouse or any other target system. ETL serves as the foundation for Machine Learning and Data Analytics workstreams. Through multiple business rules, ETL organizes and cleanses data in a way that caters to the Business Intelligence needs, like monthly reporting.
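
As a rough illustration of the three ETL stages described above, the following minimal Python sketch uses only the standard library. Everything in it is an assumption made for the example: the sample CSV, the `orders` table, the rule that rows without an amount are skipped, and the in-memory SQLite database standing in for a data warehouse. It is not taken from any of the tools in the list.

```python
import csv
import io
import sqlite3

# Hypothetical raw export; in practice this would come from a source system.
RAW_ORDERS_CSV = """order_id,order_date,amount
1,2022-03-30, 19.99
2,2022-04-02,5.00
3,2022-04-15,
4,2022-04-20,12.50
"""


def extract(text):
    """Extract: parse CSV text into a list of dict rows."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transform: drop incomplete rows, normalize types, derive the month."""
    cleaned = []
    for row in rows:
        amount = row["amount"].strip()
        if not amount:
            continue  # assumed business rule: skip orders with no amount
        month = row["order_date"][:7]  # "YYYY-MM"
        cleaned.append((int(row["order_id"]), month, float(amount)))
    return cleaned


def load(rows, conn):
    """Load: write the cleaned rows into the target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(order_id INTEGER, month TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", rows)
    conn.commit()


def monthly_totals(conn):
    """Example of the monthly reporting the loaded data can serve."""
    query = (
        "SELECT month, ROUND(SUM(amount), 2) FROM orders "
        "GROUP BY month ORDER BY month"
    )
    return conn.execute(query).fetchall()


conn = sqlite3.connect(":memory:")  # stand-in for a real warehouse
load(transform(extract(RAW_ORDERS_CSV)), conn)
print(monthly_totals(conn))
```

Keeping each stage in its own function mirrors how ETL tools let you swap sources, business rules, and targets independently.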

ClearML-Data

Shopify Reporting Guide: How to Get the Most Out of Built-In Shopify Reports

Introducing Active Assist recommendations for BigQuery capacity planning

BigQuery already offers highly flexible pricing models, such as the on-demand and flat-rate pricing for running queries, to meet the diverse needs of our users. Today, we’re excited to make it even easier for you to optimize BigQuery usage with new BigQuery slot recommendations powered by Active Assist, a part of Google Cloud’s AIOps solution that uses data, intelligence, and machine learning to reduce cloud complexity and administrative toil.

24 Free-Forever Marketing and Sales Software Tools

The Best Guide to AUR-in Retail

Apache Kafka to BigQuery: 2 Easy Methods

Organizations today have access to a wide stream of data. Data is generated from recommendation engines, page clicks, internet searches, product orders, and more. It is necessary to have an infrastructure that would enable you to stream your data as it gets generated and carry out analytics on the go. To aid this objective, incorporating a data pipeline for moving data from Apache Kafka to BigQuery is a step in the right direction.