June 2023

Moving Data From MySQL to Redshift: 4 Ways to Replicate Your Data

Running a BI Team with limited resources

Business Intelligence (BI) teams often face several resource constraints that can impact their ability to deliver their objectives effectively. They must run effective operations with limited time, resources, budget and people. The role can be incredibly challenging when multiple projects are highly prioritised, where data and reports were required yesterday. That said, there are ways to make these challenges more manageable.

Creating BI data pipelines at speed

Data is the invaluable currency of the digital age, holding immense importance in driving critical business decisions and guiding organizations towards success. Yet far too many neglect the mechanisms that manage our data. Data integration is one of the most important parts of digital transformation.

Navigating the Cloud: Insights from our recent webinar with Google Cloud

Discover the key challenges organizations face during cloud migration and explore actionable solutions to unlock the full potential of the cloud for your business.

CDP Private Cloud | Cloud-native analytics on-premises

Data-Led Growth: How FinTechs Win with App Event Analytics

Yellowfin vs Grafana Helix: Which is Best for BMC Smart Reporting Users?

How to Manage Risk with Modern Data Architectures

The recent failures of regional banks in the US, such as Silicon Valley Bank (SVB), Silvergate, Signature, and First Republic, were caused by multiple factors. To ensure the stability of the US financial system, the implementation of advanced liquidity risk models and stress testing using ML/AI could potentially serve as a protective measure.

Exploring The Salesforce Data Integration Landscape

Database Sync: Diving Deeper into Qlik and Talend Data Integration and Quality Scenarios

A few weeks ago, I wrote a post summarizing "Seven Data Integration and Quality Scenarios for Qlik | Talend," but ever since, folks have asked if I could explain a little deeper. I'm always happy to oblige my reader (you know who you are), so let's start with the first scenario: Database-to-database Synchronization.

When and why to trust SCIM, SSO JIT, and SSO Role Mapping

Cloud-first organizations now outnumber on-premises ones by 3:1. Despite broad adoption, uncertainty about security still chains some organizations to an on-premises solution. If security and compliance concerns have blocked efforts to move your organization to the cloud, it’s time to reevaluate. Let’s examine modern cloud security by looking into the trusted user management capabilities in the latest release of Talend Cloud.

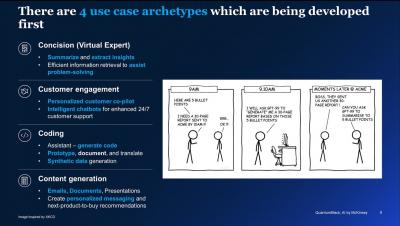

What is Enterprise Generative AI and Why Should You Care?

It seems like we are witnessing a new quantum leap of technological advancement, with Generative AI taking the world by storm earlier this year. Generative AI (GenAI) has emerged as a powerful tool that combines artificial intelligence with creativity, empowering machines to generate original content, such as images, music, and even text, that imitates human-like creativity from structured and unstructured data.

[DEMO] Talend and Cloudera better together

Kensu launches the first Data Observability solution for Matillion enhancing data productivity for the Enterprise

Built with BigQuery: How to supercharge your product data with Google Cloud and Harmonya

Harmonya relies on BigQuery to build and maintain data pipelines and to train and serve machine learning models for its product enrichment service.

Snowflake Expands Programmability to Bolster Support for AI/ML and Streaming Pipeline Development

At Snowflake, we’re helping data scientists, data engineers, and application developers build faster and more efficiently in the Data Cloud. That’s why at our annual user conference, Snowflake Summit 2023, we unveiled new features that further extend data programmability in Snowflake, so they can build in their language of choice without having to compromise on governance.

Bring Gen AI & LLMs to Your Data

The potential of generative AI and large language models (LLMs) for enterprises is massive. We’ve talked about this opportunity before and at Summit 2023, we announced a number of capabilities that come together to help our customers bring generative AI and LLMs directly to their proprietary data, all delivered through a single, secure platform.

Data Integration: How to Retain Customers in Recession

Version control REST APIs with Git integration

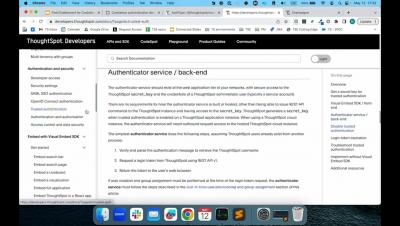

MLOps for Gen AI - MLOps Live #23 - QuantumBlack AI by McKinsey

The Ultimate Guide to Nonprofit Dashboards: Types, Use Cases, Top Metrics, Best Practices, and Examples

3 data quality obstacles to beat with Talend and Snowflake

In today's fast-paced, data-driven world, deeper data insights and faster time to value are paramount if you want your business to stay competitive and thrive. Decision-makers need instant access to all their data sources to make sound business decisions — and they need to have trust in their data. However, data quality is often overlooked. According to Gartner, poor data quality costs organizations an average of $12.9 million annually. What’s going on?

Five Ways A Modern Data Architecture Can Reduce Costs in Telco

During the COVID-19 pandemic, telcos made unprecedented use of data and data-driven automation to optimize their network operations, improve customer support, and identify opportunities to expand into new markets. This is no less crucial today, as telcos balance the needs to cut costs and improve efficiencies while delivering innovative products and services.

Top SFTP ETL Tools for Secure & Seamless Data Transfer

Snowflake's Single Platform Improves Performance, Advances Mission Criticality, and Analytics While Supporting More Data Types

The world is undergoing a remarkable transformation fueled by data. Organizations have accumulated silos across their data infrastructure to support various workloads, languages, tools, and formats because of technology limitations. These silos can have major consequences in the form of greater operational burden, security vulnerabilities, increased total cost of ownership, incomplete insights, and reduced agility.

Snowpark Container Services: Securely Deploy and run Sophisticated Generative AI and full-stack apps in Snowflake

Containers have emerged as the modern approach to package code in any language to ensure portability and consistency across environments, especially for sophisticated AI/ML models and full-stack data-intensive apps. These types of modern data products frequently deal with massive amounts of proprietary data.

From community to creation-celebrating a year of Product Ideas

Active listening is an admired and sought-after skill in both the professional and personal spheres. After all, who doesn’t love to be heard? But what happens when we apply that mindset to the way our organizations solicit feedback and interact with our customers? We don’t have to make any assumptions to answer this question, because we have the data.

Cookieless authentication in ThoughtSpot Everywhere

Amidst growing concerns around user privacy and regulatory laws, the cookieless paradigm has been gaining momentum over time in digital advertising. In addition, web browsers are increasingly blocking third-party cookies altogether in web sessions, necessitating the need for new authentication methods in web applications. Cookieless authentication is a secure way to verify user identities in web applications without relying on cookies.

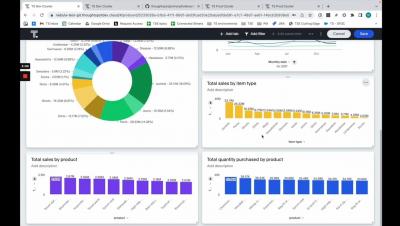

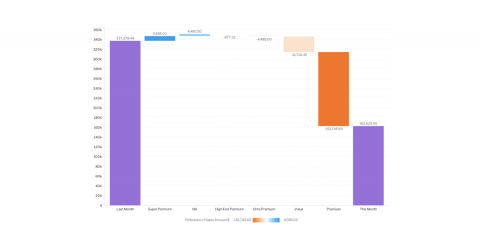

4 Financial Dashboard Examples for 2023

Financial dashboards bring performance into focus by collecting the most important metrics and indicators in one location. But when organizations build their financial dashboards from the ground up, challenges often arise. A primary hurdle is making the right design choices to create dashboards that drive successful business decisions. To overcome this hurdle, it helps to incorporate ideas that have already been implemented, evaluated, and improved on by others.

Snowflake Native App Framework Now Available to Developers in AWS

At Snowflake Summit 2022, we introduced a new way of building apps with the Snowflake Native App Framework. Today, we are excited to bring the power of the Snowflake Native App Framework to developers around the world with the public preview in AWS. Developers can now start building and testing Snowflake Native Apps in their accounts in AWS. Distribution and monetization capabilities will be available in public preview on AWS later this year.

Version control APIs with Git integration

We have introduced new version control REST APIs for Git integration, available in beta, in the 9.3.0.cl release of ThoughtSpot Analytics Cloud. ThoughtSpot administrators can now link their instances to a GitHub repository and use continuous integration and deployment (CI/CD) best practices to manage their organization's analytic content throughout its lifecycle.

Snowflake Native App Framework

Explore the Integrate.io and Salesforce Integration

What's new in ThoughtSpot Analytics Cloud 9.3.0

ThoughtSpot acquires Mode: Empowering data teams to bring Generative AI to BI

At ThoughtSpot, we know how important it is for businesses of every size and industry to empower every knowledge worker with personalized, actionable data-driven insights. These insights are your secret sauce to making better business decisions, growing faster, and delivering customer experiences that keep people coming back for more. But how do you scale self-service analytics to business users without completely overwhelming your data teams?

How to get the most out of your dbt Cloud instance

You may already be familiar with dbt, but just to be sure we're on the same page, let's revisit the concepts. The Data Build Tool (dbt) is a widely used framework provided by dbt Labs, specifically designed to bridge the gap between data analysts, data engineers, and software engineers, resulting in the creation of a new role in data and analytics: the analytics engineer.

One Big Cluster Stuck: The Right Tool for the Right Job

Over time, using the wrong tool for the job can wreak havoc on environmental health. Here are some tips and tricks of the trade to prevent well-intended yet inappropriate data engineering and data science activities from cluttering or crashing the cluster.

The Generative AI Overload

With any new, transformative technology comes a fear of the unknown, as I explored in my first blog of a new series on generative AI. Companies are pulled between acting quickly to avoid being left behind and proceeding with caution to avoid major missteps.

Planning Your Migration to Microsoft D365 F&SCM

Over the past few years, software vendors moved their applications to the cloud. Microsoft committed heavily to the cloud across all its product lines, including the Dynamics family of ERP and CRM solutions. That will mean an eventual migration to the cloud for customers of Microsoft Dynamics AX, Microsoft Dynamics CRM, and the legacy Microsoft GP, SL, and NAV products.

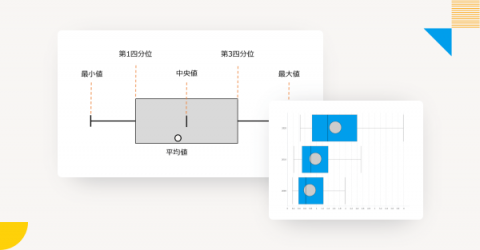

Analyzing Population Percentages Using Yellowfin Box and Whisker Charts

Behind the data model: How we transform your data

Learn how our Fivetran data models transform your connector data into analytics-ready tables.

ThoughtSpot + Mode Demo

Movers and Makers | Financial Services

Mastering ML Model Performance: Best Practices for Optimal Results

How to activate the Snowflake Data Cloud with AI-Powered Analytics

During Beyond 2023, we officially launched ThoughtSpot Sage, our new search experience that combines the power of GPT’s natural language processing and generative AI capabilities with the accuracy and security of our patented self-service analytics platform. As the experience layer of the modern data stack and an Elite Partner of Snowflake, we are constantly thinking about how our product innovation unlocks value for our shared customers.

The Power of Cohort Analysis In Real-Time Product Analytics

The fast-paced world of digital technology necessitates dynamic and responsive approaches to data analysis. In this light, cohort analysis emerges as a formidable tool, providing meaningful insights when blended with real-time analytics. This fusion is especially beneficial for digital enterprises like Countly's users, who operate within a highly volatile environment.

Application development with Cloudera Operational Database

Accelerate Search In The Data Cloud With Generative AI

Sridhar Ramaswamy (Neeva co-founder) on the LLM landscape and his excitement for Summit! https://www.snowflake.com/summit/

Kensu launches support for Unity Catalog bringing immediate data observability to thousands of Databricks users

Do You Know Where All Your Data Is?

In spite of diligent digital transformation efforts, most financial services institutions still support a loose patchwork of siloed systems and repositories. These dis-integrated resources are “data platforms” in name only: in addition to their high maintenance costs, their lack of interoperability with other critical systems makes it difficult to respond to business change.

Celebrating Keboola's Rockstar Moment: We've Snagged the "Powered By Snowflake" Award!

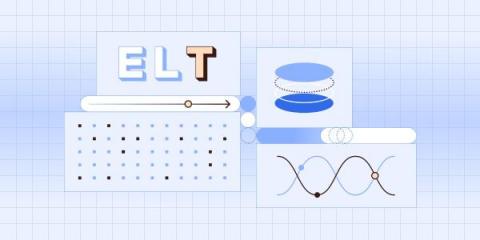

Data transformations: what's the T in ELT?

How Fivetran Transformations help you accelerate time to value and utilize the best practices of data transformation’s top tooling - dbt Core.

Supercharge your insights from Microsoft Dynamics 365

Learn how Fivetran’s connector for Microsoft Dynamics 365 CRM and Dynamics 365 Finance empowers you to activate and analyze your data effectively.

Techniques to optimize Google BigQuery performance for AI-Powered Analytics

Google BigQuery is a fully managed, enterprise-scale, cloud data warehouse that helps you store, manage, and analyze your data. This article will take you through the fundamentals of Google BigQuery. I’ll also share optimization opportunities to improve performance when you’re using both BigQuery and ThoughtSpot.

What is The Difference Between BI and Analytics?

BI and analytics are both umbrella terms referring to a type of data insight software. Many providers use them interchangeably, but some use them in conjunction, claiming to offer both business intelligence and business analytics. This of course makes us wonder: what’s the difference?

GEODIS Collaborates with Yellowfin to Launch Self-Service Analytics Interactive for Visibility Customers

Top MongoDB ETL Tools for Efficient Data Integration

Analyzing unstructured data in BigQuery with Vertex AI

From AI to Customer Experiences: Here Are 5 Key Takeaways From Accelerate:

Four approaches to data infrastructure modernization

As your organization’s data operations mature, you will need to upgrade your infrastructure to fulfill new needs and requirements.

Product Announcement: New Power Duo In Town

Highlights from a gem of a session: Qlik and Talend at Gartner London

At three full days and nearly 4,000 registered attendees, Gartner Data & Analytics Summit 2023 in London was the largest data and analytics summit in EMEA to date. That much interest in data and analytics thrills us, of course — but it was also exciting because it was one of our first major events following the announcement that Talend has been acquired by Qlik. There was a lot to talk about!

AI-Driven Observability for Snowflake

Performance. Reliability. Cost-effectiveness. Unravel is a data observability platform that provides cost intelligence, warehouse optimization, query optimization, and automated alerting and actions for high-volume users of the Snowflake Data Cloud. Unravel leverages AI and automation to deliver real-time, user-level and query-level cost reporting, code-level optimization recommendations, and automated spend controls to empower and unify DataOps and FinOps teams.

Experience Generative AI with Qlik

Five years ago, Qlik paved the way in powering our analytics platform with AI, with the introduction of natural language processing and generation. Our augmented analytics capabilities, which now also include fully interactive search, chat, and multi-language support, enabled a generative AI experience before it was cool. Today marks the next step in our AI journey with the launch of a new suite of OpenAI connectors to deliver a ChatGPT experience in Qlik Cloud.

Jitterbit vs. Talend vs. Integrate.io

Live: Product Update - Streaming & Snowpark with James & Jeff

SaaS in 60 - Add Generative AI to your Analytics and Business Processes - OpenAI Connectors #chatgpt

Can AI Chatbots Help Product Managers Do More With Less?

AI Chatbots are being talked about everywhere. Product managers rely heavily on collaborations with stakeholders to achieve success with their strategic objectives. These stakeholders include developers, sales teams, marketing teams, executives, and other key players. Yet these stakeholders alone do not guarantee success for your customers and clients. As a product manager, you need to use all the tools at your disposal to add value to their strategies and goals.

How to Get Results With Self-Service Analytics

Self-service analytics has been a leading priority in the business intelligence (BI) space for years and is likely here to stay. With data-driven culture on the rise, analytics is no longer just for IT teams and data scientists. Self-service business intelligence tools make it possible for personnel across functions to perform analytics-related tasks themselves, dramatically reducing time to insight.

21 KPIs and Metrics You Should Include in a Procurement KPI Dashboard

Benchmarking Snowpark Vs. Spark For Data Processing

Smart(er) Reporting in BMC with Yellowfin: Webinar Recap

Logistics giant optimizes cloud data costs up front at speed & scale

One of the world’s largest logistics companies leverages automation and AI to empower every individual data engineer with self-service capability to optimize their jobs for performance and cost. The company was able to cut its cloud data costs by 70% in six months—and keep them down with automated 360° cost visibility, prescriptive guidance, and guardrails for its 3,000 data engineers across the globe.

No-Code Data Pipelines: Streamline Data Integration

#shorts - Scheduled Reload Failed? Here's why

Stitch data pipeline set up using Snowflake Partner Connect

What's in a title? Data analysts, engineers and scientists explained

For the best staffing and team-building results, respect the differences between types of data professionals.

Built with BigQuery: Quantum Metric unlocks data for frictionless customer experiences

Quantum Metric uses BigQuery to analyze vast amounts of data to drive customer-centric digital experiences.

Without data quality, your data initiatives will fail.

Chad Sanderson is passionate about data quality, and fixing the muddy relationship between data producers and consumers. He is a former Head of Data at Convoy, a LinkedIn writer, and a published author. He lives in Seattle, Washington, and is the Chief Operator of the Data Quality Camp. Without data quality, your data initiatives will fail. Despite that, data teams still struggle to gain buy-in on quality initiatives from executive teams. Here's why: 1.

Understanding Needs From All Perspectives Before Applying Tech Solutions

Why Cloud Operations Would Benefit From an "On-Premise" Approach to Cost Management

Ozone Replication with Replication Manager with PvC DS

Data Lake Architecture & The Future of Log Analytics

Statsig unlocks new features by migrating Spark to BigQuery

Migrating to BigQuery from Spark helped Statsig to develop new features for customers and help them run scalable experimentation programs.

Pros & Cons of Using Hevo Data for Zero Code ETL

ERP data, financial efficiency and the current state of enterprise data strategy

Fivetran CEO George Fraser and Snowflake CEO Frank Slootman sat with Data Cloud Now to discuss what they’re hearing from customers in their joint interview.

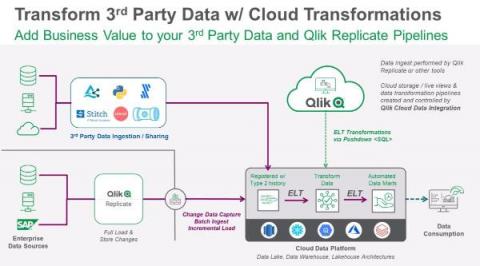

Increasing the Value of Cloud Data: Qlik Cloud Data Integration Transformation Services

Data fragmentation and the growing volume of data sources and types are putting increased focus and importance on an organization’s ability to continuously organize, transform, and cost-effectively deliver real-time data to its entire organization through the cloud.

Keboola & Whaly: Getting You From Raw Data to Insights Faster

Comparing AWS RDS ETL Tools

Fivetran vs. Matillion: Features, pricing, services and more

Choosing one over the other can come down to a few key differences. Let’s take a look at both to help you make an informed decision.

Smart(er) Reporting in BMC with Yellowfin

Discover the benefits of cross-cloud geospatial analytics with BigQuery Omni

BigQuery Omni lets you do data analytics on data, including geospatial data, stored across public cloud environments.

Why Yellowfin and WhereScape are a Great Combination

Integrate.io Alternatives: An ETL Tool Comparison

Restaurant KPI Dashboard: A Restaurant Owner's Handbook on Measuring KPIs to Scale Business

SaaS in 60 - Notes Permissions, Button Text, New Connectors and More!

9 Expert Ways to Find the Target Audience for Your Website in 2023

How to Create an SEO Report for Your Client in 2023 (Templates Included)

The Most Important 49 SEO KPIs Every SEO Pro Should Track and Measure in 2023

We asked 129 SEOs to weigh in on the most important SEO KPIs for measuring success and search visibility. Here are the 49 SEO metrics they recommend.

A Comprehensive Guide To Build a Successful DataOps Culture in Your Team

3 Data Silo Examples and How to Break Them Down

“Data is knowledge, knowledge is power, and bad data equals bad decisions,” says Appian Senior Solutions Consultant Ben Crawley. We’ve all felt the sting of poorly integrated solutions, hard-to-access information, and sometimes, inaccurate data. This “bad data” is often the result of information that’s spread across different systems, creating data accuracy challenges and preventing you from having a single source of truth for your organization's information.

One Big Cluster Stuck: Platform Health

Clearly, environmental health and high performance depend on the proper implementation, tuning, and use of CDP, hardware, and microservices. Ideally, you have visibility and transparency into existing high-priority problems in your environment. The links below will take you to areas of the Cloudera Community where you will find best practices for properly implementing and tuning hardware and services.

Three perspectives on data governance

How to identify and resolve conflicting incentives and priorities over data governance.

Takeaways on AI from our recent webinar with Microsoft

Discover how organizations can harness the full potential of AI through a modern data stack, as we dive into the insights from our recent joint webinar with Microsoft.

Cloudera CTO Advises | Trust Before You AI

ThoughtSpot Generative AI Meet-up - June 2023

Improve query performance and optimize costs in BigQuery using the anti-pattern recognition tool

A new BigQuery anti-pattern recognition tool scans SQL queries to identify anti-patterns, and provides optimization recommendations.

Why data centralization matters for retail

Retail businesses contend with data challenges unique to a fast-paced, high-volume industry.

How data teams can jumpstart investment in data infrastructure

Whether your organization’s growth is led by product, marketing or sales, small proofs of concept can springboard you towards better data infrastructure.

A Deep Dive into Push Notifications: Redefining Seamless Customer Communication

Push notifications have steadily solidified their role as a pivotal element in business marketing strategies, offering an immediate, unfiltered line of communication with customers. However, there is a delicate balance to maintain between efficient push notifications and those that are merely intrusive. It is here where personalization and A/B testing, among other considerations, become instrumental in honing the effectiveness of these real-time messages.

Exploratory Data Science With Cloudera Machine Learning

Talend Release Notes Highlights - R2023-05

Top 20 Website Performance Metrics Experienced Marketers Need to Track

Build your open data lakehouse on Apache Iceberg tables with Dremio and Fivetran

Combine the flexibility of data lakes, governance of data warehouses and automated data movement.

Enabling data maturity

Penguin Random House’s Pete Williams on riding the data maturity curve, people and data literacy and readiness to enable and exploit.

Top 5 ELT Tools

Seven data integration and quality scenarios for Qlik and Talend solutions

By now, you should have read the headlines that Qlik's acquisition of Talend is complete, and we're excited to expand our best-in-class capabilities to help you access, transform, trust, analyze, and take action with your data. You might have seen Mike Capone's QlikWorld keynote or the recent "What's Next is Now" webinar and wondered how to leverage these new capabilities in your organization.

Insight Advisor in Qlik Sense - Generating Automated Insights

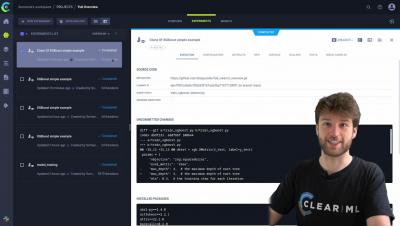

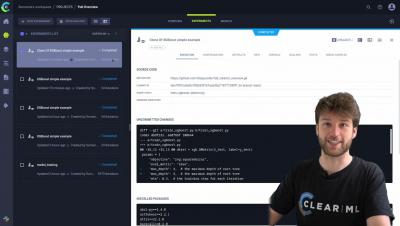

ClearML Onboarding Walkthrough - PART 3: Model serving and Monitoring

The Top 10 Google BigQuery ETL Tools

How Cloudera Supports Zero Trust for Data

By now, almost everyone across the tech landscape has heard of the Zero Trust (ZT) security model, which assumes that every device, application, or user attempting to access a network is not to be trusted (see NIST definitions below). But as models go, the idea is easier than the execution.

How to deliver successful AI products with Keboola?

7 Best Data Integration Techniques In 2023

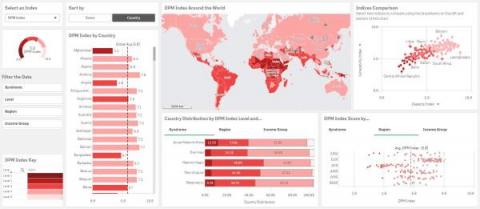

Qlik Supports the World Health Organization to Help Global Community Prepare for Future Epidemics Through Data

Qlik and its NGO partners focus on leveraging data to bring a positive impact to the world. While we are still seeing the effects of the COVID pandemic throughout society, there are tremendous lessons that we can carry forward to be better prepared for the next likely pandemic.

Integration of ChatGPT in Qlik Cloud

Industry Impact | Intelligent manufacturing operations | Short

Announcing Dataform in GA: Develop, version control, and deploy SQL pipelines in BigQuery

Dataform, now GA, lets data engineers build SQL pipelines in BigQuery while following best practices like Git, CI/CD, and code lifecycle management.

Building ML workflows in BigQuery the easy way, without code

A flexible, automated analytic workflow tool that integrates with BigQueryML allows no-code forecasting and model training.

Airbyte vs Integrate.io

Beyond Monitoring: Introducing Cloudera Observability

Increased costs and wasted resources are on the rise as software systems have moved from monolithic applications to distributed, service-oriented architectures. As a result, over the past few years, interest in observability has seen a marked rise. Observability, borrowed from its control theory context, has found a real sweet spot for organizations looking to answer the question “why,” that monitoring alone is unable to answer.

Deliver Data-Driven Decision-Making with the New Government & Education Data Cloud

Today’s governmental and educational organizations can’t fully use the wealth of data they possess to improve citizen and student outcomes. Government agencies often deal with disparate and siloed data that can impact real-time decision-making. Securely exchanging information and collaborating on data remains an essential task in almost every agency strategy.

ClearML vs Other MLOps Tools

When approaching machine learning operations, the options can be overwhelming. There may be multiple solutions available for each step in the process, and the most popular (usually open source tools) may not necessarily be good or easy to use, but they are free.

Navigating the ThoughtSpot Community Tutorial

Why the modern data stack is critical for leveraging AI

Databricks’ 2023 State of Data + AI report highlights the importance of the modern data stack in leveraging AI.

Why Fivetran supports data lakes

Flexible, affordable large-scale storage is the essential backbone for analytics and machine learning.

9 Data Quality Checks to Solve (Almost) All Your Issues

5 Steps to Successful Business-IT Alignment

Four Data Trends Redefining Success for the Modern Business

When it comes to data, state of the art is an ever-moving target. There’s also a lot of hype around “cutting edge” that isn’t always grounded in reality. At Snowflake, we’re able to see how cutting-edge companies are actually working with data on our platform.

The 10 Best Salesforce ETL Tools

How to Perform Cash Flow Analysis using Yellowfin Waterfall Charts

ClearML Onboarding Walkthrough - PART 2: Remote Task Execution and Automations

Industry Impact | Shaping the Future of Media and Entertainment

How to simplify unstructured data analytics using BigQuery ML and Vertex AI

How BigQuery’s ML inference engine can be used to run inferences against unstructured data in BigQuery using Vertex AI pre-trained models.

Build an image data classification model with BigQuery ML

Step-by-step instructions for building an image classifier with ResNet, Cloud Storage and BQML.

Unleashing the Power of Countly for Kids Gaming Industry Analytics

In the first part of this article, we delved into the importance of data privacy for children's apps, examined prominent regulations like COPPA and GDPR-K, and offered practical advice for parents and developers to safeguard children's data. As indicated by the title, this second part aims to elucidate the exceptional qualities of Countly as an analytics tool specifically tailored for the kids gaming industry.

Oracle to BigQuery: 2 Easy Methods

Azure HBase X-Region Replication for Disaster Recovery Using Cloudera Replication Manager

Using Direct Query with MS Azure SQL - New Data Source Support

Real-time data analysis for CaixaBank

10 AWS Data Lake Best Practices

12 KPIs and Metrics to Include in a Revenue Cycle KPI Dashboard to Measure Success

Customer 360 for Sports and Gaming Fans: The Data Science Best Practices You Need to Know

Sports and gaming companies are forging ahead with the use of data science as a competitive differentiator. According to an industry report, the global AI in media and entertainment market size was valued at $10.87 billion in 2021 and is estimated to grow 26.9% annually until 2030.

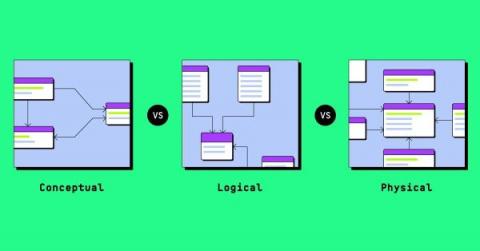

Conceptual vs logical vs physical data models

Data modeling is not about creating diagrams for documentation's sake. It’s about creating a shared understanding between the business and the data teams, building trust, and delivering value with data. It’s also an investment: an investment in your data systems' stability, reliability, and future adaptability. Like all valuable initiatives, it will require some additional effort upfront.

The Modern Data Ecosystem: Use Managed Services

When monitoring cloud resources, there are several factors to consider.

The Modern Data Ecosystem: Choose the Right Instance

There are several ways to optimize cloud storage, depending on your specific needs and circumstances. Here are some general tips that can help: Overall, optimizing cloud storage requires careful planning, monitoring, and management. By following these tips, you can reduce your storage costs, improve your data management, and get the most out of your cloud storage investment.

Exporting data from Countly through DB Viewer

DB Viewer is a plugin that provides a UI for browsing databases. It is also a great way to access database data through the REST API, for example to export data. In this article, we explain how to navigate the data schema and find everything needed to export events and their granular data from Countly. Say you have another database that you want to populate with data from Countly, or you just want to prepare a report in a third-party application.

Cloud Data Warehouse: A Comprehensive Guide

The Modern Data Ecosystem: Monitor Cloud Resources

When monitoring cloud resources, there are several factors to consider.

LLM ChatBot Augmented with Enterprise Data

Onboarding - Part 2

00:00 - Intro

01:29 - Remotely Executing Task

06:49 - Model Repository

09:10 - Workers and Queues

17:27 - Workers on K8s

19:14 - Pipelines

31:20 - TriggerScheduler

39:05 - GitHub CI/CD Templates

39:36 - Outro