July 2023

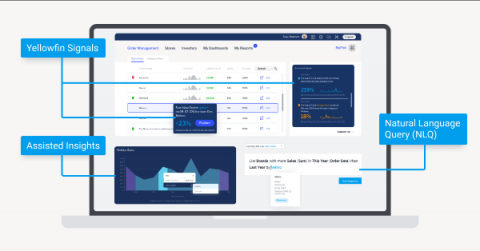

How Yellowfin Self Service Analytics Helps Automate Data Insights

Cloud cost management: How to optimize and control cloud expenses

It’s no surprise that cloud spending is rapidly increasing, so it’s also no surprise that controlling those rapidly increasing cloud costs is a top priority for business, technology, and data leaders. According to Gartner, the use of public cloud computing has increased IT spending for most organizations (54%) over the last three years, with only 29% reporting that the cloud decreased IT spending.

Cloudera launches observability offering for the hybrid cloud

Visualize Your Spreadsheet Data with Databox!

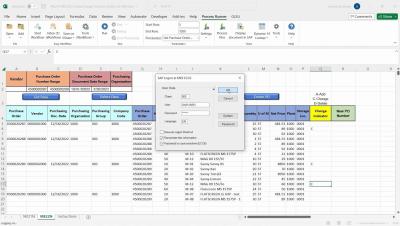

Process Runner: SAP Data Management and Excel-based Automation

Built with BigQuery: How Ternary turns customers' cloud-spending pain into gain

Ternary consolidates billing data from customers’ cloud service providers, ISVs, and other data sources using BigQuery and other Google Cloud services.

Deploying NiFi Flow | Impala Critical Exception

5 ELK Stack Pros and Cons

Data warehouse modernization: Diving deeper into Qlik Talend data integration and quality scenarios

Step right up, ladies and gentlemen, and witness the grand spectacle of the digital age! In a world where data is king, where information reigns supreme, and cloud data warehouses are multiplying like rabbits, there's a technology initiative like no other: data warehouse modernization! This article is the second in the series "Seven Data Integration and Quality Scenarios for Qlik and Talend," and answers everything you wanted to know about data warehouse modernization but were afraid to ask.

How to Monitor and Debug Your Data Pipeline

Kensu Brings Data Observability to Data Engineers

Wands for SAP: Escape the Limitations of Financial Reporting

Unlock insights faster from your MySQL data in BigQuery

Unlock insights faster from your MySQL data in BigQuery through Dataflow JDBC Templates.

5 Must Have ETL Development Tools

ThoughtSpot for Sheets delivers Generative AI to every knowledge worker

Today we're excited to officially launch AI Explain on ThoughtSpot for Sheets, the ultimate cheat code for data literacy and exploration. AI Explain integrates Google's PaLM 2 LLM, specifically leveraging the Bison model to automatically generate the top data stories for any visualization created with our Sheets extension.

3 Ways AI, ML, and Predictive Analytics Can Help Solve the Nursing Crisis

The nursing profession is in crisis. According to McKinsey, over 30% of surveyed nurses said they may leave their current patient care jobs in the next year, and for inpatient nurses it’s higher at 45%. Meanwhile, the average professional tenure of nurses dropped from 3.6 years to 2.8 years between 2020 and 2023. These alarming trends have healthcare systems on red alert. Ninety-four percent of surveyed health system senior executives said the nursing shortage is critical.

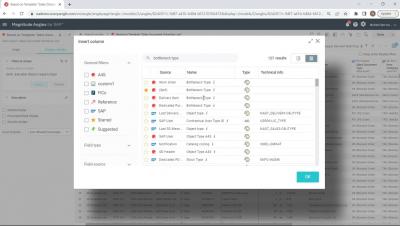

Angles Professional: Operational Reporting for Deltek from insightsoftware

Hitachi Vantara Announces Integrated Solution with Microsoft Azure that Transforms Hybrid Cloud Management

Building Trust in Generative AI

Is the generative AI honeymoon over already? After months of buzz around its transformative possibilities, excitement is now starting to be tempered by growing concerns about trust and data privacy. In the last few weeks alone, several lawsuits have been filed against AI companies, including a well-publicized charge of copyright infringement.

How To Survive a Recession in Business with Data Integration

MLOps for Generative AI in the Enterprise

Consumer Privacy: Getting More from Data Compliance with Embedded Analytics

Redshift vs. Postgres: Key Differences

Why Reinvent the Wheel? The Challenges of DIY Open Source Analytics Platforms

In their effort to reduce their technology spend, some organizations that leverage open source projects for advanced analytics often consider either building and maintaining their own runtime with the required data processing engines or retaining older, now obsolete, versions of legacy Cloudera runtimes (CDH or HDP).

How to Price Analytics Applications

CDO & CDAO Guide to Enterprise Generative AI

We all know that organizations face a huge challenge in extracting valuable insights from vast amounts of data. Chief Data Officers (CDOs) and Chief Data Analytics Officers (CDAOs) play a key role in this process, as they are responsible for managing and leveraging organizational data to drive sustainable and responsible growth. One technology that has revolutionized the way they unlock value from business data is generative artificial intelligence (AI).

Embracing the Future: How Generative AI is Transforming and Supercharging the Landscape of Knowledge Work

The world of knowledge work is undergoing a profound transformation as generative AI emerges as a powerful force driving innovation, efficiency, and productivity. With its ability to analyze vast amounts of data, generate insights, and streamline complex tasks, generative AI is reshaping the way professionals work and unlocking new possibilities. It also raises fears that generative AI will replace knowledge workers.

Unveiling the Key Security Concerns of CISOs Regarding Generative AI within the Enterprise

In today’s rapidly evolving technological landscape, generative artificial intelligence (AI) has emerged as a powerful tool for various industries, and enterprises appear quick to adopt it. Generative AI refers to the use of machine learning algorithms to generate original and creative content such as images, text, or music.

The Art of Data Leadership | A discussion with Chief Digital Officer, Ray Kunik

Expert-Recommended eCommerce KPIs: The Essential Guide to Tracking Performance

Fivetran now supports Delta Lake on Azure as a destination

Learn all about Fivetran’s new Delta Lake on Azure destination.

Create and use a Webhook Notification in SQL Stream Builder

Salesforce's Migration to Snowflake

Geospatial analytics on our radar #EarthEngine #BigQuery

Angles Enterprise for SAP: Unlock the Insights in Your SAP ERP Data

How to use custom holidays for time-series forecasting in BigQuery ML

With custom holiday modeling features, BigQuery users can build more powerful and accurate time-series forecasting models using BigQuery ML.

Dear Databox: 6 Ways to Reduce SaaS Customer Churn by Up to 40%

Data-Informed Decision Making in a Recession

How Kensu's Integration with Matillion empowers data teams to deliver reliable data

It’s a common thread amongst data-driven organizations: data teams face soaring volumes of data with varying complexities, which raise issues regarding data reliability. Efficiently monitoring data pipelines has become paramount to swiftly identifying and addressing potential data incidents, ensuring minimal impact on data practitioners and end users.

Setting data in motion with Qlik Data Integration and Confluent Cloud

Using Integrate.io & SFTP To Transfer Flat File Data

AWS Redshift vs. The Rest - What's the Best Data Warehouse?

In the age of big data, where humans generate 2.5 quintillion bytes of data every single day, organizations like yours have the potential to harness more powerful analytics than ever before. But gathering, organizing, and sorting data still proves a challenge. Put simply, there's too much information and not enough context. The most popular commercial data warehouse solutions like Amazon Redshift say they deliver structured, usable data for businesses. But is this true?

Partners in Innovation: Voice of the Customer Enhancements to Logi Symphony

Boosting Object Storage Performance with Ozone Manager

Ozone is an Apache Software Foundation project to build a distributed storage platform that caters to the demanding performance needs of analytical workloads, content distribution, and object storage use cases. The Ozone Manager is a critical component of Ozone. It is a replicated, highly-available service that is responsible for managing the metadata for all objects stored in Ozone. As Ozone scales to exabytes of data, it is important to ensure that Ozone Manager can perform at scale.

Data Lake ETL: Integrating Data From Multiple Sources

Powering the Latest LLM Innovation, Llama v2 in Snowflake, Part 1

This blog series covers how to run, train, fine-tune, and deploy large language models securely inside your Snowflake account with Snowpark Container Services. This year there has been a surge of progress in the world of open source large language models (LLMs). This world of free and open source LLMs took yet another major step forward just this week with Meta’s release of Llama v2.

Mobile A/B Testing and Conversion Rate Optimization in Product Analytics

Product analytics, as a pivotal component in the modern digital business ecosystem, empowers organizations with data-driven insights to make informed decisions and craft superior user experiences. In particular, A/B testing and conversion rate optimization (CRO) are critical techniques for fine-tuning mobile applications. This article delves into the technical aspects of implementing and analyzing these strategies, specifically within a mobile context.
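To make "analyzing" an A/B test concrete, here is a minimal sketch of one common approach: a two-proportion z-test comparing conversion rates between a control (A) and a variant (B). It is not taken from the article; the function name and counts are hypothetical, and it assumes independent users and sample sizes large enough for the normal approximation to hold.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for H0: both variants convert at the same rate.

    conv_* are conversion counts, n_* are users exposed to each variant.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled conversion rate, i.e. the best estimate if H0 is true
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("standard error is zero; need at least one conversion and one non-conversion")
    z = (p_b - p_a) / se
    # Standard normal CDF expressed via math.erf, doubled for a two-sided test
    p_value = 2 * (1 - 0.5 * (1 + math.erf(abs(z) / math.sqrt(2))))
    return z, p_value


# Hypothetical test: 200/5000 conversions on control vs. 250/5000 on the variant
z, p = two_proportion_z_test(200, 5000, 250, 5000)
print(f"z = {z:.3f}, p = {p:.4f}")
```

With these made-up numbers the variant's lift is significant at the conventional 5% level; in practice, fix the sample size and significance threshold before the test starts rather than peeking repeatedly.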

Applied Machine Learning Prototypes | The Future of Machine Learning

SaaS in 60 - New Cloud Application Sources in Qlik Cloud Data Integration

Walkthrough: Shopify to Snowflake Example - Application Sources - Qlik Cloud Data Integration

Live: Snowflake Summit Recap (w/ Native Apps demo)

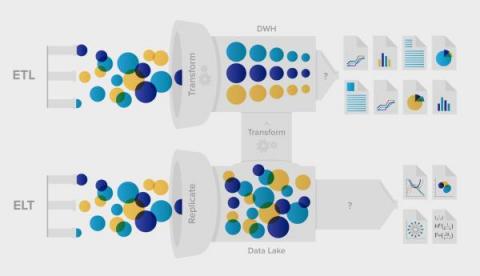

ETL vs ELT: 5 Critical Differences

How to use advanced feature engineering to preprocess data in BigQuery ML

How to preprocess data using BigQuery ML so you can get better insights and models.

The EU-U.S. Data Privacy Framework and how it affects data governance (and you)

A recent decision by the European Commission should make data transit from the EU to the US simpler.

Unlock the Full Potential of Hive

In the realm of big data analytics, Hive has been a trusted companion for summarizing, querying, and analyzing huge and disparate datasets. But let’s face it, navigating the world of any SQL engine is a daunting task, and Hive is no exception. As a Hive user, you will find yourself wanting to go beyond surface-level analysis and dive deep into the intricacies of how a Hive query is executed.

Salesforce Automation Tools: Streamline Your Sales Process

Dear Databox: How Do I Increase Traffic to My B2B SaaS Site?

How ThoughtSpot Partnered with Google Cloud to put AI at the center of BI

At ThoughtSpot, we believe making data accessible to every knowledge worker requires human-centered technology: an analytics experience that bridges the “language” barrier between technology and people. AI is the perfect complement to search because it empowers organizations to analyze, understand, and act on data.

Integrate.io's ELT & CDC Platform Overview

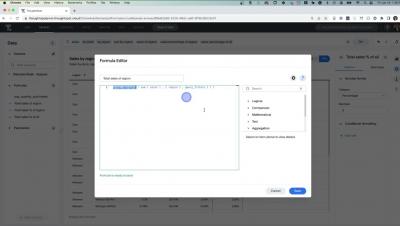

Level of Detail Analysis with ThoughtSpot

Accessing an SFTP Server Step-by-Step

One Big Cluster Stuck: Environment Health Scorecard

Throughout the One Big Cluster Stuck series we’ve explored impactful best practices to gain control of your Cloudera Data Platform (CDP) environment and significantly improve its health and performance. We’ve shared code, dashboards, and tools to help you on your health improvement journey. We’d like to provide one last tool.

Five key attributes of a highly efficient data pipeline

Not all ELT solutions are created equal. Here are the capabilities your tool needs to efficiently move data.

Yellowfin 9.9 Release Highlights

Let Real-Time Data Visualization Drive Your Storytelling

How Generative AI Will Impact the Pharmaceutical Industry

By Noam Harel

In the ever-changing landscape of the pharmaceutical industry, the integration of generative artificial intelligence (AI) holds immense promise and potential, alongside concerns about risk, patient and consumer safety, and tight regulation. Generative AI refers to the ability of machines to autonomously create new and unique content, ideas, or solutions.

Mind the Gap: Bridging the Business Unit AI Innovation Gap

By Noam Harel

In the fast-paced and ever-evolving business landscape, innovation has become the lifeblood of success. Yet, many organizations fail to harness the full potential of innovation due to a significant gap between their business units. This gap, like a hidden chasm, prevents the sharing of best practices, stifling growth and hindering progress.

API Analytics and Monetization with Choreo and Moesif

We're excited to announce the partnership between Choreo and Moesif, bringing you an integration for improved API analytics and monetization. Choreo is our application development suite designed to accelerate the creation of digital experiences. It simplifies the process of building, deploying, and monitoring cloud-native applications, boosting productivity and fostering innovation in organizations.

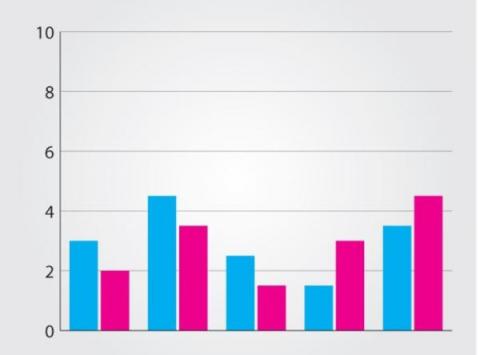

Comparing Data Visualizations: Bar vs. Stacked, Icons vs. Shapes, and Line vs. Area

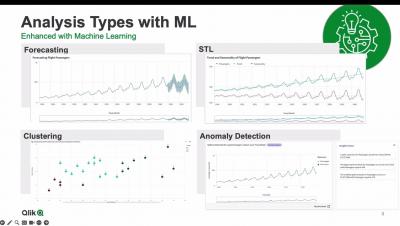

Machine Learning Services for Augmented Analytics

Transforming Banking with AI/ML | OCBC and Cloudera Unleashing Data-Driven Insights

Highlights for OCBC + Cloudera: LLM End User Meetup

Learn how Cloudera can help you trust your enterprise AI at https://www.cloudera.com/why-cloudera/enterprise-ai.html

Join optimizations with BigQuery primary keys and foreign keys

Understand how unenforced key constraints can benefit queries in BigQuery.

From Hive Tables to Iceberg Tables: Hassle-Free

For more than a decade now, the Hive table format has been a ubiquitous presence in the big data ecosystem, managing petabytes of data with remarkable efficiency and scale. But as data volumes, data variety, and data usage grow, users face many challenges with Hive tables because of their antiquated directory-based table format. Common issues include constrained schema evolution, static partitioning of data, and long planning times caused by S3 directory listings.

Modern data architecture allows you to have your cake and eat it, too

How data technologies circumvent ugly tradeoffs and satisfy competing priorities.

Over Half of U.K. Companies Overwhelmed by Data, as Security and Sustainability Challenges Grow

The Future of Data Pipelines: Trends and Predictions

12 Times Faster Query Planning With Iceberg Manifest Caching in Impala

Iceberg is an emerging open table format designed for large analytic workloads. The Apache Iceberg project continues to develop an implementation of the Iceberg specification in the form of a Java library. Several compute engines, such as Impala, Hive, Spark, and Trino, support querying data in Iceberg table format by adopting this Java library provided by the Apache Iceberg project.

Demo: Real-time Data Pipelines for Databricks Lakehouse with Qlik

The Peak AI Platform Lets Businesses Tap Into The Power Of Artificial Intelligence

FinOps Camp Episode 1: Governing Cost with FinOps for Cloud Analytics, Fireside Chat

Top Salesforce Trends Shaping the Future of CRM

BI complications and their solutions

Any organisation wanting to pursue digital transformation understands the value of good-quality data. Data is akin to digital gold, and it is immensely important to strategic decision-making. Ensuring that your data or BI team has everything they need is part of the challenge.

Radiall transforms decision-making with Qlik Cloud

17 Best Data Warehousing Tools and Resources

Using dbt to integrate and transform ASCII files

The final installment of our series on ASCII files: how to combine dbt and Fivetran to integrate ASCII files.

Traditional AI vs. Generative AI

As generative AI continues to captivate attention with its transformative potential, there is a danger that traditional AI and ML become overshadowed. But as I mentioned in my last blog, this would be a mistake as traditional AI methods still hold immense value and relevance, and likely more so than generative AI in the near term.

Data Integration Trends to Watch in 2023

Integrating Cloudera Data Warehouse with Kudu Clusters

Apache Impala and Apache Kudu make a great combination for real-time analytics on streaming data for time series and real-time data warehousing use cases. More than 200 Cloudera customers have implemented Apache Kudu with Apache Spark for ingestion and Apache Impala for real-time BI use cases successfully over the last decade, with thousands of nodes running Apache Kudu.

How to Evolve Your Power BI Solution With Yellowfin

DBS Bank Uplevels Individual Engineers at Scale

DBS Bank leverages Unravel to identify inefficiencies across 10,000s of data applications/pipelines and guide individual developers and engineers on how, where, and what to improve—no matter what technology or platform they’re using.

Talend Release Notes Highlights - R2023-06

SaaS in 60 - Business Glossary Terms Linked to Master Items

Automated Data Movement: Simplify Your Data Architecture with Fivetran

BigQuery Cost Optimization: Storage

BigQuery Cost Optimization: Compute

BigQuery Cost Optimization: Select Queries

Healthcare leader uses AI insights to boost data pipeline efficiency

One of the largest health insurance providers in the United States uses Unravel to ensure that its business-critical data applications are optimized for performance, reliability, and cost in its development environment—before they go live in production. Data and data-driven statistical analysis have always been at the core of health insurance.

Cloudera Data Catalog | Data Stewardship, Data Lakes, & GDPR in Pharma

Database sync: Diving deeper into Qlik and Talend data integration and quality scenarios

A few weeks ago, I wrote a post summarizing "Seven Data Integration and Quality Scenarios for Qlik | Talend," but ever since, folks have asked if I could explain a little deeper. I'm always happy to oblige my reader (you know who you are), so let's start with the first scenario: Database-to-database synchronization.

9 ETL Tests That Ensure Data Quality and Integrity

According to Harvard Business Review, only 3% of companies’ data meets basic quality standards. In the world of data integration, Extract, Transform, and Load (ETL) processes play a vital role in seamlessly moving and transforming data from diverse sources to target systems. However, ensuring the quality and integrity of this data is crucial for accurate decision-making and business success, and ETL testing is the key to achieving reliable data pipelines.
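The teaser does not list the nine tests, but three checks that commonly appear in ETL test suites are completeness (row counts match), not-null constraints on required columns, and key uniqueness. The sketch below shows them over in-memory rows; the function names and sample data are hypothetical, and a real pipeline would run equivalent queries against the source and target systems instead.

```python
from collections import Counter


def row_count_matches(source_rows, target_rows):
    """Completeness check: every extracted row should arrive in the target."""
    return len(source_rows) == len(target_rows)


def null_violations(rows, required_columns):
    """Not-null check: return (row_index, column) pairs where a required value is missing or None."""
    return [
        (i, col)
        for i, row in enumerate(rows)
        for col in required_columns
        if row.get(col) is None
    ]


def duplicate_keys(rows, key):
    """Uniqueness check: return the key values that occur more than once."""
    counts = Counter(row[key] for row in rows)
    return sorted(k for k, c in counts.items() if c > 1)


# Hypothetical source extract and a target load that dropped one row
source = [
    {"id": 1, "email": "a@example.com"},
    {"id": 2, "email": None},
    {"id": 2, "email": "c@example.com"},
]
target = source[:2]

print(row_count_matches(source, target))          # False: one row was lost
print(null_violations(source, ["id", "email"]))   # [(1, 'email')]
print(duplicate_keys(source, "id"))               # [2]
```

Returning the offending rows or keys, rather than a bare pass/fail flag, makes a failed check easier to debug.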

From descriptive to predictive: Your first machine learning model

An overview of common low-hanging fruit to help you get started with machine learning.

Hive Acid Table Replication

Six Most Useful Types of Event Data for PLG

A connector to bring Earth Engine and BigQuery closer together for geospatial analytics

Earth Engine and BigQuery share the goal of making large-scale data processing accessible and usable by a wider range of people and applications.

Top 7 REST API Tools

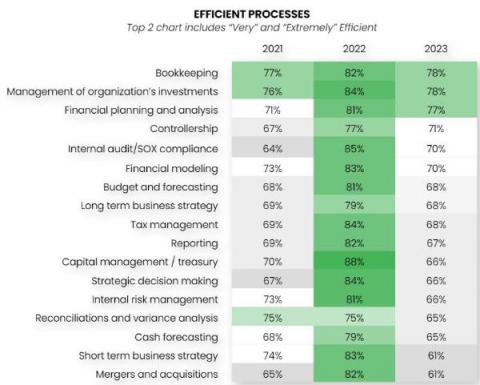

Why Finance Teams are Struggling with Efficiency in 2023

Essential GA4 Events for Optimizing Your SaaS Marketing Funnel

The Role of AI and Machine Learning in Future Product Analytics

In our data-driven world, the landscape of product analytics is rapidly evolving. With the rise of Artificial Intelligence (AI) and Machine Learning (ML), we're seeing a seismic shift in how businesses approach product development and enhancement. But how do AI and ML fit into product analytics, particularly for non-technical business leaders and marketers? And more importantly, what does this mean for the future?

Fivetran Demo: Elevate Your HubSpot Email Marketing Campaign with Fivetran and Sigma Computing

Airbyte vs. Talend: Pros & Cons Comparison

Key Takeaways on Generative AI for CEOs: Revolutionizing Business with Speed and Trust

Generative AI stands out from other technological breakthroughs because of the unprecedented speed at which it has advanced. In the few months since it first emerged into the limelight, this cutting-edge innovation has already scaled, with the aim of delivering substantial return on investment. However, it is imperative to harness this formidable technology effectively, ensuring that it can be deployed at scale and yield outcomes that earn the trust of your business stakeholders.

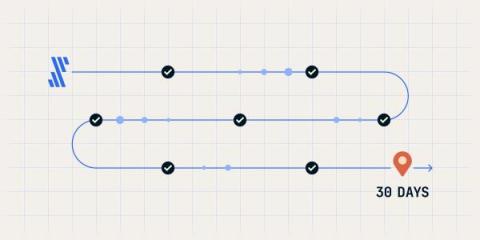

Your first 30 days as a Fivetran user

Upgraded from the free trial and wondering what’s next? Start with this easy onboarding guide!

18 Best Dashboards Recommended By Experts [Templates Included]

The 7 Best Data Orchestration Tools for Data Teams in 2023

Keboola Rocks the Stage with 18 Badges in G2's Summer 2023 Grid Report!

Salesforce Data Integration

Understanding the Elasticsearch Query DSL: A Quick Introduction

Elasticsearch is a distributed search and analytics engine that excels at handling large volumes of data in real time. With such a large repository of data, singling out the most relevant results can be a grueling task, and that is precisely why we query. Querying lets us search for and retrieve relevant data from an Elasticsearch index with relative ease. Elasticsearch provides the Query DSL for this purpose: a powerful, JSON-based language for expressing such search queries.
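As a small illustration of what a Query DSL request looks like, the sketch below builds a `bool` query in Python: a full-text `match` clause in `must` (which contributes to relevance scoring) and a `range` clause in `filter` (which only includes or excludes documents). The helper function and the field names `message` and `@timestamp` are assumptions made for this example; the resulting JSON body would be sent to an index's `_search` endpoint, e.g. `POST /<index>/_search`.

```python
import json


def build_search_body(text, field="message", days=7, size=10):
    """Build a Query DSL body: scored full-text match plus a non-scoring time filter.

    `field` and the "@timestamp" field are hypothetical; use your index's mapping.
    """
    return {
        "size": size,
        "query": {
            "bool": {
                # "must" clauses have to match and affect the relevance score
                "must": [{"match": {field: text}}],
                # "filter" clauses have to match but do not affect scoring;
                # "now-7d/d" is date math: 7 days ago, rounded down to the day
                "filter": [{"range": {"@timestamp": {"gte": f"now-{days}d/d"}}}],
            }
        },
    }


body = build_search_body("connection timeout")
print(json.dumps(body, indent=2))
```

Putting the time window in `filter` rather than `must` is a common design choice: it keeps the score focused on text relevance and lets Elasticsearch cache the filter.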

The Showdown: Snowpark vs. Spark for Data Engineers

Calving Apache Iceberg

Introducing the Hive-BigQuery open-source Connector

With the open-source Hive-BigQuery Connector, you can now let Apache Hive workloads read and write to BigQuery and BigLake tables.