
October 2023

Demystifying SFTP Data Transfer: A Step-by-Step Tutorial

Managed Detection & Response Leaders Embrace Data and Analytics to Stay Ahead

The Managed Detection & Response (MDR) industry finds itself in a new era with unprecedented challenges from platform giants and the migration of the attack surface to the cloud, with innovation becoming a requirement for survival. Companies built to provide clients with 24×7 “eyes on glass” now find themselves at the intersection of rapid technological advancements and evolving threat landscapes.

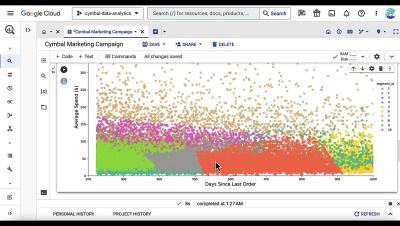

Built with BigQuery: Drive growth through data monetization

At Next ‘23, Exabeam, Dun & Bradstreet, Optimizely and LiveRamp shared how they use BigQuery and Google Data and AI Cloud for data monetization.

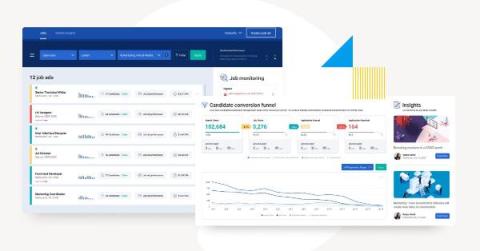

Built with BigQuery: Expedite GTM insights with ZoomInfo B2B data in Analytics Hub

ZoomInfo and Google Cloud recently expanded their partnership to offer ZoomInfo’s Data Cubes for Google Cloud customers via Google Analytics Hub.

The 14 Website Engagement Metrics Every Marketing Team Should Be Tracking in 2023

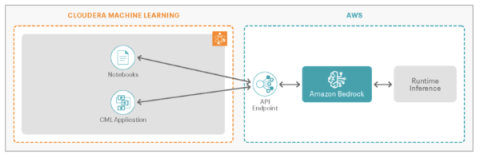

Build Modern Innovative Solutions on Cloudera Data Platform Using the Power of Generative AI with Amazon Bedrock

Enterprises see embracing AI as a strategic imperative that will enable them to stay relevant in increasingly competitive markets. However, it remains difficult to quickly build these capabilities given the challenges with finding readily available talent and resources to get started rapidly on the AI journey.

What is Yellowfin Assisted Insights? How to Get the 'Why' Faster

You wanted to learn how to work with Multiple Calendars. Here's how.

The Strangler Pattern | Microservices 101

Five ways Fivetran lays the foundation for machine learning

Data integration is essential for analytics, regression analysis and your first forays into generative AI.

Google Analytics to BigQuery ETL: 3 Easy Methods

Cloud Analytics Powered by FinOps

Cloud transformation is ranked as the cornerstone of innovation and digitalization. The legacy IT infrastructure to run the business operations—mainly data centers—has a deadline to shift to cloud-based services. Agility, innovation, and time-to-value are the key differentiators cloud service providers (CSP) claim to help organizations speed up digital transformation projects and business objectives.

Collect Logs and Traces From Your Snowflake Applications With Event Tables

We are excited to announce the general availability of Snowflake Event Tables for logging and tracing, an essential feature to boost application observability and supportability for Snowflake developers. In our conversations with developers over the last year, we’ve heard that monitoring and observability are paramount to effectively develop and monitor applications. But previously, developers didn’t have a centralized, straightforward way to capture application logs and traces.

ClearML Announces Extensive New Capabilities for Optimizing GPU Compute Resources

To ensure a frictionless AI/ML development lifecycle, ClearML recently announced extensive new capabilities for managing, scheduling, and optimizing GPU compute resources. This capability benefits customers regardless of whether their setup is on-premise, in the cloud, or hybrid. Under ClearML’s Orchestration menu, a new Enterprise Cost Management Center enables customers to better visualize and oversee what is happening in their clusters.

Building a Modern Data Stack from the Ground Up

Build AI-driven near-real-time operational analytics with Amazon Aurora zero-ETL integration with Amazon Redshift and ThoughtSpot

Every business that analyzes its operational (or transactional) data needs to build a custom data pipeline involving several batch or streaming jobs to extract transactional data from relational databases, transform it, and load it into the data warehouse. In this post, we show how you can leverage Amazon Aurora zero-ETL integration with Amazon Redshift and ThoughtSpot for GenAI-driven near-real-time operational analytics.

COMING SOON: Uncover Opportunities In Your Data With New Visualizations and More Powerful Charts

Send New Crashes To Your Slack Using Countly

Countly has many powerful features, and one of them is Hooks. You can set up custom triggers and actions, and we want to expand it even further. But for now, let’s check some simple but powerful use cases you can implement, such as notifying you in Slack about new crashes happening in your app.

Open Data Lakehouse for Private Cloud Iceberg Replication

Kensu extends Data Observability support for Microsoft users with its Azure Data Factory integration

Announcing Apache Flink 1.18

The Apache Flink PMC is pleased to announce the release of Apache Flink 1.18.0. As usual, we are looking at a packed release with a wide variety of improvements and new features. Overall, 174 people contributed to this release, completing 18 FLIPs and 700+ issues. Thank you! Let's dive into the highlights.

Salesforce REST API Integration: A Step-by-Step Guide

Prevent data issues from cascading and deliver reliable insights with Kensu + Azure Data Factory

38% of data teams spend between 20% and 40% of their time fixing data pipelines¹. Combating these data failures is a costly and stressful activity for those looking to deliver reliable data to end users. Organizations using Azure Data Factory can now benefit from the integration with Kensu to expedite this process. Their data teams can now observe data within their Azure Data Factory pipelines and receive valuable insights into data lineage, schema changes, and performance metrics.

Think Like a Data Scientist: The Importance of Building a Data-Driven Company Culture

We’ve all heard that data helps businesses make better decisions. The good news? This isn’t just speculation: research shows that companies who use data to drive decision making increase revenues by an average of more than 8%, are 23 times more likely to attract new customers, and are 19 times more likely to be profitable as a result.

How Healthcare and Life Sciences Can Unlock the Potential of Generative AI

A patient interaction turned into clinician notes in seconds, increasing patient engagement and clinical efficiency. Novel compounds designed with desired properties, accelerating drug discovery. Realistic synthetic data created at scale, expediting research in rare under-addressed disease areas.

Set Your Data in Motion with Data Streaming

Unlocking Team Potential by Accelerating Code Reviews

In the fast-paced world of software development, speed and efficiency are paramount. The State of DevOps 2023 report by Google has reinforced the significance of quick code reviews, claiming that teams with faster code reviews experience a staggering 50% higher software delivery performance. This finding underscores the importance of optimizing the code review process. But how can teams achieve this improvement?

3 Questions Marketers Should Ask When Evaluating AI Solutions

AI. It’s on everyone’s mind—and marketers are no exception. You’ve likely heard about it from co-workers, vendors and peers, and if you had a nickel for every AI mention you heard … well, you get the point.

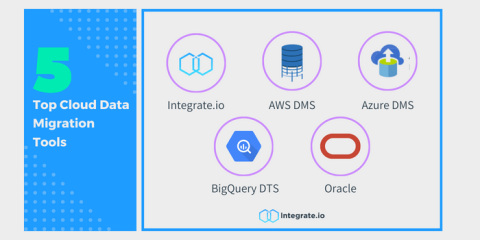

The Top 5 Cloud Data Migration Tools

Top 5 Resources to Understand the Role of AI/ML in Embedded Analytics

Customer Impact Matters: Keeping our team focused on our customers

The best partners will be the most ambitious ones

Is Shadow IT a nuisance, or does Shadow IT lead the way?

Art of Data Leadership | How to become a data leader

Over 40% of Businesses Experience Revenue Losses from Technology Downtime, Cloud Complexity, and Legacy System Constraints

Getting Started with Generative AI

It's not hard, it's just new. How can you, your business unit, and your enterprise utilize the exciting and emerging field of Generative AI to develop brand-new functionality? And once you’ve figured out your use cases, how do you successfully build in Generative AI? How do you scale it to production grade?

Boost Your Business with Secure SFTP File Sharing

Remove Data Siloes to Increase the Value of Your SAP Reporting

Transforming Telco with Trusted AI Everywhere

The AI technologies of today—including not just large language models (LLMs) but also deep learning, reinforcement learning, and natural-language processing (NLP) tools—will equip telcos with powerful new automation and analytics capabilities. AI-powered automation is already driving significant margin growth by reducing costs.

Expert Guide for SaaS Content Marketing in 2024 [Insights from 50 Industry Pros]

Content Marketing Benchmarks by Industry for 2023

Can generative AI be the smartest member of your company?

Fivetran COO, Taylor Brown, spearheads AI success via robust data foundation.

Data Ownership | Microservices 101

How to Make a REST API

How to map your journey towards modern data migration

Learn key strategies for migrating legacy systems to cloud-based platforms to modernize.

Snowflake To Acquire Ponder, Boosting Python Capabilities In the Data Cloud

Python’s popularity has more than doubled in the past decade¹ and it is quickly becoming the preferred language for development across machine learning, application development, pipelines, and more. One of our goals at Snowflake is to ensure we continue to deliver a best-in-class platform for Python developers.

Optimizing Personalization Gives Telecoms The Competitive Edge They Need To Win

In today’s tough market, telecommunications companies are feeling the pinch. The telecom industry has undergone enormous change in the last few years. Rapid advances in technology such as the emergence of 5G Wi-Fi, the expansion of fixed wireless access and satellite services and the explosion of IoT devices have put increased pressure on aging legacy equipment, systems and communication networks.

DoD Launches Task Force Lima to Explore Generative AI

AI is the next revolutionary technology that will accelerate the mission of the Department of Defense. Newly boundless in its applications, “AI” joins “cyber” and “cloud” as the most important information technologies that have arrived in the last 25 years.

The Art of Data Leadership | A discussion with Cloudera's CDAO, Shayde Christian

How DISH Wireless Benefits From Data Products Built With Confluent

Source XML files in Amazon S3 into a Kafka topic. Then query with SQL

Yellowfin Cool Features Part 2: Broadcast and Bookmark

Sharing Datasets across organizations with BigQuery Analytics Hub

Learn how to use BigQuery analytics hub to set up a functional dataset share across organizations.

9 Underutilized Marketing Channels for Small Businesses in 2024

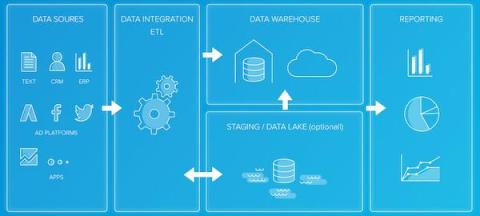

ETL and Data Warehousing Explained: ETL Tool Basics

Database connector setup: A step-by-step guide

Learn the five quick and easy steps to set up database connectors.

Apache Kafka | The Streaming Platform that Powers the World

AI-Powered Performance Summaries | Databox Analytics

How Integrate.io Helps You Build Powerful Salesforce Pipelines

New IDC analysis: The value of Fivetran for enterprise

The quantifiable results of automating, replicating and connecting sources, then moving data with speed? $1.5 million in average annual productivity benefit.

Four Ways Telcos Can Realize Data-Driven Transformation

Telecommunications companies are currently executing on ambitious digital transformation, network transformation, and AI-driven automation efforts. While navigating so many simultaneous data-dependent transformations, they must balance the need to level up their data management practices—accelerating the rate at which they ingest, manage, prepare, and analyze data—with that of governing this data.

Autonomous Microservices | Microservices 101

Using Data Contracts with Confluent Schema Registry

The Confluent Schema Registry plays a pivotal role in ensuring that producers and consumers in a streaming platform are able to communicate effectively. Ensuring the consistent use of schemas and their versions allows producers and consumers to easily interoperate, even when schemas evolve over time.
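
To make the idea of schemas evolving safely a little more concrete, here is a minimal sketch of a backward-compatibility check in plain Python. It is a simplified illustration, not the Schema Registry's actual implementation or API: the function name `is_backward_compatible` and the dictionary layout for fields are assumptions made for this example, and real Avro resolution rules (type promotion, unions, aliases) are ignored.

```python
def is_backward_compatible(old_fields, new_fields):
    """Return True if a reader using new_fields can decode records written with old_fields.

    Both arguments map a field name to a dict with a "type" key and an
    optional "default" key (hypothetical layout, chosen for illustration).
    """
    for name, spec in new_fields.items():
        if name in old_fields:
            # A field present in both versions must keep its type
            # (type promotion is deliberately not modeled here).
            if old_fields[name]["type"] != spec["type"]:
                return False
        elif "default" not in spec:
            # A newly added field needs a default, because old records lack it.
            return False
    # Fields dropped from the new schema are simply ignored by the reader.
    return True


if __name__ == "__main__":
    v1 = {"id": {"type": "long"}, "email": {"type": "string"}}
    v2 = dict(v1, plan={"type": "string", "default": "free"})
    print(is_backward_compatible(v1, v2))
```

In this sketch, adding a field with a default or dropping a field keeps old records readable, while adding a required field or changing a type does not.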

Accelerating Cost Reduction: AI Making an Impact on Financial Services

In the ever-evolving landscape of the financial services industry, change is a constant and transformation is a requirement to stay at pace with new regulations, risk mitigation, and the technological developments that support transformation. And just as financial services experiences its cycles, this time of year I find myself returning to the topic of cost reduction.

Top 9 Data Mining Tools for Business Insights

Data Mart vs Data Warehouse: 5 Critical Differences

Product-Led Growth: 6 Secrets for Success

Product-led growth (PLG) is a business model that emerged in the last decade with the enormous success of vendors like Slack and Datadog. Unlike traditional sales-led models, PLG models cut out the middlemen (sales reps, for example) and let customers just download and use the product without third-party onboarding. The relative novelty of the pricing model and its demonstrably successful application in growing these companies attracted a lot of attention.

How To Manage Stateful Streams with Apache Flink and Java

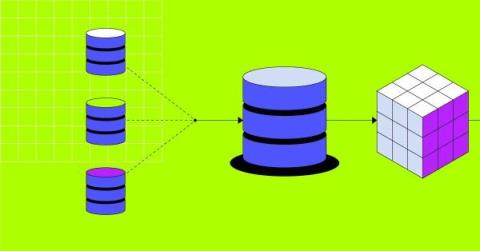

Multi-tiered architectures for data warehouse

In “The fundamentals of data warehouse architecture,” we covered the standard layers and shared components of a well-formed data warehouse architecture. In this second part, we’ll cover the core components of the multi-tiered architectures for your data warehouse.

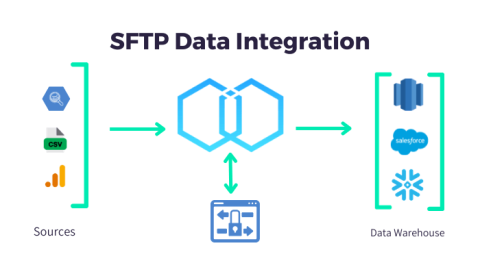

How to Optimize Your SFTP Data Integration Process

SaaS in 60 - Customer Managed Keys - Phase 2

The Value of an Enterprise Data Warehouse

Getting Started With Cloudera Open Data Lakehouse on Private Cloud

Cloudera recently released a fully featured Open Data Lakehouse, powered by Apache Iceberg in the private cloud, in addition to what’s already been available for the Open Data Lakehouse in the public cloud since last year. This release signified Cloudera’s vision of Iceberg everywhere. Customers can deploy Open Data Lakehouse wherever the data resides—any public cloud, private cloud, or hybrid cloud, and port workloads seamlessly across deployments.

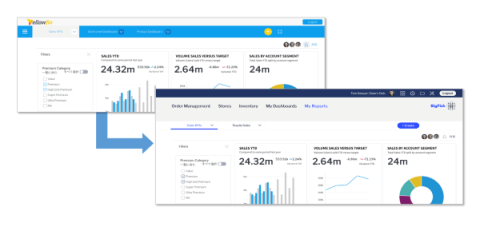

How to Build Multi-Tenant Environments with Yellowfin BI

Unleash cloud-native analytics and AI on-premises with Cloudera

Leadership Principles | Culture Insights

The Evolution of Search: How Multi-Modal LLMs Transcend Vector Databases

Build AI/ML and generative AI applications in Python with BigQuery DataFrames

Learn how to perform analytics on BigQuery data using BigQuery DataFrames and its bigframes.pandas and bigframes.ml APIs.

Securing Your Data Universe | A Deep Dive into Apache Ranger's Architecture & Capabilities

Hevo Data vs. Talend vs. Integrate.io: Key Features & More

Snowflake and Partners Develop Award-Winning Solution to Give Telecoms and Consumers the Power to Reduce Carbon Emissions with Generative AI

In the age of climate consciousness, industries worldwide are grappling with the urgent need to reduce their carbon footprints. One industry that has come under increased scrutiny is telecommunications, where Scope 3 emissions, or the indirect emissions that occur in a company’s value chain that the company has no direct control over, alone account for a staggering 85% of a typical telecom company’s carbon footprint.

Accelerate the Data Analytics Life Cycle with Unravel

Talend Release Notes Highlights - August and September

insightsoftware Introduces Logi Symphony Embedded Business Intelligence and Analytics for Any Web-Based Application

Unleashing Decision Intelligence With Logi Symphony

Streamlining Operations: A Guide to Automating SFTP

Query fresh Google Ads data in BigQuery, via Apache Beam and Dataflow

Now, you can write Google Ads data to BigQuery using Dataflow, enabling you to make data-driven decisions on campaign strategies in real-time.

Introducing Apache Kafka 3.6

We are proud to announce the release of Apache Kafka® 3.6.0. This release contains many new features and improvements. This blog post will highlight some of the more prominent features. For a full list of changes, be sure to check the release notes.

September 2023 Sustainability Reflections: Investments, AI, and Partnerships Will Lead to Progress

Every September, world leaders, business executives, investors, NGOs, and global citizens descend upon New York City to learn and discuss progress towards building a sustainable future for all. Although there was much excitement and energy surrounding the UN General Assembly and Climate Week this year, there was very sobering news that more must be done if we expect to achieve the goals set out by the UN 17 Sustainable Development Goals (SDGs) and Paris Climate Accords.

5 Trends Changing the Modern Startup Ecosystem

While the startup world listened for better news in the aftermath of a volatile 2022, a new salvo of bad news emerged: global venture capital funding declined about 49% within the first six months of 2023 alone. Worsening inflation and rising interest rates are putting pressure on startups across all stages of venture funding to reframe their tech stacks or business models along the tech-scape’s collapsing edges.

ServiceNow's Migration from SAP HANA to Snowflake

Apache Kafka 3.6 - New Features & Improvements

Unravel BigQuery Insights Feature

Unravel BigQuery TopK Feature

Unravel BigQuery Alerts Feature

Unravel BigQuery Cost 360 Feature

Hitachi Vantara Unveils Hitachi Virtual Storage Platform One, Signifying a New Hybrid Cloud Approach to Data Storage

Data Integration for Supply Chain Resilience During a Recession

Fivetran vs Integrate.io: Overview and Comparison

Yellowfin vs Qrvey: What's the difference?

Duet AI in Google Cloud - code assistance in BigQuery

From Monoliths to Microservices | Microservices 101

Increase data literacy and trust with Alation data catalog integration

When using data to make impactful business decisions, certain doubts may start to arise, like “What does this column exactly mean?” or “Can I trust this data source I want to use?” Questions like these speak to a larger need for increased data literacy and trust in data. ThoughtSpot continually invests in this area, giving users the confidence to build the correct Answers needed for their analysis—and ensuring they can trust the data they are shown.

What's New in ThoughtSpot - 9.6.0 Cloud Release

Fivetran Co-Founder, Taylor Brown, gives his insights from his recent visit to Big Data London

Having trouble loading your JSON into BigQuery? Try this!

Easily load all your JSON data and redirect your focus to deriving data insights.

The Best Way to Index and Query JSON Logs

What is ETL?

These days, companies have access to more data sources and formats than ever before: databases, websites, SaaS (software as a service) applications, and analytics tools, to name a few. Unfortunately, the ways businesses often store this data make it challenging to extract the valuable insights hidden within — especially when you need it for smarter data-driven business decision-making.
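
As a rough illustration of the extract, transform, load sequence that the term ETL stands for, the sketch below runs all three steps in memory using only the Python standard library. Everything in it is an assumption made for the example: the `RAW_CSV` sample data, the `orders` table, and the function names are hypothetical, and a real pipeline would read from actual sources and write to a data warehouse rather than an in-memory SQLite database.

```python
import csv
import io
import sqlite3

# Hypothetical raw export; in practice this would come from a file, an API, or a database.
RAW_CSV = """order_id,customer,amount
1, Alice ,19.99
2,Bob,
3, Carol ,5.00
"""


def extract(text):
    """Extract: parse CSV text into a list of dicts keyed by column name."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transform: trim whitespace, cast types, and drop rows with no amount."""
    cleaned = []
    for row in rows:
        amount = row["amount"].strip()
        if not amount:
            continue  # skip incomplete records
        cleaned.append((int(row["order_id"]), row["customer"].strip(), float(amount)))
    return cleaned


def load(rows, conn):
    """Load: write the cleaned rows into a target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(order_id INTEGER PRIMARY KEY, customer TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", rows)
    conn.commit()


def run_pipeline(conn):
    """Run extract -> transform -> load, then query the loaded table."""
    load(transform(extract(RAW_CSV)), conn)
    return conn.execute(
        "SELECT customer, amount FROM orders ORDER BY order_id"
    ).fetchall()


if __name__ == "__main__":
    print(run_pipeline(sqlite3.connect(":memory:")))
```

Keeping the three stages as separate functions mirrors how ETL tools let you swap a source or a destination without touching the cleaning logic.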

Countly: The Only Self-Hosted Enterprise Solution for Product Analytics

For the past 10 years, Countly has distinguished itself in the analytics landscape, not by coincidence, but owing to its distinctive architecture, exceptional capabilities, and privacy-centric ethos. In the realm of self-hosted enterprise solutions for product analytics, Countly isn’t merely a contender - it's a pacesetter. This article delves into the myriad reasons Countly is held in high esteem as the unrivaled self-hosted enterprise solution in product analytics.

More Effectively Control and Limit Your Spend With Budgets

At Snowflake, we’re committed to helping customers effectively manage and optimize spend. To this effect, we’re excited to launch the public preview of Budgets on AWS today, which enables customers to set spending limits and receive notifications for Snowflake credit usage for either their entire Snowflake account or for a custom group of resources within an account.

Mastering Apache YuniKorn | Kubernetes Resource Scheduling, Deployment, and Beyond

Selling Smarter: Expert-Recommended Strategies for Nailing Every Step of Your Sales Process

Choosing the Right Host for SFTP: Factors to Consider

Financial transaction analytics 101: Efficiently identifying impact

The foundations of financial transaction analytics and how database pipelines play a part in trend analysis.

New Industry Research Reveals a Profound Gap Between Hyper-inflated Expectations and Business Reality When it Comes to Gen AI

Read About The Hidden Costs, Challenges, and Total Cost of Ownership of Generative AI Adoption in the Enterprise as Well as C-level Key Considerations, Challenges and Strategies for Unleashing AI at Scale

ClearML recently conducted two global survey reports with the AI Infrastructure Alliance (AIIA) on the business adoption of Generative AI. We surveyed 1,000 AI Leaders and C-level executives in charge of spearheading Generative AI initiatives within their organizations.

Five Common Pitfalls on the Path to Becoming a Data-Driven Enterprise

Your company collects data from different sources and then you analyze the data to help make the right decisions. But you aren’t quite getting the results that you expect. Maybe the insights aren’t accurate. Perhaps the process is time-consuming and cumbersome. Or you are currently using data for only a few use cases and struggle to implement it organization-wide.

An In-Depth Look At The Data.World Data Catalog And Governance Platform

Power ON: Supercharge Power BI with Planning and Write-back

Yellowfin Cool Features Part 1: Making Reports Pop

The Top 10 Best API Integration Tools For Developers

Improved Hybrid Cloud Data Migration Starts with Data Modernization

Finding meaningful cost savings is a high priority for companies running their enterprise architecture in the public cloud. Cloud costs have ballooned in recent years and account for over 30% of IT budgets, reports IDC. Enterprises have sought to shift their cloud workloads from on-premises to public clouds, depending upon pricing and performance, to provide themselves with better leverage and business flexibility.

Security and risk mitigation in an LLM world

We’ve talked about the many ways large language models (LLMs) and artificial intelligence (AI) are impacting business efficiency, data and analytics, and even FinOps. But we’ve yet to talk about arguably one of the most important areas of concern: security.

Don't Blink: You'll Miss Something Amazing!

Fast-moving data and real-time analysis present us with some amazing opportunities. Don’t blink, or you’ll miss it! Every organization has some data that happens in real time, whether it is understanding what our users are doing on our websites or watching our systems and equipment as they perform mission-critical tasks for us. This real-time data, when captured and analyzed in a timely manner, may deliver tremendous business value.

Produce Apache Kafka Messages using Apache Flink and Java

Customer Stories: How Streaming Pipelines Drive Better Business Outcomes

Kensu and CDO Magazine announce the results of the first definitive study of the Data Observability Market

Exploring 8 Business Analytics Data Collection Methods

Pincho Nation: Insights at Double Speed & Six-Figure Revenue Growth With Keboola

Configure and Manage Data Pipelines Replication in Snowflake with Ease

We are excited to announce the availability of data pipelines replication, which is now in public preview. In the event of an outage, this powerful new capability lets you easily replicate and fail over your entire data ingestion and transformation pipelines in Snowflake with minimal downtime.