December 2021

How to Write a Great Marketing Plan? Get Inspired By These 7 Marketing Report Examples

Heroku Private Space and mTLS

2021 - The Year of Innovations for "Jobs to be Done"

While COVID-19 continues to cause devastating disruption to the global economy more than two years into the pandemic, it is also continuing to force remarkable innovation across different industries. Companies have found new ways to sell, service and operate during the crisis. For me, there is one common theme for these innovative companies, including Qlik, and it is “Jobs to be Done.”

Data Insights: Best Practices for Extracting Insights from Data

Connecting to MySQL With Python

Looking into 2022: Predictions for a New Year in MLOps

In an era where the passage of time seems to have changed somehow, it definitely feels strange to already be reflecting on another year gone by. It’s a cliche for a reason–the world definitely feels like it’s moving faster than ever, and in some completely unexpected directions. Sometimes it feels like we’re living in a time lapse when I consider the pace of technological progress I’ve witnessed in just a year.

Direct vs. Indirect Competition: Most Important Things You Can Learn from Monitoring Both

9 Expert Tips for Using Snowflake

Migrating Data During a Merger or Acquisition

Since I’m now migrating NodeGraph’s processes to Qlik, I thought it may be a good time to talk about migrating data during a merger or acquisition. There are many aspects to consider. Here are some of my thoughts on why companies merge or migrate data landscapes, common M&A migration pitfalls and how to avoid them, the time and cost involved migrating data during a merger or acquisition, and other topics.

How to Price Your SaaS Product: 9 Tips to Get Started

How to Start E-Commerce Integration

HubSpot Reporting: How to Use HubSpot to Build Better Reports for Your Department

Operationalize Your Data Warehouse With Reverse ETL

How to Write a Marketing Strategy? (5 Ready-to-Use Report Templates Included)

Why Log Data Retention Windows Fail

Why You Need a Salesforce Uploader Today!

Three reasons you need modern cloud analytics now

Data is everywhere. As the sheer volume and number of data sources continue to explode, so do new opportunities for modern businesses to create and act on insights. That is, if they are equipped with the right analytics technology. Historically, many businesses have settled for “good enough” analytics tools, putting up with lackluster bundles from full-stack vendors in an attempt to minimize cost or risk.

How to Learn Python Scripting in 7 Simple Steps

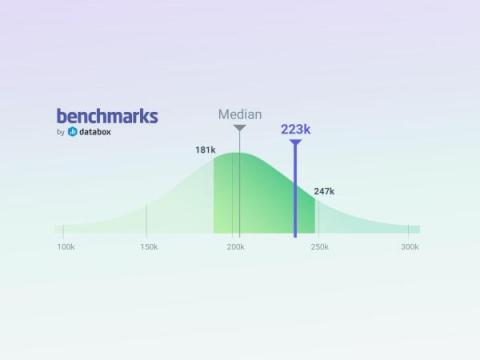

Opt-in Now to Databox's Benchmark Feature to Get Free Access

6 Data Quality Issues in Reporting and Best Practices to Overcome Them

How To Use Change Data Capture with Integrate.io

Cloudera Data Engineering 2021 Year End Review

Since the release of Cloudera Data Engineering (CDE) more than a year ago, our number one goal was operationalizing Spark pipelines at scale with first class tooling designed to streamline automation and observability. In working with thousands of customers deploying Spark applications, we saw significant challenges with managing Spark as well as automating, delivering, and optimizing secure data pipelines.

Why Understanding Dark Data Is Essential to the Future of Finance

“Water, water, everywhere, nor any drop to drink.” The famous line from Samuel Taylor Coleridge’s epic poem “The Rime of the Ancient Mariner” has a fitting application to today’s data problem. Enterprises are deluged with data, but they often have no way to leverage it. According to most experts, only a small percentage of data is usable and made useful, and most of it is in the dark — thus the term, “dark data.”

New Year, New UI: Get Started in Snowsight

Out with the old; in with the new! If you haven’t already checked out the new Snowflake® interface (aka Snowsight®), make it your New Year’s resolution. Set yourself up for success in 2022 by spending a few minutes getting to know the new features and experiences that are in public preview—available when you click the Snowsight button at the top of your console’s menu bar.

Big Data Meets the Cloud

With interest in big data and cloud increasing around the same time, it wasn’t long until big data began being deployed in the cloud. Big data comes with some challenges when deployed in traditional, on-premises settings. There’s significant operational complexity, and, worst of all, scaling deployments to meet the continued exponential growth of data is difficult, time-consuming, and costly.

Leveraging BigQuery Audit Log pipelines for Usage Analytics

In the BigQuery Spotlight series, we talked about Monitoring. This post focuses on using Audit Logs for deep dive monitoring. BigQuery Audit Logs are a collection of logs provided by Google Cloud that provide insight into operations related to your use of BigQuery. A wealth of information is available to you in the Audit Logs. Cloud Logging captures events which can show “who” performed “what” activity and “how” the system behaved.

10 Best Practices for Building a Good API

Recognizing Organizations Leading the Way in Data Security & Governance

The right set of tools helps businesses utilize data to drive insights and value. But balancing a strong layer of security and governance with easy access to data for all users is no easy task. Retrofitting existing solutions to ever-changing policy and security demands is one option. Another option — a more rewarding one — is to include centralized data management, security, and governance into data projects from the start.

13 Biggest Bottlenecks That Keep Your Business from Growing

Web Analytics Reports: How to Create, Read, and Understand Them (With 7 Examples)

Modern Data Stack using Integrate.io for the ELT

Is SSIS a Good ETL Tool?

Data Goes Around The World In 80 Seconds With Snowflake

Continual Launches With $4 Million in Seed to Bring AI to the Modern Data Stack

Will cloud ecosystems finally make insight to action a reality?

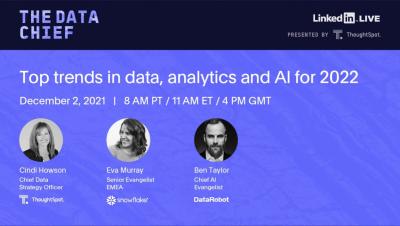

For decades, the technologies and systems that deliver analytics have undergone massive change. What hasn’t changed, however, is the goal: using data-driven insights to drive actions. Insight to action has been a consistent vision for the industry. Everyone from data practitioners to technology developers has sought this elusive goal, but as Chief Data Strategy Officer Cindi Howson points out, it has remained unfulfilled, until now.

Leverage the Power of Fivetran Business Critical on Microsoft Azure

Enterprises using Microsoft Azure can now deliver secure, automated data pipelines while boosting compliance with privacy and industry mandates using Fivetran Business Critical.

Announcing Our $4M Seed and Continual Public Beta

Today we’re excited to announce the public beta launch of Continual, the first operational AI platform built specifically for modern data teams and the modern data stack. We’re also announcing our $4M Series Seed, led by Amplify Partners, and joined by Illuminate Ventures, Wayfinder, DCF, and Essence, as well as new partnerships with Snowflake and dbt Labs.

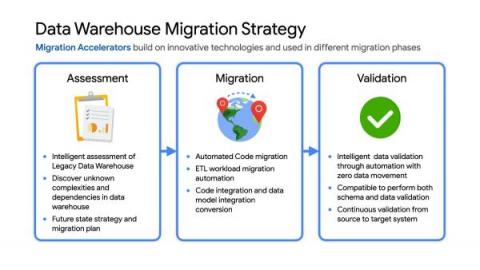

How to migrate an on-premises data warehouse to BigQuery on Google Cloud

Data teams across companies face continual challenges in consolidating data, processing it and making it useful. They deal with a mixture of multiple ETL jobs, long ETL windows, capacity-bound on-premises data warehouses and ever-increasing demands from users. They also need to make sure that the data processing meets the downstream requirements of ML, reporting and analytics.

Unlocking Data Literacy Part 2: Building a Training Program

What is Amazon Redshift Spectrum?

Redshift Join: How to use Redshift's Join Clause

What Are The Best ETL Tools For Vertica?

PostgreSQL to Amazon Redshift: 4 Ways to Replicate Your Data

Adopting a Production-First Approach to Enterprise AI

After a year packed with one machine learning and data science event after another, it’s clear that there are a few different definitions of the term ‘MLOps’ floating around. One convention uses MLOps to mean the cycle of training an AI model: preparing the data, evaluating, and training the model. This iterative or interactive model often includes AutoML capabilities, and what happens outside the scope of the trained model is not included in this definition.

How To Overcome Hybrid Cloud Migration Roadblocks

The Cloudera Enterprise Data Maturity Report is a global survey of 3,150 business and IT decision makers assessing organizations’ maturity when it comes to their current capabilities and handling of data and analytics.

What's new in ThoughtSpot Analytics Cloud November 2021 Release

SaaS in 60 - Catalog KPI and Qlik Lineage Connectors

Why a Data Lakehouse alone is not the answer to modern analytics

A Customer's Experience: Digital River

New Report from KX Plots a Path for Telco Operators to Capture The $200Bn Opportunity

Content Marketing Reporting: Best Practices and Tools for Building Great Reports (Free Ready to Use Templates Included)

How Data is Shaping the Future of Marketing Personalization

How One Marketing Agency Improved Recurring Revenue by 20 Percent by Reporting Video ROI with Databox

Redshift Copy: How to use Redshift's COPY command

The Ultimate Guide to Redshift ETL: Best Practices, Advanced Tips, and Resources for Mastering Redshift ETL

AWS Redshift Pricing: How much does Redshift cost?

Microsoft Azure vs Amazon Redshift

Stitch builds on its Microsoft technology partnership

Stitch is pleased to announce the availability of Microsoft SQL Server as a destination. MS SQL Server joins nine other data destinations (including Microsoft Azure Synapse) that Stitch supports to help execute all your data modeling and analysis projects. Stitch customers can immediately benefit from the new destination, which supports both Azure SQL Server and standard SQL Server editions reaching as far back as SQL Server 2012.

Why Company Data Strategies Are Indelibly Linked with DEI

The Cloudera Enterprise Data Maturity Report is a global survey of 3,150 business and IT decision makers assessing organizations’ maturity when it comes to their current capabilities and handling of data and analytics.

Design With Analytics in Mind for Data Governance

Welcome to the final installment of a three-part series discussing the areas to take seriously when you want to drive business with analytics. In Part I of this series, I discussed how to prioritize data accessibility and how to address the challenges that come with it. Part II discussed where the disconnect is and addressed how organizations can bridge the gap.

Data Hub, Fabric or Mesh? Part 1 of 2

Over the course of my next two blog posts, I would like to share my thoughts around a debate raging in data architecture circles. The bone of contention? That the 21st century needs a new data management paradigm for modern analytics. First up, I’ll frame the argument and explain the two prominent approaches of data hub and data fabric. Then, I’ll cover data mesh and compare all three architectures. As always, I’d love to get your input, feedback, queries and comments!

Scaling NLP Pipelines at IHS Markit - MLOps Live #17

CDP on Azure: Harnessing the Power of Data Flow and Event Processing

Introducing Snowflake's Data Cloud Academy for Data Scientists

14 Reasons Sales And Marketing Alignment Is Crucial for Skyrocketing Company Growth

Introducing Continual Integration for dbt

Today we’re pleased to announce Continual Integration for dbt. We believe this is a radical simplification of the machine learning (ML) process for users of dbt and presents a well-defined path that bridges the gap between data analytics and data science. Read on to learn more about this integration and how you can get started.

Top 5 Reasons to Centralize Data & Become a Data-Driven Business

AI and ML: No Longer the Stuff of Science Fiction

Artificial Intelligence (AI) has revolutionized how various industries operate in recent years. But with growing demands, there’s a more nuanced need for enterprise-scale machine learning solutions and better data management systems. The 2021 Data Impact Awards aim to honor organizations who have shown exemplary work in this area.

Fighting Financial Crime and Earning Trust Using Data-Driven Compliance

One of the most challenging and complex elements of operating a financial services institution is compliance. Managing risk, security and privacy to earn customers’ trust has long been at the core of financial services, but this foundation has been shaken over recent years.

4 Tips for Recognizing and Avoiding Analytics Bias

One of the key cornerstones of the emerging field of ethical, explainable AI is recognizing and avoiding bias. As AI takes on a greater role in organizations with sometimes opaque calculations, there is an increased urgency in many businesses to get ahead of these challenges, and companies, such as IBM, Salesforce and Microsoft, have already added roles specifically with the aim of ensuring that ethics are a key consideration of AI.

A Finance Leader's Guide to Data Modernization

In today’s tech-forward companies, CFOs are tasked with managing and overseeing an increasingly expansive domain of systems and technologies to thrive. The rise of regulatory considerations, novel market drivers and a globally connected business environment is creating an entirely new set of pressures on both the structure of the department and on leadership.

Qlik Reporting Service - Brief Overview and Quick Demo - Part 1

Qlik Reporting Service - Build-out Demonstration and Bursting Example - Part 2

Qlik Reporting Service - Brief Overview with Detailed Demonstrations - Part 1 and Part 2

Australia's Department of Skills & Education and MinterEllison Discuss Our Digital Future

Experiments - Sentiment Analysis in Qlik Sense using Amazon Comprehend

Automating MLOps for Deep Learning

How Automated Reporting Saved 16 Agencies Time, Money, and Headaches

Where's the CI/CD in ML?

It’s easy to take continuous integration (CI) and continuous delivery/deployment (CD) for granted these days, but these have been transformational concepts that have drastically changed the face of software development over the past thirty years.

Getting Started with CI/CD and Continual

While CI/CD is synonymous with modern software development best practices, today’s machine learning (ML) practitioners still lack similar tools and workflows for operating the ML development lifecycle on a level on par with software engineers. For background, follow a brief history of transformational CI/CD concepts and how they’re missing from today’s ML development lifecycle.

Best ETL tools for Big Query

Stitch vs. Fivetran vs. Xplenty: A Comprehensive Comparison

New Snowflake Features Released In October And November 2021

Coming off of our Snowday event, we’ve unveiled a number of new product capabilities that expand what is possible in the Data Cloud. From helping businesses operate globally with improved replication efficiency, empowering developers with new functionality in Snowpark, and improving the security and governance of data through native object tagging, there is no shortage of exciting advancements coming to Snowflake.

Instagram Analytics Report: Tips, Tools and Best Practices for Building a Great Report

Anodot and Rivery Demo New Marketing Analytics Kit

Marketing teams routinely struggle with monitoring the performance and cost of their ad campaigns. Now, they have a solution that can be as easy as just a few clicks. We recently joined our partners at Rivery for a webinar demonstrating the new Anodot Marketing Analytics Monitoring Kit. The kit allows users to track marketing campaigns in real time and take the action needed to make the most of ad spend.

What Is Snowflake?

It's An Exciting Future in Music When We Have Clean Data

Hello, I’m Imogen Heap – musician and tech founder. Now, I know what you might be thinking. Why is a musician and composer talking to me about data? Well, for any of you that follow my work, you will know that I am a bit of a data nerd, and that’s why I loved speaking to Joe DosSantos on the latest Data Brilliant podcast. A lot of my music is powered by data. In fact, Joe and I discussed my love for how technology and data inspire me to be more creative. Want to know how?

The modern data stack is broken. It's time for Data stack as a service (DStaaS).

ThoughtSpot Sync, Powered by SeekWell, for HubSpot

How to Determine Markup Percentage for Small Businesses: What's Good, Bad and Average?

Business Analytics: The Future Is AI and It Is Here

Business analytics (BA) is the process of evaluating data in order to gauge business performance and to extract insights that may facilitate strategic planning. It aims to identify the factors that directly impact business performance, such as revenue, user engagement, and technical availability. BA takes data from all business levels, from product and marketing to operations and finance.

Best ETL Tools for Heroku

Driving Industry Transformation Through the Use of Data

As organizations look to improve business operations and outcomes, global industries are pushing for data-driven transformation. The 2021 Cloudera Data Impact Awards recognize those organizations that have pulled ahead of the pack with efforts to leverage the power of data to improve operations and better serve their customers. The finalists in the “Industry Transformation” category are MTN, National Payments Corporation of India (NPCI), Sberbank, and Bank Negara Indonesia (BNI).

Dashboard vs Report: Similarities and Differences

What Is Redshift?

The Best Time to Kickstart Your Data Strategy Was Yesterday, the Next Best Time Is Now

The Cloudera Enterprise Data Maturity Report is a global survey of 3,150 business and IT decision makers assessing organizations’ maturity when it comes to their current capabilities and handling of data and analytics.

Delivering High Performance for Cloudera Data Platform Operational Database (HBase) When Using S3

CDP Operational Database (COD) is a real-time auto-scaling operational database powered by Apache HBase and Apache Phoenix. It is one of the main Data Services that runs on Cloudera Data Platform (CDP) Public Cloud. You can access COD right from your CDP console. With COD, application developers can now leverage the power of HBase and Phoenix without the overheads related to deployment and management.

How to Set Measurable Customer Service Goals for Your Team

The Developer Playground now supports REST API

One of the best features in ThoughtSpot Everywhere is the Developer Playground. The Playground lets frontend Developers visually configure elements and generate JavaScript code to add into your web app. It is an amazing tool for testing and iterating configuration options before adding final elements such as Search, Liveboards, and visualizations into your web app. But what about a backend Developer who might be building solutions that utilize the Platform’s APIs?

IT Professionals Reveal Cloud Data Platform Highs and Lows of 2021

Customer 360: Explained

Yellowfin 9.7 release highlights

Simplifying Use of External APIs with Request/Response Translators

Snowpark has generated significant excitement and interest since it was announced. Snowpark is a developer framework that enables data engineers, data scientists, and data developers to code in their language of choice, and execute pipelines, machine learning (ML) workflows, and data applications faster and more securely. While many parts of Snowpark are in preview stages, External Functions entered General Availability earlier this year.

Introducing Qlik Application Automation Templates

Creating and Analyzing a Customer Service Report: Tips and Best Practices

Creating the Ultimate Analytics Stack with Moesif and Datadog

When looking at API analytics and monitoring platforms, many seem to be so similar that it’s hard to figure out the differences between them. We often hear this confusion from users and prospects. In a world with so many tools available, how do we figure out which ones are necessary and which are redundant? One of the most common questions we are asked revolves around how Moesif compares to Datadog and how they could work together.

ETL Tools Comparison

How Hybrid and Cloud-Based Architectures are Unlocking the Power of Data

It takes vision, purpose, and skill to unlock the power of data. It also takes the right strategy. For ExxonMobil, Ares Trading (Merck), and the University of California San Diego (UCSD), the right strategy is taking full advantage of the cloud. All three organizations have partnered with Cloudera, leveraging a hybrid or cloud-based architecture to improve the lives of the people who depend on their organizations’ data.

A Glimpse Into How AI Is Modernizing Data for the Financial Services Industry

Organizations in the financial services sector face a unique set of challenges as they consider how to wrangle and process the vast amount of data they collect. During our Financial Services Summit, I was lucky enough to speak to Brian Anthony, chief data officer for the Municipal Securities Rulemaking Board (MSRB), to learn how the MSRB is integrating technologies such as artificial intelligence (AI) and machine learning to modernize its data.

Analysts Can Now Use SQL to Build and Deploy ML Models with Snowflake and Amazon SageMaker Autopilot

Machine learning (ML) models have become key drivers in helping organizations reveal patterns and make predictions that drive value across the business. While extremely valuable, building and deploying these models remains in the hands of only a small subset of expert data scientists and engineers with deep programming and ML framework expertise.

How Snowflake Support Is Continuously Improving the Customer Experience

At Snowflake, putting the customer first is an essential company value. But “customer-centric” is more than just a buzzword: We use a data-driven, outside-in lens on everything we do, at all levels of the company. In particular, here’s how Snowflake Support is listening to you—our customers—and continuously improving the Snowflake customer experience at every touchpoint.

Qlik and UiPath - The Power of Active Intelligence and Enterprise Workflows for Action

The business world is pivoting rapidly all the time. Strategic shifts, reprioritization and being first all require being smart while moving fast. The value of agility has never stood out more, given the need to react to new realities in everything from public health and remote and in-office business policies and workflows to broader economic concerns like supply chain as we move into recovery and revitalization.

Moving from Passive BI to Active Intelligence

Active Intelligence Platform

How Augmented Analytics Enhances Decision-Making

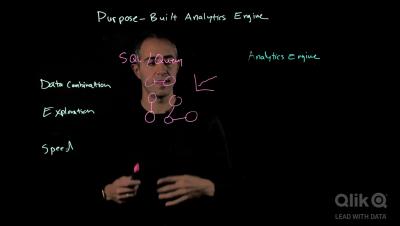

Purpose Built Analytics Engine

Modern Analytics

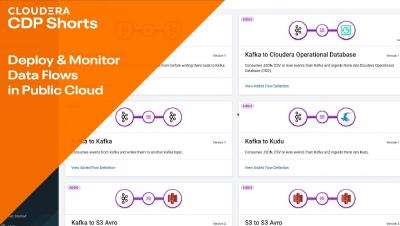

Rapidly Deploy & Monitor New Data Flows in the Public Cloud

What's a Good Profit Margin for a New Business?

How Did Your Paid Marketing Channels Perform on Black Friday?

Best ETL tools for Snowflake

In AI we trust? Why we Need to Talk About Ethics and Governance (part 2 of 2)

In part 1 of this blog post, we discussed the need to be mindful of data bias and the resulting consequences when certain parameters are skewed. Surely there are ways to comb through the data to keep the risks from spiralling out of control. We need to get to the root of the problem. In 2019, the Gradient Institute published a white paper outlining the practical challenges for Ethical AI.

Keboola vs Azure Data Factory: The 8 critical differences

Yellowfin 9.7 Release

5 Best Practices for Building a Successful Startup

What is a Marketing Research Report and How to Write It?

Taking a Closer Look at Snowflake Software

Create your Private Data Warehousing Environment Using Azure Kubernetes Service

For Cloudera, ensuring data security is critical because we have large customers in highly regulated industries like financial services and healthcare, where security is paramount. Also, for other industries like retail, telecom or public sector that deal with large amounts of customer data and operate multi-tenant environments, sometimes with end users who are outside of their company, securing all the data may be a very time-intensive process.

The Future of Data-Driven Product Innovation

Financial products are no longer characterized by the steps of filling out a form, waiting for a credit decision and, if successful, watching the monthly payments leaving your account.

Modernizing Your Data Analytics Architecture For the Cloud

There is an explosion of data from a myriad of sources and an insatiable demand to consume it. Traditional manual ETL methods are too brittle to keep up, leaving many a BI team struggling to provide meaningful business insights quickly.

Future of Data Meetup: Future of data and analytics in the Hybrid & Multi Cloud

Fall 21 Talend Data Fabric Demo from data ingestion to consumption

Introducing Data Quality Service - Fall '21 release

What is Snowflake? 8 Minute Demo

The Data Chief Live: Top trends in data, analytics and AI for 2022

New Integration: Connect Freshdesk with Databox

Easier administration and management of BigQuery with Resource Charts and Slot Estimator

As customers grow their analytical workloads and footprint on BigQuery, their monitoring and management requirements evolve: they want to be able to manage their environments at scale and take action in context. They also desire capacity management capabilities to optimize their BigQuery environments. With our BigQuery Administrator Hub capabilities, customers can now better manage BigQuery at scale.

API Automation: What You Need to Know

Take control of your data with Stitch Unlimited

Without data, your business cannot survive. You need it to understand your customers, to inform your product development, and to plan the future of your company. So why would you tolerate technology that artificially throttles access to your own data and stunts your company’s growth? It just doesn't make sense. That’s why Talend is bringing you Stitch Unlimited and Stitch Unlimited Plus.