March 2022

Save Your Business From Churn: 9 Churn Risk Factors to Identify

How to achieve adaptive height embedding with ThoughtSpot Everywhere

Embedding content in an iframe is a great option because of its security and performance features, but it can be tricky to get the right fit, especially when users interact with the embedded content or change the browser viewport. Some of the most common issues include an additional or missing scroll bar, or modal windows opening inside the iframe without appearing at the center of the current viewport.

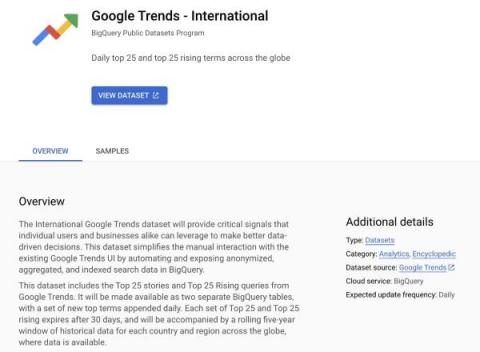

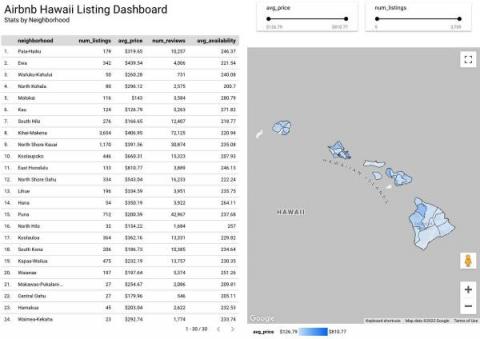

Enhance your analysis with new international Google Trends datasets in BigQuery

Sharing and exchanging data with other organizations is a critical element of any organization’s analytics strategy. In fact, BigQuery customers are already sharing data using our existing infrastructure, with over 4,500 customers swapping data across organizational boundaries. Creating seamless access to analytics workflows and insights has become that much easier with the introduction of Analytics Hub and surfacing datasets unique to Google.

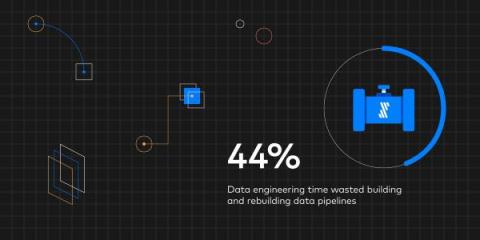

What wasting data engineering talent really costs you

Data engineers spend almost half their time maintaining data pipelines. The total average cost? $520,000 per year, according to new research.

The Usability of Dashboards (Part 1): Does Anyone Actually Use These Things?

Dashboards showing ever-increasing levels of information are more and more in demand, but perhaps less and less understood. In particular, application teams selling products are pushed by their customers to include “high-level overviews” and “real-time information” in their software. But do they use that information? How often? And what for? Sometimes a dashboard is a critical piece of software enabling near-instantaneous responses to extinction-level business catastrophes.

How to Develop an Actionable Customer Insight Report Your Whole Team Can Use

ThoughtSpot EMEA realizes 6 fold cloud growth in 12 months

A lot can happen in 18 months. In a startup, that’s even more true. Here at ThoughtSpot, where we’re known for innovation at a breakneck pace, that feels like a lifetime.

Do Data Companies Need Chief Ethics Officers?

Sometimes it takes a billion-dollar mistake to bring the murkier side of data ethics into sharp focus. Equifax found this out to their own cost in 2017 when they failed to protect the data of almost 150 million users globally. The catastrophic breach was bad enough on its own — but Equifax waited three months to go public with the news. As the public furore rose to a crescendo, the credit organization dragged its feet on disclosing exactly what kind of information had been leaked.

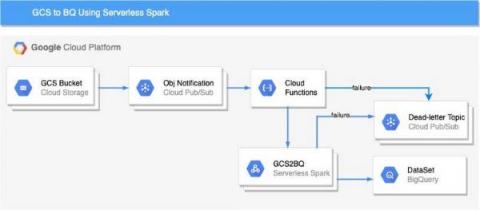

Ingesting Google Cloud Storage files to BigQuery using Cloud Functions and Serverless Spark

Apache Spark has become a popular platform as it can serve all of data engineering, data exploration, and machine learning use cases. However, Spark still requires the on-premises way of managing clusters and tuning infrastructure for each job.

What Is ELT?

Extract, load and transform, or ELT, is a data integration process that moves data directly from source to destination for analysts to transform as needed.
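To make the load-before-transform ordering concrete, here is a minimal sketch that uses Python's built-in `sqlite3` module as a stand-in destination. The table names (`raw_orders`, `orders_by_customer`) and the sample rows are hypothetical and chosen only for illustration; a real ELT setup would target a data warehouse rather than an in-memory database.

```python
import sqlite3

# Hypothetical source records, extracted as-is (amounts are still strings).
source_rows = [
    ("o-1", "alice", "19.99"),
    ("o-2", "bob", "5.00"),
    ("o-3", "alice", "30.01"),
]


def extract_and_load(conn, rows):
    """Load raw rows into the destination without transforming them."""
    conn.execute(
        "CREATE TABLE raw_orders (order_id TEXT, customer TEXT, amount TEXT)"
    )
    conn.executemany("INSERT INTO raw_orders VALUES (?, ?, ?)", rows)


def transform(conn):
    """Transform inside the destination, after loading, using SQL."""
    conn.execute(
        """
        CREATE TABLE orders_by_customer AS
        SELECT customer, ROUND(SUM(CAST(amount AS REAL)), 2) AS total
        FROM raw_orders
        GROUP BY customer
        """
    )
    return conn.execute(
        "SELECT customer, total FROM orders_by_customer ORDER BY customer"
    ).fetchall()


conn = sqlite3.connect(":memory:")
extract_and_load(conn, source_rows)
totals = transform(conn)
print(totals)
```

The raw table stays available after the transform runs, so an analyst could define a different transformation later without re-extracting from the source.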

How to Integrate E-Commerce With ERP

Driving Value and Innovation from SAP with Qlik and Google Cloud Cortex Framework

Hybrid Data Cloud Success for State and Local Governments

State and local governments generate and store enormous amounts of data essential to their ability to deliver citizen services. But how can they capitalize on all of their data to become engines of growth and innovation, empowering and enhancing their ability to provide services and better serve their communities?

Best Reporting Practices to Different Management Levels

Introducing Talend Data Catalog 8, our most powerful governance solution ever

We’re thrilled to announce Talend Data Catalog 8, our most powerful data governance solution yet. Talend Data Catalog 8 builds on our strong foundation of metadata management and data cataloging capabilities to deliver what businesses need most: healthy data. At Talend, healthy data is data that supports business objectives, and is often the missing link between business success and failure.

How to Optimize NetSuite for Faster, Accurate Financial Reporting

Born and built in the cloud as an enterprise resource planning (ERP) solution, Oracle NetSuite enables organizations to create a unified view of their business. While it generates reports on enterprise performance, the platform wasn’t built to optimize the reporting process. For accounting and finance teams especially, the challenge makes it difficult to create flexible, customized financial reports.

The Best Integration Platform for Your Business

Section Access in the Qlik Cloud Platform - now with support for Insight Advisor Chat

Get From Data to Decision Faster with Snowsight

4 minute Introduction to the Iguazio MLOps Platform

Move Fast - Without Breaking Things

SNCF: Getting the customer experience right

Drive Retail Profitability, Stability with Snowflake's Retail Data Cloud

In 2022, retailers are still dealing with the lasting impacts of the global pandemic, including a decreased workforce, supply chain disruptions, and surging inflation. At the same time, now is the chance for a “long overdue great retail reset” that could promote stability and profitability, according to a recent Deloitte report.

DataOps Observability Datasheet

Unravel’s AI-enabled full-stack observability for the modern data stack simplifies the way data teams monitor, observe, manage, troubleshoot, and optimize the performance and cost of large-scale data applications. Unravel also accelerates data migration to the cloud by providing a data-driven assessment plan to save time and cost while also enabling you to deploy effortlessly.

8 Great Product Integrations for Your E-Commerce Store

AstraZeneca: Automating data flows

As Visionary as 1-2-3: Our take on the 2022 Gartner Magic Quadrant

Interview With The CTO of Halla, Henry Michaelson

For the newest instalment in our series of interviews asking leading technology specialists about their achievements in their field, we’ve welcomed the CTO of Halla, Inc., Henry Michaelson.

7 Ways to Improve Your Performance With KPI Scorecards

Data governance builds business value

Moving from a compliance-driven to value-driven mindset when making data governance decisions.

ThoughtSpot named a Visionary in the 2022 Magic Quadrant for Analytics and BI Platforms

Today, Gartner has positioned ThoughtSpot as a Visionary in the 2022 Magic Quadrant for Analytics and Business Intelligence Platforms, a recognition that we are so excited to continue living up to for our customers.

Gain Business Value With Big Data AI Analytics

“Data-driven” is the latest buzzword in organizations in which data-based decision making is directly connected to business success. According to Gartner’s Hype Cycle, more than 77% of the C-suite now say data science is critical to their organization meeting strategic objectives. For top organizations looking to adopt a data-driven culture to stay competitive, what does that mean?

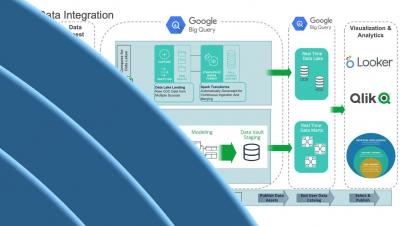

From Data to Value: Building a Scalable Data Platform with Google Cloud

At the DataOps Unleashed 2022 conference, Google Cloud’s Head of Product Management for Open Source Data Analytics Abhishek Kashyap discussed how businesses are using Google Cloud to build secure and scalable data platforms. This article summarizes key takeaways from his presentation, Building a Scalable Data Platform with Google Cloud. Data is the fuel that drives informed decision-making and digital transformation.

IPaaS: 3 Ways It Helps You Grow

Hevo Data Featured as a Leader in G2's Spring 2022 Report

The G2 Spring 2022 Grid Reports are out! We’re excited to announce that Hevo Data has been rated as a Leader in three categories: ETL Tools, Data Extraction, and Data Replication. Besides this, we have also received badges in numerous other categories, like Easiest To Use: Data Extraction and High Performer: E-commerce Data Integration, giving us a count of 17 badges in total and highlighting our strengths as a product. According to G2, 98% of our users have rated us 4 or 5 stars.

Hello World - Introducing Platform SDK

What's new in ThoughtSpot Analytics Cloud 8.1.0

How to Build a Modern DataOps Team

Simplify SAP analytics using Google Cloud Cortex Framework and HVR

HVR, a Fivetran enterprise solution, enables companies to tap into the power of Google Cloud Cortex Framework and turn SAP data into faster insights.

How to design your data stack for curiosity

"I have no particular talent, I am just passionately curious" - Albert Einstein

When was the last time you thought about optimizing your analytics toolset for curiosity? Yet what is the value of all the data and analytics in the world if not paired with human curiosity?

ChaosSearch Named in 2022 Gartner Market Guide for Analytics Query Accelerators

Sales Compensation in a Consumption Pricing World

Today’s organizations want stronger alignment between the cost of SaaS solutions and the value derived from these products. As a result, many software companies are looking at adopting consumption-based pricing models as an alternative to subscription models. With consumption-based models, customers only pay for what they use, and usage is tied directly to the value customers derive. Of course, consumption means software companies don’t experience revenue until customers use the solution.

Explaining AUR: A Universal Retail Language

[DEMO] Bring data experts to solve data quality issues

Future of Data Meetup (2022): Using Apache Iceberg for Multi-Function Analytics in the Cloud

How to Create a KPI Report in Google Sheets? Step-by-Step Guide

How to set up Experimental Marketing

5 Reasons to Use Apache Iceberg on Cloudera Data Platform (CDP)

Please join us on March 24 for the Future of Data meetup, where we do a deep dive into Iceberg with CDP.

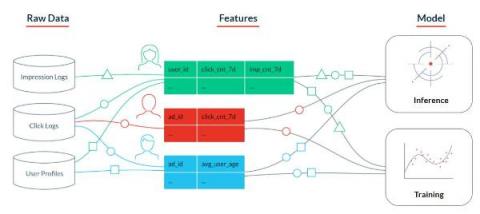

Building Production-Ready Machine Learning Features on Snowflake with Tecton's Feature Store

We are excited to announce the integration of Tecton’s enterprise feature store and Feast, the popular open source feature store, with Snowflake. The integration, available in preview to all Snowflake customers on AWS, will enable data teams to securely and reliably store, process, and manage the complete lifecycle of machine learning (ML) features for production in Snowflake. Tecton allows data teams to define features as code using Python and SQL.

Lower Privacy Risks and Transform Your Business with a Unified View of Data

It’s one thing to talk about orchestrating and automating your organization’s data operations. It is quite another to gain the confidence that comes with having a unified view of your data. This just-in-time view of the truth simultaneously reduces data privacy risk and enables your business to pursue data-driven goals.

What is the Shopify Tech Stack?

10 Common Dashboard Design Mistakes and How to Avoid Them

How to Prepare Your Workforce For the Future Data-Driven Enterprise

The digital revolution has truly transformed modern organizations, embedding data and analytics in every business process and customer interaction. Advances in technology enable smart supply chains with predictive analytics, automated logistics for same-day delivery, and AI advisors that reduce medical errors. As this continues, workers in all roles will need a new skill, data literacy, to collaborate with these systems and each other.

Cloud Integration 101

Businesses and organizations of all types have embraced cloud integration to transform data into business intelligence. The reason for this is simple: more and more business operations are happening in hybrid cloud, or even fully cloud-to-cloud, environments, and without proper tools to manage data in the cloud, data can become siloed, overlooked, or lost altogether.

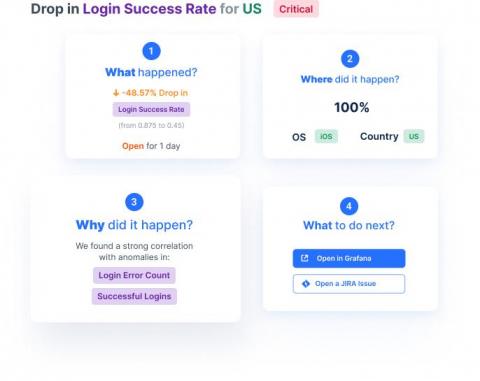

Customer Journey KPIs You Must Know

The KPIs that apply to each product are as different as products come. There are infinite variables that come into play when determining what exactly a KPI should be. Because these KPIs are centered around customer journeys, they are all user-based and purposely omit technical-based KPIs (such as crashes or errors). In a recent article in our Product Analytics Academy, we covered what makes a strategy a good one when understanding and choosing relevant metrics to form KPIs based on product analytics.

How to Accelerate Value from Merger and Acquisition Strategies with Cloudera Data Platform (CDP)

The Covid-19 pandemic has resulted in an unprecedented global economic landscape that is dominated by loose monetary policies, low borrowing costs and an influx of capital into the equity markets. Against that backdrop, Mergers and Acquisitions (M&A) activity has surged since 2021 as companies are trying to take advantage of the current environment and adapt to the new business realities shaped by the global pandemic.

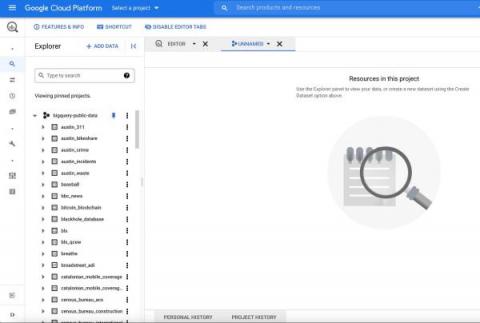

Using GeoJSON in BigQuery for geospatial analytics

The first step in most analytical workloads is to ingest the data that you need for your analysis into your data warehouse. For geospatial analysis involving point, line, or polygon data, ingesting data can be complex because geospatial data comes in myriad data formats. Two of the most popular geospatial formats are GeoJSON and GeoJSON-NL (newline-delimited geoJSON).
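As an illustration of how the two formats differ, the sketch below converts a GeoJSON FeatureCollection into newline-delimited GeoJSON, with one Feature per line, using only Python's standard library. The sample features and the function name `to_newline_delimited` are hypothetical, and the sketch makes no claim about how BigQuery itself ingests either format.

```python
import json

# Hypothetical sample data: a standard GeoJSON FeatureCollection.
feature_collection = {
    "type": "FeatureCollection",
    "features": [
        {
            "type": "Feature",
            "geometry": {"type": "Point", "coordinates": [-122.08, 37.42]},
            "properties": {"name": "site-a"},
        },
        {
            "type": "Feature",
            "geometry": {
                "type": "LineString",
                "coordinates": [[-122.08, 37.42], [-122.03, 37.33]],
            },
            "properties": {"name": "route-1"},
        },
    ],
}


def to_newline_delimited(collection):
    """Serialize each Feature as one compact JSON object per line."""
    if collection.get("type") != "FeatureCollection":
        raise ValueError("expected a GeoJSON FeatureCollection")
    return "\n".join(
        json.dumps(feature, separators=(",", ":"))
        for feature in collection["features"]
    )


ndjson = to_newline_delimited(feature_collection)
print(ndjson)
```

Because every line is a complete JSON object, a newline-delimited file can be read or split one record at a time, whereas a FeatureCollection has to be parsed as a single document.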

The Best E-Commerce Integrations

What is a data pipeline?

A data pipeline is a series of actions that combine data from multiple sources for analysis or visualization.

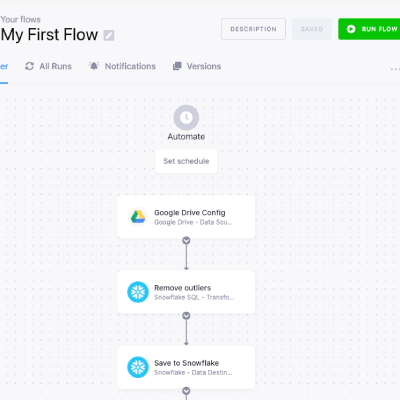

Pipelines from tasks

Overview of the Fall '21 release

Lead Velocity: What Makes It the Most Important Metric for SaaS Businesses?

From Bricks and Mortar to Taps and Swipes, Accelerated Disruption in Banking Is Set to Continue

In 2019, global venture capital investment in Fintech totaled at least $33.9 Billion. For years, the incumbent players had been warning the sector that when the next recession hit, or when fintech faced a “real” crisis, the sector would, at worst, collapse, and, at best, see a whole bevy of fintech players disappear.

Top 12 Most Challenging Operational Reports

Today’s business leaders face an uncertain economic landscape. In the aftermath of unprecedented business disruption in 2020, organizational decision-makers are turning their focus to new concerns. According to McKinsey research, supply chain disruption, inflation, and a growing labor shortage are now top concerns for the C-suite.

Operational Efficiency with Talend Stitch? Best. Gift. Ever.

It’s the ultimate Catch-22. As businesses grow, executives need to keep a close watch on operations — but that growth itself can blur visibility. Multiple teams, data sources, and silos of information can create challenges in determining who is doing what, where inefficiencies exist, and what improvements should be made.

One Line Away from your Data

Data Science tools, algorithms, and practices are rapidly evolving to solve business problems on an unprecedented scale. This makes data science one of the most exciting fields to be in. As exciting as it is, practitioners face their fair share of challenges. There are well-known barriers that slow down predictive modeling or application development. Finding the right data and getting access to it are two of the top pain points we hear from our customers.

The Meaning and Definition of IPaaS

8 Best Call Center Metrics to Measure Agent Productivity

Tribalism May Just Be the Largest Reason For Misinformation - But Understanding It Is Essential To Dismantling It

I’ve been blogging for about a year about the power of misinformation and our obligations as data professionals to combat it. In a March 2021 blog post, titled “The Power of Misinformation,” I outlined some of our biological instincts that make us susceptible to misinformation and how tricksters exploit them.

3 E-commerce SaaS Products Your Online Business Needs

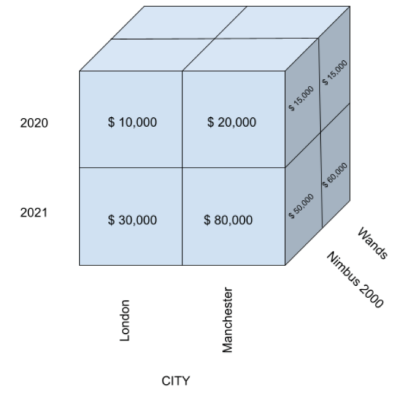

Top 5 Ways Bizview Enhances Wands for Oracle

Doing more of the work you want, rather than must, is always the dream. And it’s just plain good business. However, many financial professionals feel that they get bogged down trying to find and connect data, rather than focusing on strategic insights.

5 Reasons to Get Smart Why Bizview for Atlas

Time is Money, they say. And when you waste time on onerous menial tasks instead of improvements, you aren’t spending your time, or money, wisely. When it comes to planning and analysis, the role of financial professionals is to provide insights that help shape the direction of the entire organization. However, many financial professionals feel that they spend too much time trying to find and connect data, rather than focusing on strategic insights.

Get your data ready for ThoughtSpot in minutes

What is the Best ETL Tool for Big Data Analysis?

ML Workflows: What Can You Automate?

When businesses begin applying machine learning (ML) workflows to their use cases, it’s typically a manual and iterative process—each step in the ML workflow is executed until a suitably trained ML model is deployed to production.

The Great Resignation: Opportunity or Threat?

I don’t think anyone could dispute that the last two years have changed the workplace forever. Entire nations have been confined to their homes during months of lockdowns, and the way we conduct business and interact with each other has passed a point of no return.

Choosing an Analytical Cloud Data Platform [Webinar Recap]

Accelerate Agency Missions with Data in Motion

Data is the true currency of the digital age, and it plays an indispensable role in defining and accelerating the mission of Government agencies. Every level of government is awash in data (both structured and unstructured) that is perpetually in motion. It is constantly generated – and always growing in volume – by an ever-growing range of sources, from IoT sensors and other connected devices at the edge to web and social media to video and more.

Building data pipelines has become even easier with Keboola's Flow Builder

The 7 Best E-Commerce Integrations and APIs

4 Innovation-Boosting Features of Snowflake's New Healthcare & Life Sciences Data Cloud

A new study from McKinsey warns that profit pools in the healthcare industry are likely to be flat due to the ongoing effects of the COVID-19 pandemic. To combat this stagnancy, the study identifies a number of opportunities for innovation in the industry.

3 Reasons Developers Need Turn-Key API Analytics

By Savannah Whitman. When your platform runs on APIs, all of those APIs need to run perfectly. Quickly resolving issues in your API isn’t just helpful, it’s mandatory. Latency and error monitoring are only the beginning: a healthy server isn’t the same thing as a healthy product. Resolving error cases and API abuse is easiest with full visibility into your API, which is where API analytics come in.

How Observe Built An Observability Platform On Snowflake.

Continual is SOC 2 compliant

Continual is proud to announce that we are now SOC 2 Type 1 certified and compliant, with SOC 2 Type 2 in progress. This certification is a publicly visible milestone that demonstrates our core commitment to keeping your data secure. We expect to make additional announcements around our security certification efforts over the coming months. Beyond third party attestations, Continual is built from the ground up with data security and governance in mind.

DataOps for the Data-Driven

The road to the data-driven enterprise is not for the faint of heart. The continuous waves of data pounding into ever-complex hybrid environments only compound the ongoing challenges of management, governance, security, skills, and rising costs, to name a few. But Hitachi Vantara has developed a path forward that combines cloud-ready infrastructure, cloud consulting and managed services to optimize applications for resiliency and performance, and automated DataOps innovations.

Weaving a New Data Fabric into Lumada for Agile DataOps

Effective data management is the keystone holding together an organization’s digital transformation. When the data foundation is well-constructed, a company can enhance both its agility and its ability to monetize data and apply it to the pursuit of business objectives.

Hitachi Vantara Launches Lumada Industrial DataOps for IIoT Scalability

Hitachi Vantara today announced the new Lumada Industrial DataOps portfolio with core IIoT platform framework capabilities. With this release, we are making it easier for organizations to take advantage of real-time insights and outcomes that can make critical operations more predictable and manageable. One of the highlights of this release is the introduction of IIoT Core software, which includes digital twins, ML (machine learning) service, and user interface components.

How to Set Up and Use Funnel Reports in Google Analytics: A Step-by-Step Guide

How to do Hyperparameter Optimization better

A Simple Hyperparameter Optimization Guide.

Magento 2 vs. Shopify Plus: How to Choose the Right Platform

5 Success Stories That Show the Value of Enterprise Public Cloud

Using Snowflake to Analyze Unstructured Data

How to Use and Interpret Google Analytics Time of Day Reports: Tips and Best Practices

4 pitfalls in your data strategy (and how to avoid them)

If you’re a data leader at an early-stage or high-growth company, you’re in a unique position to promote data appreciation. Don’t waste your golden window of opportunity. Take this moment to institute best practices, promote good habits, and build the foundation for a data-driven culture. There’s no universal data strategy that would work for all organizations — wouldn’t that be great? — but there are pitfalls that all organizations should watch out for.

What went wrong with customer 360

Three decades into the data revolution, my fellow technologists and I find ourselves asking an existential — and rather distressing — question: What happened to the promise of customer 360? The ability to get more customer data was supposed to fundamentally change the relationship between customers and brands. Companies were going to be able to offer targeted, meaningful engagements that would multiply average deal size and slash time to close.

PromoFarma Achieves Significant Time And Cost Savings With Snowflake

Barcelona based PromoFarma by DocMorris is an e-commerce marketplace which groups together the health, beauty and personal products catalogues from more than 1,000 pharmacies and other sellers into one single website.

How to Get Started With an E-Commerce Integration Platform

Import Google Analytics 4 Data into Snowflake and Translate into SQL

Hello World - Introduction to Qlik CLI

Pipelines from code

7 Effective Cold Prospecting Strategies For SaaS Sales

Operational Reporting: Why the Struggle Is Real

Ask any craftsman the secret to excellence, and you will likely hear that, in addition to skill, the craftsman needs the right tools for the project. The same can be said for finance teams as they work on operational reporting. The most skilled finance professionals still need the right tools to get the job done well.

Uncovering the future of the modern data stack

A modern data stack opens up new opportunities and frees people to focus on what they do best — no matter the team they work in.

New Snowflake Features Released in February 2022

February brought a number of exciting enhancements, especially for customers building and running data pipelines in Snowflake, including support for Snowpark stored procedures and flexible task execution. Additionally, customers get improved impact analysis through support for object dependencies, and we continue to expand Snowflake’s global reach with the regional availability of UAE North (Dubai) on Azure. Read on for more!

The Ultimate Guide to E-commerce Integrations

Healthcare & Life Sciences Data Cloud

Which Modern Data Architecture Should You Choose?

New Features in Cloudera Streams Messaging for CDP Public Cloud 7.2.14

With the launch of CDP Public Cloud 7.2.14, Cloudera Streams Messaging for Data Hub deployments has gotten some powerful new features! In this release, the Streams Messaging templates in Data Hub will come with Apache Kafka 2.8 and Cruise Control 2.5 providing new core features and fixes. KConnect has been added and gains additional capabilities with new connectors and Stateless Apache NiFi capabilities which can run NiFi Flows as connectors.

7 Ecommerce Personalization Examples to Help You Come Up with Your Own

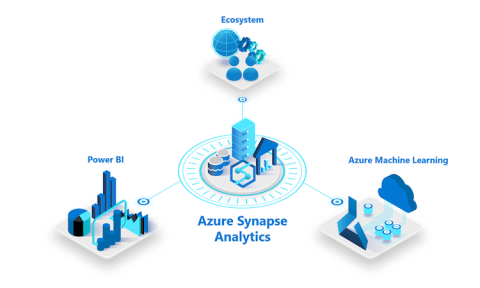

The Ultimate Guide to Azure Synapse Analytics

Should You Choose Integrate.io or Glew.io?

Experiments - Building a Predictive analysis dashboard using Qlik Sense & Qlik AutoML

7 Ways Marketers Can Use Multi-Channel Funnel Reports in Google Analytics

What is HR analytics and why do you need it?

HR analytics enables companies to use people data to drive success in hiring, retention, total rewards and employee satisfaction.

OKR 101: An introduction to data-driven planning objectives and key results (OKRs)

OKRs have taken corporate planning by storm — and for good reason. As a system for organization-wide goal-setting, OKRs make executive KPIs tangible and actionable, not just for the leaders, but for everyone in the company. They have become increasingly common in all types of businesses because they are simple and effective. For the company’s data champion, they also offer an unparalleled opportunity to get everyone speaking a common language of metrics.

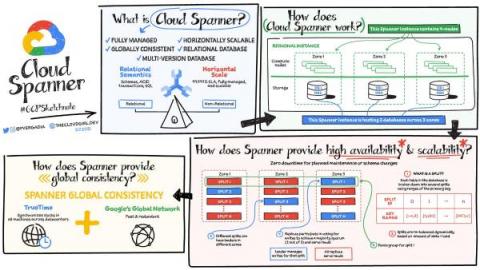

How Spanner and BigQuery work together to handle transactional and analytical workloads

As businesses scale to meet the demands of their customers, so does their need for efficient products to collect, manage and analyze data to meet their business goals. Whether you are building a multi-player game or a global e-commerce platform, it's critical to ensure that data can be stored and queried at scale with strong consistency and then processed for analysis to deliver real-time insights.

ArcGIS and BigQuery - a match made for geodata

Geographical data is one of the critical datasets for data-driven organizations to make informed business decisions. As data grows faster than ever before, it’s becoming more challenging to manage and analyze mammoth datasets using traditional databases. This is true for geographical data as well, since it requires significant computational power to process. Esri has been one of the leading companies in Geospatial software development since 1969.

Manage Your Amazon Redshift Data With Integrate.io

What Is Data Mining?

Orchestrating ML Pipelines at Scale with Kubeflow

Still waiting for ML training to be over? Tired of running experiments manually? Not sure how to reproduce results? Wasting too much of your time on devops and data wrangling? Spending lots of time tinkering around with data science is okay if you’re a hobbyist, but data science models are meant to be incorporated into real business applications. Businesses won’t invest in data science if they don’t see a positive ROI.

How Flywheel Software Is Helping Organizations Activate Customer Data On Snowflake

What Are Feature Stores and Why Are They Critical for Scaling Data Science?

A feature store provides a single pane of glass for sharing all available features across the organization. When a data scientist starts a new project, he or she can go to this catalog and easily find the features they are looking for. But a feature store is not only a data layer; it is also a data transformation service enabling users to manipulate raw data and store it as features ready to be used by any machine learning model.
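The two roles described above, a shared catalog and a transformation service, can be sketched with a toy in-memory class. Everything here is hypothetical (the `FeatureStore` class, its method names, and the sample features); it is not the API of any real feature store product, only an illustration of the idea.

```python
from typing import Callable, Dict, List


class FeatureStore:
    """Toy in-memory feature store (hypothetical API, for illustration only)."""

    def __init__(self) -> None:
        self._transforms: Dict[str, Callable[[dict], float]] = {}
        self._values: Dict[str, Dict[str, float]] = {}

    def register(self, name: str, transform: Callable[[dict], float]) -> None:
        """Add a named feature definition to the shared catalog."""
        self._transforms[name] = transform

    def catalog(self) -> List[str]:
        """List every registered feature so others can discover it."""
        return sorted(self._transforms)

    def materialize(self, entity_id: str, raw: dict) -> None:
        """Run every registered transformation on raw data and store results."""
        self._values[entity_id] = {
            name: fn(raw) for name, fn in self._transforms.items()
        }

    def get_features(self, entity_id: str, names: List[str]) -> Dict[str, float]:
        """Fetch stored feature values for one entity, ready for a model."""
        stored = self._values[entity_id]
        return {name: stored[name] for name in names}


store = FeatureStore()
store.register("order_count", lambda raw: float(len(raw["orders"])))
store.register(
    "avg_order_value", lambda raw: sum(raw["orders"]) / len(raw["orders"])
)
store.materialize("customer-1", {"orders": [20.0, 40.0]})
features = store.get_features("customer-1", ["avg_order_value", "order_count"])
print(store.catalog(), features)
```

A second project could call `catalog()` to find `avg_order_value` and reuse it instead of recomputing it from raw data, which is the sharing benefit the paragraph describes.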

How to Set Up eCommerce Tracking in Google Analytics: 7 Tips from Experts

Why your customer success strategy needs a data strategy

Three best practices to anticipate your customers’ needs at every step of the journey and boost customer success at your organization.

The Business Benefits of AI-Powered Analytics

Everyone from managers to C-suite executives wants information from analytics in order to make better decisions. Business analytics gives leaders the tools to transform a wealth of customer, operational, and product data into valuable insights that lead to agile decision-making and financial success. Traditional business intelligence and KPI dashboards have been popular solutions but they have their limitations.

The New Cloud Migration Playbook: Strategies for Data Cloud Migration and Management

Experts from Microsoft, WANdisco, and Unravel Data recently outlined a step-by-step playbook—utilizing a data-driven approach—for migrating and managing data applications in the cloud.

7 E-Commerce Metrics Every Business Should Track

Introducing SansShell: A Non-Interactive Local Host Agent

Snowflake is proud to announce the open source release of SansShell, a non-interactive local host management agent. Its purpose is to enable strong authentication, authorization, and auditing of the management of servers of any type. The source code is available on GitHub.

4 min Introduction to the Iguazio MLOps Platform

Qlik Application Automation

Blend + Fivetran: How your customer success strategy can benefit from a data strategy

Rush University Medical Center | How real-time data helps the fight against COVID-19

Globe Telecom leverages data governance tools from Talend for heightened marketing performance

Globe Telecom, one of the largest providers of digital services in the Philippines, was operating in a saturated market with limited opportunity to expand. Any strategy for growth depended on nurturing customer relationships and fostering lifelong value with each customer. For Globe Telecom, getting customer data was never the problem — telecom companies produce terabytes of data every day.

Hyperparameter Optimization (HPO) is simpler than you think.

For a quick refresher on what hyperparameter optimization is and what frameworks and strategies are supported by ClearML out of the box, check out our previous blogpost!

Google Analytics Benchmarking Reports: Use, Examples & Best Practices

Learn how to stream JSON data into BigQuery using the new BigQuery Storage Write API

The Google BigQuery Write API offers high-performance batching and streaming in one unified API. The previous post in this series introduced the BigQuery Write API. In this post, we'll show how to stream JSON data to BigQuery by using the Java client library.

Is Integrate.io or Daasity the Better Platform?

NN Investment Partners' Papyrus Team Delivers End-to-End Data Visualization with Snowflake's Data Cloud

Marcel Naumann, Senior Engineer Investment at NN Investment Partners, sees a future where fluid, easily accessible data and insights help portfolio managers achieve returns while investing in a responsible manner. We spoke with Naumann to learn how he’s making this future a reality with the Snowflake Data Cloud. NN Investment Partners (NN IP) manages assets for investors across 37 nations.

How to Track Website Sessions by Email Source | Data Snack #13 | Hubspot Marketing & Databox

Hyperparameter Optimization

Marketing Audit Report: What Are They and How to Make One?

From cost to profit center: How to transform customer 360 investments

To IT leaders, it’s obvious that data strategy deserves a special place at the table for any discussion about strategic business initiatives. However, for CMOs and CROs like myself, who must justify and weigh expenditures against bottom line impact, investing in customer data typically looks like a red-ink proposition.

Building Iterable's Data Mesh Using Snowflake: Three Components of an Innovative Data Management Strategy

Big data has been revolutionizing the digital marketing landscape: organizations are gathering data from numerous sources; data streams are being collected at unparalleled speeds; and businesses are dealing with a variety of data structures, from emails to user behaviors to financial transactions.

AstraZeneca is moving to the cloud (AWS) with Talend

The Lost Art of Questioning

Asking the right questions of your data and knowing what you are looking to find is a critical component for gaining insights from your data that drive specific actions. Data is not black and white; there is so much you can do with it. Accordingly, two people with the same data can come up with very different insights. This is because so much depends on the specific problem to be solved and the approach you take to solve it.

Understanding OLAP Cubes - A guide for the perplexed

Integration Platforms for E-Commerce Businesses

Cookies are out, conversions APIs are in

How to improve ROI on social media ads as regulations and technology change.

Data Warehouse Reporting: Definition, Tips, Best Practices, and Reporting Tools

Forrester study reveals how much you really win by using ThoughtSpot

Tell us if this analytics scenario sounds familiar: your organization employs an analyst team that uses old technology, desktop data visualization tools, or homegrown reporting systems to manually build out static dashboards and multiple weekly or monthly reports. As a result, the data analysts have to manually generate and update business reports regularly, and teams cannot keep up. In turn, the rest of the organization is unable to make timely business decisions.

Reliable data replication in the face of schema drift

Learn methods for ensuring that data replication remains robust even as schemas change.
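
The teaser does not say which methods the article covers. As one illustrative possibility, a replication job can detect drift by comparing the source schema with the destination schema before loading. The sketch below assumes schemas are represented as simple column-name-to-type dictionaries; the function name, column names, and type labels are hypothetical.

```python
def diff_schemas(source, destination):
    """Compare two {column: type} mappings and report how they differ."""
    # Columns present in the source but not yet in the destination.
    added = {col: typ for col, typ in source.items() if col not in destination}
    # Columns the destination still has but the source has dropped.
    removed = {col: typ for col, typ in destination.items() if col not in source}
    # Columns present in both whose types differ: (destination type, source type).
    changed = {
        col: (destination[col], source[col])
        for col in source
        if col in destination and source[col] != destination[col]
    }
    return {"added": added, "removed": removed, "changed": changed}


# Hypothetical example: the source gained a column, dropped one, and widened a type.
source_schema = {"id": "BIGINT", "email": "STRING", "signup_ts": "TIMESTAMP"}
destination_schema = {"id": "INT", "email": "STRING", "legacy_flag": "BOOLEAN"}

drift = diff_schemas(source_schema, destination_schema)
print(drift)
```

A pipeline could use such a report to add new columns automatically, keep removed columns rather than dropping data, and flag type changes for review.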

Feature Engineering for Modern Data Teams

Feature engineering is a crucial part of any ML workflow. At Continual, we believe that it is actually the most impactful part of the ML process and the one that should have the most human intervention applied to it. However, in ML literature, the term is often overloaded among several different topics, and we wanted to provide a bit of guidance for users of Continual in navigating this concept.

Excellence in Project Intelligence: Increase efficiency and boost ROI with Insightsoftware and Deltek

Our valued partner, Deltek, is a leading provider of software and information solutions for project-based businesses. Headquartered in Herndon, Virginia, the company leverages its expertise to maximize efficiency and revenue for clients through project intelligence, management, and collaboration.

FinTech Companies Thrive and Innovate with ChaosSearch

5 Ways to Increase E-Commerce Sales

Why Data Governance Is Crucial for All Enterprise-Level Businesses

Whether the enterprise uses dozens or hundreds of data sources for multi-function analytics, all organizations can run into data governance issues. Bad data governance practices lead to data breaches, lawsuits, and regulatory fines — and no enterprise is immune.

Three Venture Capitalists Weigh In on the State of DataOps 2022

The keynote presentation at DataOps Unleashed 2022 featured a roundtable panel discussion on the State of DataOps and the Modern Data Stack. Moderated by Unravel Data Co-Founder and CEO Kunal Agarwal, this session features insights from three investors who have a unique vantage point on what’s changing and emerging in the modern data world, the effects of these changes, and the opportunities being created.

DBS Bank Goes "Beyond Observability" for DataOps

At the DataOps Unleashed 2022 conference, Luis Carlos Cruz Huertas, Head of Technology Infrastructure & Automation at DBS Bank, discussed how the bank has developed a framework whereby they translate millions of telemetry data points into actionable recommendations and even self-healing capabilities.

4 Types and 20 Examples of Survey Questions to Ask Your Customers and Improve Your App

You have dedicated tons of man-hours to building your product and strengthening your service. You are trying to ensure that your application is useful but, above all, that it succeeds at making users come back for more. But then, *gasp*, they don’t! Why? What went wrong? How can you get them to come back?

From Rookie to Smart Cookie: How to Be a Better Marketer in 2022

Ultimate Guide to ESG Reporting

In recent years, investors have placed increased emphasis on a range of environmental, social, and governance (ESG) issues, making ESG reporting more important. These issues are also garnering more attention from legislators and regulators around the world. As a result, companies face more demands to report on their activities and practices and on how these affect environmental and social sustainability.

8 Tips to Build a Strong BigCommerce Tech Stack

Memory Optimizations for Analytic Queries in Cloudera Data Warehouse

Apache Impala is used today by over 1,000 customers to power their analytics in on-premises as well as cloud-based deployments. Large user communities of analysts and developers benefit from Impala’s fast query execution, helping them get their work done more effectively. For these users, performance and concurrency are always top of mind.

Manage the Demand of Stress Testing in Financial Services

Risk management is a highly dynamic discipline these days. Stress testing is a particular area that has become even more important throughout the pandemic. Stress tests conducted by authorities such as the Federal Reserve Bank in the US are designed to keenly monitor the financial stability of the banking sector, especially during economic downturns such as those brought on by the pandemic.

Democratizing Data Apps - Snowflake to Acquire Streamlit

Over the past few years, we’ve seen incredible growth in the number of data apps being built on Snowflake, including large customer-facing apps from companies such as BlackRock, Instacart, Lacework, and others. We’re proud of the fact that our platform helps developers bring these applications to life faster, scale better, and provide more powerful insights to their users, whether they be employees, partners, or customers.

Making Sense of Qlik APIs - Nebula TypeScript

Snowflake Announces Intent To Acquire Streamlit

Founder's Guide to Setting Up a Data Analytics Foundation

13 Marketing Habits of Successful Small Business Owners

Producing Protobuf data to Kafka

Until recently, teams were building only a small handful of Kafka streaming applications. These were usually associated with Big Data workloads (analytics, data science, etc.), and data would typically be serialized as Avro or JSON. Now a wider set of engineering teams are building entire software products with microservices decoupled through Kafka. Many teams have adopted Google Protobuf as their serialization format, partly due to its use in gRPC.

What is AUR and How Can It Help You Power Your E-Commerce Business?

What is next for Yellowfin?

Unravel Data Unveils Most Advanced AI-Powered DataOps

New edition enables data professionals to gain end-to-end visibility and more effectively optimize the cost and performance of the modern data stack.

Announcing the Unravel Winter Release

Today, we’re excited to announce the Unravel Winter Release! This release introduces major enhancements and improvements across the platform, including comprehensive cost management for Databricks, support for Delta Lake on Databricks, data observability for Google BigQuery, and an interactive pre-check before installation and upgrade.