October 2022

Leverage or liability - transforming data chaos into data excellence with Waterstone Mortgage

Snowflake's Retail Data Cloud

The Power of Unlocking and Unifying Data

Welcome to the decade of data

To quote Hemingway: change happens gradually, then suddenly. We see this in the world around us. Think back to 2019. There’s no denying how much the pandemic reshaped our professional and personal lives, with technology driving this change at massive scale. Yet these changes, despite their ubiquity, are really the culmination of trends like cloud and automation that were well underway.

Protect Your Assets and Your Reputation in the Cloud

A recent headline in Wired magazine read “Uber Hack’s Devastation Is Just Starting to Reveal Itself.” No corporation wants that headline or the reputational damage and financial loss it may cause. In the case of Uber, it was a relatively simple attack using an approach called multi-factor authentication (MFA) fatigue, in which an attacker takes advantage of authentication systems that require account owners to approve a login.

6 Best Data Extraction Tools for 2022 (Pros, Cons, Best for)

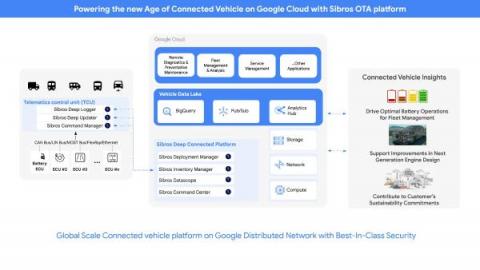

Unlocking the power of connected vehicle data and advanced analytics with BigQuery

Sibros’ Connected Vehicle Platform on Google Cloud delivers OTA data updates, collection, and commands with the flexibility and scale of the cloud

Data Science Maturity and Understanding Data Architecture/Warehousing

Transformational triumph: eBay's data fabric modernization

5 Magic Fixes for the Most Common CSV File reader Problems | ThoughtSpot

I’ve encountered a thousand different problems with spreadsheets, data importing, and flat files over the last 20 years. While there are new tools that help make the most of this data, it's not always simple. I’ve distilled this list down to the most common issues among all the databases I’ve worked with. I’m giving you my favorite magic fixes here. (Well, okay, they aren’t really “magic” but some of them took me a long time to figure out.)

How to use Google Sheets for data analysis with ThoughtSpot

Businesses have been scaling rapidly in the cloud, driven by the pandemic and lured by the promise of agility and flexibility. But here’s a dirty little secret anyone who works in data knows. Despite the value of the cloud, tons of data hasn’t made it there. So, where is it? Spreadsheets. Still the stalwart workhorse, hero, and bane of the business world. We all love how Google revolutionized this world by bringing spreadsheets to the cloud.

Using Apache Solr REST API in CDP Public Cloud

The Apache Solr cluster is available in CDP Public Cloud, using the “Data exploration and analytics” data hub template. In this article we will investigate how to connect to the Solr REST API running in the Public Cloud, and highlight the performance impact of session cookie configurations when Apache Knox Gateway is used to proxy the traffic to Solr servers. Information in this blog post can be useful for engineers developing Apache Solr client applications.

Accelerate your data to AI journey with new features in BigQuery ML

BigQuery ML lowers the data-to-AI barrier by making it easy to manage the end-to-end lifecycle, from exploration to operationalizing ML models, using SQL.

7 Best Data Transformation Tools in 2022 (Pros, Cons, Best for)

5 Best ETL Tools for Snowflake in 2022 (Pros, Cons)

From Data Engineering To Data Science To Producing Results For Today's Top Brands.

Future of Data Meetup: Enrich Your Data Inline with Apache NiFi

Technology Spotlight: Applied ML Prototypes

Getting started with ThoughtSpot for Sheets

What's new in ThoughtSpot Analytics Cloud 8.8.0.cl

Demo: Unravel Data - Keep Cloud Data Budgets on Track (Automatically)

How to solve four SQL data modeling issues

SQL is the universal language of data modeling. While it is not what everyone uses, it is what most analytics engineers use. SQL is found all across the most popular modern data stack tools: ThoughtSpot’s SearchIQ query engine translates natural language queries into complex SQL commands on the fly, and dbt built the entire premise of its tool around SQL and Jinja. And within your Snowflake data platform, it’s used to query all your tables.

Accelerating Projects in Machine Learning with Applied ML Prototypes

It’s no secret that advancements like AI and machine learning (ML) can have a major impact on business operations. In Cloudera’s recent report Limitless: The Positive Power of AI, we found that 87% of business decision makers are achieving success through existing ML programs. Among the top benefits of ML, 59% of decision makers cite time savings, 54% cite cost savings, and 42% believe ML enables employees to focus on innovation as opposed to manual tasks.

Why normalization is critical

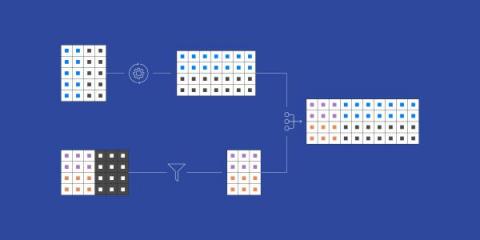

The key to Extract-Load then Transform (ELT) is that the data is landed in a normalized schema. Why? Correctness, flexibility and understandability.

Does Your Company Need a Data Observability Framework?

It is Time to Rebundle the Modern Data Stack

Power Up Your Data Operations with Templates & SpotApps

Episode 1 | Data Pipelines | Data Journey | 7 Challenges of Big Data Analytics

Extracting Maximum Value From All Of Your Company's Data

How to Broadcast a Report in Yellowfin

How to Easily Deploy Your Hugging Face Models to Production - MLOps Live #20- With Hugging Face

10 Keys to a Secure Cloud Data Lakehouse

Enabling data and analytics in the cloud allows you to have infinite scale and unlimited possibilities to gain faster insights and make better decisions with data. The data lakehouse is gaining in popularity because it enables a single platform for all your enterprise data with the flexibility to run any analytic and machine learning (ML) use case. Cloud data lakehouses provide significant scaling, agility, and cost advantages compared to cloud data lakes and cloud data warehouses.

Supercharge Yellowfin with 100+ Interactive Charts from FusionCharts

Successful Data Projects Start with Understanding Business Problems

Top Data Cleansing Tools for 2022

There is no question about the usefulness of big data these days. However, if you want the best data, you need it to be as accurate as possible. That means that your data has to be up-to-date, correct, and clean. Using one of these top data cleansing tools can help you be sure of this.

Reskilling Against the Risk of Automation

Demand for both entry-level and highly skilled tech talent is at an all-time high, and companies across industries and geographies are struggling to find qualified employees. And, with 1.1 billion jobs liable to be radically transformed by technology in the next decade, a “reskilling revolution” is reaching a critical mass.

Consolidate Your Data on AlloyDB With Integrate.io in Minutes

BigQuery's performance and scale means that everyone gets to play

Today, we’re hearing from telematics solutions company Geotab about how Google BigQuery enables them to democratize data across their entire organization and reduce the complexity of their data pipelines.

Taming the Tech Stack: Leverage Your Existing Tech Stack Securely With an Analytics Layer

Analytics and data visualizations have the power to elevate a software product, making it a powerful tool that helps each user fulfill their mission more effectively. To stand apart from the competition, today’s software applications need to deliver a lot more than just transaction processing. They must also provide insights that help drive better decisions, alert users to matters that require their attention, and deliver up-to-the-minute information about the things that matter most.

Three benefits of the modern marketing data stack

Organizations are improving the quality of their marketing analytics at less cost, which is translating into more overall marketing efficiency – all by adopting the modern data stack.

Unifying Data, Optimizing Campaigns In Real Time, & Taking Marketers To New Heights.

The Case for Embedded Analytics: How to Invest and Implement

In the past, most software applications were all about “data processing.” In the parlance of old-school management information systems, that meant an almost exclusive focus on keeping accurate transactional records alongside any master data necessary to complete that mission. Transaction processing is important, of course, but in today’s world, applications are expected to deliver a lot more than that.

Transformation for Analysis of Unintegrated Data-A Software Tautology

How Keboola benefits from using Keboola Connection - There's no party like 3rd party

Contributing to ClearML: How to Get Started with Open Source Contributions!

dbt on Cloudera Data Platform

insightsoftware Acquires Cubeware, Expanding Global Footprint in DACH Region

Cybersecurity: A Big Data Problem

Information technology has been at the heart of governments around the world, enabling them to deliver vital citizen services, such as healthcare, transportation, employment, and national security. All of these functions rest on technology and share a valuable commodity: data. Data is produced and consumed in ever-increasing amounts and therefore must be protected. After all, we believe everything that we see on our computer screens to be true, don’t we?

Planetly: Scaling companies' carbon management with data

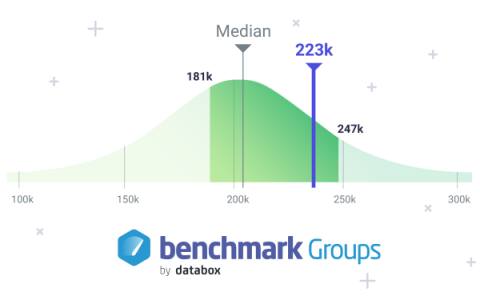

Benchmark Your Company's Performance with Databox Benchmark Groups

Google Analytics 4 Metrics Tutorial: Everything You Need to Know Before You Transition to the New Platform

Using RevOps to Drive Business Value in a Complex Economic Environment

Today's increasingly complex and unstable world, together with a continued focus from senior leaders on improving growth, use of technology and addressing workforce issues, means that many savvy organizations are looking towards Revenue Operations

Build data apps with Streamlit + ThoughtSpot APIs

I’ve been following the Streamlit framework for a while, since Snowflake announced that they would acquire it to enable data engineers to quickly spin up data apps. I decided to play around with it and see how we could leverage the speed of creating an app along with the benefits that ThoughtSpot provides, especially around the ability to use NLP for search terms. Streamlit is built in Python.

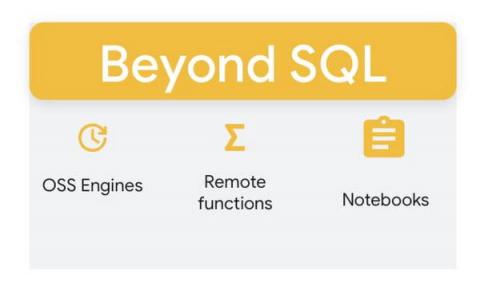

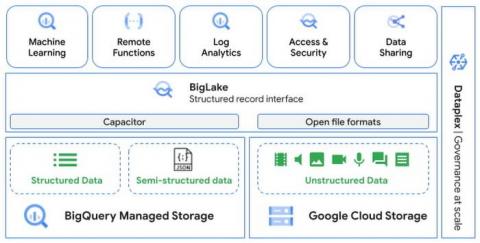

Build limitless workloads on BigQuery: New features beyond SQL

Our mission at Google Cloud is to help our customers fuel data driven transformations. As a step towards this, BigQuery is removing its limit as a SQL-only interface and providing new developer extensions for workloads that require programming beyond SQL. These flexible programming extensions are all offered without the limitations of running virtual servers.

Unlocking the value of unstructured data at scale using BigQuery ML and object tables

Most commonly, data teams have worked with structured data. Unstructured data, which includes images, documents, and videos, will account for up to 80 percent of data by 2025. However, organizations currently use only a small percentage of this data to derive useful insights. One of the main ways to extract value from unstructured data is by applying ML to the data.

Integrating Observability into Your Security Data Lake Workflows

Bringing Your Cloud Data Bill Under Control

Coherent Automates The Capture Of Spreadsheet Logic

Data Journey | 7 Challenges of Big Data Analytics | Episode 0

Coalesce 2022 Live

Where data strategies go wrong: Tales from the front lines

Public or On-Prem? Telco giants are optimizing the network with the Hybrid Cloud

The telecommunications industry continues to develop hybrid data architectures to support data workload virtualization and cloud migration. However, while the promise of the cloud remains essential—not just for data workloads but also for network virtualisation and B2B offerings—the sheer volume and scale of data in the industry require careful management of the “journey to the cloud.”

Using Kafka Connect Securely in the Cloudera Data Platform

In this post I will demonstrate how Kafka Connect is integrated in the Cloudera Data Platform (CDP), allowing users to manage and monitor their connectors in Streams Messaging Manager while also touching on security features such as role-based access control and sensitive information handling. If you are a developer moving data in or out of Kafka, an administrator, or a security expert this post is for you. But before I introduce the nitty-gritty first let’s start with the basics.

Diving Deep Into a Data Lake

Fortune favors the prepared, Talend CEO keynote at Talend Connect '22

Ep 60: The Modern Milkman's CSO, John Hughes on Using Data to Save Our Oceans from Plastic

Breaking down marketing data silos with BigQuery

White Label Analytics: What It Is, Why It Matters & 5 Key Benefits

A key consideration when buying an embedded analytics solution is not only whether it supports embedding of charts and reports, but also whether it can integrate analytics in a way that is indistinguishable from the experience of your application. Learn what white-label BI is.

Cloudera Uses CDP to Reduce IT Cloud Spend by $12 Million

Like all of our customers, Cloudera depends on the Cloudera Data Platform (CDP) to manage our day-to-day analytics and operational insights. Many aspects of our business live within this modern data architecture, providing all Clouderans the ability to ask, and answer, important questions for the business. Clouderans continuously push for improvements in the system, with the goal of driving up confidence in the data.

How to get more from your dbt models and metrics with ThoughtSpot

If you haven’t heard of dbt, you’re missing out on one of the hottest technologies in data. dbt has been adopted by more than 15,000 organizations looking for a SQL-friendly workflow to transform the data inside their cloud data platform.

Fivetran is now a dbt Metrics Ready Partner

Integration between Fivetran and dbt Semantic Layer lets you enhance data models with rich metric definitions and derive value from data quicker and easier.

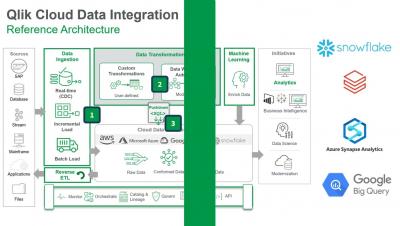

SaaS in 60 - Qlik Cloud Data Integration

Activate your dbt models and metrics with ThoughtSpot

Four ways to write faster data models with Wizard for dbt Core

Transforming your data is an essential part of ELT. Learn how Fivetran’s Wizard for dbt Core™ and BigQuery can help.

Hevo vs Fivetran vs Integrate.io: An ETL Tool Comparison

The Denver Broncos score a better fan experience with Fivetran

[DEMO] Building Customer 360 views with healthy data

Optimize your data modeling with Wizard for dbt Core

6 most useful data visualization principles for analysts

The difference between consuming data and actioning it often comes down to one thing: effective data visualization. Case in point? John Snow’s famous cholera map. In 1854, John Snow (no, not that one) mapped cholera cases during an outbreak in London. Snow’s simple map uncovered a brand new pattern in the data: the cases all clustered around a shared water pump.

Data Lakes: The Achilles Heel of the Big Data Movement

Using Time Series Charts to Explore API Usage

One major reason for digging into API and product analytics is to be able to easily identify trends in the data. Of course, trends can be very tough to see when looking at something like raw API call logs but can be much easier when looking at a chart aimed at easily allowing you to visualize trends. Enter the Time Series chart.

How to Get Started with ClearML's Hyper-Datasets

In this blog post, we’ll be taking a closer look at Hyper-Datasets, which are essentially a supercharged version of ClearML Data.

3 types of data models and when to use them

Data modeling is the process of organizing your data into a structure, to make it more accessible and useful. Essentially, you’re deciding how the data will move in and out of your database, and mapping the data so that it remains clean and consistent. ThoughtSpot can take advantage of many kinds of data models, as well as modeling languages. Since you know your data best, it’s usually a good idea to spend some time customizing the modeling settings.

Automated Financial Storytelling at Your Fingertips: Here's How

Every financial professional understands that the numbers matter a great deal when it comes to reporting financial results. Accuracy, consistency, and timeliness are important. Those same professionals also know that there’s substantive meaning behind those numbers and that it’s important to tell the stories that lend additional depth and context to the raw financial statements.

Choosing The Best Approach to Data Mesh and Data Warehousing

Neustar Sets A New Bar For Accuracy In The Field Of Identity Resolutions

Universal Data Distribution with Cloudera DataFlow for the Public Cloud

Qlik Expands Google BigQuery Solutions, Adding Mainframe to SAP Business Data for Modern Analytics

In April this year, we announced that Qlik had successfully achieved Google Cloud Ready – BigQuery Designation for its Qlik Sense® cloud analytics solution and Qlik Data Integration®. We continue increasing customer confidence by combining multiple Qlik solutions alongside Google Cloud BigQuery to both help activate SAP data, and now mainframe data as well.

Increasing ABM Account Engagement by 50 Percent

Google Analytics 4 Migration: A Step By Step Guide

5 Ways Data Lake Can Benefit Your Organization

Today organizations are looking for better solutions to guarantee that their data and information are kept safe and structured. Using a data lake contributes to the creation of a centralized infrastructure for location management and enables any firm to manage, store, analyze, and efficiently categorize its data. Organizations find it extremely difficult to deal with data because the information is kept in silos and in multiple formats.

Frictionless Data? It's Here with Microsoft Intelligent Data Platform and Qlik

You’ve likely been hearing different versions of the same idea now for a while. Enterprises in every industry are investing in technology and strategies to drive more value from all their data across their entire organization.

Fivetran partners with Microsoft for the Intelligent Data Platform Partner Ecosystem

With Fivetran on Azure, enterprise customers can now implement fully automated, managed, no-code data pipelines for reliable and secure data delivery.

Changing Your ERP? Add Tax Tech That Works

Switching to a modern ERP software system affords many benefits, including increased efficiency, improved accuracy, and better control over your company’s finances. It is also an excellent opportunity to revisit many of the business processes that sit outside of your core ERP system. As you set out to improve your financial and operational procedures, you have an opportunity to rethink the way you perform tax planning, transfer pricing, budgeting, reporting, and analytics.

Reshape Your Year-Round Tax Function With Transfer Pricing Software

In many organizations, transfer pricing adjustments are like a lot of other last-minute activities. They seem to be ignored throughout most of the annual cycle. Then, they suddenly take on a great importance at year-end. That leaves the tax team scrambling to address an entire year’s worth of transactions. It also leads to interdepartmental friction in many cases. If transfer pricing is changed retroactively for the entire year, that can have far-reaching implications.

Building a Sustainable Data Warehouse Design

Alloy DB Demo - Integrate.io

AI at Scale isn't Magic, it's Data - Hybrid Data

A recent VentureBeat article, “4 AI trends: It’s all about scale in 2022 (so far),” highlighted the importance of scalability. I recommend you read the entire piece, but to me the key takeaway – AI at scale isn’t magic, it’s data – is reminiscent of the 1992 presidential election, when political consultant James Carville succinctly summarized the key to winning – “it’s the economy.”

What is Self Service Analytics? The Role of Accessible BI Explained

How to Run Workloads on Spark Operator with Dynamic Allocation Using MLRun

Demystifying the transactional database

Transactional databases are essential to many apps and software. Learn more about them and their benefits with Fivetran.

[DEMO] Streaming alerts to BigQuery

What's new in CDP Private Cloud Base 7.1.8

O'Reilly | Fundamentals of Data Observability

Enterprise data warehouses: Definition and guide

An enterprise data warehouse is critical to the long-term viability of your business.

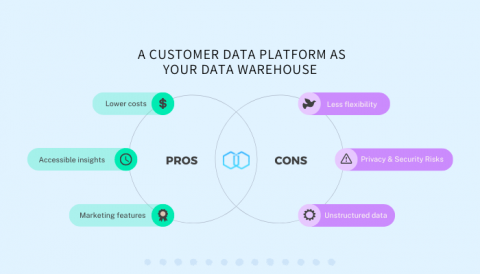

Pros & Cons of Using a Customer Data Platform as Your Data Warehouse

Using Advanced Functions in a report

How to Accelerate HuggingFace Throughput by 193%

Deploying models is becoming easier every day, especially thanks to excellent tutorials like Transformers-Deploy. It talks about how to convert and optimize a Huggingface model and deploy it on the Nvidia Triton inference engine. Nvidia Triton is an exceptionally fast and solid tool and should be very high on the list when searching for ways to deploy a model. Our developers know this, of course, so ClearML Serving uses Nvidia Triton on the backend if a model needs GPU acceleration.

How Twitter maximizes performance with BigQuery

Keboola is now officially Powered by Snowflake

Cloudera's Open Data Lakehouse Supercharged with dbt Core(tm)

dbt allows data teams to produce trusted data sets for reporting, ML modeling, and operational workflows using SQL, with a simple workflow that follows software engineering best practices like modularity, portability, and continuous integration/continuous development (CI/CD).

NRR doesn't matter

What does this mean for those of us managing or trying to understand fast-growing companies?

Credit Bureau Credibility - The Voice of the Customer

Why Doesn't the Modern Data Stack Result in a Modern Data Experience?

6 Best Data Integration Tools of 2022

Unravel Automated Data Quality Datasheet

Unravel now pulls in data quality checks from external tools into its single-pane-of-glass full-stack observability view.

Live: Educational Services for Snowflake Data Governance

How to build a self-service BI strategy

Think about the times you've wished you had more insight into your business data. Or all of the times you wished you could answer questions about your business performance without waiting for someone else to get back to you. Gone are the days when businesses rely solely on IT staff to provide reports and analytics. With self-service business intelligence (BI), users can create their own reports, dashboards, and data visualizations without relying on IT help.

Does Cost Reduction Play a Role in Digital Transformation?

Digital transformation. Everyone has their own ideas about what digital transformation means, so I decided to look up a few definitions.

Moving to Log Analytics for BigQuery export users

If you’ve already centralized your log analysis on BigQuery as your single pane of glass for logs & events…congratulations! With the introduction of Log Analytics (Public Preview), something great is now even better. It leverages BigQuery while also reducing your costs and accelerating your time to value with respect to exporting and analyzing your Google Cloud logs in BigQuery.

What Is a Data Clean Room, and Do You Need One?

There’s a lot of talk in the market these days about data clean rooms, along with some confusion about what exactly a data clean room is and how it differs from data sharing methods. In this blog post, I’d like to shed some light on this topic.

AI Infrastructure Alliance: Transforming Snowflake into an MLOps 'Feature Factory' using Iguazio

The Data Cloud & Public Sector With Deloitte

Following your local happiness gradient - Dr. Catherine Williams

How ThoughtSpot Uses ThoughtSpot for Field Marketing

As ThoughtSpot’s SVP of Corporate Marketing I oversee a field marketing team that acts as the glue between our Marketing and Field Sales teams. When people talk about field marketing, they’re often just thinking of events — but we have a far broader remit than that. Each member of the Field Marketing team sits within a specific sales region, acting as a kind of regional CMO.

3-Minute Recap: Unlocking the Value of Cloud Data and Analytics

DBTA recently hosted a roundtable webinar with four industry experts on “Unlocking the Value of Cloud Data and Analytics.” Moderated by Stephen Faig, Research Director, Unisphere Research and DBTA, the webinar featured presentations from Progress, Ahana, Reltio, and Unravel. You can see the full 1-hour webinar “Unlocking the Value of Cloud Data and Analytics” below. Here’s a quick recap of what each presentation covered.

Get Ready for the Next Generation of DataOps Observability

I was chatting with Sanjeev Mohan, Principal and Founder of SanjMo Consulting and former Research Vice President at Gartner, about how the emergence of DataOps is changing people’s idea of what “data observability” means. Not in any semantic sense or a definitional war of words, but in terms of what data teams need to stay on top of an increasingly complex modern data stack.

What Challenges Are Hindering the Success of Your Data Lake Initiative?

Developing More Accurate and Complex Machine-Learning Models with Snowpark for Python

Ep 59: New Zealand's Crown Research Institute CDAO, Jan Sheppard on Treating Data as a Treasure

7 Best Data Pipeline Tools 2022

The data pipeline is at the heart of your company’s operations. It allows you to take control of your raw data and use it to generate revenue-driving insights. However, managing all the different types of data pipeline operations (data extractions, transformations, loading into databases, orchestration, monitoring, and more) can be a little daunting. Here, we present the 7 best data pipeline tools of 2022, with pros, cons, and who they are most suitable for. 1. Keboola 2. Stitch 3. Segment 4.

Introduction to Automated Data Analytics (With Examples)

Yellowfin Named Embedded Business Intelligence Software Leader in G2 Fall Reports 2022

Overcome Financial Reporting Challenges with These 6 Best Practices

Extending The Power of Qlik Sense SaaS for OEM Partners

As the growth in embedding analytics into a product, application or service continues to accelerate in the market, some issues continue to frustrate our ISV and Data Provider partners in their journey to the cloud.

Fivetran SAP AppConnect simplifies data integration to cloud

Fivetran SAP AppConnect enables enterprises to perform log-based change data capture despite common license restrictions for databases managed by SAP applications.

Talend's contributions to Apache Beam

Apache Beam is an open-source, unified programming model for batch and streaming data processing pipelines that simplifies large-scale data processing dynamics. The Apache Beam model offers powerful abstractions that insulate you from low-level details of distributed data processing, such as coordinating individual workers, reading from sources and writing to sinks, etc.

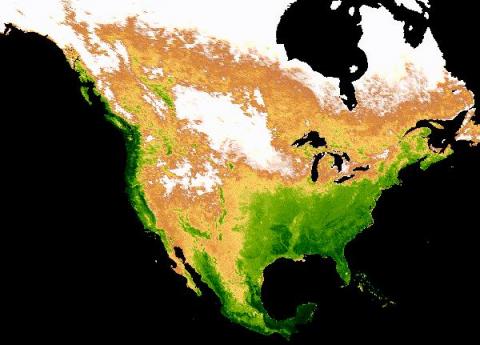

Building an automated data pipeline from BigQuery to Earth Engine with Cloud Functions

Over the years, vast amounts of satellite data have been collected, and ever more granular data are being collected every day. Until recently, those data have been an untapped asset in the commercial space. This is largely because the tools required for large-scale analysis of this type of data were not readily available, and neither was the satellite imagery itself. Thanks to Earth Engine, a planetary-scale platform for Earth science data & analysis, that is no longer the case.

Analyzing satellite images in Google Earth Engine with BigQuery SQL

Google Earth Engine (GEE) is a groundbreaking product that has been available for research and government use for more than a decade. Google Cloud recently launched GEE to General Availability for commercial use. This blog post describes a method to utilize GEE from within BigQuery’s SQL allowing SQL speakers to get access to and value from the vast troves of data available within Earth Engine.

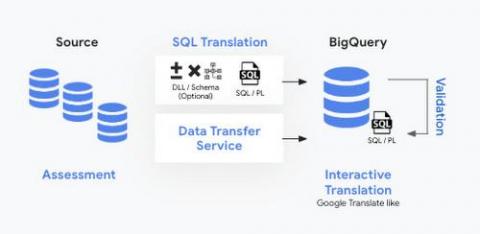

How to simplify and fast-track your data warehouse migrations using BigQuery Migration Service

Migrating data to the cloud can be a daunting task. Especially moving data from warehouses and legacy environments requires a systematic approach. These migrations usually need manual effort and can be error-prone. They are complex and involve several steps such as planning, system setup, query translation, schema analysis, data movement, validation, and performance optimization.

Scaling Kafka Brokers in Cloudera Data Hub

This blog post will provide guidance to administrators currently using or interested in using Kafka nodes to maintain cluster changes as they scale up or down to balance performance and cloud costs in production deployments. Kafka brokers contained within host groups enable the administrators to more easily add and remove nodes. This creates flexibility to handle real-time data feed volumes as they fluctuate.

Webinar: Unlocking the Value of Cloud Data and Analytics

Multiple ways to sort values in a report

Editing and saving a dashboard

Enterprise data and analytics in the cloud with Microsoft Azure and Talend

A Guide to Principal Component Analysis (PCA) for Machine Learning

Principal Component Analysis (PCA) is one of the most commonly used unsupervised machine learning algorithms across a variety of applications: exploratory data analysis, dimensionality reduction, information compression, data de-noising, and plenty more. In this blog, we will go through it step by step. Before we delve into its inner workings, let’s first get a better understanding of PCA. Imagine we have a 2-dimensional dataset.
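
For readers who want a concrete picture of the 2-dimensional case, here is a minimal sketch in plain Python. It is not taken from the linked article: the function name `pca_2d` and the sample points are hypothetical, and it assumes the textbook formulation of PCA (center the data, build the sample covariance matrix, take its eigenvectors).

```python
import math


def pca_2d(points):
    """Return ((lam1, lam2), first_axis) for a list of (x, y) points.

    lam1 >= lam2 are the eigenvalues of the sample covariance matrix;
    first_axis is the unit vector along the direction of greatest variance.
    """
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n

    # Sample covariance matrix [[sxx, sxy], [sxy, syy]] of the centered data.
    sxx = sum((x - mean_x) ** 2 for x, _ in points) / (n - 1)
    syy = sum((y - mean_y) ** 2 for _, y in points) / (n - 1)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in points) / (n - 1)

    # Closed-form eigenvalues of a symmetric 2x2 matrix.
    half_trace = (sxx + syy) / 2
    spread = math.sqrt(((sxx - syy) / 2) ** 2 + sxy ** 2)
    lam1, lam2 = half_trace + spread, half_trace - spread

    # Eigenvector belonging to lam1; fall back to a coordinate axis
    # when the two variables are uncorrelated.
    if sxy != 0:
        vx, vy = lam1 - syy, sxy
    elif sxx >= syy:
        vx, vy = 1.0, 0.0
    else:
        vx, vy = 0.0, 1.0
    norm = math.hypot(vx, vy)
    return (lam1, lam2), (vx / norm, vy / norm)


# Hypothetical sample: points lying exactly on the line y = x.
(lam1, lam2), axis = pca_2d([(0, 0), (1, 1), (2, 2), (3, 3)])
explained = lam1 / (lam1 + lam2)  # share of variance along the first axis
```

Because the sample points lie on a single line, the first principal axis points along that line and carries essentially all of the variance, which is the intuition behind using PCA for dimensionality reduction.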

7 Best Change Data Capture (CDC) Tools of 2022

How to Do Data Labeling, Versioning, and Management for ML

It has been months since Toloka and ClearML came together to create this joint project. Our goal was to showcase to other ML practitioners how to first gather data and then version and manage it before it is fed to an ML model. We believe that following those best practices will help others build better and more robust AI solutions. If you are curious, have a look at the project we have created together.

How to Distribute Machine Learning Workloads with Dask

Tell us if this sounds familiar. You’ve found an awesome data set that you think will allow you to train a machine learning (ML) model that will accomplish the project goals; the only problem is the data is too big to fit in the compute environment that you’re using. In the day and age of “big data,” most might think this issue is trivial, but like anything in the world of data science things are hardly ever as straightforward as they seem.

What is a database? Definition, types and examples

Databases are important for every organization. Learn more about what databases are and the different types available.

Keboola + ThoughtSpot = Automated insights in minutes

Power Your Lead Scoring with ML for Near Real-Time Predictions

Every organization wants to identify the right sales leads at the right time to optimize conversions. Lead scoring is a popular method for ranking prospects through an assessment of perceived value and sales-readiness. Scores are used to determine the order in which high-value leads are contacted, thus ensuring the best use of a salesperson’s time. Of course, lead scoring is only as good as the information supplied.