December 2023

The Data Integration Landscape in Healthcare: Improving Patient Care

Workato vs Boomi: Comprehensive Comparison

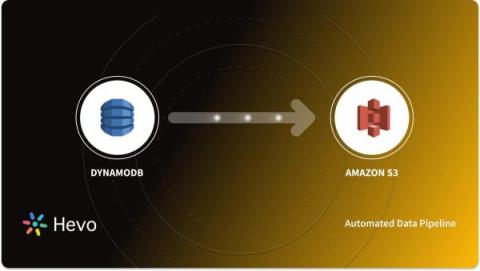

Connecting DynamoDB to S3 Using AWS Glue: 2 Easy Steps

10 Lead Enrichment Tools for Deeper Insights

People, Product, and Progress: The Pulse of Countly's 2023

This year, our fully remote team turned every corner of the world into an office. Zoom calls? More like daily digital adventures, where we navigated projects in our home-office havens (extra points for the coolest virtual backgrounds and accidentally matching outfits). Coffee catch-ups took a global twist, with us sipping our favorite brews in different time zones – talk about a 24/7 café vibe!

State of Multi-Cluster: Past, Present, and Future

#4 Kafka Live Stream | Creating Dead Letter Queues in Kafka with SQL Stream Processing

Introducing the SQL AI Assistant: Create, Edit, Explain, Optimize, and Fix Any Query

Imagine you’ve just started a new job working as a business analyst. You’ve been given a new burning business question that needs an immediate answer. How long would it take you to find the data you need to even begin to come up with a data-driven response? Imagine how many iterations of query writing you’d have to go through. In this scenario, you also have reports that need updating as well. Those contain some of the biggest hair-ball queries you’ve ever seen.

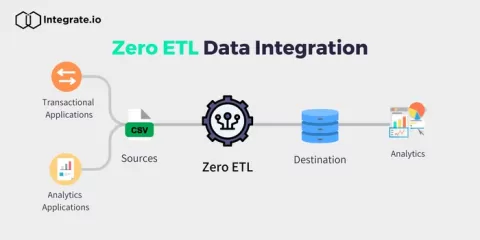

How is Zero ETL redefining modern data integration?

Cloud Data Cost Q&A 2024: Your Top 7 Cloud Data Cost Questions Answered (In 30 minutes)

Our architectural approach to support multi-product strategy

Announcing Cloudera's Enterprise Artificial Intelligence Partnership Ecosystem

Cloudera is launching and expanding partnerships to create a new enterprise artificial intelligence “AI” ecosystem. Businesses increasingly recognize AI solutions as critical differentiators in competitive markets and are ready to invest heavily to streamline their operations, improve customer experiences, and boost top-line growth.

How To Capture Data using Yellowfin Data Transformation Flow

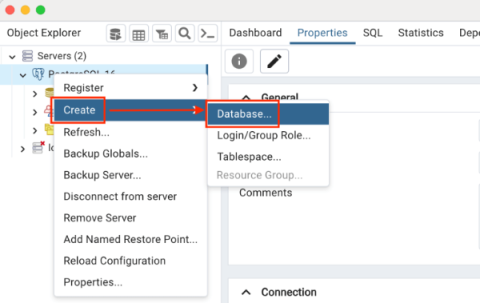

How to Set Up Amazon Aurora PostgreSQL

ServiceNow Accelerates Data-Driven Innovation by Rearchitecting Its Enterprise Data Platform on Snowflake

ServiceNow is focused on making the world work better for everyone. More than 7,700 customers rely on ServiceNow’s platform and solutions to optimize processes, break down silos and drive business value. Achieving 20% year-over-year growth with a 98% renewal rate (as of Q1 2023) requires a data-driven understanding of the customer journey.

Unlock the New Wave of Gen AI With Snowpark Container Services GPU-Powered Compute

The rise of generative AI (gen AI) is inspiring organizations to envision a future in which AI is integrated into all aspects of their operations for a more human, personalized and efficient customer experience. However, getting the required compute infrastructure into place, particularly GPUs for large language models (LLMs), is a real challenge. Accessing the necessary resources from cloud providers demands careful planning and up to month-long wait times due to the high demand for GPUs.

Establishing A Framework For Effective Adoption and Deployment of Generative AI Within Your Organization

Adopting and deploying Generative AI within your organization is pivotal to driving innovation and outsmarting the competition while also creating efficiency, productivity, and sustainable growth. Because AI adoption is not a one-size-fits-all process, each organization will have its own set of use cases, challenges, objectives, and resources.

Fivetran Demo: How to accelerate and automate SAP ERP data into Google Cloud and BigQuery

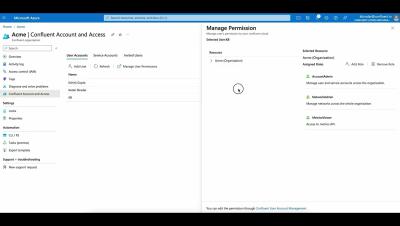

Confluent Access Management

Optimizing the Value of AI Solutions for the Public Sector

Without a doubt, 2023 has shaped up to be generative AI’s breakout year. Less than 12 months after the introduction of generative AI large language models such as ChatGPT and PaLM, image generators like DALL-E, Midjourney, and Stable Diffusion, and code generation tools like OpenAI Codex and GitHub Copilot, organizations across every industry, including government, are beginning to leverage generative AI regularly to increase creativity and productivity.

10 Best Google Analytics ETL Tools for 2024

LiveRamp Customers Build 'Foundation of Identity' With Snowflake Native Apps

The best marketing is truly data-driven, creating powerful product promotions and offers through an understanding of customer needs and preferences. But for many organizations, building this understanding is more akin to solving an ever-growing jigsaw puzzle (with no easy edge pieces!) than reading data insights from a beautiful dashboard.

SaaS in 60 - New Pivot Table

Unlock the Power of Data with AI, Machine Learning & Automation - Do More With Qlik - Episode 48

Faster time to value with the BigQuery Migration Service

#shorts - Tabular Reporting with #qlik #exceltips #excel #saas #cloud

Simplify and Accelerate Your Data Streaming Workloads With an Intuitive User Experience

Happy holidays from Confluent! It’s that time in the quarter again, when we get to share our latest and greatest features on Confluent Cloud. To start, we’re thrilled to share that Confluent ranked as a leader in The Forrester Wave™: Streaming Data Platforms, Q4 2023, and The Forrester Wave™: Cloud Data Pipelines, Q4 2023! Forrester strongly endorsed Confluent’s vision to transform data streaming platforms from a “nice-to-have” to a must-have.

Snowflake Announces Agreement to Acquire Samooha to Simplify Building Interoperable Data Clean Rooms in the Data Cloud

When businesses share sensitive first-party data with outside partners or customers, they must do so in a way that meets strict governance requirements around security and privacy. Data clean rooms have emerged as the technology to meet this need, enabling interoperability where multiple parties can collaborate on and analyze sensitive data in a governed way without exposing direct access to the underlying data and business logic.

ML-Based Forecasting and Anomaly Detection in Snowflake Cortex, Now in GA

Historically, only a few AI experts within an organization could develop insights using machine learning (ML) and predictive analytics. Yet in this new wave of AI, democratizing ML to more data teams is crucial—and for Snowflake SQL users, it’s now a reality.

What Lies Ahead in 2024? AI/ML Predictions for the New Year

How real-time data drives delivery this holiday season

European logistics, freight and delivery companies require real-time data across their shipping network to meet the needs of the festive season.

How to sync your Google Sheets data into your data warehouse in just a few minutes

Cross-cloud materialized views in BigQuery Omni enable multi-cloud analytics at scale

BigQuery Omni’s new cross-cloud materialized views let you perform cross-cloud analytics.

Fivetran named a Challenger in 2023 Gartner Magic Quadrant

Fivetran is positioned in the Challengers Quadrant for its ability to execute and completeness of vision.

Crash Analytics: The Backbone of Seamless User Experience

In the realm of product analytics, crash analytics plays a pivotal role in shaping a robust and user-friendly software environment. This comprehensive guide explores the significance of crash analytics within product analytics, highlighting its impact on user experience and product development.

Private Cloud Data Services | Data Catalog

The Three Essentials to Get to Responsible AI

The excitement (and drama) around AI continues to escalate. Why? Because the stakes are high. The race for competitive advantage by applying AI to new use cases is on! The launch of generative AI last year added fuel to the fire, and for good reason. Whereas the existing portfolio of AI tools had targeted the more technically minded like data scientists and engineers, new tools like ChatGPT handed the keys to the kingdom to anyone who could type a question.

5 Best Power BI Dashboards for 2024

How Customer 360 drives retail revenue

How Fivetran and Redkite combine to help retail enterprises understand, track and meet the needs of their customers.

Implementing API Analytics with Java

There are few technologies as ubiquitous – and crucial for business success – as APIs. APIs connect different software systems together, forming a common language that allows for substantial portability, scalability, and extensibility. What is just as important as the systems themselves is understanding the systems and discovering insights about their usage.

December Newsletter: Discover the Latest from Keboola

Commands, Queries, and Events | Microservices 101

The State of Agency-Client Collaboration in 2024

Predictions: The Cybersecurity Challenges of AI

Our recently released predictions report includes a number of important considerations about the likely trajectory of cybercrime in the coming years, and the strategies and tactics that will evolve in response. Every year, the story is “Attackers are getting more sophisticated, and defenders have to keep up.” As we enter a new era of advanced AI technology, we identify some surprising wrinkles to that perennial trend.

Unlocking Insights with BigQuery Google: A Setup Guide

5 Strategies for Contextualizing Your Numbers With CXO

Experience the Best of Gemini AI Directly From Keboola

How Not to Drown in Numbers | Sudheesh Nair (ThoughtSpot) & Jai Das (Sapphire Ventures)

What is Apache Flink?

How to Setup Tabular Reporting - (with Oauth Client Setup) - Do More with Qlik

The 3 Best Segment ETL Tools

November Newsletter: Discover the Latest from Keboola

New Snowflake Features Released in September-November 2023

At our recent Snowday event, we announced a wave of Snowflake product innovations for easier application development, new AI and LLM capabilities, better cost management and more. If you missed the event or need a refresh of what was presented, watch any Snowday session on demand. Let’s dive into all new releases in September, October and November.

Top 3 Data and Analytics Trends to Prepare for in 2024

Making Flink Serverless, With Queries for Less Than a Penny

Imagine easily enriching data streams and building stream processing applications in the cloud, without worrying about capacity planning, infrastructure and runtime upgrades, or performance monitoring. That's where our serverless Apache Flink® service comes in, as announced at this year’s Current | The Next Generation of Kafka Summit.

My Top 5 Data Moments for 2023

Your AI Journey: Customize Large Language Models with Cloudera

SaaS in 60 - Tabular Reporting

Hevo Data and Kipi.bi Partner to Deliver Improved Data Maturity to Customers

FedRAMP High Authorization on AWS GovCloud (US-West and US-East) Expands Snowflake's Commitment to Serving the Public Sector

It’s a milestone moment for Snowflake to have achieved FedRAMP High authorization on the AWS GovCloud (US-West and US-East Regions). This authorization, from the Federal Risk and Authorization Management Program (FedRAMP), is one of the most rigorous security endorsements a cloud service provider (CSP) can achieve.

Harnessing the Data Cloud to Empower Our Own Marketing Team: Building a Digital Ads Ecosystem on Snowflake

You need metrics to do your job well as a marketer but getting clear, meaningful metrics is a huge challenge. While digital advertisers and paid media professionals are on the hook to build ample sales pipeline and maximize return on ad spend (ROAS), they’re also expected to deliver personalized advertising content while navigating evolving privacy requirements and adhering to consumer expectations—all while extracting insights from siloed ad platforms.

15 Examples of Data Pipelines Built with Amazon Redshift

Using ClearML and MONAI for Deep Learning in Healthcare

This tutorial shows how to use ClearML to manage MONAI experiments. Originating from a project co-founded by NVIDIA, MONAI stands for Medical Open Network for AI. It is a domain-specific open-source PyTorch-based framework for deep learning in healthcare imaging. This blog shares how to use the ClearML handlers in conjunction with the MONAI Toolkit. To view our code example, visit our GitHub page.

What is Confluent?

Product Keynote: The Road Ahead

Turn customer feedback into opportunities using generative AI in BigQuery DataFrames

Natural language processing and large language models to help BigQuery process dataframes.

Leveraging Amazon S3 Cloud Object Storage for Analytics

The Top 6 Big Data Events for 2024

2024 Predictions for SAP Finance Teams

Fostering Collaboration and Innovation through Databox Engineering Guilds

The AI data quality conundrum

Visionaries from Capgemini, Databricks and Fivetran lay out the data quality imperative for implementing enterprise AI applications.

What is an AI copilot?

The rise of generative AI and the massive popularity of OpenAI’s ChatGPT have led to widespread recognition that software applications are about to fundamentally change. Generative AI offers the potential to both deliver breakthrough new application capabilities and transform the way people interact with software.

Apache Knox Unveiled: Intro to Webshell Wonders & Practical Know-How

18 SaaS Metrics and KPIs Every Company Should Track

Growing any business is difficult, but scaling a software as a service (SaaS) company is on a whole other level. Most SaaS companies struggle to achieve predictable revenue growth, while even public SaaS companies struggle to achieve profitability. To make a SaaS company successful, you can’t just change your software delivery model to the web and expect it all to work. You have to make thoughtful, data-driven decisions when it comes to your marketing, sales, and customer success operations.

Top 5 BI Events and Conferences in 2024

Integrate.io Developer Toolkit: Building Real-Time Data Streams

Drive Your Retail Media Strategy with Data Clean Rooms

Retail media is the topic everyone is talking about in the retail and consumer goods industry. And for good reason: the $45 billion U.S. retail media market is surging as retailers capitalize on the consumer shift to ecommerce while offering advertisers access to their unique audiences and data insights. Many retailers developed their own retail media networks over the last few years, from digital marketplaces and department stores to commerce intermediaries.

Carsales Smooth Journey to the Driver's Seat with ThoughtSpot

Unlocking Snowflake: Scale your data cloud efficiently with purpose-built AI

Sundeck Query Engineering Platform Optimizes Snowflake Warehouse Utilization

Pre-deployment Testing and Verification of API flows

API Deployment

Generating a Test Flow in Astera

API Monitoring in Astera

An Introduction to REST API with Python

Snowflake Automation: The Key to Scalability and Efficiency

Enterprise Apache Kafka Cluster Strategies: Insights and Best Practices

Apache Kafka® has become the de-facto standard for streaming data, helping companies deliver exceptional customer experiences, automate operations, and become software. As companies increase their use of real-time data, we have seen the proliferation of Kafka clusters within many enterprises. Often, siloed application and infrastructure teams set up and manage new clusters to solve new use cases as they arise.

The Official 2024 Checklist for HIPAA Compliance

The 7 Best Reporting Tools for 2024

Hitachi Vantara Launches Unified Compute Platform Integrated with GKE Enterprise to Modernize and Simplify Hybrid Cloud Management

The 10 Most-Tracked Google Analytics Metrics [Original Data]

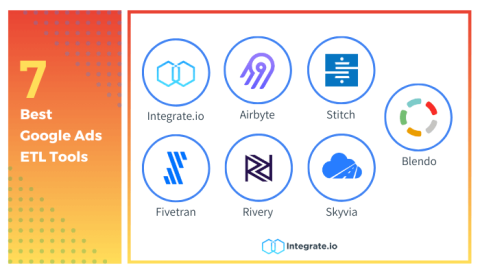

7 Top Google Ads ETL Tools for 2024

27 Best Free Human Annotated Datasets for Machine Learning

Unleashing Your Data's Potential: Virtual Storage Platform One

insightsoftware Recognized in the 2023 Gartner Magic Quadrant for Financial Planning Software Report

Snowflake's AWS re:Invent Highlights for Fast-Tracking ML, Gen AI and Application Innovations

We had a jam-packed week alongside more than 60,000 attendees at Amazon Web Services (AWS) re:Invent, one of the largest hands-on conferences in the cloud computing industry. Engaging with partners and customers — and showcasing what’s new on the Snowflake product front — made for a dynamic time in Las Vegas. Here are highlights from the collaborations, integrations and product enhancements that we were proud to dig in to throughout the week.

How To Design a Dashboard in Yellowfin: Part Two

SaaS in 60 - A Gift For You - New Layout Container

Introducing the New Layout Container - Tips, Tricks and Nuances

Snowflake Presents NetworkGenie: Revolutionizing Telecom with Generative AI

Asynchronous Events | Microservices 101

Workflow Tasks in an API Flow

Database CRUD APIs Auto-Generation

7 Big Data Security Changes You Need to Know in 2024

Data security will remain one of the biggest concerns for businesses this year. According to IBM, the average data breach in 2023 cost $4.45 million, and 82% of breaches involved data stored in the cloud. Damages from cybercrime, including the cost of data recovery, could total $10.5 trillion annually by 2025, causing more business owners to review their data security protocols. Which specific changes should you implement in the next 12 months?

Introducing Data Portal in Stream Governance

Today, we’re excited to announce the general availability of Data Portal on Confluent Cloud. Data Portal is built on top of Stream Governance, the industry’s only fully managed data governance suite for Apache Kafka® and data streaming. The developer-friendly, self-service UI provides an easy and curated way to find, understand, and enrich all of your data streams, enabling users across your organization to build and launch streaming applications faster.

A Beginner's Guide to Embedded Analytics and BI

Top 8 Data Analytics Tools For 2024

How to give marketers a safe, self-serve Customer 360

Deliver faster marketing insights with governed data movement and democratization.

Build an Open Data Lakehouse with Iceberg Tables, Now in Public Preview

Apache Iceberg’s ecosystem of diverse adopters, contributors and commercial support continues to grow, establishing itself as the industry standard table format for an open data lakehouse architecture. Snowflake’s support for Iceberg Tables is now in public preview, helping customers build and integrate Snowflake into their lake architecture. In this blog post, we’ll dive deeper into the considerations for selecting an Iceberg Table catalog and how catalog conversion works.

#shorts - Santa's 25 Days of Visualizations - #1 The Indexed Line Chart #data #visualization

Cloudera and AWS | Customer Obsessed, Migration Focused

Data-driven decisions with YugabyteDB and BigQuery

How to integrate YugabyteDB with BigQuery using CDC, and why you would want to.

Why ETL Data Modeling is Critical in 2024

Like peanut butter and jelly, ETL and data modeling are a winning combo. Data modeling can't exist without ETL, and ETL can't exist without data modeling. Not if you want to model data properly. Combining the two defines the rules for data transformations and preps data for big data analytics. In the age of big data, businesses can learn more than ever about their customers, identify new product opportunities, and so on.

Top 5 Best CDC Tools for 2024

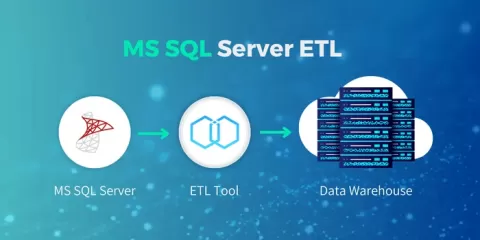

11 Top MS SQL ETL Tools for 2024

Top 5 Amazon S3 ETL Tools For 2024

Built with BigQuery: LiveRamp's open approach to optimizing customer experiences

Built with BigQuery, LiveRamp Safe Haven enables cross-channel marketing analytics to help customers execute media campaigns.

How to build a data foundation for generative AI

GenAI depends on data maturity, in which an organization demonstrates mastery over both integrating data – moving and transforming it – and governing its use.

Building Trust in Public Sector AI Starts with Trusting Your Data

In recent years, governments across the globe have recognized the transformative potential of artificial intelligence (AI) and have embarked on initiatives to harness this technology to drive innovation and serve their citizens more effectively. These government-led efforts have had a profound impact on the development and adoption of AI solutions in the public sector, paving the way for a future where data-driven decision-making and automation are the norm.

Keboola, Data Operations Supercharger, raises $32M in Series A Funding

New Countly App User Export Format: How, What, and Why?

Recently we introduced a breaking change in how user information is exported from Countly, and we wanted to explain why this change was made and what to do to keep your plugins supported. But before we dive into the whys, let's first reiterate the "what" part.