ODSC West: Building Operational Pipelines for Machine and Deep Learning

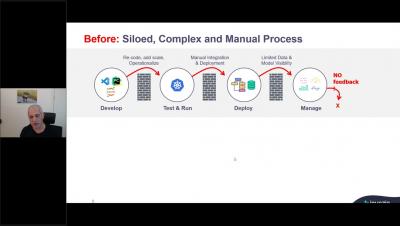

MLOps holds the key to accelerating the development and deployment of AI, so that enterprises can derive real business value from their AI initiatives. From the first model deployed to scaling data science across the organization, the foundation you set will enable your team to build and monitor a growing number of AI applications in production. In this talk, we will share best practices from our experience with enterprise customers who have effectively built and deployed composite machine and deep learning pipelines.