A Starter Guide to Cloud ETL Tools

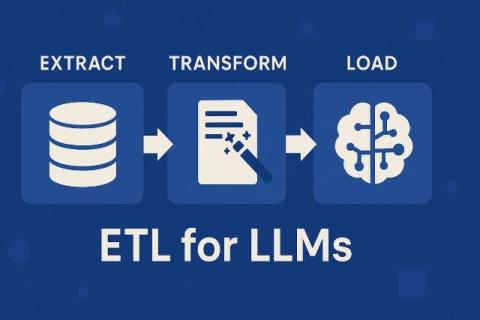

In today's world of Internet technology and instant access to a wide range of information, companies constantly receive unprecedented amounts of data from many sources and in many different formats. Sorting through this mass of data by hand to find patterns and actionable insights is nearly impossible. This is where the Extract, Transform, and Load (ETL) process becomes invaluable: it pulls data out of its sources, converts it into a consistent structure, and loads it into a central destination where it can be analyzed. Cloud-based ETL platforms designed for low-code data integration take this a step further by handling much of that work without extensive hand-written code.
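To make the three stages concrete, here is a minimal local sketch in Python. It assumes two hypothetical sources (a CSV feed and a JSON-lines feed describing the same kind of order record) and uses an in-memory SQLite database as a stand-in for the target warehouse. It only illustrates the Extract, Transform, Load pattern itself; cloud ETL platforms run these steps through their own managed connectors, and nothing here reflects any particular vendor's API.

```python
import csv
import io
import json
import sqlite3

# Hypothetical raw inputs: the same kind of record arriving from two
# sources in two different formats (CSV and JSON lines).
CSV_SOURCE = "order_id,amount_usd\n1,19.99\n2,5.00\n"
JSON_SOURCE = '{"id": 3, "amount": "42.50"}\n{"id": 4, "amount": "7.25"}\n'


def extract():
    """Extract: read raw records from each source as-is."""
    csv_rows = list(csv.DictReader(io.StringIO(CSV_SOURCE)))
    json_rows = [json.loads(line) for line in JSON_SOURCE.splitlines() if line]
    return csv_rows, json_rows


def transform(csv_rows, json_rows):
    """Transform: map both formats onto one schema with consistent types."""
    records = [(int(r["order_id"]), float(r["amount_usd"])) for r in csv_rows]
    records += [(int(r["id"]), float(r["amount"])) for r in json_rows]
    return records


def load(records, conn):
    """Load: write the unified records into a target table."""
    conn.execute(
        "CREATE TABLE orders (order_id INTEGER PRIMARY KEY, amount_usd REAL)"
    )
    conn.executemany("INSERT INTO orders VALUES (?, ?)", records)
    conn.commit()


def run_pipeline():
    """Run extract -> transform -> load against an in-memory database."""
    conn = sqlite3.connect(":memory:")
    load(transform(*extract()), conn)
    return conn


if __name__ == "__main__":
    conn = run_pipeline()
    print(conn.execute("SELECT COUNT(*), SUM(amount_usd) FROM orders").fetchone())
```

The key point is the middle step: two sources that disagree on field names (`order_id` vs `id`) and types (numbers stored as strings) end up in a single table with one schema, which is what makes the combined data queryable.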