Why Your Company Will Be Running OpenClaw Next Year

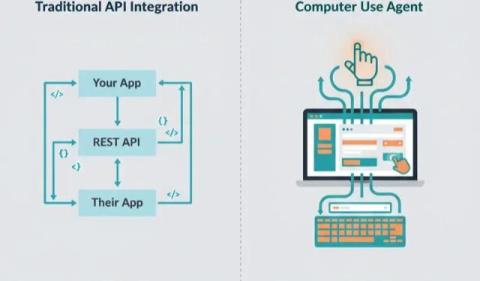

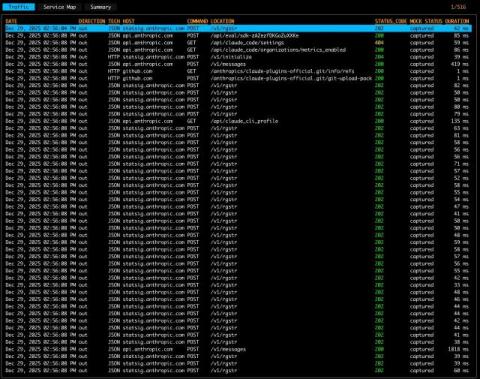

You’ve probably heard of OpenClaw. Maybe you’ve seen the demos where an AI agent opens a browser, navigates to your CRM, fills in a form, and files a support ticket, all with no API required. Maybe you thought, “That’s cool, but I’d never run that at work.” Your employees already are. According to Permiso’s research, 22% of enterprise customers have employees running OpenClaw without IT approval.