AI Coding Agents Break What Works

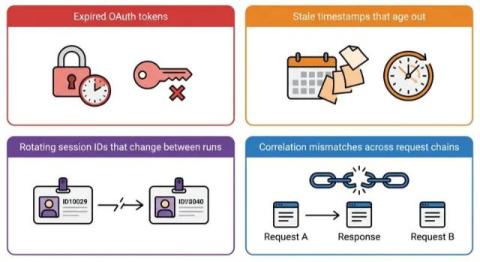

Your AI coding agent just made every test pass. Ship it, right? Not so fast. A growing class of AI-generated bugs doesn’t come from writing bad code. It comes from the AI changing working code to accommodate its own mistakes. This isn’t a theoretical risk. It’s happening now, in production codebases, and it’s often harder to catch than a bug the AI introduces from scratch.