ClearML Autoscaler: How It Works & Solves Problems

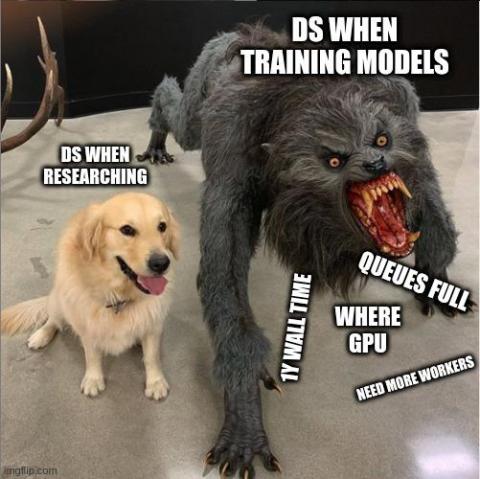

The processing power you or your team needs can be very high one day and very low the next. This is especially common in machine learning environments. One day a team might be training models and the need for compute will be sky high; other days they’ll be doing research and figuring out how to solve a specific problem, needing only a web browser and some coffee.

How to Use a Continual Learning Pipeline to Maintain High Performances of an AI Model in Production - Guest Blogpost

The algorithm team at WSC Sports faced a challenge. How could our computer vision model, which works in a dynamic environment, maintain high-quality results, especially when new data may appear daily and look visually different from the data it was trained on? Bit of a head-scratcher, right? Well, we’ve developed a system that does just that and shows exceptional results!

The ClearML Autoscaler

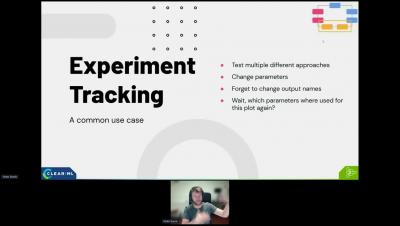

[MLOPS] the importance of experiment tracking and data traceability

Hyperparameter Optimization (A Real-Life Use Case)

This is part 3 of our 3-part Hyperparameter Optimization series. If you haven’t read the previous 2 parts, where we explain ClearML’s approach to HPO, you can find them here and here. In this blog post, we will focus on applying everything we learned to a “real world” use case.

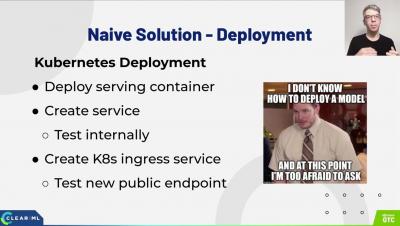

[MLOPS] From GTC22: A journey towards building a scalable AI serving solution

Improving a day in the life of: Data Scientist - How ClearML is actually used.

Cloud vendor's MLOps or Open source?

If someone had told my 15-years-ago self that I’d become a DevOps engineer, I’d have scratched my head and asked them to repeat that. Back then, of course, applications were either maintained on a dedicated server or (sigh!) installed on end-user machines with little control or flexibility. Today, these paradigms are essentially obsolete; cloud computing is ubiquitous.