Confluent Connect: FY'25 Launch Highlights - Unlocking Data & Powering AI Pipelines

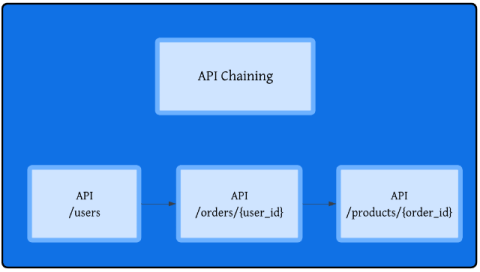

Dive into the biggest breakthroughs for the Confluent Connect ecosystem in 2025! This year, we made moving data easier than ever, from modernizing legacy systems with the Oracle XStream CDC Premium Connector to empowering developers with Custom SMTs and Custom Connectors on Google Cloud. Discover the more than 10 new connectors we launched, including Snowflake Source, Azure Cosmos DB v2, and Neo4j Sink, plus the release of Confluent Hub 2.0. Learn how Confluent Cloud connectors are breaking down silos and building bridges for your next-gen AI and data modernization projects.