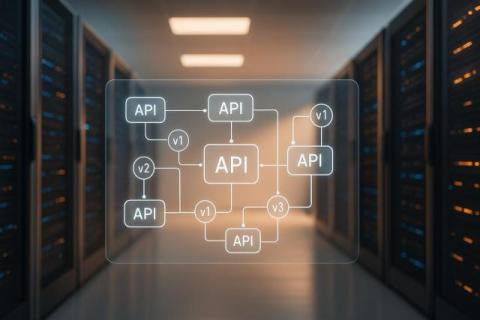

From API Automation to Data AI Gateway: Why DreamFactory's Evolution Matters Now

DreamFactory has evolved from a basic API automation tool into a Data AI Gateway that addresses modern enterprise challenges such as API management, data integration, and security. Here's why this evolution is important:

- API Management Simplified: DreamFactory generates secure REST APIs for databases in just 5 minutes, saving time and reducing development costs by up to $201,783 annually.