
What is an MCP for Kafka with Tun Shwe

AI agents are only as good as the data they can access. In this video, we explore the Model Context Protocol (MCP) and how it creates a bridge between AI models and Apache Kafka. Learn how MCP allows AI agents to securely produce to, consume from, and manage Kafka topics in real time, transforming your event streams into actionable context for LLMs.

The $11 Billion Question: What the acquisition of Confluent by IBM means

What’s remarkable is how long Confluent competed at the highest level. Creating a category and a type of application is hard; transitioning to the cloud and surviving against hyperscalers is even harder. That alone is a huge achievement. Some see this as a pressured exit, but another way to look at it is as a strategic purchase by IBM to strengthen its position in enterprise data movement and integration.

JSON schemas to control your Lenses & infrastructure provisioning

When we talk about JSON Schema in the world of Kafka and streaming, you might assume we mean the schema for events or messages. But how many times have you fumbled with configuration while trying to get an application deployed? Schemas that describe how to configure and deploy applications, or applications-as-code, are just as important, because they allow us to automate application landscapes. This matters all the more as we will soon be awash with catalogs for AI agents, MCP servers, and the like.

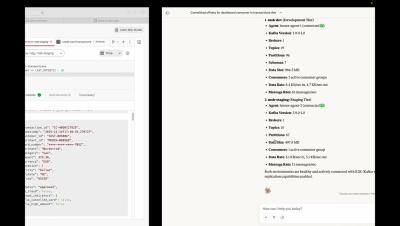

Fat Fingers? Not With Our K2K Config Schema Protector!

Picture this: It's 3 AM. You’re on call in case there is an outage. A team on the other side of the world merged a PR and released a new version of K2K Replicator, and it crashed. Consumer group lag is spiking through the roof. You’re paged, woken up, and at your laptop. The team has already reverted the PR and things are stabilising, but what really happened? You have to investigate now, because a postmortem has to be done.