Kafka Service Bundle: Managed Apache Kafka Without Lock-In

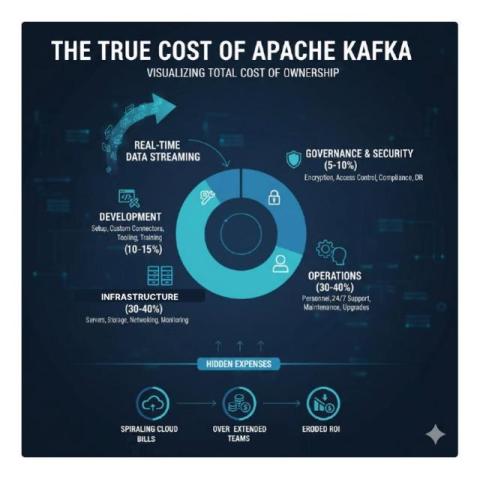

Apache Kafka delivers high-throughput, low-latency performance for real-time data streaming, but managing it in-house requires deep expertise. That’s where OpenLogic’s Kafka Service Bundle comes in. This managed Apache Kafka solution lets enterprises run Kafka without the vendor lock-in of commercial cloud offerings.

Key benefits:

- Simplified operations
- Controlled costs
- Full ownership of your data

With OpenLogic, your business gets the freedom and flexibility of open source Kafka, supported by the expertise and reliability enterprises depend on.