Running Kafka in Kubernetes: What We Learned

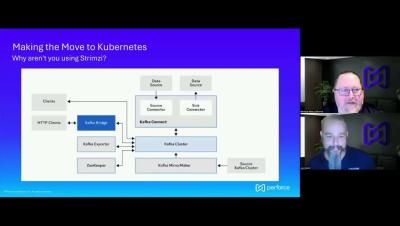

Apache Kafka is mission-critical for many organizations, but where you deploy it matters just as much as how you use it. In this video, two OpenLogic experts discuss why they increasingly encourage customers to move their Kafka clusters to Kubernetes and manage them with the Strimzi operator, and what that shift unlocks in terms of operations, scalability, and resilience.