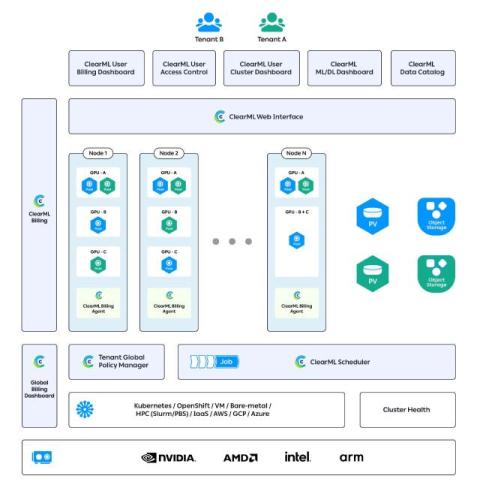

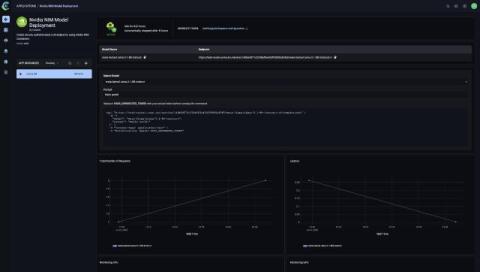

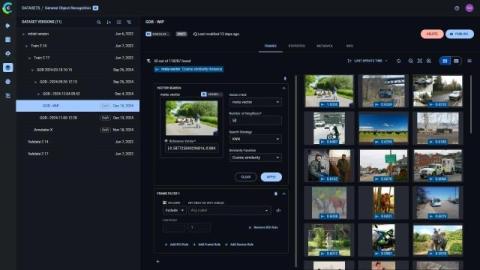

ClearML Enterprise 3.26 Is Here: Static Routes, NIM Deployment, SGLang Support, and More

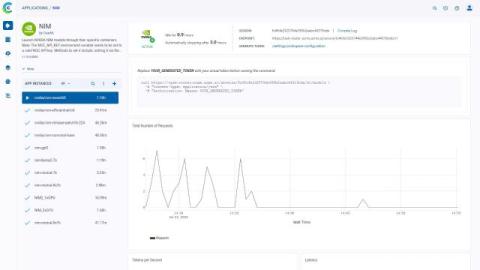

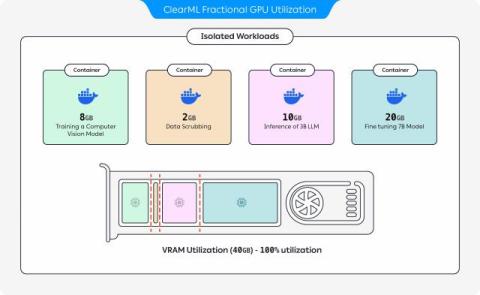

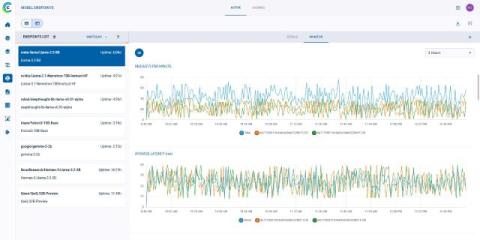

ClearML Enterprise v3.26 brings powerful upgrades across model deployment, NIM container deployment, and dataset management, all part of our end-to-end platform for managing and scaling AI in the enterprise.