The Business Value of the DSP: Part 2 - A Framework for Measuring Impact

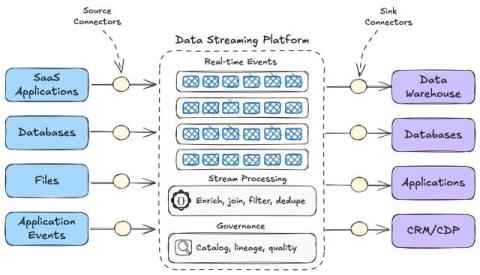

In our previous blog post, we explored how Confluent has evolved into a comprehensive data streaming platform (DSP). Now that we understand what a DSP is, let's address a key question: How does it deliver business value? When business stakeholders assess value, they typically look at how a solution might help drive benefits across three key areas. So how does a DSP support these areas?