The Durable Sessions stack is forming

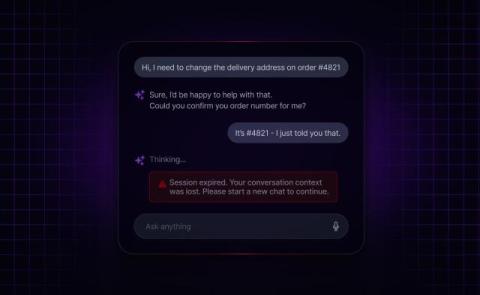

By Matt O'Riordan, CEO and Co-Founder

Across AI infrastructure right now, one word is doing a lot of work: durable. It is attached to execution. To agents. To workflows. To sessions. To streams. To transports. To memory. Every few weeks, another product ships with "durable" in the name. This is not branding noise. The underlying observation is the same in every case. AI systems are long-lived. They can fail at any layer. They need infrastructure that assumes failure rather than hopes against it.