
Streaming Edge Data Collection and Global Data Distribution

In the first blog of the Universal Data Distribution blog series, we discussed the emerging need within enterprise organizations to take control of their data flows. From origin through all points of consumption both on-prem and in the cloud, all data flows need to be controlled in a simple, secure, universal, scalable, and cost-effective way.

Data & The Culture Transformation

The Future Is Hybrid Data, Embrace It

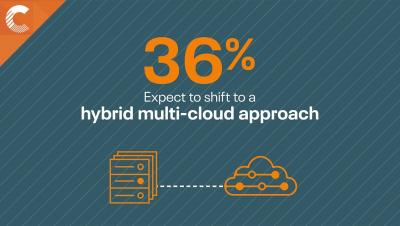

We live in a hybrid data world. In the past decade, the amount of structured data created, captured, copied, and consumed globally has grown from less than 1 ZB in 2011 to nearly 14 ZB in 2020. That growth is impressive, but it is dwarfed by the amount of unstructured data, cloud data, and machine data: another 50 ZB.

The Power of Exploratory Data Analysis and Visualization for ML

Data scientists and machine learning engineers in enterprise organizations need to fully understand their data in order to properly analyze it, build models, and power machine learning use cases across their business. Because little tooling is designed specifically for data discovery, exploration, and preliminary analysis, gaining that understanding is a significant challenge for these teams.

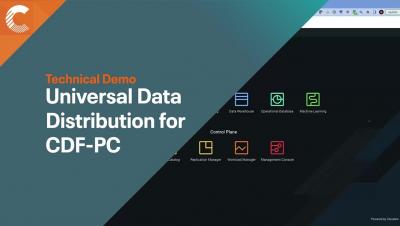

Technical Demo - Universal Data Distribution With Cloudera DataFlow for Public Cloud

Moving Enterprise Data From Anywhere to Any System Made Easy

Since 2015, the Cloudera DataFlow team has been helping the largest enterprise organizations in the world adopt Apache NiFi as their enterprise standard data movement tool. Over the last few years, we have had a front-row seat to our customers’ hybrid cloud journeys as they expand their data estates across the edge, on-premises, and multiple cloud providers.

How to Overcome Hybrid Cloud Migration Roadblocks

Tailored Support Designed for You

At Cloudera, we’re building the world’s only hybrid data platform that’s founded on open source and truly hybrid. What do we mean by truly hybrid? Not only does it seamlessly support on-premises and cloud-based deployments alike, but, uniquely, it is cloud vendor agnostic, allowing multi-cloud strategies to thrive.