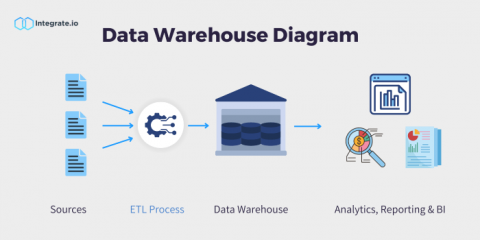

What Is a Data Warehouse & Why Is It Important?

In today's digital era, a data warehouse is a cornerstone for businesses. A data warehouse is a digital repository that houses an organization's vast amounts of data. It serves as both a vault and a library, ensuring data is not only safely stored but also easily accessible. Being able to access your company's data is critical to business success.