5 Ways to Improve Data Quality with Teradata

In 1979, Teradata began life as a collaboration between Caltech and Citibank. Today, this enterprise software group is all about redefining business intelligence tools and data management. The Teradata Database is now the Teradata Vantage Advanced SQL Engine. The name not only highlights the evolution of the company but also recognizes that tech consumers now expect more from their tools.

What Is a Data Stack?

These days, there are two kinds of businesses: data-driven organizations and companies that are about to go bust. Often, the only difference is the data stack. Data quality is an existential issue: to survive, you need a fast, reliable flow of information. The data stack is the entire collection of technologies that make this possible. Let's take a look at how any company can assemble a data stack that's ready for the future.

What is data quality, why does it matter, and how can you improve it?

We’ve all heard the war stories born out of wrong data. These stories don’t just make you and your company look foolish; they also cause serious economic damage. And the more your enterprise relies on data, the greater the potential for harm. Here, we take a look at what data quality is and how the entire data quality management process can be improved.

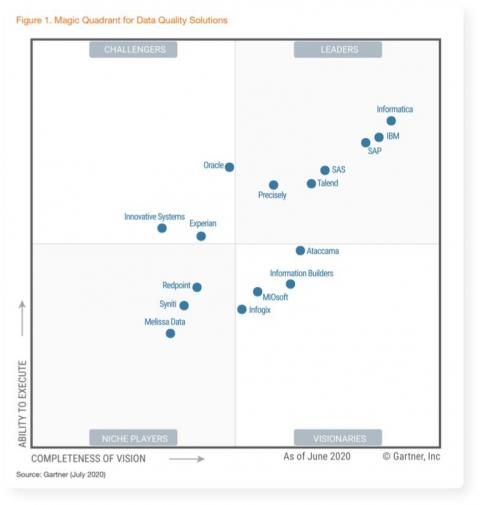

Talend Data Inventory - Turning Data Quality into a Team Sport

Our reflections on the 2020 Gartner Magic Quadrant for Data Quality Solutions

“Every organization — no matter how big or how small — needs data quality,” says Gartner in its newly published Magic Quadrant for Data Quality Solutions. However, with more and more data coming from more and more sources, it’s increasingly hard for data professionals to transform the growing data chaos into trusted and valuable data assets.