Beyond Hyped: Iguazio Named in 8 Gartner Hype Cycles for 2022

We’re so proud to share that Iguazio has been named a sample vendor in eight Gartner Hype Cycles for 2022, across the following categories: MLOps, Logical Feature Store, Adaptive ML, Data-Centric AI, AI Engineering, AI TRiSM, Operational AI Systems, ModelOps, AI Engineering in HCLS and Continuous Intelligence. We are delighted to be mentioned alongside global industry leaders like AWS, IBM, Microsoft, Google, Databricks and Dataiku.

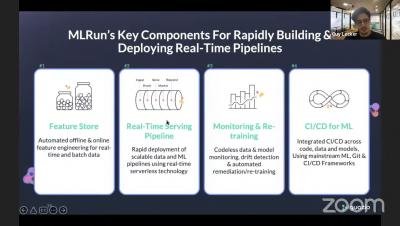

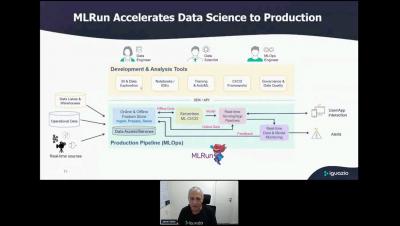

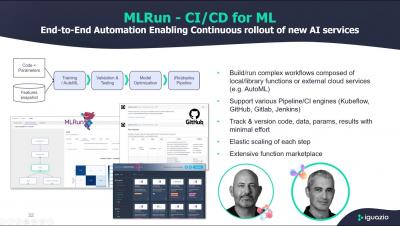

MLOps Bay Area Summit: From AutoML to AutoMLOps

AutoMLOps means automating engineering tasks so that your code is automatically ready for production. In this session, Yaron outlined the challenges, described the open-source tools available for AutoMLOps, and more!

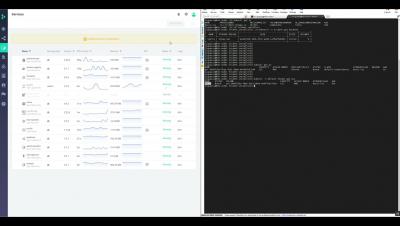

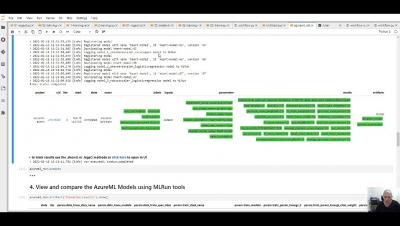

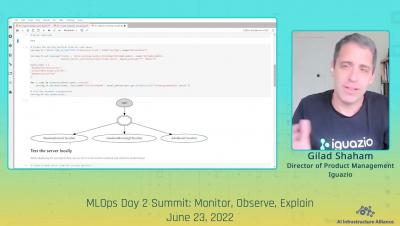

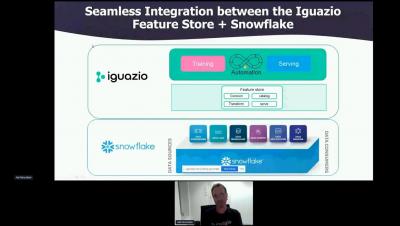

MLOps NYC Summit: Building an Automated ML Pipeline with a Feature Store using Iguazio & Snowflake

In this session, we will describe the challenges of operationalizing machine and deep learning. We’ll explain the production-first approach to MLOps pipelines: a modular strategy in which the different components provide a continuous, automated, and far simpler way to move from research and development to scalable production pipelines, without the need to refactor code, add glue logic, or spend significant effort on data and ML engineering.

Best Practices for Succeeding with MLOps ft. Noah Gift - MLOps Live 18

As the MLOps practice matures, there is a growing body of stories about what works well and what doesn’t. If you’re building up your enterprise MLOps muscle, why rely on trial and error? Instead, tap into the collective memory of thousands of organizations that have spent the last couple of years building their MLOps practices internally, and learn from their experience.