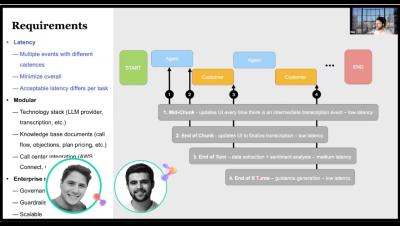

Building Agent Co-pilots for Proactive Call Centers

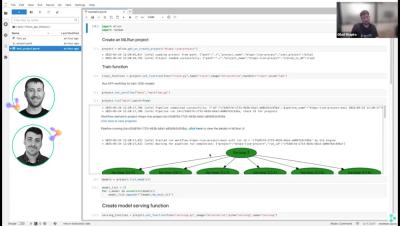

Gen AI call center co-pilots can give enterprises operational visibility and insights while automating repetitive tasks, which improves the customer experience. In this post, we show how a large health insurance provider implemented an agentic co-pilot designed to scale across multiple call centers and environments. For a deep dive into the architecture and a demo of the co-pilot, watch the webinar this blog is based on.