Realtime steering: interrupt, barge-in, redirect, and guide the AI

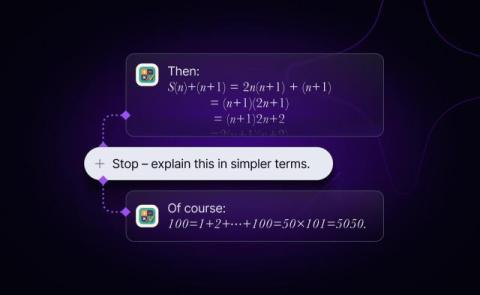

Start typing, change your mind, redirect the AI mid-response. It just works. That is the promise of realtime steering. Users expect to interrupt an answer, correct its direction, or inject new instructions on the fly without losing context or restarting the session. It feels simple, but delivering it requires low-latency control signals, reliable cancellation, and shared conversational state that survives disconnects and device switches.
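To make "reliable cancellation" and "shared conversational state" concrete, here is a minimal sketch in Python's `asyncio`. Everything in it is an assumption for illustration: `SteerableSession`, `send`, and the fake token loop are hypothetical names, not part of any real product or SDK, and the "model" is just a timed loop. The point is the shape of barge-in: a new message cancels the in-flight response, the partial output is recorded, and the history carries on.

```python
import asyncio


class SteerableSession:
    """Hypothetical session: shared history plus at most one in-flight response."""

    def __init__(self):
        self.history = []  # conversation state that survives interruptions
        self._task = None  # the in-flight response task, if any

    async def _respond(self, prompt):
        # Stand-in for a streaming model call: emits one token per tick.
        partial = []
        try:
            for token in f"answer to {prompt}".split():
                await asyncio.sleep(0.01)
                partial.append(token)
        except asyncio.CancelledError:
            # Record what was already streamed so context is not lost.
            self.history.append(("assistant", " ".join(partial) + " [interrupted]"))
            raise
        self.history.append(("assistant", " ".join(partial)))

    async def send(self, prompt):
        # Barge-in: cancel any in-flight response before starting the new one.
        if self._task is not None and not self._task.done():
            self._task.cancel()
            # Wait for the cancelled task to finish its cleanup.
            await asyncio.gather(self._task, return_exceptions=True)
        self.history.append(("user", prompt))
        self._task = asyncio.create_task(self._respond(prompt))
        return self._task


async def demo():
    session = SteerableSession()
    await session.send("explain websockets in detail please")
    await asyncio.sleep(0.025)  # the user changes their mind mid-response
    task = await session.send("summarize instead")
    await task
    return session.history


if __name__ == "__main__":
    for role, text in asyncio.run(demo()):
        print(f"{role}: {text}")
```

A real system would also need the parts this sketch leaves out: a low-latency transport for the interrupt signal, and history stored somewhere that outlives a single process so it survives disconnects and device switches.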