Configuring Kong Dedicated Cloud Gateways with Managed Redis in a Multi-Cloud Environment

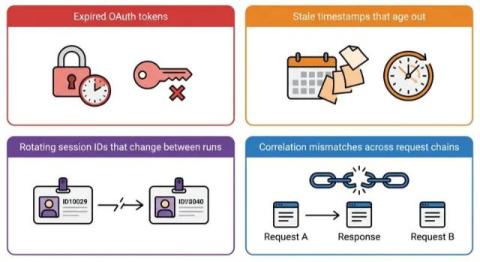

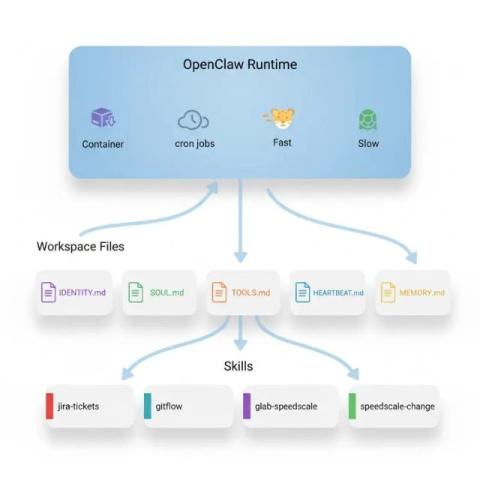

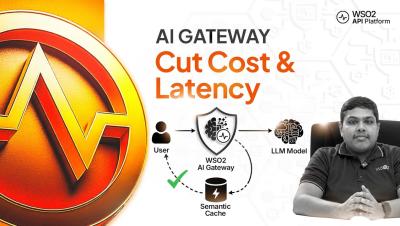

As businesses adopt multi-cloud architectures and agentic AI, one challenge persists: state synchronization. API and AI gateways need a robust persistence layer to synchronize data, whether for governing AI token usage, facilitating agent-to-agent communication, or improving performance through caching.