How to Use Flink SQL, Streamlit, and Kafka: Part 2

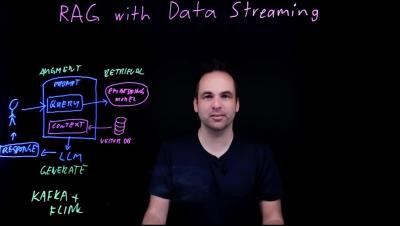

In part one of this series, we walked through how to use Streamlit, Apache Kafka, and Apache Flink to build a live, data-driven user interface for a market data application in which the user selects a stock (e.g., SPY), and we discussed the structure of the app at a high level. First, stock bid price data arrives via an Alpaca websocket. It is then produced to a Kafka topic in Confluent Cloud, where it is also processed with Flink SQL.
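
As a rough illustration of the "websocket message to Kafka record" step in that flow, here is a minimal sketch of turning one quote into a key/value pair. The function name `quote_to_record` and the field names `symbol`, `bid_price`, and `timestamp` are assumptions made for this example, not necessarily what the app uses.

```python
import json
from datetime import datetime, timezone
from typing import Tuple


def quote_to_record(symbol: str, bid_price: float, timestamp: datetime) -> Tuple[bytes, bytes]:
    """Turn one stock quote into a (key, value) pair for a Kafka record.

    Hypothetical helper: the field names are assumed for illustration.
    """
    # Keying by symbol keeps all quotes for one stock in the same partition.
    key = symbol.encode("utf-8")
    # Serialize the payload as JSON; the timestamp becomes an ISO 8601 string.
    value = json.dumps(
        {
            "symbol": symbol,
            "bid_price": bid_price,
            "timestamp": timestamp.isoformat(),
        }
    ).encode("utf-8")
    return key, value


# Example quote with made-up values.
key, value = quote_to_record(
    "SPY", 501.25, datetime(2024, 1, 2, 14, 30, tzinfo=timezone.utc)
)
```

In a running app, a pair like this would be handed to a Kafka producer client, and Flink SQL would then read the resulting topic as a table.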