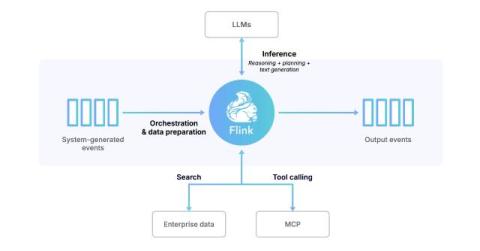

Confluent Cloud is now available in the new AWS Marketplace AI Agents and Tools category

Confluent has announced the availability of Confluent Cloud in the new AI Agents and Tools category of AWS Marketplace. AWS customers can now discover, buy, and deploy AI agent solutions, including Confluent Cloud, Confluent's fully managed data streaming platform, directly through their AWS accounts, helping them accelerate the development of AI agents and agentic workflows.