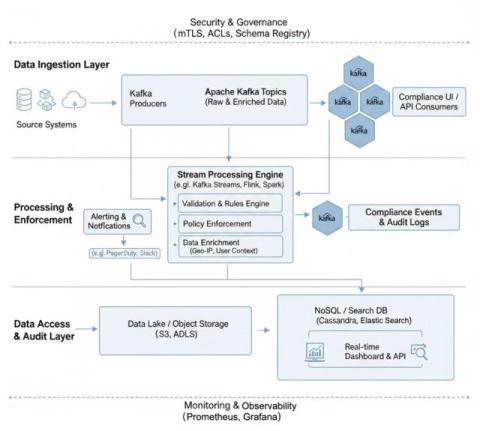

How to Build Real-Time Compliance & Audit Logging With Apache Kafka

Compliance teams have traditionally relied on batch exports for their audit logs. The method works, but it is too slow: waiting hours, or even days, for a batch export of your audit data leaves your organization vulnerable, because nothing in that data can be reviewed or acted on until the export arrives.