Confluent Cloud Is Your Life (K)Raft Away From Hosted Apache Kafka

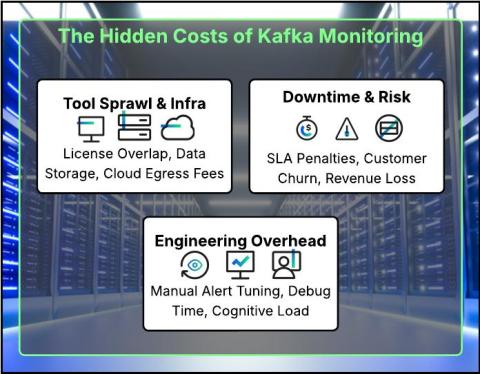

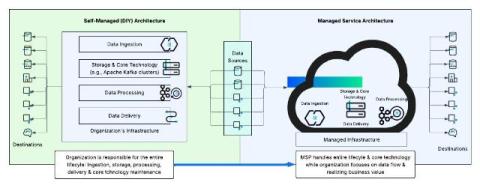

At its core, streaming your data with Apache Kafka means moving data from one point to another in real time, much like a river flowing from its source to its destination. Beneath this seemingly straightforward goal, however, lie significant complexity and hidden costs. The multitude of deployment options, hosted and managed Kafka services, and design choices makes the data streaming landscape difficult to navigate.