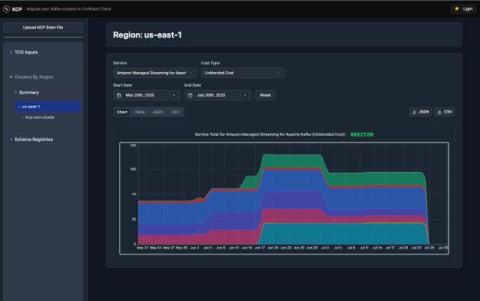

Kafka Copy Paste (KCP): How to Migrate to Confluent Cloud in Days, Not Weeks

While Apache Kafka is incredibly powerful, self-managing its brokers, upgrades, capacity, security, and incidents can quickly distract teams from what matters most: building real-time applications and delivering business value. Confluent Cloud can remove that operational burden, yet many teams still see the migration itself as risky and tedious.