Why Cluster Rebalancing Matters More Than You Think for Your Apache Kafka Costs

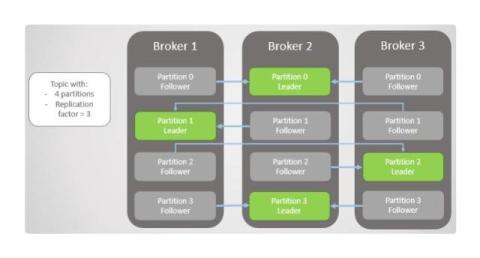

Cluster rebalancing is the redistribution of partitions across Kafka brokers to even out workload and keep performance consistent. Although it is a necessary and frequent part of routine Apache Kafka operations, its true impact on infrastructure stability, resource consumption, and cloud spending is often underestimated.