AI Connection Pooling Best Practices | DreamFactory

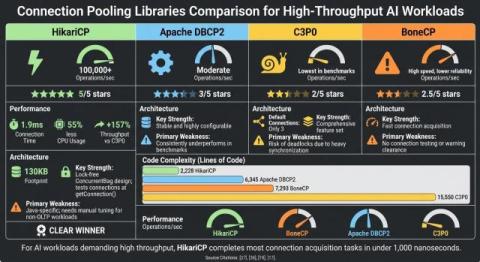

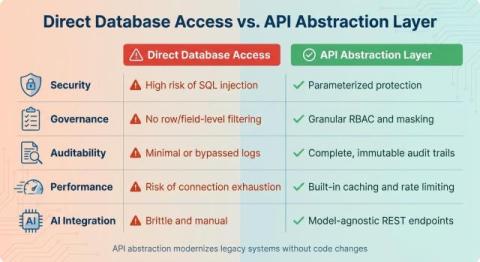

Key takeaways: For AI workloads, connection pooling must handle long connection hold times and heavy traffic. DreamFactory is a secure, self-hosted enterprise data access platform that provides governed API access to any data source, connecting enterprise applications and on-prem LLMs with role-based access and identity passthrough. Paired with a connection pooler such as PgBouncer, it can release connections sooner and improve scalability. Simple adjustments, such as segmenting pools and setting timeouts, can improve efficiency further.
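To make "segmenting pools and setting timeouts" concrete, here is a minimal sketch using only the Python standard library. It is not DreamFactory's or PgBouncer's implementation: the `SegmentedPool` class, the segment names `inference` and `batch`, the pool sizes, and the timeout value are all hypothetical and chosen for illustration. Each segment gets its own bounded pool, so a long-held connection in one segment cannot starve the other, and a caller that waits too long for a connection gets an error instead of blocking indefinitely.

```python
import queue
from contextlib import contextmanager


class SegmentedPool:
    """Toy pool (hypothetical): one bounded queue of connections per segment."""

    def __init__(self, factory, sizes, acquire_timeout=2.0):
        # factory: zero-argument callable that creates one connection object.
        # sizes: mapping of segment name -> number of connections to pre-create.
        self._timeout = acquire_timeout
        self._pools = {}
        for segment, size in sizes.items():
            q = queue.Queue(maxsize=size)
            for _ in range(size):
                q.put(factory())
            self._pools[segment] = q

    @contextmanager
    def connection(self, segment):
        pool = self._pools[segment]
        try:
            # Wait at most acquire_timeout seconds for a free connection.
            conn = pool.get(timeout=self._timeout)
        except queue.Empty:
            raise TimeoutError(
                f"no free connection in segment {segment!r}"
            ) from None
        try:
            yield conn
        finally:
            # Always hand the connection back, even if the caller raised.
            pool.put(conn)


# Stand-in connections: plain objects instead of real database handles.
pool = SegmentedPool(
    factory=object,
    sizes={"inference": 2, "batch": 1},  # assumed segment names and sizes
    acquire_timeout=0.05,                # assumed value, in seconds
)

with pool.connection("batch"):
    # The single batch connection is now held, as in a long-running job.
    with pool.connection("inference"):
        pass  # inference traffic is unaffected by the busy batch segment
    try:
        with pool.connection("batch"):
            pass
    except TimeoutError as exc:
        print(exc)  # the second batch caller fails fast instead of hanging
```

In a real deployment the factory would open actual database connections, and the sizes and timeout would be tuned against the database's own connection limit.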