MCP in Production: Governing Agentic API Consumption | DeveloperWeek

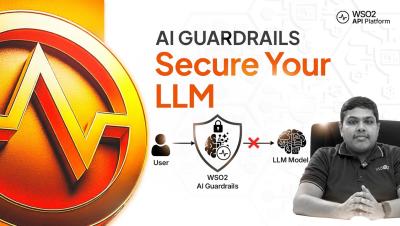

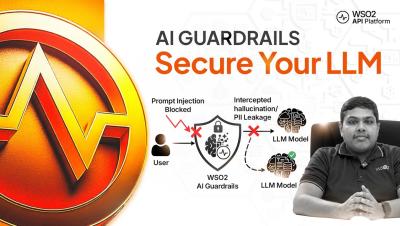

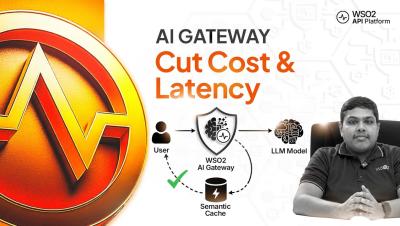

As AI agents begin interacting with APIs, traditional API governance models need to evolve. In this DeveloperWeek session, Derric Gilling (WSO2) explains how organizations can manage and secure agent-driven API consumption using the Model Context Protocol (MCP). Unlike applications driven by human users, AI agents can generate large volumes of API calls from a single prompt. Without proper controls, this can lead to unexpected costs, security risks, and limited visibility into how APIs are being used.