
The Role of AI and Machine Learning in Product Analytics

From descriptive to predictive: Your first machine learning model

An overview of common low-hanging fruit to help you get started with machine learning.

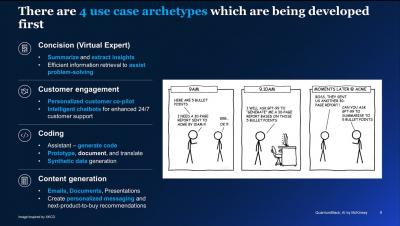

Key Takeaways on Generative AI for CEOs: Revolutionizing Business with Speed and Trust

Generative AI stands out from other technological breakthroughs for its speed. Within months of entering the limelight, it has already reached scale and is being aimed at substantial return on investment. However, it is imperative to harness this technology effectively, ensuring that it can be deployed at scale and yields outcomes that earn the trust of your business stakeholders.

What is Enterprise Generative AI and Why Should You Care?

It seems we are witnessing a new leap in technological advancement, with Generative AI taking the world by storm earlier this year. Generative AI (GenAI) has emerged as a powerful tool that combines artificial intelligence with creativity, enabling machines to generate original content, such as images, music, and text, from structured and unstructured data in a way that imitates human creativity.

Snowflake Expands Programmability to Bolster Support for AI/ML and Streaming Pipeline Development

At Snowflake, we’re helping data scientists, data engineers, and application developers build faster and more efficiently in the Data Cloud. That’s why at our annual user conference, Snowflake Summit 2023, we unveiled new features that further extend data programmability in Snowflake, letting them work in their language of choice without having to compromise on governance.