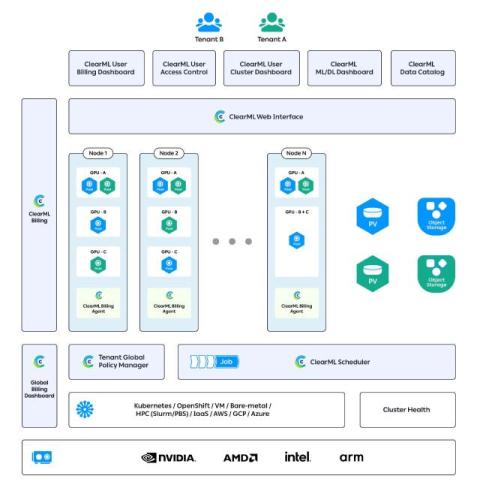

Streamlining AI Workloads: How ClearML's Infrastructure Control Plane Automates Orchestration, Scheduling, and Resource Optimization

By Noam Harel, Co-founder and CMO, ClearML

AI is certainly transforming industries, but delivering it at scale is a harder task.

The shift to enterprise-grade AI isn’t just about building better models. It’s about managing the growing sprawl of infrastructure, tools, and people involved in every phase of AI production. From building and training to production deployment, teams are bogged down by fragmented workflows, manual provisioning, inconsistent environments, and underutilized compute.