Accelerating and Scaling AI Deployments Across Hybrid Environments - MLOps Live #40 with Safaricom

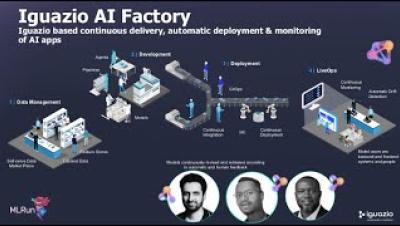

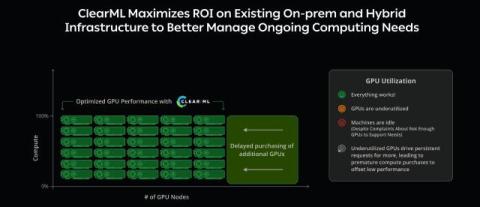

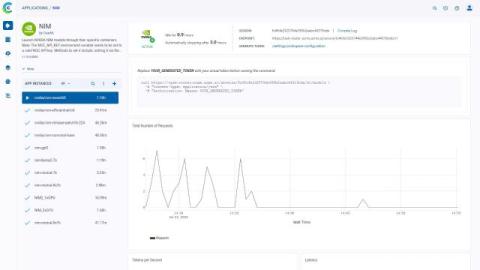

Safaricom, one of the most AI-mature mobile operators, delivers predictive modeling and hyper-personalized financial services to millions of users. But operational challenges were slowing down deployments, limiting the team’s ability to scale and act in real time. In this session, Safaricom’s AI team shares how they overcame those bottlenecks, scaled faster, and unlocked real-time impact at massive scale with Iguazio technology. Watch now to learn more.