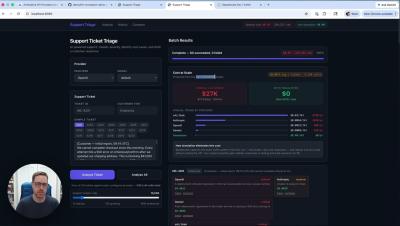

I Let AI Audit My LinkedIn Strategy (Here's What Happened)

If you’re posting consistently on LinkedIn, the hard part isn’t getting data; it’s analyzing it. Most people review posts one by one, compare impressions manually, and try to “spot patterns” by eye. That’s slow, and it keeps your strategy reactive. In this walkthrough, Kamil Rextin, founder of 42 Agency, uses the Databox MCP with Claude to run a fast, AI-driven analysis of his LinkedIn performance: the kind of first-pass review you’d normally assign to a junior analyst.