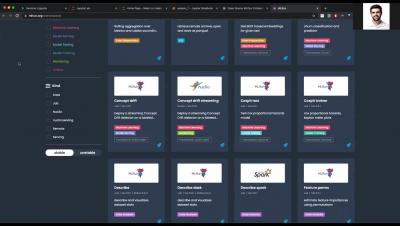


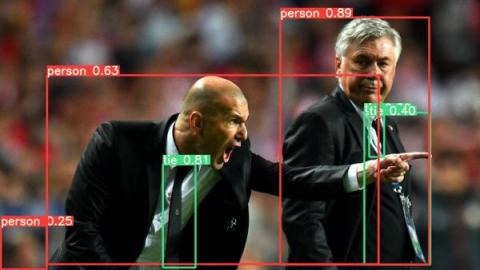

YOLOv5 Now Integrates Seamlessly with ClearML

The popular object detection model and framework made by Ultralytics now has ClearML built in. It’s now easier than ever to train a YOLOv5 model and have the ClearML experiment manager track it automatically. But that’s not all: you can also specify a ClearML dataset version ID as the data input, and that dataset will automatically be used to train your model. Follow along in this blog post, where we talk about the possibilities and guide you through the process of implementing them.

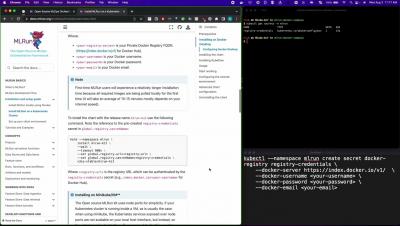

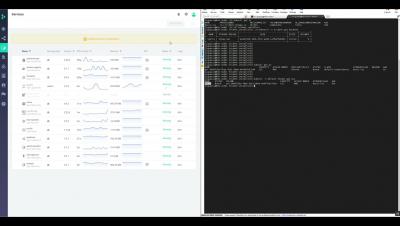

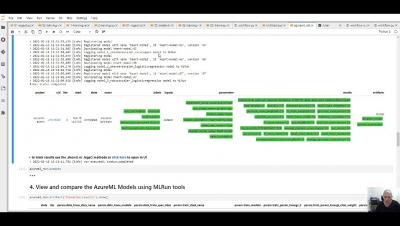

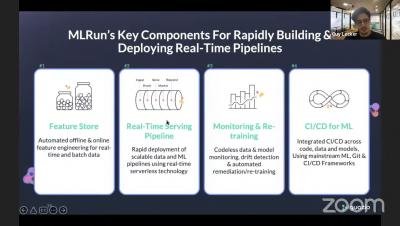

How to Deploy an MLRun Project in a CI/CD Process with Jenkins Pipeline

In this article, we will walk you through the steps to run a Jenkins server in Docker and deploy an MLRun project using a Jenkins pipeline. Before we dive into the actual setup, let’s cover some brief background on MLRun and Jenkins.

Modernizing MLOps: Why I Chose Continual

It says something about a company and its people when they drop the process of formulaic job interviews and just let you pitch ideas for the job you want. That’s what happened when I applied to Continual as a Technical Marketing Manager. Five weeks in, I’m pleased to say I’m working on those same ideas, which I’ll detail in a couple of minutes.