
Accelerate Amazon EMR for Spark & More

BigQuery workload management best practices

In the most recent season of BigQuery Spotlight, we discussed key concepts like the BigQuery Resource hierarchy, query processing, and the reservation model. This blog focuses on extending those concepts to operationalize workload management for various scenarios.

Meet the ThoughtSpot Modern Analytics Cloud

Keys to Ensure that Data isn't Slowing Down your Innovation Efforts

In digital transformation projects, it’s easy to imagine the benefits of cloud, hybrid, artificial intelligence (AI), and machine learning (ML) models. The hard part is to turn aspiration into reality by creating an organization that is truly data-driven.

United Safety & Survivability Corporation Establishes a Unified Analytics Framework with Qlik Cloud

The manufacturing industry, like any other industry, is not immune to data challenges. Sourcing data, wrangling it and ensuring it’s being used in a governed, standardized way are not uncommon problems. Particularly in manufacturing, issues surface with inventory management, within the supply chain and with logistics.

Accelerate business value through data

How does data enable Business Value Acceleration – and where’s the big opportunity for CXOs? Click here to learn more: https://www.qlik.com/us/executive-insights

How to monetize BigQuery datasets using Apigee

Automating Data Pipelines in CDP with CDE Managed Airflow Service

When we announced the GA of Cloudera Data Engineering back in September of last year, a key vision we had was to simplify the automation of data transformation pipelines at scale. By leveraging Spark on Kubernetes as the foundation, along with a first-class job management API, many of our customers have been able to quickly deploy, monitor, and manage the lifecycle of their Spark jobs with ease. In addition, we allowed users to automate their jobs on a time-based schedule.

Data Pipeline HealthCheck

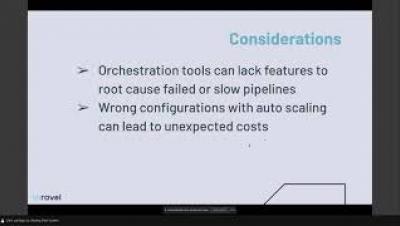

At Airflow Summit 2021, Unravel’s co-founder and CTO, Shivnath Babu, led a talk titled Data Pipeline HealthCheck for Correctness, Performance & Cost Efficiency. This story, along with the slides and videos included in it, comes from the presentation.