Build Your Own Internal RAG Agent with Kong AI Gateway

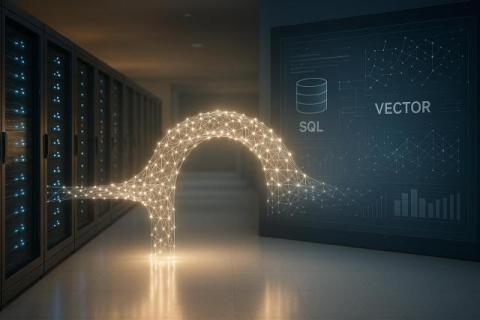

RAG (Retrieval-Augmented Generation) is not a new concept in AI, and unsurprisingly, when we talk to companies, each one seems to have its own interpretation of how to implement it. So, let’s start with a refresher. RAG is a technique that retrieves relevant data from an external knowledge source and injects it directly into a prompt before the prompt is sent to a Large Language Model (LLM). “But wait, my model is already fine-tuned on my domain-specific data.