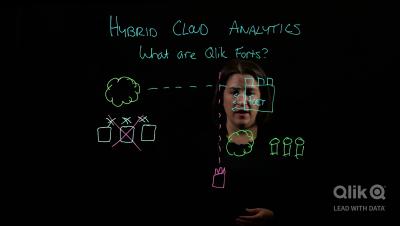

Introducing Qlik Forts - Brief Overview and Demo

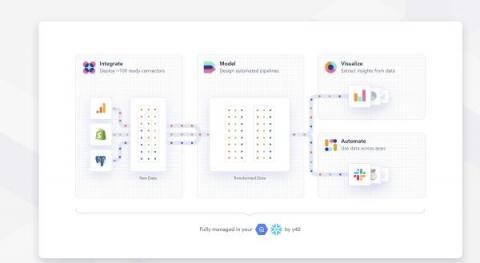

Y42 raises $31M to build the first scalable data platform that anyone can run

The Business Case for Sustainable Supply Chains Is in the Data

Businesses and consumers are getting better at recognizing the direct carbon cost of the products they use. As such, we’re seeing an increased use of sustainable materials in consumer goods and global products. That is a big positive trend, but there’s a bigger picture to explore. Value chains make up 90% of an organization’s environmental impact, according to the Carbon Trust.

New Features in Cloudera Streams Messaging Public Cloud 7.2.12

With the launch of Cloudera Public Cloud 7.2.12, Streams Messaging for Data Hub deployments have gained some interesting new features! Starting with this release, Streams Messaging templates support scaling with automatic rebalancing, allowing you to grow or shrink your Apache Kafka cluster based on demand.

Cloudera Machine Learning Workspace Provisioning Pre-Flight Checks

There are many good uses of data. With data, we can monitor the business as a whole or specific business units. We can segment customers by vertical or by whether they run in the public or private cloud. We can understand customers better and see their usage patterns and main consumption drivers. We can find customer pain points, see where they get stuck, and understand how different bugs affect them.

How to Bring Breakthrough Performance and Productivity To AI/ML Projects

By Jean-Baptiste Thomas, Pure Storage & Yaron Haviv, Co-Founder & CTO of Iguazio

You trained and built models using interactive tools over data samples, and are now building an application around them to bring tangible value to the business. However, a year later, you find that you have spent an endless amount of time and resources, but your application is still not fully operational, or isn’t performing as well as it did in the lab. Don’t worry, you are not alone.

Why Your Company Needs API Management

Google Analytics Automated Reports: Everything You Need to Know

How to Automate Apache NiFi Data Flow Deployments in the Public Cloud

With the latest release of Cloudera DataFlow for the Public Cloud (CDF-PC), we added new CLI capabilities that let you automate data flow deployments, making it easier than ever to incorporate Apache NiFi flow deployments into your CI/CD pipelines. This blog post walks you through the data flow development lifecycle and shows how you can use APIs in CDP Public Cloud to fully automate your flow deployments.