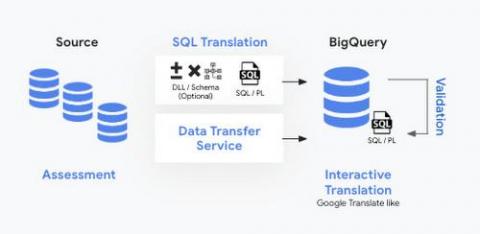

Building an automated data pipeline from BigQuery to Earth Engine with Cloud Functions

Over the years, vast amounts of satellite data have been collected, and ever more granular data are being collected every day. Until recently, these data were largely an untapped asset in the commercial space. This was mainly because neither the tools required for large-scale analysis of this type of data nor the satellite imagery itself were readily available. Thanks to Earth Engine, a planetary-scale platform for Earth science data and analysis, that is no longer the case.