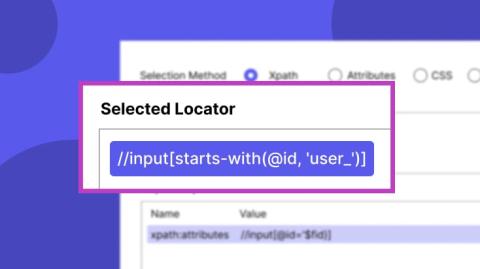

XPath vs CSS Selectors in Katalon: Write Stable Locators

Robust test automation in Katalon Studio starts with stable test objects. Flaky tests most often trace back to one root cause: brittle locators that break the moment the UI changes. The most reliable approach is to target unique, static attributes such as id or a custom data-qa attribute. When those aren't available, CSS selectors and XPath are your tools. This post covers how to write each type of selector, when to choose one over the other, and how to handle dynamic attributes using contains() and starts-with().

At a glance
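As a quick illustration of the dynamic-attribute idea, here is a minimal sketch in plain Java that evaluates a few XPath expressions with the JDK's built-in `javax.xml.xpath` engine. The markup, the ids, and the class name `DynamicLocatorSketch` are made up for this example. It does not call any Katalon API; the point is only to show which expressions keep matching when part of an attribute value is generated.

```java
import java.io.StringReader;
import javax.xml.xpath.XPath;
import javax.xml.xpath.XPathConstants;
import javax.xml.xpath.XPathExpressionException;
import javax.xml.xpath.XPathFactory;
import org.w3c.dom.NodeList;
import org.xml.sax.InputSource;

class DynamicLocatorSketch {
    // Hypothetical page fragment: the numeric id suffixes stand in for
    // values that a framework regenerates on every build or page load.
    static final String PAGE =
        "<form>"
        + "<input id='username_48213' data-qa='login-username'/>"
        + "<input id='password_48214' data-qa='login-password'/>"
        + "<button id='btn_submit_77' class='btn primary'>Sign in</button>"
        + "</form>";

    // Returns how many nodes in PAGE match the given XPath expression.
    static int countMatches(String xpathExpr) {
        try {
            XPath xpath = XPathFactory.newInstance().newXPath();
            NodeList nodes = (NodeList) xpath.evaluate(
                xpathExpr, new InputSource(new StringReader(PAGE)), XPathConstants.NODESET);
            return nodes.getLength();
        } catch (XPathExpressionException e) {
            throw new IllegalStateException("Invalid XPath: " + xpathExpr, e);
        }
    }

    public static void main(String[] args) {
        String[] locators = {
            // Static custom attribute: the preferred choice when it exists.
            // CSS equivalent: input[data-qa='login-username']
            "//input[@data-qa='login-username']",
            // Stable prefix, generated suffix. CSS equivalent: input[id^='username_']
            "//input[starts-with(@id, 'username_')]",
            // Stable fragment in the middle. CSS equivalent: button[id*='submit']
            "//button[contains(@id, 'submit')]",
            // Brittle: exact match on an id recorded from an earlier build.
            "//input[@id='username_10001']"
        };
        for (String locator : locators) {
            System.out.println(countMatches(locator) + " match(es): " + locator);
        }
    }
}
```

The first three expressions each find exactly one element even though the id suffixes are arbitrary, while the exact-match locator finds nothing once the generated id changes. The same selector strings could be pasted into a Katalon test object, though behavior in a real browser DOM (HTML rather than XML) should be verified there.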